Abstract

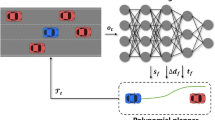

The uncertain environment of multi-lane highways, e.g., the stochastic lane-change maneuvers of surrounding vehicles, is a major challenge for safe automated highway driving. To improve driving safety, a heuristic reinforcement learning decision-making framework with integrated risk assessment is proposed. First, the framework includes a long short-term memory (LSTM) model to predict the trajectories of surrounding vehicles and a future integrated risk assessment model to estimate the possible driving risk. Second, a heuristic decaying state entropy (HDSE) deep reinforcement learning algorithm is introduced to address the exploration-exploitation dilemma of reinforcement learning. Finally, the framework includes a rule-based vehicle decision model for interaction decisions with surrounding vehicles. The proposed framework is validated in both low-density and high-density traffic scenarios. The results show that both traffic efficiency and vehicle safety are improved compared with the common dueling double deep Q-network (DQN) method and the rule-based method.
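The abstract does not spell out how driving risk is computed. As a generic illustration only, the sketch below shows time-to-collision (TTC), one common risk indicator for a leading vehicle in the same lane. The function names, the linear risk mapping, and the 4 s threshold are assumptions made for this example and are not taken from the paper.

```python
import math


def time_to_collision(gap_m: float, ego_speed_mps: float, lead_speed_mps: float) -> float:
    """Return the TTC in seconds to a leading vehicle in the same lane.

    Returns 0.0 if the vehicles already overlap and math.inf if the ego
    vehicle is not closing in on the leader.
    """
    if gap_m <= 0.0:
        return 0.0
    closing_speed = ego_speed_mps - lead_speed_mps
    if closing_speed <= 0.0:
        return math.inf
    return gap_m / closing_speed


def ttc_risk(ttc_s: float, threshold_s: float = 4.0) -> float:
    """Map a TTC value to a risk score in [0, 1].

    The linear mapping and the 4 s threshold are illustrative assumptions:
    risk is 0 at or above the threshold and 1 when TTC reaches 0.
    """
    if math.isinf(ttc_s):
        return 0.0
    return max(0.0, min(1.0, 1.0 - ttc_s / threshold_s))


if __name__ == "__main__":
    ttc = time_to_collision(gap_m=30.0, ego_speed_mps=30.0, lead_speed_mps=20.0)
    print(f"TTC = {ttc:.1f} s, risk = {ttc_risk(ttc):.2f}")
```

A predicted trajectory (for instance from an LSTM model) could supply the future gap and speeds, so that the same indicator is evaluated over a prediction horizon rather than only at the current time step.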

Abbreviations

- DQN: Deep Q-network
- HDSE: Heuristic decaying state entropy
- IDM: Intelligent driver model
- LSTM: Long short-term memory
- MAE: Mean absolute error
- MOBIL: Minimizes the overall braking induced by lane changes
- MSE: Mean square error
- NGSIM: Next generation simulation
- POMDP: Partially observable Markov decision process
- TTC: Time-to-collision

Acknowledgements

The authors gratefully acknowledge the financial support of the National Engineering Laboratory of High Mobility Anti-Riot Vehicle Technology under Grant B20210017, the National Natural Science Foundation of China under Grant 11672127, the Fundamental Research Funds for the Central Universities under Grant NP2022408, the Postgraduate Research & Practice Innovation Program of Jiangsu Province under Grant KYCX21_0188, and the China Scholarship Council under Grant 202106830118.

Ethics declarations

Conflict of interest

The authors declared no potential conflict of interest with respect to the research, authorship, and/or publication of this article.

Additional information

Academic Editor: Weichao Zhuang

About this article

Cite this article

Deng, H., Zhao, Y., Wang, Q. et al. Deep Reinforcement Learning Based Decision-Making Strategy of Autonomous Vehicle in Highway Uncertain Driving Environments. Automot. Innov. 6, 438–452 (2023). https://doi.org/10.1007/s42154-023-00231-6

DOI: https://doi.org/10.1007/s42154-023-00231-6