Abstract

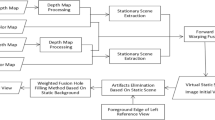

Free-viewpoint video lets the user view objects from any virtual perspective, creating an immersive visual experience and enhancing the interactivity and freedom of multimedia performances. However, many free-viewpoint video synthesis methods struggle to run in real time with high precision, particularly for sports fields with large areas and numerous moving objects. To address these issues, we propose a free-viewpoint video synthesis method based on distance field acceleration. The central idea is to fuse multi-view distance field information and use it to adapt the search step size. Adaptive step size search is used in two ways: for fast estimation of multi-object three-dimensional surfaces, and for synthetic view rendering based on global occlusion judgement. We implemented the method with parallel computing for interactive display, using the CUDA and OpenGL frameworks, and evaluated it on real-world and simulated experimental datasets. The results show that the proposed method can render free-viewpoint videos with multiple objects on large sports fields at 25 fps. Furthermore, the visual quality of our synthesized novel-viewpoint images exceeds that of state-of-the-art neural-rendering-based methods.
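To illustrate why a distance field permits an adaptive search step, the following is a minimal sketch of generic distance-guided ray marching (often called sphere tracing). It is not the authors' implementation: the paper fuses multi-view distance field information, whereas this sketch assumes an analytic distance to a few spheres standing in for objects on a field. The names `scene_distance` and `march`, the scene layout, and all parameter values are hypothetical.

```python
import math

def scene_distance(p, spheres):
    """Distance from point p to the nearest sphere surface.

    Assumption: objects are modelled as spheres (centre, radius); this stands
    in for a fused multi-view distance field, which is not reproduced here.
    """
    return min(math.dist(p, centre) - radius for centre, radius in spheres)

def march(origin, direction, spheres, max_dist=100.0, eps=1e-3, fixed_step=None):
    """March along a ray and return (hit_distance or None, steps_taken).

    With fixed_step=None the step equals the current distance value, so the
    ray takes large steps in empty space and small steps near a surface.
    With a numeric fixed_step the ray advances uniformly, for comparison.
    """
    t, steps = 0.0, 0
    while t < max_dist:
        p = tuple(o + t * d for o, d in zip(origin, direction))
        d = scene_distance(p, spheres)
        steps += 1
        if d < eps:
            return t, steps  # close enough to a surface: report a hit
        t += d if fixed_step is None else fixed_step
    return None, steps  # left the search range without hitting anything

# Hypothetical scene: one object of radius 1, 50 units down the z axis.
spheres = [((0.0, 0.0, 50.0), 1.0)]
origin, forward = (0.0, 0.0, 0.0), (0.0, 0.0, 1.0)

t_adaptive, n_adaptive = march(origin, forward, spheres)
t_fixed, n_fixed = march(origin, forward, spheres, fixed_step=0.01)
print(n_adaptive, n_fixed)
```

In a mostly empty scene the adaptive variant reaches the surface in far fewer distance queries than the uniform one, which is the intuition behind using the distance field to set the step size; per-ray independence is also what makes this kind of search a natural fit for GPU parallelism.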

Acknowledgements

This work was supported by the National Natural Science Foundation of China (Nos. 62172315, 62073262, and 61672429), the Fundamental Research Funds for the Central Universities, the Innovation Fund of Xidian University (No. 20109205456), the Key Research and Development Program of Shaanxi (No. S2021-YF-ZDCXL-ZDLGY-0127), and HUAWEI.

Author information

Contributions

Yanran Dai made a significant contribution to the design and implementation of the methodology, the analysis of the data, and the writing of the manuscript. Jing Li contributed significantly to the conception of the study and reviewed and revised the first draft. Yuqi Jiang, Haidong Qin, and Haozhe Pan set up the real-world and simulation experimental environment and collated the experimental data. Bang Liang and Shikuan Hong designed and validated the experiments. Tao Yang helped with the analysis and gave constructive comments.

Ethics declarations

The authors have no competing interests to declare that are relevant to the content of this article.

Additional information

Yanran Dai received her B.S. degree from the School of Communication Engineering, South-Central University for Nationalities, Wuhan, China, in 2017, and her M.S. degree from the School of Communication Engineering, Xidian University, Xi’an, China, in 2020. She is currently pursuing a Ph.D. degree in the School of Communication Engineering, Xidian University. Her research interests include camera array computational imaging and light field analysis.

Jing Li is a professor and leader of the Intelligent Signal Processing and Pattern Recognition Laboratory in the School of Telecommunications Engineering, Xidian University. She received her Ph.D. degree in control theory and engineering from Northwestern Polytechnical University, Xi’an, China, in 2008. She visited the University of Delaware, USA, from 2013 to 2014, was a research assistant in the Department of Computing, Hong Kong Polytechnic University in 2008, and a visiting scholar at the National Laboratory of Pattern Recognition, Beijing from 2004 to 2005. Her research interests include free-viewpoint video, image synthesis, and video content analysis and understanding.

Yuqi Jiang received his B.S. degree from the School of Communication Engineering, Hangzhou Dianzi University, China, in 2019. He is currently pursuing a Ph.D. degree with the School of Telecommunications Engineering, Xidian University. His research interests include array computational imaging and three-dimensional reconstruction.

Haidong Qin received his B.S. degree from Northwestern Polytechnical University in 2019. He is working towards a Ph.D. degree in the School of Computer Science, Northwestern Polytechnical University. His research interests include free-viewpoint video.

Bang Liang received his B.S. degree from the School of Information and Communication, Guilin University of Electronic Technology, China, in 2018 and his M.S. degree from the School of Computer Science, Northwestern Polytechnical University in 2021. His research interests include three-dimensional reconstruction and free-viewpoint synthesis.

Shikuan Hong received his B.S. degree in electronic information engineering from Hebei University of Engineering, China, in 2019. He is currently pursuing an M.S. degree in the School of Communication Engineering, Xidian University. His research interests include multiple camera arrays and natural image registration.

Haozhe Pan received his B.E. degree from the School of Communication Engineering, Wuhan University of Technology, China, in 2020. He is currently pursuing an M.S. degree in the School of Communication Engineering, Xidian University. His research interests concentrate on three-dimensional reconstruction.

Tao Yang is a professor in the School of Computer Science, Northwestern Polytechnical University, where he received his Ph.D. degree in control theory and engineering in 2008. He was a visiting scholar at the University of Delaware, USA, from 2013 to 2014, a postdoctoral fellow at Shaanxi Provincial Key Laboratory of Speech and Image Information Processing, Northwestern Polytechnical University from 2008 to 2010, a research intern at FX Palo Alto Laboratory, CA, USA from 2006 to 2007, and a visiting scholar of the Intelligent Video Surveillance Group, National Laboratory of Pattern Recognition, Beijing from 2004 to 2005. His research interests include camera array computational imaging and 3D vision.

Electronic supplementary material

Supplementary material, approximately 64.7 MB.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made.

The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder.

To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

Other papers from this open access journal are available free of charge from http://www.springer.com/journal/41095. To submit a manuscript, please go to https://www.editorialmanager.com/cvmj.

About this article

Cite this article

Dai, Y., Li, J., Jiang, Y. et al. Real-time distance field acceleration based free-viewpoint video synthesis for large sports fields. Comp. Visual Media 10, 331–353 (2024). https://doi.org/10.1007/s41095-022-0323-3

DOI: https://doi.org/10.1007/s41095-022-0323-3