Abstract

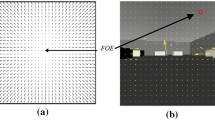

This paper presents vision-based navigation structures for a wheeled mobile robot using optical flow (OF) techniques. Two algorithms of the differential approach, Horn-Schunck (HS) and Lucas-Kanade (LK), are examined for visual motion estimation in unknown static and dynamic indoor environments. Both are used to extract information about the robot's surroundings from a color camera mounted on the robot platform. Obstacles and objects are detected through video acquisition and image processing steps for three tasks: single-robot navigation with static obstacle avoidance, single-robot navigation with dynamic obstacle avoidance, and multi-robot navigation with a static obstacle. The proposed control structures combine motion estimation with decision mechanisms that use the variables computed by the optical flow algorithms to select the steering actions that guide the robot autonomously through its workspace. The control structures are tested in 2D and 3D environments using the Virtual Reality Modeling Language (VRML) Toolbox of Matlab. The simulation results are discussed and compared to demonstrate that these vision-based navigation systems allow the mobile robots to navigate autonomously without colliding with obstacles.
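To make the pipeline described in the abstract more concrete, the following is a minimal Python/NumPy sketch, not the authors' Matlab implementation. It estimates a single Lucas-Kanade flow vector for an image patch by least squares on the brightness-constancy constraint, then applies a simple left/right flow-balance rule to choose a steering action. The function names `lucas_kanade_patch` and `steering_decision`, the `threshold` parameter, and the balance rule itself are illustrative assumptions; the abstract does not specify the decision mechanism actually used.

```python
import numpy as np


def lucas_kanade_patch(frame0, frame1):
    """Estimate one (u, v) flow vector for a whole patch (hypothetical helper).

    Solves Ix*u + Iy*v + It = 0 over all pixels in the least-squares sense,
    which is the core step of the Lucas-Kanade method for a single window.
    """
    f0 = np.asarray(frame0, dtype=float)
    f1 = np.asarray(frame1, dtype=float)
    # np.gradient returns derivatives along rows (y) first, then columns (x).
    Iy, Ix = np.gradient((f0 + f1) / 2.0)
    It = f1 - f0  # temporal derivative between the two frames
    A = np.stack([Ix.ravel(), Iy.ravel()], axis=1)
    b = -It.ravel()
    (u, v), *_ = np.linalg.lstsq(A, b, rcond=None)
    return float(u), float(v)


def steering_decision(flow_mag, threshold=0.1):
    """Assumed balance rule: steer away from the image half with more flow."""
    flow_mag = np.asarray(flow_mag, dtype=float)
    half = flow_mag.shape[1] // 2
    left = flow_mag[:, :half].mean()
    right = flow_mag[:, half:].mean()
    if abs(left - right) < threshold:
        return "forward"
    # Larger flow on one side suggests a nearer obstacle on that side.
    return "turn_right" if left > right else "turn_left"


if __name__ == "__main__":
    # Synthetic smooth pattern shifted by half a pixel along x.
    y, x = np.mgrid[0:64, 0:64].astype(float)
    k = 2.0 * np.pi / 32.0
    frame0 = np.sin(k * x) + np.cos(k * y)
    frame1 = np.sin(k * (x - 0.5)) + np.cos(k * y)
    print(lucas_kanade_patch(frame0, frame1))
```

In a full system the patch estimator would be applied per window (or replaced by a dense Horn-Schunck solver), and the resulting flow magnitudes would feed the decision step; the single-patch version is kept here only for brevity.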

Acknowledgements

The authors gratefully acknowledge the support of their colleagues at the LAADI laboratory of Djelfa.

Funding

Not applicable.

Ethics declarations

Conflict of interests

The authors declare no conflict of interest related to this work.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Elasri, A., Cherroun, L. & Nadour, M. Robotic Visual-Based Navigation Structures Using Lucas-Kanade and Horn-Schunck Algorithms of Optical Flow. Iran J Sci Technol Trans Electr Eng (2024). https://doi.org/10.1007/s40998-024-00722-0

DOI: https://doi.org/10.1007/s40998-024-00722-0