Abstract

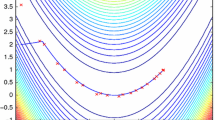

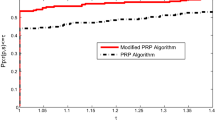

In an effort to modify the classical Fletcher–Reeves method, Jiang and Jian suggested an efficient nonlinear conjugate gradient algorithm that possesses the sufficient descent property when the line search fulfills the strong Wolfe conditions. Here, we develop a scaled modified version of the method that satisfies the sufficient descent condition independently of the line search. Also, a nonmonotone backtracking Armijo-type line search is proposed, under which the global convergence of the method is established without any convexity assumption. The performance of the method is evaluated through computational experiments on a set of CUTEr test functions and, as a case study, on image reconstruction. The results demonstrate the numerical efficiency of the method.
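The abstract does not give the update formulas, so the Python sketch below is only illustrative of the two ingredients it names. The search direction is a Fletcher–Reeves direction rescaled so that g_k^T d_k = -||g_k||^2 holds for any step size; this scaling is the one used in Zhang, Zhou and Li's modified FR method and is an assumed stand-in for the authors' own scaled Jiang–Jian formula. The step comes from a Grippo–Lampariello–Lucidi-type nonmonotone backtracking Armijo rule, again an assumed stand-in for the proposed line search. The function names `scaled_fr_direction`, `nonmonotone_armijo` and `scaled_fr_cg`, all parameter values, and the periodic steepest-descent restart are hypothetical choices made for this example.

```python
# Illustrative sketch only, not the authors' exact method: the direction uses a
# Zhang-Zhou-Li-style scaling of Fletcher-Reeves (assumed stand-in) and the step
# comes from a Grippo-Lampariello-Lucidi-type nonmonotone Armijo rule (assumed).
import math
from collections import deque
from typing import Callable, Deque, List, Tuple

Vector = List[float]


def dot(u: Vector, v: Vector) -> float:
    return sum(a * b for a, b in zip(u, v))


def scaled_fr_direction(g: Vector, d_prev: Vector, gg_prev: float) -> Vector:
    """Return d = -theta*g + beta*d_prev, where beta is the FR parameter and
    theta is chosen so that g^T d = -||g||^2 for any previous step size."""
    gg = dot(g, g)
    beta = gg / gg_prev                       # Fletcher-Reeves: ||g_k||^2 / ||g_{k-1}||^2
    theta = 1.0 + beta * dot(g, d_prev) / gg  # cancels the beta * g^T d_prev term
    return [-theta * gi + beta * di for gi, di in zip(g, d_prev)]


def nonmonotone_armijo(
    f: Callable[[Vector], float],
    x: Vector,
    d: Vector,
    g_dot_d: float,
    f_hist: Deque[float],
    delta: float = 1e-4,
    rho: float = 0.5,
    s: float = 1.0,
    max_backtracks: int = 60,
) -> Tuple[float, Vector, float]:
    """Backtrack alpha = s, s*rho, ... until
    f(x + alpha*d) <= max(recent f values) + delta*alpha*g^T d."""
    f_ref = max(f_hist)  # nonmonotone reference: worst of the last few values
    alpha = s
    for _ in range(max_backtracks):
        x_new = [xi + alpha * di for xi, di in zip(x, d)]
        f_new = f(x_new)
        if f_new <= f_ref + delta * alpha * g_dot_d:
            break
        alpha *= rho
    # If no trial passes within max_backtracks, the last (tiny) trial is returned.
    return alpha, x_new, f_new


def scaled_fr_cg(
    f: Callable[[Vector], float],
    grad: Callable[[Vector], Vector],
    x0: Vector,
    memory: int = 5,
    tol: float = 1e-6,
    max_iter: int = 20000,
) -> Tuple[Vector, float, int]:
    """Return (x, f(x), iterations). memory=1 gives the monotone Armijo rule."""
    x = list(x0)
    fx = f(x)
    g = grad(x)
    f_hist: Deque[float] = deque([fx], maxlen=memory)
    d: Vector = []
    gg_prev = 0.0
    restart_every = max(1, len(x))  # assumed safeguard: steepest-descent restart every n steps
    for k in range(max_iter):
        gg = dot(g, g)
        if math.sqrt(gg) <= tol:
            return x, fx, k
        if k % restart_every == 0:
            d = [-gi for gi in g]
        else:
            d = scaled_fr_direction(g, d, gg_prev)
        _, x, fx = nonmonotone_armijo(f, x, d, dot(g, d), f_hist)
        f_hist.append(fx)
        gg_prev = gg
        g = grad(x)
    return x, fx, max_iter


if __name__ == "__main__":
    # Toy strongly convex quadratic f(x) = sum_i (i + 1) * x_i^2 (illustrative only).
    quad = lambda x: sum((i + 1) * xi * xi for i, xi in enumerate(x))
    quad_grad = lambda x: [2.0 * (i + 1) * xi for i, xi in enumerate(x)]
    x_star, f_star, iters = scaled_fr_cg(quad, quad_grad, [1.0] * 5)
    print(iters, f_star)
```

Because every direction satisfies g_k^T d_k = -||g_k||^2 by construction, the backtracking loop always faces a descent direction, whatever step the previous iteration accepted. Setting `memory=1` recovers the ordinary monotone Armijo rule; larger values let the objective rise temporarily as long as it stays below the worst of the recent values.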

References

Abubakar, A.B., Kumam, P., Mohammad, H., Awwal, A.M., Sitthithakerngkiet, K.: A modified Fletcher–Reeves conjugate gradient method for monotone nonlinear equations with some applications. Mathematics 7(8), 745 (2019)

Aminifard, Z., Babaie-Kafaki, S.: An optimal parameter choice for the Dai–Liao family of conjugate gradient methods by avoiding a direction of the maximum magnification by the search direction matrix. 4OR 17, 317–330 (2019)

Andrei, N.: Convex functions. Adv. Model. Optim. 9(2), 257–267 (2007)

Babaie-Kafaki, S., Ghanbari, R.: A hybridization of the Polak-Ribière-Polyak and Fletcher-Reeves conjugate gradient methods. Numer. Algorithms 68(3), 481–495 (2015)

Barzilai, J., Borwein, J.M.: Two-point stepsize gradient methods. IMA J. Numer. Anal. 8(1), 141–148 (1988)

Cao, J., Wu, J.: A conjugate gradient algorithm and its applications in image restoration. Appl. Numer. Math. 152, 243–252 (2020)

Chen, C., Ma, Y., Ren, G.: A convolutional neural network with Fletcher–Reeves algorithm for hyperspectral image classification. Remote Sens. 11(11), 1325 (2019)

Dai, Y.H.: On the nonmonotone line search. J. Optim. Theory Appl. 112(2), 315–330 (2002)

Dai, Y.H., Han, J.Y., Liu, G.H., Sun, D.F., Yin, H.X., Yuan, Y.X.: Convergence properties of nonlinear conjugate gradient methods. SIAM J. Optim. 10(2), 348–358 (1999)

Dai, Zh., Kang, J.: Some new efficient mean-variance portfolio selection models. Int. J. Finance Econ. (2021). https://doi.org/10.1002/ijfe.2400

Dai, Zh., Kang, J., Wen, F.: Predicting stock returns: a risk measurement perspective. Int. Rev. Financial Anal. 74, 101676 (2021)

Dai, Zh., Zhu, H., Kang, J.: New technical indicators and stock returns predictability. Int. Rev. Econ. Finance 71, 127–142 (2021)

Dolan, E.D., Moré, J.J.: Benchmarking optimization software with performance profiles. Math. Program. 91(2), 201–213 (2002)

Esmaeili, H., Shabani, S., Kimiaei, M.: A new generalized shrinkage conjugate gradient method for sparse recovery. Calcolo 56(1), 1–38 (2019)

Fletcher, R., Reeves, C.M.: Function minimization by conjugate gradients. Comput. J. 7(2), 149–154 (1964)

Gilbert, J.C., Nocedal, J.: Global convergence properties of conjugate gradient methods for optimization. SIAM J. Optim. 2(1), 21–42 (1992)

Gould, N.I.M., Orban, D., Toint, Ph.L.: CUTEr: a constrained and unconstrained testing environment, revisited. ACM Trans. Math. Softw. 29(4), 373–394 (2003)

Grippo, L., Lampariello, F., Lucidi, S.: A nonmonotone line search technique for Newton’s method. SIAM J. Numer. Anal. 23(4), 707–716 (1986)

Hager, W.W., Zhang, H.: Algorithm 851: CG_DESCENT, a conjugate gradient method with guaranteed descent. ACM Trans. Math. Softw. 32(1), 113–137 (2006)

Hager, W.W., Zhang, H.: A survey of nonlinear conjugate gradient methods. Pac. J. Optim. 2(1), 35–58 (2006)

Jiang, X., Jian, J.: Improved Fletcher-Reeves and Dai-Yuan conjugate gradient methods with the strong Wolfe line search. J. Comput. Appl. Math. 348, 525–534 (2019)

Keshtegar, B., Hasanipanah, M., Bakhshayeshi, I., Sarafraz, M.E.: A novel nonlinear modeling for the prediction of blast-induced airblast using a modified conjugate FR method. Measurement 131, 35–41 (2019)

Li, X., Zhang, W., Dong, X.: A class of modified FR conjugate gradient method and applications to non-negative matrix factorization. Comput. Math. Appl. 73, 270–276 (2017)

Liu, J.K., Feng, Y.M., Zou, L.M.: A spectral conjugate gradient method for solving large-scale unconstrained optimization. Comput. Math. Appl. 77(3), 731–739 (2019)

Ng, K.W., Rohanin, A.: Modified Fletcher-Reeves and Dai-Yuan conjugate gradient methods for solving optimal control problem of monodomain model. Appl. Math. 73(2), 864–872 (2012)

Nocedal, J., Wright, S.J.: Numerical Optimization. Springer, New York (2006)

Polak, E., Ribière, G.: Note sur la convergence de méthodes de directions conjuguées. Rev. Française Informat. Recherche Opérationnelle 3(16), 35–43 (1969)

Polyak, B.T.: The conjugate gradient method in extreme problems. USSR Comp. Math. Math. Phys. 9(4), 94–112 (1969)

Sun, W., Yuan, Y.X.: Optimization Theory and Methods: Nonlinear Programming. Springer, New York (2006)

Sun, Z., Tian, Y., Wang, J.: A novel projected Fletcher-Reeves conjugate gradient approach for finite-time optimal robust controller of linear constraints optimization problem: Application to bipedal walking robots. Optim. Control Appl. Methods 39(1), 130–159 (2018)

Toint, Ph.L.: An assessment of nonmonotone line search techniques for unconstrained optimization. SIAM J. Sci. Comput. 17(3), 725–739 (1996)

Yu, G., Huang, J., Zhou, Y.: A descent spectral conjugate gradient method for impulse noise removal. Appl. Math. Lett. 23(5), 555–560 (2010)

Yuan, G., Li, T., Hu, W.: A conjugate gradient algorithm and its application in large-scale optimization problems and image restoration. J. Inequal. Appl. 2019(1), 247 (2019)

Yuan, G., Li, T., Hu, W.: A conjugate gradient algorithm for large-scale nonlinear equations and image restoration problems. Appl. Numer. Math. 147, 129–141 (2020)

Yuan, G., Meng, Z., Li, Y.: A modified Hestenes and Stiefel conjugate gradient algorithm for large-scale nonsmooth minimizations and nonlinear equations. J. Optim. Theory Appl. 168(1), 129–152 (2016)

Zhang, H., Hager, W.W.: A nonmonotone line search technique and its application to unconstrained optimization. SIAM J. Optim. 14(4), 1043–1056 (2004)

Zhang, L., Zhou, W., Li, D.H.: Global convergence of a modified Fletcher-Reeves conjugate gradient method with Armijo-type line search. Numer. Math. 104(4), 561–572 (2006)

Zhang, L., Zhou, W., Li, D.H.: A descent modified Polak–Ribière–Polyak conjugate gradient method and its global convergence. IMA J. Numer. Anal. 26(4), 629–640 (2006)

Acknowledgements

This work is based upon research funded by Iran National Science Foundation (INSF) under project No. 4000309. The authors are grateful to Professor Michael Navon for providing the strong Wolfe line search code. They also thank the anonymous reviewers for their valuable comments that helped to improve the quality of this work.

Additional information

Communicated by Anton Abdulbasah Kamil.

About this article

Cite this article

Mirhoseini, N., Babaie-Kafaki, S. & Aminifard, Z. A Nonmonotone Scaled Fletcher–Reeves Conjugate Gradient Method with Application in Image Reconstruction. Bull. Malays. Math. Sci. Soc. 45, 2885–2904 (2022). https://doi.org/10.1007/s40840-022-01303-2

DOI: https://doi.org/10.1007/s40840-022-01303-2

Keywords

- Unconstrained optimization

- Conjugate gradient method

- Nonmonotone line search

- Sufficient descent condition

- Image reconstruction