Abstract

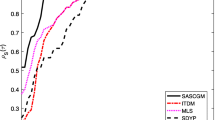

This paper presents two new supermemory gradient algorithms for solving convex-constrained nonlinear monotone equations. Both combine the idea of the supermemory gradient method with the projection method. A key feature of the proposed methods is that, at each iteration, they require no Jacobian information, solve no subproblem, and store no matrix. They are therefore suitable for solving large-scale equations. Under mild conditions, the proposed methods are shown to be globally convergent. Preliminary numerical results show that the proposed methods are efficient and can be applied to solve large-scale nonsmooth equations.
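The abstract describes the general pattern without giving formulas. The Python sketch below illustrates that pattern under stated assumptions and is not the authors' algorithm. It combines a supermemory-style direction (the negative residual plus a weighted sum of the last `m` directions) with the hyperplane-projection step commonly used for monotone equations (as in Solodov and Svaiter's projection approach), followed by projection onto the constraint set C. The weight rule `beta_{k,i} = rho * ||F(x_k)|| / (m * ||d_{k-i}||)`, the backtracking parameters `s`, `r`, `sigma`, the choice C = {x : x >= 0}, the test mapping `F_i(x) = 2 x_i - sin(x_i)`, and all function names (`supermemory_projection`, `project_nonneg`, `F_example`) are hypothetical choices made for illustration only.

```python
import math
from collections import deque


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def norm(u):
    return math.sqrt(dot(u, u))


def project_nonneg(x):
    # Euclidean projection onto C = {x : x >= 0}, an assumed example constraint set.
    return [max(v, 0.0) for v in x]


def supermemory_projection(F, project, x0, m=3, rho=0.5, s=1.0, r=0.5,
                           sigma=1e-4, tol=1e-6, max_iter=1000):
    """Illustrative derivative-free projection method with a supermemory direction.

    Direction: d_k = -F(x_k) + sum_i beta_{k,i} d_{k-i} over the last m directions,
    with the hypothetical weights beta_{k,i} = rho * ||F(x_k)|| / (m * ||d_{k-i}||).
    By Cauchy-Schwarz this gives F(x_k)^T d_k <= -(1 - rho) * ||F(x_k)||^2.
    """
    x = project(list(x0))
    history = deque(maxlen=m)  # only the last m direction vectors are stored
    for k in range(max_iter):
        Fx = F(x)
        nFx = norm(Fx)
        if nFx <= tol:
            return x, k
        # Supermemory direction: negative residual plus weighted past directions.
        d = [-v for v in Fx]
        for d_old in history:
            beta = rho * nFx / (m * norm(d_old))
            d = [a + beta * b for a, b in zip(d, d_old)]
        # Derivative-free backtracking: accept alpha = s * r^j once
        # -F(x + alpha d)^T d >= sigma * alpha * ||d||^2.
        alpha, dd = s, dot(d, d)
        while True:
            z = [a + alpha * b for a, b in zip(x, d)]
            Fz = F(z)
            if -dot(Fz, d) >= sigma * alpha * dd or alpha < 1e-12:
                break
            alpha *= r
        nFz2 = dot(Fz, Fz)
        if nFz2 == 0.0:
            return z, k + 1  # z solves F(z) = 0; membership in C is not rechecked
        # Project x onto the hyperplane {u : F(z)^T (u - z) = 0}, then onto C.
        step = dot(Fz, [a - b for a, b in zip(x, z)]) / nFz2
        x = project([a - step * b for a, b in zip(x, Fz)])
        history.append(d)
    return x, max_iter


# Example: F_i(x) = 2 x_i - sin(x_i) is monotone (derivative >= 1), zero at x = 0 in C.
def F_example(x):
    return [2.0 * v - math.sin(v) for v in x]


x_star, iters = supermemory_projection(F_example, project_nonneg, [1.0] * 1000)
print(iters, norm(F_example(x_star)))
```

The sketch evaluates F but never differentiates it, solves no subproblem, and stores only the last `m` direction vectors rather than any matrix. These are the properties the abstract highlights for large-scale problems.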

Acknowledgments

The authors are very grateful to the anonymous referees and the associate editor for their valuable comments and suggestions that greatly improved this paper.

Additional information

Communicated by Ernesto G. Birgin.

Supported by NNSF of China (No. 11261015) and NSF of Hainan Province (No. 111001).

About this article

Cite this article

Ou, Y., Liu, Y. Supermemory gradient methods for monotone nonlinear equations with convex constraints. Comp. Appl. Math. 36, 259–279 (2017). https://doi.org/10.1007/s40314-015-0228-1

DOI: https://doi.org/10.1007/s40314-015-0228-1