Abstract

Expert elicitation can provide valuable information for decisions informed by health technology assessment (HTA), particularly where the evidence base is less developed at the point of market access. In these circumstances, formal methods for eliciting expert judgements are preferred because they improve the accountability and transparency of the decision-making process, help reduce bias and the use of heuristics, and provide a structure that allows uncertainty to be expressed. Expert elicitation is the process of transforming the subjective and implicit knowledge of experts into quantifiable expressions. The use of expert elicitation in HTA is gaining momentum, and there is particular interest in its application to diagnostics, medical devices and complex interventions such as those in public health or social care. Compared with the gathering of experimental evidence, elicitation constitutes a reasonably low-cost source of evidence. Given its inherently subjective nature, the potential biases in elicited evidence cannot be ignored and, because elicitation is in its infancy in HTA, there is little guidance for the analyst wishing to conduct a formal elicitation exercise. This article attempts to summarise the stages of designing and conducting an expert elicitation, drawing on key literature and examples, most of which are not in HTA. In addition, we critique their applicability to HTA, given its distinguishing features. There are a number of issues that the analyst should be mindful of, in particular the need to appropriately characterise the uncertainty associated with model inputs and the fact that numerous parameters are often required, not all of which can be defined using the same quantities. This increases the need for the elicitation task to be as straightforward as possible for the expert to complete.

Ethics declarations

Funding

No funding was received for the work carried out in the preparation of this manuscript. Laura Bojke was supported in the preparation/submission of this paper by the HEOM Theme of the National Institute for Health Research Collaboration for Leadership in Applied Health Research and Care Yorkshire and Humber (NIHR CLAHRC YH; http://clahrc-yh.nihr.ac.uk/). The views and opinions expressed are those of the authors and not necessarily those of the National Health Service, the National Institute for Health Research, or the Department of Health.

Conflict of interest

Laura Bojke, Bogdan Grigore, Dina Jankovic, Jaime Peters, Marta Soares and Ken Stein have no conflicts of interest to declare.

Author contributions

LB, BG and DJ were primarily responsible for drafting the manuscript. JP, MS and KS contributed towards writing of the manuscript and commented on its various versions.

Appendices

Appendix

See Boxes 1, 2 and 3.

Box 1: Uses of elicitation in cost-effectiveness modelling

-

Generating an appropriate set of comparators.

-

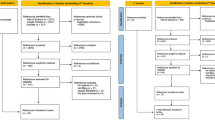

Identifying appropriate patient pathways and relevant events.

-

Describing parameters and their associated uncertainty.

-

Quantifying the extent of bias, or improving generalisability from one context to another.

-

Characterising structural uncertainties either through generating differential weights for scenarios or by eliciting distributions of parameterized uncertainties.

-

Validating or calibrating model estimates.

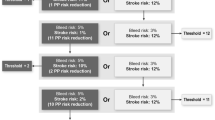

Box 2: Application of the histogram method
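
The following is a minimal Python sketch of how responses from a histogram-style elicitation might be processed. It assumes the common "chips and bins" form of the method, in which an expert distributes a fixed number of chips across bins covering the plausible range of a parameter. The bin edges, the chip counts, the `Histogram` class and the method-of-moments Beta fit are all illustrative assumptions rather than details taken from the article.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Histogram:
    """One expert's chip allocation over contiguous bins (hypothetical structure)."""
    edges: List[float]  # bin boundaries; one more entry than there are bins
    chips: List[int]    # number of chips the expert placed in each bin

    def __post_init__(self) -> None:
        if len(self.edges) != len(self.chips) + 1:
            raise ValueError("need exactly one more edge than bins")
        if sum(self.chips) <= 0:
            raise ValueError("the expert placed no chips")

    def probabilities(self) -> List[float]:
        # Each bin's probability is its share of all chips placed.
        total = sum(self.chips)
        return [c / total for c in self.chips]

    def midpoints(self) -> List[float]:
        return [(lo + hi) / 2 for lo, hi in zip(self.edges[:-1], self.edges[1:])]

    def mean(self) -> float:
        return sum(p * m for p, m in zip(self.probabilities(), self.midpoints()))

    def variance(self) -> float:
        # Approximation: all mass in a bin is treated as sitting at its midpoint.
        mu = self.mean()
        return sum(p * (m - mu) ** 2
                   for p, m in zip(self.probabilities(), self.midpoints()))


def beta_from_moments(mean: float, variance: float) -> Tuple[float, float]:
    """Method-of-moments Beta(alpha, beta) for a quantity bounded on (0, 1)."""
    if not 0.0 < mean < 1.0:
        raise ValueError("mean must lie strictly between 0 and 1")
    if not 0.0 < variance < mean * (1.0 - mean):
        raise ValueError("variance is incompatible with a Beta distribution")
    k = mean * (1.0 - mean) / variance - 1.0
    return mean * k, (1.0 - mean) * k


# Hypothetical response: 20 chips placed over five bins for a probability.
elicited = Histogram(edges=[0.0, 0.2, 0.4, 0.6, 0.8, 1.0], chips=[1, 6, 9, 3, 1])
alpha, beta = beta_from_moments(elicited.mean(), elicited.variance())
print(f"mean={elicited.mean():.3f}, variance={elicited.variance():.4f}")
print(f"fitted Beta(alpha={alpha:.2f}, beta={beta:.2f})")
```

Because bin midpoints ignore the spread within each bin, the variance is only an approximation. The choice of a Beta distribution is itself an assumption about shape, so the fitted curve would normally be shown back to the expert for confirmation.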

Box 3: Examples of biases in elicitation

Biases in elicitation can include:

- Biases associated with experts.
  - Motivational biases, e.g. when experts have an incentive (for example, financial) to reach a certain conclusion.
  - Cognitive biases: these commonly involve the use of heuristics, or ‘rules of thumb’, to help reach decisions, solve problems or form judgements quickly. Examples are:
    - Conjunction fallacy: When a conjunction of events is judged to be more probable than either of its constituent events.
    - Availability: Where easy-to-recall events (such as natural disasters) are judged to have a high probability of occurring.
    - Hindsight bias: The tendency to overestimate the predictability of past events.
    - Anchoring effect: The tendency to rely on an anchor value that does not provide any information about the actual value.
- Biases associated with elicitation methods.
  - Structuring elicitation questions: Biases may arise from how the question is framed; for example, if relevant events have been omitted, experts are less likely to consider them in replying. Biases can also occur when scales are used; for example, contraction bias occurs when the full range of a scale has not been presented to the expert.
  - Elicitation medium (e.g. interview or email survey) or aggregation method: As an example, experts in group meetings (typically conducted when consensus aggregation methods are applied) tend to adopt a stronger position, often resulting in overconfident statements.
  - Fitting probability distributions: Encoding the summaries elicited from the expert as a distribution usually implies assumptions about the shape of the distribution that may differ from what the expert intended. For example, an expert fitting distributions to his/her own beliefs may be driven by familiar probability distribution shapes, in particular bell-shaped curves (familiarity bias).

While the literature suggests that biases cannot be completely avoided, it is good practice to be aware of possible biases and to employ strategies to mitigate them (debiasing) in both designing and conducting the elicitation exercise.
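
As one illustration of a debiasing aid, an analyst could screen elicited probabilities for the conjunction fallacy described in this box. The Python sketch below checks that an elicited joint probability respects the standard bounds max(0, P(A) + P(B) − 1) ≤ P(A and B) ≤ min(P(A), P(B)). The function name, tolerance and example values are hypothetical and are not taken from the article.

```python
from typing import List


def conjunction_violations(p_a: float, p_b: float, p_a_and_b: float,
                           tol: float = 1e-9) -> List[str]:
    """Return a message for each probability bound the elicited values break."""
    for name, p in (("P(A)", p_a), ("P(B)", p_b), ("P(A and B)", p_a_and_b)):
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"{name} must lie in [0, 1], got {p}")

    problems = []
    upper = min(p_a, p_b)               # a conjunction cannot exceed either event
    lower = max(0.0, p_a + p_b - 1.0)   # nor fall below the forced overlap
    if p_a_and_b > upper + tol:
        problems.append(
            f"conjunction fallacy: P(A and B)={p_a_and_b} exceeds min(P(A), P(B))={upper}"
        )
    if p_a_and_b < lower - tol:
        problems.append(
            f"incoherent: P(A and B)={p_a_and_b} is below P(A)+P(B)-1={lower:.3f}"
        )
    return problems


# Hypothetical elicited values for two events and their conjunction.
for msg in conjunction_violations(p_a=0.30, p_b=0.60, p_a_and_b=0.40):
    print(msg)
```

A check like this only flags incoherence; resolving it would still require returning to the expert, since the code cannot tell which of the three judgements should change.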

About this article

Cite this article

Bojke, L., Grigore, B., Jankovic, D. et al. Informing Reimbursement Decisions Using Cost-Effectiveness Modelling: A Guide to the Process of Generating Elicited Priors to Capture Model Uncertainties. PharmacoEconomics 35, 867–877 (2017). https://doi.org/10.1007/s40273-017-0525-1

DOI: https://doi.org/10.1007/s40273-017-0525-1