Abstract

Consider a probability measure on a Hilbert space defined via its density with respect to a Gaussian. The purpose of this paper is to demonstrate that an appropriately defined Markov chain, which is reversible with respect to the measure in question, exhibits a diffusion limit to a noisy gradient flow, also reversible with respect to the same measure. The Markov chain is defined by applying a Metropolis–Hastings accept–reject mechanism (Tierney, Ann Appl Probab 8:1–9, 1998) to an Ornstein–Uhlenbeck (OU) proposal which is itself reversible with respect to the underlying Gaussian measure. The resulting noisy gradient flow is a stochastic partial differential equation driven by a Wiener process with spatial correlation given by the underlying Gaussian structure. There are two primary motivations for this work. The first concerns insight into Markov chain Monte Carlo (MCMC) methods for sampling measures on a Hilbert space defined via a density with respect to a Gaussian measure. These measures must be approximated on finite dimensional spaces of dimension \(N\) in order to be sampled. A conclusion of the work herein is that MCMC methods based on prior-reversible OU proposals will explore the target measure in \({\mathcal O}(1)\) steps with respect to dimension \(N\). This is to be contrasted with standard MCMC methods based on the random walk or Langevin proposals which require \({\mathcal O}(N)\) and \({\mathcal O}(N^{1/3})\) steps respectively (Mattingly et al., Ann Appl Prob 2011; Pillai et al., Ann Appl Prob 22:2320–2356, 2012). The second motivation relates to optimization. There are many applications where it is of interest to find global or local minima of a functional defined on an infinite dimensional Hilbert space. Gradient flow or steepest descent is a natural approach to this problem, but in its basic form requires computation of a gradient which, in some applications, may be an expensive or complex task. 
This paper shows that a stochastic gradient descent described by a stochastic partial differential equation can emerge from certain carefully specified Markov chains. This idea is well-known in the finite state (Kirkpatrick et al., Science 220:671–680, 1983; Cerny, J Optim Theory Appl 45:41–51, 1985) or finite dimensional context (Geman, IEEE Trans Geosci Remote Sens 1:269–276, 1985; Geman, SIAM J Control Optim 24:1031, 1986; Chiang, SIAM J Control Optim 25:737–753, 1987; J Funct Anal 83:333–347, 1989). The novelty of the work in this paper is that the emergence of the noisy gradient flow is developed on an infinite dimensional Hilbert space. In the context of global optimization, when the noise level is also adjusted as part of the algorithm, methods of the type studied here go by the name of simulated annealing; see the review (Bertsimas and Tsitsiklis, Stat Sci 8:10–15, 1993) for further references. Although we do not consider adjusting the noise level as part of the algorithm, the noise strength is a tuneable parameter in our construction and the methods developed here could potentially be used to study simulated annealing in a Hilbert space setting. The transferable idea behind this work is that conceiving of algorithms directly in the infinite dimensional setting leads to methods which are robust to finite dimensional approximation. We emphasize that discretizing, and then applying standard finite dimensional techniques in \(\mathbb {R}^N\), to either sample or optimize, can lead to algorithms which degenerate as the dimension \(N\) increases.


1 Introduction

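There are many applications where it is of interest to find global or local minima of a functional

$$\begin{aligned} J(x) \overset{{\tiny {\text{ def }}}}{=}\frac{1}{2}\Vert C^{-\frac{1}{2}}x\Vert ^2 + {\varPsi }(x), \end{aligned}$$(1)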

where \(C\) is a self-adjoint, positive and trace-class linear operator on a Hilbert space \(\Bigl (\mathcal {H},\langle \cdot ,\cdot \rangle , \Vert \cdot \Vert \Bigr ).\) Gradient flow or steepest descent is a natural approach to this problem, but in its basic form requires computation of the gradient of \({\varPsi }\) which, in some applications, may be an expensive or complex task. The purpose of this paper is to show how a stochastic gradient descent described by a stochastic partial differential equation can emerge from certain carefully specified random walks, when combined with a Metropolis–Hastings accept–reject mechanism [1]. In the finite state [4, 5] or finite dimensional context [6–9] this is a well-known idea, which goes by the name of simulated annealing; see the review [10] for further references. The novelty of the work in this paper is that the theory is developed on an infinite dimensional Hilbert space, leading to an algorithm which is robust to finite dimensional approximation: we adopt the “optimize then discretize” viewpoint (see [11], Chapter 3). We emphasize that discretizing, and then applying standard finite dimensional techniques in \(\mathbb {R}^N\) to optimize, can lead to algorithms which degenerate as \(N\) increases; the diffusion limit proved in [2] provides a concrete example of this phenomenon for the standard random walk algorithm.

The algorithms we construct have two basic building blocks: (i) drawing samples from the centred Gaussian measure \(N(0,C)\) and (ii) evaluating \({\varPsi }\). By judiciously combining these ingredients we generate (approximately) a noisy gradient flow for \(J\) with tunable temperature parameter controlling the size of the noise. In finite dimensions the basic idea is built from Metropolis–Hastings methods which have an invariant measure with Lebesgue density proportional to \(\exp \bigl (-\tau ^{-1}J(x)\bigr )\). The essential challenge in transferring these finite-dimensional algorithms to an infinite-dimensional setting is that there is no Lebesgue measure. This issue can be circumvented by working with measures defined via their density with respect to a Gaussian measure, and for us the natural Gaussian measure on \(\mathcal {H}\) is

$$\begin{aligned} \pi ^{\tau }_0 \overset{{\tiny {\text{ def }}}}{=}\mathrm N (0,\tau C). \end{aligned}$$(2)

The quadratic form \(\Vert C^{-\frac{1}{2}}x\Vert ^2\) is the square of the Cameron–Martin norm corresponding to the Gaussian measure \(\mathrm N (0,C)\). Given \(\pi ^{\tau }_0\) we may then define the (in general non-Gaussian) measure \(\pi ^{\tau }\) via its Radon–Nikodym derivative with respect to \(\pi ^{\tau }_0\):

$$\begin{aligned} \frac{d\pi ^{\tau }}{d\pi ^{\tau }_0}(x) \propto \exp \bigl (-\tau ^{-1}{\varPsi }(x)\bigr ). \end{aligned}$$(3)

We assume that \(\exp \bigl (-\tau ^{-1}{\varPsi }(\cdot )\bigr )\) is in \(L^1_{\pi _0^{\tau }}\). Note that if \(\mathcal {H}\) is finite dimensional then \(\pi ^{\tau }\) has Lebesgue density proportional to \(\exp \bigl (-\tau ^{-1}J(x)\bigr ).\)
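The finite-dimensional statement follows from a one-line computation. Assuming, for this illustration only, that \(\mathcal {H}=\mathbb {R}^N\) and that \(C\) is invertible, so that \(\pi ^{\tau }_0=\mathrm N (0,\tau C)\) has a Lebesgue density proportional to \(\exp \bigl (-\frac{1}{2\tau }\Vert C^{-\frac{1}{2}}x\Vert ^2\bigr )\):

$$\begin{aligned} \pi ^{\tau }(dx) \propto \exp \bigl (-\tau ^{-1}{\varPsi }(x)\bigr )\, \pi ^{\tau }_0(dx) \propto \exp \Big (-\tau ^{-1}\Big [ \tfrac{1}{2}\Vert C^{-\frac{1}{2}}x\Vert ^2 + {\varPsi }(x)\Big ]\Big )\, dx = \exp \bigl (-\tau ^{-1}J(x)\bigr )\, dx . \end{aligned}$$

In infinite dimensions the Lebesgue factor \(dx\) has no counterpart, which is why only the Gaussian-density formulation is used.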

Our basic strategy will be to construct a Markov chain which is \(\pi ^{\tau }\)-invariant and to show that a piecewise linear interpolant of the Markov chain converges weakly (in the sense of probability measures) to the desired noisy gradient flow in an appropriate parameter limit. To motivate the Markov chain we first observe that the linear SDE in \(\mathcal {H}\) given by

$$\begin{aligned} \frac{dz}{dt} = -z + \sqrt{2\tau }\,\frac{dW}{dt}, \end{aligned}$$(4)

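where \(W\) is a Brownian motion in \(\mathcal {H}\) with covariance operator equal to \(C\), is reversible and ergodic with respect to \(\pi _0^{\tau }\) given by (2) [12]. If \(t>0\) then the exact solution of this equation started at \(z(0)=x\) has the following form in law, with \(\delta =\frac{1}{2}(1-e^{-2t})\):

$$\begin{aligned} z(t) = e^{-t}x + \sqrt{\tau (1-e^{-2t})}\,\xi = (1-2\delta )^{\frac{1}{2}}\,x + \sqrt{2\delta \tau }\,\xi , \end{aligned}$$(5)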

where \(\xi \) is a Gaussian random variable drawn from \(N(0,C)\), independent of \(x\). Given a current state \(x\) of our Markov chain we will propose to move to \(z(t)\) given by this formula, for some choice of \(t>0\). We will then accept or reject this proposed move with probability found from pointwise evaluation of \({\varPsi }\), resulting in a Markov chain \(\{x^{k,\delta }\}_{k \in \mathbb {Z}^+}.\) The resulting Markov chain corresponds to the preconditioned Crank–Nicolson, or pCN, method, also referred to as the PIA method with \((\alpha ,\theta )=(0,\frac{1}{2})\) in the paper [13] where it was introduced; this is one of a family of Metropolis–Hastings methods defined on the Hilbert space \(\mathcal{H}\) and the review [14] provides further details.
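The pCN step just described can be sketched on a truncated Karhunen–Loève representation: we keep only the first \(N\) coordinates \(x_j=\langle x,\varphi _j\rangle \) and take \(C\) diagonal with entries \(\lambda _j^2\). The truncation, the eigenvalues and the stand-in potential `psi` are illustrative assumptions, not part of the paper. Because the proposal is reversible with respect to \(\pi ^{\tau }_0\), the Metropolis–Hastings acceptance probability only involves \({\varPsi }\), namely \(1\wedge \exp \bigl (-\tau ^{-1}({\varPsi }(y)-{\varPsi }(x))\bigr )\).

```python
import math
import random


def pcn_chain(psi, x0, lam, tau, delta, n_steps, seed=0):
    """Run a pCN Metropolis-Hastings chain on truncated coordinates.

    psi   -- callable on a list of coordinates (hypothetical stand-in for Psi)
    x0    -- starting coordinates
    lam   -- lambda_j values, so that C = diag(lambda_j^2)
    tau   -- temperature; delta in (0, 1/2) sets the proposal step
    Returns (list of visited states, empirical acceptance rate).
    """
    rng = random.Random(seed)
    a = math.sqrt(1.0 - 2.0 * delta)   # (1 - 2 delta)^{1/2} = e^{-t}
    b = math.sqrt(2.0 * delta * tau)   # noise scale sqrt(2 delta tau)
    x, psi_x = list(x0), psi(x0)
    chain, n_accepted = [list(x)], 0
    for _ in range(n_steps):
        xi = [l * rng.gauss(0.0, 1.0) for l in lam]       # xi ~ N(0, C)
        y = [a * xj + b * zj for xj, zj in zip(x, xi)]      # OU (pCN) proposal
        psi_y = psi(y)
        log_alpha = -(psi_y - psi_x) / tau
        alpha = 1.0 if log_alpha >= 0.0 else math.exp(log_alpha)  # no overflow
        if rng.random() < alpha:                             # accept with prob. alpha
            x, psi_x = y, psi_y
            n_accepted += 1
        chain.append(list(x))                                # on rejection, stay at x
    return chain, n_accepted / n_steps


if __name__ == "__main__":
    # Hypothetical example: one mode, lambda_1 = 1, Psi(x) = x^2 / 2, tau = 1,
    # for which pi^tau = N(0, 1/2).
    chain, rate = pcn_chain(lambda v: 0.5 * v[0] ** 2, [0.0], [1.0], 1.0, 0.2, 20000)
    burn = chain[1000:]
    m2 = sum(v[0] ** 2 for v in burn) / len(burn)
    print(f"acceptance rate {rate:.2f}, second moment {m2:.3f} (target 0.5)")
```

With \({\varPsi }\equiv 0\) every proposal is accepted and the chain reduces to an exact discretization of the OU process; any non-trivial \({\varPsi }\) enters only through the accept–reject test.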

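From the output of the pCN Metropolis–Hastings method we construct a continuous interpolant of the Markov chain defined by

$$\begin{aligned} z^{\delta }(t) \overset{{\tiny {\text{ def }}}}{=}\frac{1}{\delta }(t-t_k)\,x^{k+1,\delta } + \frac{1}{\delta }(t_{k+1}-t)\,x^{k,\delta } \qquad \text {for } t_k \le t < t_{k+1}, \end{aligned}$$(6)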

with \(t_k \overset{{\tiny {\text{ def }}}}{=}k \delta .\) The main result of the paper is that as \(\delta \rightarrow 0\) the Hilbert-space valued function of time \(z^{\delta }\) converges weakly to \(z\) solving the Hilbert space valued SDE, or SPDE, following the dynamics

$$\begin{aligned} \frac{dz}{dt} = -\Big ( z + C\nabla {\varPsi }(z) \Big ) + \sqrt{2\tau }\,\frac{dW}{dt} \end{aligned}$$(7)

on pathspace. This equation is reversible and ergodic with respect to the measure \(\pi ^{\tau }\) [12, 15]. It is also known that small ball probabilities are asymptotically maximized (in the small radius limit), under \(\pi ^{\tau }\), on balls centred at minimizers of \(J\) [16]. The result thus shows that the algorithm will generate sequences which concentrate near minimizers of \(J\).

Because the SDE (7) does not possess the smoothing property, almost sure fine scale properties under its invariant measure \(\pi ^{\tau }\) are not necessarily reflected at any finite time. For example, if \(C\) is the covariance operator of Brownian motion or Brownian bridge then the quadratic variation of draws from the invariant measure, an almost sure quantity, is not reproduced at any finite time in (7) unless \(z(0)\) has this quadratic variation; the almost sure property is approached asymptotically as \(t \rightarrow \infty \). This behaviour is reflected in the underlying Metropolis–Hastings Markov chain pCN with weak limit (7), where the almost sure property is only reached asymptotically as the number of steps \(k \rightarrow \infty .\) In a second result of this paper we will show that almost sure quantities such as the quadratic variation under pCN satisfy a limiting linear ODE with globally attractive steady state given by the value of the quantity under \(\pi ^{\tau }\). This gives quantitative information about the rate at which the pCN algorithm approaches statistical equilibrium.
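The flavour of this second result can be seen in a toy case that is not the one analysed in Sect. 5. Take \({\varPsi }\equiv 0\), so that every pCN proposal is accepted, and track the hypothetical statistic \(q=N^{-1}\sum _{j\le N}x_j^2/\lambda _j^2\) over the first \(N\) modes. Under \(\pi ^{\tau }_0\) this average tends to \(\tau \) almost surely as \(N\rightarrow \infty \), much as quadratic variation is an almost sure quantity. Under pCN its mean obeys \(\mathbb {E}q^{k+1}=(1-2\delta )\,\mathbb {E}q^k+2\delta \tau \), whose \(\delta \rightarrow 0\) limit with \(t=k\delta \) is the linear ODE \(\dot{q}=-2(q-\tau )\), with globally attractive steady state \(\tau \). The sketch compares the recursion, the ODE and one simulated path.

```python
import math
import random


def mean_recursion(q0, tau, delta, n_steps):
    """E[q^{k+1}] = (1 - 2 delta) E[q^k] + 2 delta tau (exact when Psi = 0)."""
    q = [q0]
    for _ in range(n_steps):
        q.append((1.0 - 2.0 * delta) * q[-1] + 2.0 * delta * tau)
    return q


def fluid_ode(q0, tau, t):
    """Solution of dq/dt = -2 (q - tau), attracted to tau from any q0."""
    return tau + (q0 - tau) * math.exp(-2.0 * t)


def simulate_q(n_modes, tau, delta, n_steps, seed=0):
    """pCN with Psi = 0 started at x = 0, in whitened coordinates u_j = x_j / lambda_j.

    Returns q^k = (1/N) sum_j u_j^2 for k = 0, ..., n_steps.
    """
    rng = random.Random(seed)
    a, b = math.sqrt(1.0 - 2.0 * delta), math.sqrt(2.0 * delta * tau)
    u = [0.0] * n_modes
    qs = [0.0]
    for _ in range(n_steps):
        u = [a * uj + b * rng.gauss(0.0, 1.0) for uj in u]  # every proposal accepted
        qs.append(sum(uj * uj for uj in u) / n_modes)
    return qs
```

For instance, with \(\delta =0.01\), \(\tau =1\), \(q^0=0\) and 100 steps (so \(t=1\)), the recursion and the ODE differ by less than \(0.01\) at every step, and a simulated path with a few thousand modes stays close to both.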

We have motivated the limit theorem in this paper through the goal of creating noisy gradient flow in infinite dimensions with tuneable noise level, using only draws from a Gaussian random variable and evaluation of the non-quadratic part of the objective function. A second motivation for the work comes from understanding the computational complexity of MCMC methods, and for this it suffices to consider \(\tau \) fixed at \(1\). The paper [2] shows that discretization of the standard Random Walk Metropolis algorithm, S-RWM, will also have diffusion limit given by (7) as the dimension of the discretized space tends to infinity, whilst the time increment \(\delta \) in (6) decreases at a rate inversely proportional to \(N\). The condition on \(\delta \) is a form of CFL condition, in the language of computational PDEs, and implies that \(\mathcal{O}(N)\) steps will be required to sample the desired probability distribution. In contrast the pCN method analyzed here has no CFL restriction: \(\delta \) may tend to zero independently of dimension; indeed in this paper we work directly in the setting of infinite dimension. The reader interested in this computational statistics perspective on diffusion limits may also wish to consult the paper [3] which demonstrates that the Metropolis-adjusted Langevin algorithm, MALA, requires a CFL condition which implies that \(\mathcal{O}(N^{\frac{1}{3}})\) steps are required to sample the desired probability distribution. Furthermore, the formulation of the limit theorem that we prove in this paper is closely related to the methodologies introduced in [2] and [3]; it should be mentioned nevertheless that the analysis carried out in this article allows us to prove a diffusion limit for a sequence of Markov chains evolving in a possibly non-stationary regime. This was not the case in [2] and [3].

We prove in Theorem 4 that for a fixed temperature parameter \(\tau >0\), as the time increment \(\delta \) goes to \(0\), the pCN algorithm behaves as a stochastic gradient descent. By adapting the temperature \(\tau \in (0,\infty )\) according to an appropriate cooling schedule it is possible to locate global minima of \(J\); standard heuristics show that the distribution \(\pi ^{\tau }\) concentrates on a \(\tau ^{1/2}\)-neighbourhood around the global minima of the functional \(J\). We stress though that all the proofs presented in this article assume a constant temperature. The asymptotic analysis of the effect of the cooling schedule is left for future work; the study of such Hilbert space valued simulated annealing algorithms presents several challenges, one of them being that the probability distributions \(\pi ^{\tau }\) are mutually singular for different temperatures \(\tau >0\).

In Sect. 2 we describe some notation used throughout the paper, discuss the required properties of Gaussian measures and Hilbert-space valued Brownian motions, and state our assumptions. Section 3 contains a precise definition of the Markov chain \(\{x^{k,\delta }\}_{k \ge 0}\), together with statement and proof of the weak convergence theorem that is the main result of the paper. Section 4 contains the proofs of the lemmas which underlie the weak convergence theorem. In Sect. 5 we state and prove the limit theorem for almost sure quantities such as quadratic variation; such results are often termed “fluid limits” in the applied probability literature. An example is presented in Sect. 6. We conclude in Sect. 7.

2 Preliminaries

In this section we fix some notational conventions, recall the relevant facts about Gaussian measures and Brownian motion in Hilbert space, and state our assumptions concerning the operator \(C\) and the functional \({\varPsi }.\)

2.1 Notation

Let \(\Bigl (\mathcal {H}, \langle \cdot , \cdot \rangle , \Vert \cdot \Vert \Bigr )\) denote a separable Hilbert space of real valued functions with the canonical norm derived from the inner-product. Let \(C\) be a positive symmetric trace class operator on \(\mathcal {H}\) and \(\{\varphi _j,\lambda ^2_j\}_{j \ge 1}\) be the eigenfunctions and eigenvalues of \(C\) respectively, so that \(C\varphi _j = \lambda ^2_j \,\varphi _j\) for \(j \in \mathbb {N}\). We assume a normalization under which \(\{\varphi _j\}_{j \ge 1}\) forms a complete orthonormal basis in \(\mathcal {H}\). For every \(x \in \mathcal {H}\) we have the representation \(x = \sum _{j} \; x_j \varphi _j\) where \(x_j=\langle x,\varphi _j\rangle .\) Using this notation, we define Sobolev-like spaces \(\mathcal {H}^r, r \in \mathbb {R}\), with the inner-products and norms defined by

$$\begin{aligned} \langle x,y \rangle _r \overset{{\tiny {\text{ def }}}}{=}\sum _{j \ge 1} j^{2r}\, x_j\, y_j \qquad \text {and} \qquad \Vert x\Vert ^2_r \overset{{\tiny {\text{ def }}}}{=}\langle x,x \rangle _r . \end{aligned}$$

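Notice that \(\mathcal {H}^0 = \mathcal {H}\). Furthermore \(\mathcal {H}^r \subset \mathcal {H}\subset \mathcal {H}^{-r}\) and \(\{j^{-r} \varphi _j\}_{j \ge 1}\) is an orthonormal basis of \(\mathcal {H}^r\) for any \(r >0\). For a positive, self-adjoint operator \(D : \mathcal {H}^r \mapsto \mathcal {H}^r\), its trace in \(\mathcal {H}^r\) is defined as

$$\begin{aligned} \mathrm {Trace}_{\mathcal {H}^r}(D) \overset{{\tiny {\text{ def }}}}{=}\sum _{j \ge 1} \langle j^{-r}\varphi _j,\, D\, j^{-r}\varphi _j \rangle _r . \end{aligned}$$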

Since \(\mathrm {Trace}_{\mathcal {H}^r}(D)\) does not depend on the choice of orthonormal basis, the operator \(D\) is said to be trace class in \(\mathcal {H}^r\) if \(\mathrm {Trace}_{\mathcal {H}^r}(D) < \infty \) for some, and hence any, orthonormal basis of \(\mathcal {H}^r\). Let \(\otimes _{\mathcal {H}^r}\) denote the outer product operator in \(\mathcal {H}^r\) defined by

$$\begin{aligned} \big (x \otimes _{\mathcal {H}^r} y\big )\, z \overset{{\tiny {\text{ def }}}}{=}\langle y,z \rangle _r \; x \end{aligned}$$

for vectors \(x,y,z \in \mathcal {H}^r\). For an operator \(L: \mathcal {H}^r \mapsto \mathcal {H}^l\), we denote its operator norm by \(\Vert \cdot \Vert _{\mathcal{L}(\mathcal{H}^{r},\mathcal{H}^{l})}\) defined by \(\Vert L\Vert _{\mathcal{L}(\mathcal{H}^{r},\mathcal{H}^{l})} \overset{{\tiny {\text{ def }}}}{=}\sup \, \big \{ \Vert L x\Vert _l : \Vert x\Vert _r=1 \big \}\). For self-adjoint \(L\) and \(r=l=0\) this is, of course, the spectral radius of \(L\). Throughout we use the following notation.

- Two sequences \(\{\alpha _n\}_{n \ge 0}\) and \(\{\beta _n\}_{n \ge 0}\) satisfy \(\alpha _n \lesssim \beta _n\) if there exists a constant \(K>0\) satisfying \(\alpha _n \le K \beta _n\) for all \(n \ge 0\). The notation \(\alpha _n \asymp \beta _n\) means that \(\alpha _n \lesssim \beta _n\) and \(\beta _n \lesssim \alpha _n\).
- Two sequences of real functions \(\{f_n\}_{n \ge 0}\) and \(\{g_n\}_{n \ge 0}\) defined on the same set \({\varOmega }\) satisfy \(f_n \lesssim g_n\) if there exists a constant \(K>0\) satisfying \(f_n(x) \le K g_n(x)\) for all \(n \ge 0\) and all \(x \in {\varOmega }\). The notation \(f_n \asymp g_n\) means that \(f_n \lesssim g_n\) and \(g_n \lesssim f_n\).
- The notation \(\mathbb {E}_x \big [ f(x,\xi ) \big ]\) denotes expectation with the variable \(x\) held fixed, while the randomness present in \(\xi \) is averaged out.
- We use the notation \(a \wedge b\) instead of \(\min (a,b)\).
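In coordinates \(x_j=\langle x,\varphi _j\rangle \), the inner products \(\langle \cdot ,\cdot \rangle _r\), the norms \(\Vert \cdot \Vert _r\) and the trace in \(\mathcal {H}^r\) defined above are straightforward to evaluate on finitely many modes. The sketch below is illustrative only: it truncates to a finite list of coordinates and, for the trace, restricts to operators that are diagonal in \(\{\varphi _j\}\), for which the trace in \(\mathcal {H}^r\) reduces to the sum of the eigenvalues whatever \(r\) is.

```python
import math


def inner_r(x, y, r):
    """<x, y>_r = sum_{j>=1} j^{2r} x_j y_j for coordinate lists x, y (index j starts at 1)."""
    return sum(j ** (2 * r) * xj * yj for j, (xj, yj) in enumerate(zip(x, y), start=1))


def norm_r(x, r):
    """||x||_r = sqrt(<x, x>_r)."""
    return math.sqrt(inner_r(x, x, r))


def basis_r(j, r, n):
    """Coordinates of the H^r-orthonormal vector j^{-r} phi_j, truncated to n modes."""
    e = [0.0] * n
    e[j - 1] = j ** (-r)
    return e


def trace_r_diagonal(eigs, r):
    """Trace in H^r of D with D phi_j = eigs[j-1] phi_j, summed over the basis {j^{-r} phi_j}."""
    n = len(eigs)
    total = 0.0
    for j in range(1, n + 1):
        e = basis_r(j, r, n)
        De = [d * ei for d, ei in zip(eigs, e)]
        total += inner_r(e, De, r)
    return total
```

Applied to the eigenvalues \(j^{2r}\lambda _j^2\) of the operator \(C_r\) introduced in Sect. 2.2, the same routine returns a partial sum of \(\mathrm {Trace}_{\mathcal {H}^r}(C_r)\).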

2.2 Gaussian measure on Hilbert space

The following facts concerning Gaussian measures on Hilbert space, and Brownian motion in Hilbert space, may be found in [17]. Since \(C\) is self-adjoint, positive and trace-class we may associate with it a centred Gaussian measure \(\pi _0\) on \(\mathcal {H}\) with covariance operator \(C\), i.e., \(\pi _0 \overset{{\tiny {\text{ def }}}}{=}\mathrm N (0,C)\). If \(x \overset{\mathcal {D}}{\sim }\pi _0\) then we may write its Karhunen–Loève expansion,

$$\begin{aligned} x = \sum _{j \ge 1} \lambda _j\, \rho _j\, \varphi _j , \end{aligned}$$

with \(\{\rho _j\}_{j \ge 1}\) an i.i.d. sequence of standard centred Gaussian random variables; since \(C\) is trace-class, the above sum converges in \(L^2\). Notice that for any value of \(r \in \mathbb {R}\) we have \(\mathbb {E}\Vert X\Vert _r^2 = \sum _{j \ge 1} j^{2r}\, \mathbb {E}\langle X,\varphi _j \rangle ^2 = \sum _{j \ge 1} j^{2r} \lambda _j^2\) for \(X \overset{\mathcal {D}}{\sim }\pi _0\). For values of \(r \in \mathbb {R}\) such that \(\mathbb {E}\Vert X\Vert _r^2 < \infty \) we indeed have \(\pi _0\big ( \mathcal {H}^r \big )=1\) and the random variable \(X\) can also be described as a Gaussian random variable in \(\mathcal {H}^r\). One can readily check that in this case the covariance operator \(C_r:\mathcal {H}^r \rightarrow \mathcal {H}^r\) of \(X\) when viewed as an \(\mathcal {H}^r\)-valued random variable is given by

$$\begin{aligned} C_r = B^{\frac{1}{2}}_r \, C \, B^{\frac{1}{2}}_r , \end{aligned}$$

where \(B_r : \mathcal {H}\mapsto \mathcal {H}\) denotes the operator which is diagonal in the basis \(\{\varphi _j\}_{j \ge 1}\) with diagonal entries \(j^{2r}\). In other words, \(B_r \,\varphi _j = j^{2r} \varphi _j\) so that \(B^{\frac{1}{2}}_r \,\varphi _j = j^r \varphi _j\) and \(\mathbb {E}\big [ \langle X,u \rangle _r \langle X,v \rangle _r \big ] = \langle u,C_r v \rangle _r\) for \(u,v \in \mathcal {H}^r\) and \(X \overset{\mathcal {D}}{\sim }\pi _0\). The condition \(\mathbb {E}\Vert X\Vert _r^2 < \infty \) can equivalently be stated as

$$\begin{aligned} \mathrm {Trace}_{\mathcal {H}^r}(C_r) = \sum _{j \ge 1} j^{2r} \lambda _j^2 < \infty . \end{aligned}$$

This shows that even though the Gaussian measure \(\pi _0\) is defined on \(\mathcal {H}\), depending on the decay of the eigenvalues of \(C\), there exists an entire range of values of \(r\) such that \(\mathbb {E}\Vert X\Vert _r^2 = \mathrm {Trace}_{\mathcal {H}^r}(C_r) < \infty \) and in that case the measure \(\pi _0\) has full support on \(\mathcal {H}^r\).
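The range of admissible \(r\) can be checked numerically. The sketch below assumes, purely for illustration, eigenvalues \(\lambda _j^2=j^{-2\kappa }\) (the decay later imposed in Assumption A1): partial sums of \(\sum _j j^{2r}\lambda _j^2\) settle down for \(r<\kappa -\frac{1}{2}\) and keep growing otherwise, and a Monte Carlo average over truncated Karhunen–Loève draws reproduces the finite value.

```python
import random


def kl_draw(n_modes, kappa, rng):
    """Truncated Karhunen-Loeve draw x_j = lambda_j rho_j, rho_j i.i.d. N(0,1), lambda_j = j^{-kappa}."""
    return [j ** (-kappa) * rng.gauss(0.0, 1.0) for j in range(1, n_modes + 1)]


def trace_Cr_partial(n_modes, kappa, r):
    """Partial sum of Trace_{H^r}(C_r) = sum_j j^{2r} lambda_j^2 = sum_j j^{2r - 2 kappa}."""
    return sum(j ** (2 * r - 2 * kappa) for j in range(1, n_modes + 1))


def mc_norm_r_sq(n_modes, kappa, r, n_samples, seed=0):
    """Monte Carlo estimate of E ||X||_r^2 for the truncated Gaussian X."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        x = kl_draw(n_modes, kappa, rng)
        total += sum(j ** (2 * r) * xj * xj for j, xj in enumerate(x, start=1))
    return total / n_samples
```

With \(\kappa =1\), the partial sums for \(r=0.2\) change by less than \(0.01\) between \(10^4\) and \(10^5\) modes, whereas for \(r=0.6\) they still grow by more than \(10\), consistent with the threshold \(r<\kappa -\frac{1}{2}\).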

Frequently in applications the functional \({\varPsi }\) arising in (1) may not be defined on all of \(\mathcal {H}\), but only on a subspace \(\mathcal {H}^s \subset \mathcal {H}\), for some exponent \(s>0\). From now onwards we fix a distinguished exponent \(s>0\) and assume that \({\varPsi }:\mathcal {H}^s \rightarrow \mathbb {R}\) and that \(\mathrm {Trace}_{\mathcal {H}^s}(C_s) < \infty \) so that \(\pi _0(\mathcal {H}^s)=\pi _0^{\tau }(\mathcal {H}^s)=\pi ^{\tau }(\mathcal {H}^s)=1\); the change of measure formula (3) is then well defined. For ease of notation we introduce

$$\begin{aligned} \hat{\varphi }_j \overset{{\tiny {\text{ def }}}}{=}j^{-s}\, \varphi _j \end{aligned}$$

so that the family \(\{\hat{\varphi }_j\}_{j \ge 1}\) forms an orthonormal basis for \(\big (\mathcal {H}^s, \langle \cdot ,\cdot \rangle _s\big )\). We may view the Gaussian measure \(\pi _0 = \mathrm N (0,C)\) on \(\big (\mathcal {H}, \langle \cdot ,\cdot \rangle \big )\) as a Gaussian measure \(\mathrm N (0,C_s)\) on \(\big (\mathcal {H}^s, \langle \cdot ,\cdot \rangle _s\big )\).

A Brownian motion \(\{W(t)\}_{t \ge 0}\) in \(\mathcal {H}^s\) with covariance operator \(C_s:\mathcal {H}^s \rightarrow \mathcal {H}^s\) is a continuous Gaussian process with stationary increments satisfying \(\mathbb {E}\big [ \langle W(t),x \rangle _s \langle W(t),y \rangle _s \big ] = t \langle x,C_s y \rangle _s\). For example, taking \(\{\beta _j(t)\}_{j \ge 1}\) independent standard real Brownian motions, the process

$$\begin{aligned} W(t) = \sum _{j=1}^{\infty } \big ( j^{s}\lambda _j \big )\, \beta _j(t)\, \hat{\varphi }_j \end{aligned}$$(12)

defines a Brownian motion in \(\mathcal {H}^s\) with covariance operator \(C_s\); equivalently, this same process \(\{W(t)\}_{t \ge 0}\) can be described as a Brownian motion in \(\mathcal {H}\) with covariance operator equal to \(C\) since Eq. (12) may also be expressed as \(W(t) = \sum _{j=1}^{\infty } \lambda _j \beta _j(t) \varphi _j\).
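The series (12) also gives a simple way to simulate \(W\) on finitely many modes. The sketch below assumes, for illustration only, \(\lambda _j=j^{-\kappa }\) and a uniform time grid; it builds each \(\beta _j\) from independent Gaussian increments, and the accompanying check is that \(\mathbb {E}\langle W(t),\hat{\varphi }_j\rangle _s^2 \approx t\, j^{2s}\lambda _j^2 = t\,\langle \hat{\varphi }_j, C_s\hat{\varphi }_j\rangle _s\).

```python
import math
import random


def brownian_path(n_modes, kappa, n_steps, t_final, rng):
    """Truncated W(t) = sum_j lambda_j beta_j(t) phi_j on a uniform grid, lambda_j = j^{-kappa}.

    Returns a list over grid times of coordinate lists (W_1(t), ..., W_N(t)) in the basis {phi_j}.
    """
    dt = t_final / n_steps
    lam = [j ** (-kappa) for j in range(1, n_modes + 1)]
    beta = [0.0] * n_modes
    path = [[0.0] * n_modes]
    for _ in range(n_steps):
        beta = [b + math.sqrt(dt) * rng.gauss(0.0, 1.0) for b in beta]  # independent increments
        path.append([l * b for l, b in zip(lam, beta)])
    return path


def hat_coords(w, s):
    """Coordinates <w, hat phi_j>_s = j^{2s} w_j j^{-s} = j^s w_j."""
    return [j ** s * wj for j, wj in enumerate(w, start=1)]
```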

2.3 Assumptions

In this section we describe the assumptions on the covariance operator \(C\) of the Gaussian measure \(\pi _0 =\mathrm N (0,C)\) and the functional \({\varPsi }\), and the connections between them. Roughly speaking we will assume that the second-derivative of \({\varPsi }\) is globally bounded as an operator acting between two spaces which arise naturally from understanding the domain of the function \({\varPsi }\); furthermore the domain of \({\varPsi }\) must be a set of full measure with respect to the underlying Gaussian. If the eigenvalues of \(C\) decay like \(j^{-2\kappa }\) and \(\kappa >\frac{1}{2}\) then \(\pi _0^{\tau }(\mathcal {H}^s)=1\) for all \(s<\kappa -\frac{1}{2}\) and so we will assume eigenvalue decay of this form and assume the domain of \({\varPsi }\) is defined appropriately. We now formalize these ideas.

For each \(x \in \mathcal {H}^s\) the derivative \(\nabla {\varPsi }(x)\) is an element of the dual \((\mathcal {H}^s)^*\) of \(\mathcal {H}^s\), comprising the linear functionals on \(\mathcal {H}^s\). However, we may identify \((\mathcal {H}^s)^* = \mathcal {H}^{-s}\) and view \(\nabla {\varPsi }(x)\) as an element of \(\mathcal {H}^{-s}\) for each \(x \in \mathcal {H}^s\). With this identification, the following identity holds

$$\begin{aligned} \Vert \nabla {\varPsi }(x)\Vert _{\mathcal {L}(\mathcal {H}^s,\mathbb {R})} = \Vert \nabla {\varPsi }(x)\Vert _{-s} , \end{aligned}$$

and the second derivative \(\partial ^2 {\varPsi }(x)\) can be identified with an element of \(\mathcal {L}(\mathcal {H}^s, \mathcal {H}^{-s})\). To avoid technicalities we assume that \({\varPsi }(x)\) is quadratically bounded, with first derivative linearly bounded and second derivative globally bounded. Weaker, localized assumptions could be dealt with by use of stopping time arguments.

Assumption 1

The functional \({\varPsi }\) and the covariance operator \(C\) satisfy the following assumptions.

- A1. Decay of eigenvalues \(\lambda _j^2\) of \(C\): there exists a constant \(\kappa > \frac{1}{2}\) such that

$$\begin{aligned} \lambda _j \asymp j^{-\kappa }. \end{aligned}$$(13)

- A2. Domain of \({\varPsi }\): there exists an exponent \(s \in [0, \kappa - 1/2)\) such that \({\varPsi }\) is defined on \(\mathcal {H}^s\).

- A3. Size of \({\varPsi }\): the functional \({\varPsi }:\mathcal {H}^s \rightarrow \mathbb {R}\) satisfies the growth conditions

$$\begin{aligned} 0 \le {\varPsi }(x) \lesssim 1 + \Vert x\Vert _s^2 . \end{aligned}$$

- A4. Derivatives of \({\varPsi }\): the derivatives of \({\varPsi }\) satisfy

$$\begin{aligned} \Vert \nabla {\varPsi }(x)\Vert _{-s} \lesssim 1 + \Vert x\Vert _s \qquad \text {and} \qquad \Vert \partial ^2 {\varPsi }(x)\Vert _{\mathcal{L}(\mathcal{H}^{s},\mathcal{H}^{-s})} \lesssim 1. \end{aligned}$$

Remark 1

The condition \(\kappa > \frac{1}{2}\) ensures that \(\mathrm {Trace}_{\mathcal {H}^r}(C_r) < \infty \) for any \(r < \kappa - \frac{1}{2}\): this implies that \(\pi ^{\tau }_0(\mathcal {H}^r)=1\) for any \(\tau > 0\) and \(r < \kappa - \frac{1}{2}\).

Remark 2

The functional \({\varPsi }(x) = \frac{1}{2}\Vert x\Vert _s^2\) is defined on \(\mathcal {H}^s\) and satisfies Assumptions 1. Its derivative at \(x \in \mathcal {H}^s\) is given by \(\nabla {\varPsi }(x) = \sum _{j \ge 1} j^{2s} x_j \varphi _j \in \mathcal {H}^{-s}\) with \(\Vert \nabla {\varPsi }(x)\Vert _{-s} = \Vert x\Vert _s\). The second derivative \(\partial ^2 {\varPsi }(x) \in \mathcal {L}(\mathcal {H}^s, \mathcal {H}^{-s})\) is the linear operator that maps \(u \in \mathcal {H}^s\) to \(\sum _{j \ge 1} j^{2s} \langle u,\varphi _j \rangle \varphi _j \in \mathcal {H}^{-s}\): its norm satisfies \(\Vert \partial ^2 {\varPsi }(x) \Vert _{\mathcal {L}(\mathcal {H}^s, \mathcal {H}^{-s})} = 1\) for any \(x \in \mathcal {H}^s\).
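The formulas in Remark 2 can be checked on finitely many coordinates. The sketch below is a hypothetical truncation to a few modes: it compares the pairing \(\langle \nabla {\varPsi }(x),v\rangle \) with a central finite difference of \({\varPsi }\), checks \(\Vert \nabla {\varPsi }(x)\Vert _{-s}=\Vert x\Vert _s\), and checks that \(\partial ^2{\varPsi }\) maps the \(\mathcal {H}^s\)-norm isometrically onto the \(\mathcal {H}^{-s}\)-norm, which is why its operator norm equals \(1\).

```python
import math


def norm_r(x, r):
    """||x||_r for coordinates x_j = <x, phi_j>, j = 1, 2, ..."""
    return math.sqrt(sum(j ** (2 * r) * xj * xj for j, xj in enumerate(x, start=1)))


def psi(x, s):
    """Psi(x) = (1/2) ||x||_s^2."""
    return 0.5 * norm_r(x, s) ** 2


def grad_psi(x, s):
    """Coordinates of grad Psi(x) = sum_j j^{2s} x_j phi_j, an element of H^{-s}.

    Psi is quadratic, so d^2 Psi(x) u is given by the same formula applied to u.
    """
    return [j ** (2 * s) * xj for j, xj in enumerate(x, start=1)]


def pairing(g, v):
    """Duality pairing <g, v> between H^{-s} and H^s, computed as sum_j g_j v_j."""
    return sum(gj * vj for gj, vj in zip(g, v))


def directional_fd(x, v, s, h=1e-6):
    """Central finite-difference approximation of the derivative of Psi at x in direction v."""
    xp = [a + h * b for a, b in zip(x, v)]
    xm = [a - h * b for a, b in zip(x, v)]
    return (psi(xp, s) - psi(xm, s)) / (2.0 * h)
```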

Assumptions 1 ensure that the functional \({\varPsi }\) behaves well in a sense made precise in the following lemma.

Lemma 2

Let Assumptions 1 hold.

1. The function \(d(x) \overset{{\tiny {\text{ def }}}}{=}-\Big ( x + C \nabla {\varPsi }(x) \Big )\) is globally Lipschitz on \(\mathcal {H}^s\):

$$\begin{aligned} \Vert d(x) - d(y)\Vert _s \lesssim \Vert x-y\Vert _s \qquad \qquad \forall x,y \in \mathcal {H}^s. \end{aligned}$$(14)

2. The second-order remainder term in the Taylor expansion of \({\varPsi }\) satisfies

$$\begin{aligned} \big | {\varPsi }(y)-{\varPsi }(x) - \langle \nabla {\varPsi }(x), y-x \rangle \big | \lesssim \Vert y-x\Vert _s^2 \qquad \qquad \forall x,y \in \mathcal {H}^s. \end{aligned}$$(15)

Proof

See [2].
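For the quadratic functional of Remark 2 both estimates of Lemma 2 can be verified by hand, which may help to see where the spectral assumptions enter. Writing \(x_j=\langle x,\varphi _j\rangle \) and using \(C\varphi _j=\lambda _j^2\varphi _j\):

$$\begin{aligned} d(x) &= -\sum _{j \ge 1} \big (1 + \lambda _j^2\, j^{2s}\big )\, x_j\, \varphi _j , \\ \Vert d(x)-d(y)\Vert _s^2 &= \sum _{j \ge 1} j^{2s}\big (1+\lambda _j^2 j^{2s}\big )^2 (x_j-y_j)^2 \le \Big (1+\sup _{j \ge 1}\lambda _j^2 j^{2s}\Big )^2 \Vert x-y\Vert _s^2 , \\ {\varPsi }(y)-{\varPsi }(x)-\langle \nabla {\varPsi }(x),y-x\rangle &= \tfrac{1}{2}\Vert y-x\Vert _s^2 . \end{aligned}$$

Under Assumption A1, \(\lambda _j^2 j^{2s}\asymp j^{2s-2\kappa }\), which is bounded because \(s<\kappa -\frac{1}{2}<\kappa \); this gives (14), and (15) holds with constant \(\frac{1}{2}\).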

In order to provide a clean exposition, which highlights the central theoretical ideas, we have chosen to make global assumptions on \({\varPsi }\) and its derivatives. We believe that our limit theorems could be extended to localized versions of these assumptions, at the cost of considerable technical complications in the proofs, by means of stopping-time arguments. The numerical example presented in Sect. 6 corroborates this assertion. There are many applications which satisfy local versions of the assumptions given, including the Bayesian formulation of inverse problems [18] and conditioned diffusions [19].

3 Diffusion limit theorem

This section contains a precise statement of the algorithm, statement of the main theorem showing that the piecewise linear interpolant of the output of the algorithm converges weakly to a noisy gradient flow described by an SPDE, and proof of the main theorem. The proofs of various technical lemmas are deferred to Sect. 4.

3.1 pCN algorithm

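We now define the Markov chain in \(\mathcal {H}^s\) which is reversible with respect to the measure \(\pi ^{\tau }\) given by Eq. (3). Let \(x \in \mathcal {H}^s\) be the current position of the Markov chain. The proposal candidate \(y\) is given by (5), so that

$$\begin{aligned} y = (1-2\delta )^{\frac{1}{2}}\, x + \sqrt{2\delta \tau }\, \xi \qquad \text {where} \qquad \xi \overset{\mathcal {D}}{\sim }\mathrm N (0,C), \end{aligned}$$(16)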

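and \(\delta \in (0, \frac{1}{2})\) is a small parameter which we will send to zero in order to obtain the noisy gradient flow. In Eq. (16), the random variable \(\xi \) is chosen independent of \(x\). As described in [13] (see also [18, 20]), at temperature \(\tau \in (0,\infty )\) the Metropolis–Hastings acceptance probability for the proposal \(y\) is given by

$$\begin{aligned} \alpha ^{\delta }(x,\xi ) \overset{{\tiny {\text{ def }}}}{=}1 \wedge \exp \Big ( -\tau ^{-1}\big ( {\varPsi }(y) - {\varPsi }(x) \big ) \Big ). \end{aligned}$$(17)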

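For future use, we define the local mean acceptance probability at the current position \(x\) via the formula

$$\begin{aligned} \alpha ^{\delta }(x) \overset{{\tiny {\text{ def }}}}{=}\mathbb {E}_x\big [ \alpha ^{\delta }(x,\xi ) \big ]. \end{aligned}$$(18)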

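The chain is then reversible with respect to \(\pi ^{\tau }\). The Markov chain \(x^{\delta } = \{x^{k,\delta }\}_{k \ge 0}\) can be written as

$$\begin{aligned} x^{k+1,\delta } = \gamma ^{k,\delta }\, y^{k,\delta } + \big ( 1-\gamma ^{k,\delta } \big )\, x^{k,\delta } \qquad \text {with} \qquad y^{k,\delta } = (1-2\delta )^{\frac{1}{2}}\, x^{k,\delta } + \sqrt{2\delta \tau }\, \xi ^k . \end{aligned}$$(19)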

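In the above equation, the \(\xi ^k\) are i.i.d. Gaussian random variables \(\mathrm N (0,C)\) and the \(\gamma ^{k,\delta }\) are Bernoulli random variables which account for the accept–reject mechanism of the Metropolis–Hastings algorithm,

$$\begin{aligned} \gamma ^{k,\delta } \overset{{\tiny {\text{ def }}}}{=}\mathbf {1}_{\{ U^k < \alpha ^{\delta }(x^{k,\delta },\xi ^k) \}} \end{aligned}$$(20)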

for an i.i.d. sequence \(\{U^k\}_{k \ge 0}\) of random variables uniformly distributed on the interval \((0,1)\) and independent of all the other sources of randomness. The next lemma will be used repeatedly in the sequel. It states that the size of the jump \(y-x\) is of order \(\sqrt{\delta }\).

Lemma 3

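Under Assumptions 1 and for any integer \(p\ge 1\) the following inequality

$$\begin{aligned} \mathbb {E}_x\big [ \Vert y-x\Vert ^p_s \big ] \lesssim \delta ^{\frac{p}{2}}\, \big ( 1 + \Vert x\Vert ^p_s \big ) \end{aligned}$$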

holds for any \(\delta \in (0, \frac{1}{2})\).

Proof

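The definition of the proposal (16), together with the elementary bound \(0 < 1-(1-2\delta )^{\frac{1}{2}} \le 2\delta \), shows that \(\mathbb {E}_x\big [ \Vert y-x\Vert ^p_s \big ] \lesssim \delta ^p \; \Vert x\Vert ^p_s + \delta ^{\frac{p}{2}} \, \mathbb {E}\big [ \Vert \xi \Vert ^p_s \big ]\). Since \(\xi \overset{\mathcal {D}}{\sim }\mathrm N (0,C)\) is a Gaussian random variable in \(\mathcal {H}^s\) (Remark 1), Fernique’s theorem [17] shows that \(\Vert \xi \Vert _s\) has exponential moments and therefore \(\mathbb {E}\big [ \Vert \xi \Vert ^p_s \big ] < \infty \). As \(\delta ^p \le \delta ^{\frac{p}{2}}\) for \(\delta \in (0,\frac{1}{2})\), this gives the conclusion.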
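The estimate of Lemma 3 can be made explicit for \(p=2\). Since \(\xi \) is centred and independent of \(x\), and \(\mathbb {E}\Vert \xi \Vert _s^2=\mathrm {Trace}_{\mathcal {H}^s}(C_s)\),

\(\mathbb {E}_x\Vert y-x\Vert _s^2=\big (1-\sqrt{1-2\delta }\big )^2\Vert x\Vert _s^2+2\delta \tau \,\mathrm {Trace}_{\mathcal {H}^s}(C_s)\),

so \(\mathbb {E}_x\Vert y-x\Vert _s^2/\delta \rightarrow 2\tau \,\mathrm {Trace}_{\mathcal {H}^s}(C_s)\) as \(\delta \rightarrow 0\). The short sketch below evaluates this formula; the numerical values of \(\Vert x\Vert _s^2\) and of the trace are arbitrary placeholders.

```python
import math


def mean_sq_jump(x_norm_s_sq, trace_cs, tau, delta):
    """E_x ||y - x||_s^2 for the pCN proposal y = sqrt(1 - 2 delta) x + sqrt(2 delta tau) xi.

    The cross term vanishes because xi is centred, and E ||xi||_s^2 = Trace_{H^s}(C_s).
    """
    shrink = 1.0 - math.sqrt(1.0 - 2.0 * delta)   # satisfies 0 < shrink <= 2 delta
    return shrink ** 2 * x_norm_s_sq + 2.0 * delta * tau * trace_cs


if __name__ == "__main__":
    for delta in (1e-1, 1e-2, 1e-3, 1e-4):
        ratio = mean_sq_jump(4.0, 2.5, 1.0, delta) / delta
        print(f"delta={delta:g}  E||y-x||_s^2 / delta = {ratio:.6f}")  # tends to 2*tau*trace = 5
```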

3.2 Diffusion limit theorem

Fix a time horizon \(T > 0\) and a temperature \(\tau \in (0,\infty )\). The piecewise linear interpolant \(z^\delta \) of the Markov chain (19) is defined by Eq. (6). The following is the main result of this article. Note that “weakly” refers to weak convergence of probability measures.

Theorem 4

Let Assumption 1 hold. Let the Markov chain \(x^{\delta }\) start at a fixed position \(x_* \in \mathcal {H}^s\). Then the sequence of processes \(z^\delta \) converges weakly to \(z\) in \(C([0,T], \mathcal {H}^s)\), as \(\delta \rightarrow 0\), where \(z\) solves the \(\mathcal {H}^s\)-valued stochastic differential equation

$$\begin{aligned} \frac{dz}{dt} = -\Big ( z + C\nabla {\varPsi }(z) \Big ) + \sqrt{2\tau }\,\frac{dW}{dt}, \qquad z(0) = x_* , \end{aligned}$$

and \(W\) is a Brownian motion in \(\mathcal {H}^s\) with covariance operator equal to \(C_s\).
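The piecewise linear interpolant (6) that appears in Theorem 4 is simple to evaluate from stored chain states. The helper below is a hypothetical sketch in which states are lists of truncated coordinates (for instance the output of a pCN run); it locates \(k\) with \(t_k\le t\le t_{k+1}\) and mixes \(x^{k,\delta }\) and \(x^{k+1,\delta }\) linearly.

```python
def interpolant(chain, delta, t):
    """z^delta(t) = ((t - t_k) x^{k+1} + (t_{k+1} - t) x^k) / delta for t_k = k delta <= t <= t_{k+1}.

    chain -- list of states x^0, x^1, ..., each a list of coordinates (at least two states)
    """
    t_max = delta * (len(chain) - 1)
    if not 0.0 <= t <= t_max:
        raise ValueError("t lies outside [0, delta * (len(chain) - 1)]")
    k = min(int(t // delta), len(chain) - 2)   # the last segment also covers t = t_max
    t_k, t_k1 = k * delta, (k + 1) * delta
    return [((t - t_k) * b + (t_k1 - t) * a) / delta for a, b in zip(chain[k], chain[k + 1])]
```

At the grid times the interpolant returns the chain states themselves, and in between it moves along straight segments, so \(z^{\delta }\) is a continuous path in \(\mathcal {H}^s\).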

For conceptual clarity, we derive Theorem 4 as a consequence of the general diffusion approximation Lemma 6. Consider a separable Hilbert space \(\big ( \mathcal {H}^s, \langle \cdot , \cdot \rangle _s \big )\) and a sequence of \(\mathcal {H}^s\)-valued Markov chains \(x^\delta = \{x^{k,\delta }\}_{k \ge 0}\). The martingale-drift decomposition with time discretization \(\delta \) of the Markov chain \(x^{\delta }\) reads

$$\begin{aligned} x^{k+1,\delta } = x^{k,\delta } + d^{\delta }(x^{k,\delta })\, \delta + \sqrt{2\tau \delta }\; {\varGamma }^{\delta }(x^{k,\delta },\xi ^k), \end{aligned}$$(22)

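where the approximate drift \(d^{\delta }\) and volatility term \({\varGamma }^{\delta }(x,\xi ^k)\) are given by

$$\begin{aligned} d^{\delta }(x) \overset{{\tiny {\text{ def }}}}{=}\frac{1}{\delta }\, \mathbb {E}\big [ x^{k+1,\delta } - x^{k,\delta } \,|\, x^{k,\delta } = x \big ] \qquad \text {and} \qquad {\varGamma }^{\delta }(x^{k,\delta },\xi ^k) \overset{{\tiny {\text{ def }}}}{=}\frac{x^{k+1,\delta } - x^{k,\delta } - \delta \, d^{\delta }(x^{k,\delta })}{\sqrt{2\tau \delta }} . \end{aligned}$$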

In the definition of the approximate drift \(d^{\delta }\), the conditional expectation \(\mathbb {E}\big [ x^{k+1,\delta } - x^{k,\delta }\,|x^{k,\delta } \big ]\) equals \(\mathbb {E}_{x^{k,\delta }}\big [ \alpha ^{\delta }(x^{k,\delta }, \xi ^k)\, (y^{k, \delta } - x^{k,\delta }) \big ]\), where the expectation averages over both the noise term \(\xi ^k\) and the uniform variable \(U^k\), for a proposal \(y^{k,\delta }\) and noise term \(\xi ^k\) as defined in Eq. (19). Notice that \(\big \{ {\varGamma }^{k,\delta }\big \}_{k \ge 0}\), with \({\varGamma }^{k,\delta } \overset{{\tiny {\text{ def }}}}{=}{\varGamma }^{\delta }(x^{k, \delta }, \xi ^k)\), is a martingale difference array in the sense that \(M^{k,\delta } = \sum _{j=0}^k {\varGamma }^{j,\delta }\) is a martingale adapted to the natural filtration \(\mathcal {F}^{\delta } = \{\mathcal {F}^{k,\delta }\}_{k \ge 0}\) of the Markov chain \(x^{\delta }\). The parameter \(\delta \) represents a time increment. We define the piecewise linear rescaled noise process by

$$\begin{aligned} W^{\delta }(t) \overset{{\tiny {\text{ def }}}}{=}\sqrt{\delta }\, \sum _{j=0}^{k-1} {\varGamma }^{j,\delta } + \frac{t-t_k}{\sqrt{\delta }}\, {\varGamma }^{k,\delta } \qquad \text {for } t_k \le t < t_{k+1}. \end{aligned}$$(24)

We now show that, as \(\delta \rightarrow 0\), if the sequence of approximate drift functions \(d^{\delta }(\cdot )\) converges in the appropriate norm to a limiting drift \(d(\cdot )\) and the sequence of rescaled noise processes \(W^{\delta }\) converges to a Brownian motion, then the sequence of piecewise linear interpolants \(z^\delta \) defined by Eq. (6) converges weakly to a diffusion process in \(\mathcal {H}^s\). In order to state the general diffusion approximation Lemma 6, we introduce the following:

Conditions 5

There exists an integer \(p \ge 1\) such that the sequence of Markov chains \(x^\delta = \{x^{k,\delta }\}_{k \ge 0}\) satisfies

1. Convergence of the drift: there exists a globally Lipschitz function \(d:\mathcal {H}^s \rightarrow \mathcal {H}^s\) such that

$$\begin{aligned} \Vert d^\delta (x)-d(x)\Vert _s \lesssim \delta \cdot \big ( 1+\Vert x\Vert ^{p}_s \big ) \end{aligned}$$(25)

2. Invariance principle: as \(\delta \) tends to zero the sequence of processes \(\{W^{\delta }\}_{\delta \in (0,\frac{1}{2})}\) defined by Eq. (24) converges weakly in \(C([0,T],\mathcal {H}^s)\) to a Brownian motion \(W\) in \(\mathcal {H}^s\) with covariance operator \(C_s\).

3. A priori bound: the following bound holds

$$\begin{aligned} \sup _{\delta \in \left( 0,\frac{1}{2}\right) } \Big \{ \delta \cdot \mathbb {E}\Big [ \sum _{k \delta \le T} \Vert x^{k,\delta }\Vert ^p_s \Big ] \Big \} < \infty . \end{aligned}$$(26)
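The drift condition can be probed in a one-dimensional toy setting. The sketch below is an illustration under stated assumptions, not part of the argument: it takes a single mode with \(C=c\) and the hypothetical potential \({\varPsi }(x)=\log \cosh x\) (nonnegative, with \(|{\varPsi }'|\le 1\) and \(|{\varPsi }''|\le 1\)). It computes \(d^{\delta }(x)=\delta ^{-1}\mathbb {E}_x[\alpha ^{\delta }(x,\xi )(y-x)]\) by trapezoidal quadrature over the Gaussian noise and compares it with \(d(x)=-(x+c\,{\varPsi }'(x))\). It only checks that the gap shrinks as \(\delta \) decreases; it does not try to estimate a rate.

```python
import math


def psi(x):
    """Hypothetical potential Psi(x) = log cosh(x)."""
    return math.log(math.cosh(x))


def d_limit(x, c):
    """Limiting drift d(x) = -(x + C Psi'(x)) with C = c."""
    return -(x + c * math.tanh(x))


def d_delta(x, c, tau, delta, half_width=12.0, n=24001):
    """Approximate drift d^delta(x) = E_x[alpha(x, xi) (y - x)] / delta.

    y = sqrt(1 - 2 delta) x + sqrt(2 delta tau) xi with xi = sqrt(c) rho, rho ~ N(0, 1);
    the expectation over rho uses the trapezoidal rule on [-half_width, half_width].
    """
    a = math.sqrt(1.0 - 2.0 * delta)
    sigma = math.sqrt(2.0 * delta * tau * c)
    h = 2.0 * half_width / (n - 1)
    psi_x, total = psi(x), 0.0
    for i in range(n):
        rho = -half_width + i * h
        y = a * x + sigma * rho
        log_alpha = -(psi(y) - psi_x) / tau
        alpha = 1.0 if log_alpha >= 0.0 else math.exp(log_alpha)
        weight = 0.5 if i in (0, n - 1) else 1.0
        density = math.exp(-0.5 * rho * rho) / math.sqrt(2.0 * math.pi)
        total += weight * alpha * (y - x) * density
    return total * h / delta
```

Quadrature is used instead of Monte Carlo because the integrand carries a factor of order \(\delta ^{-1/2}\), so sampling noise would swamp the small differences being compared.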

Remark 3

The a-priori bound (26) can equivalently be stated as

We now prove that Conditions 5 are sufficient to obtain a diffusion approximation for the sequence of rescaled processes \(z^{\delta }\) defined by Eq. (6), as \(\delta \) tends to zero. In contrast to more classical diffusion approximation results for Markov processes [21, 22], which are based on infinitesimal generators, the next lemma exploits specific structure which arises when the limiting process has additive noise; in particular, it is based on the preservation of weak convergence under continuous mappings, together with an explicit construction of the noise process. This idea has previously appeared in the literature in, for example, the articles [2, 3] in the context of MCMC and the article [23], and the references therein, in the context of the derivation of SDEs from ODEs with random data.
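The continuity of the Itô map used in the proof below can be illustrated with an explicit Euler discretization of (28). In the sketch, the one-dimensional drift \(d(z)=-(z+c\tanh z)\) is a hypothetical stand-in, globally Lipschitz with constant \(L=1+c\). For two driving paths with \(W(0)=0\) that differ by at most \(\eta \) in sup norm, a discrete Gronwall argument bounds the sup-norm distance between the two outputs by \(\sqrt{2\tau }\,\eta \,e^{LT}\). This is the mechanism that lets weak convergence of the noise pass to the interpolants through a continuous mapping.

```python
import math
import random


def ito_map(w, x_star, drift, tau, h):
    """Explicit Euler discretization of z(t) = x_* + int_0^t d(z(u)) du + sqrt(2 tau) W(t).

    w -- values W(t_k) of the driving path on the grid t_k = k h, with w[0] = 0
    Returns the values z(t_k).
    """
    z = [x_star]
    for k in range(len(w) - 1):
        z.append(z[-1] + h * drift(z[-1]) + math.sqrt(2.0 * tau) * (w[k + 1] - w[k]))
    return z


if __name__ == "__main__":
    rng = random.Random(1)
    h, n_steps, tau, c, eta = 0.01, 100, 1.0, 0.5, 1e-3
    drift = lambda z: -(z + c * math.tanh(z))   # hypothetical drift, Lipschitz constant 1 + c
    w1 = [0.0]
    for _ in range(n_steps):
        w1.append(w1[-1] + math.sqrt(h) * rng.gauss(0.0, 1.0))
    w2 = [wk + eta * math.sin(3.0 * k) for k, wk in enumerate(w1)]   # sup |w1 - w2| <= eta
    z1 = ito_map(w1, 0.2, drift, tau, h)
    z2 = ito_map(w2, 0.2, drift, tau, h)
    gap = max(abs(a - b) for a, b in zip(z1, z2))
    bound = math.sqrt(2.0 * tau) * eta * math.exp((1.0 + c) * h * n_steps)
    print(f"sup-norm gap {gap:.2e} <= Gronwall bound {bound:.2e}")
```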

Lemma 6

(General diffusion approximation for Markov chains) Consider a separable Hilbert space \(\big ( \mathcal {H}^s, \langle \cdot , \cdot \rangle _s \big )\) and a sequence of \(\mathcal {H}^s\)-valued Markov chains \(x^\delta = \{x^{k,\delta }\}_{k \ge 0}\) starting at a fixed position in the sense that \(x^{0,\delta } = x_*\) for all \(\delta \in (0,\frac{1}{2})\). Suppose that the drift-martingale decompositions (22) of \(x^{\delta }\) satisfy Conditions 5. Then the sequence of rescaled interpolants \(z^\delta \in C([0,T],\mathcal {H}^s)\) defined by Eq. (6) converges weakly in \(C([0,T],\mathcal {H}^s)\) to \(z \in C([0,T],\mathcal {H}^s)\) given by the stochastic differential equation

$$\begin{aligned} \frac{dz}{dt} = d(z) + \sqrt{2\tau }\, \frac{dW}{dt} \end{aligned}$$(27)

with initial condition \(z_0 = x_*\) and where \(W\) is a Brownian motion in \(\mathcal {H}^s\) with covariance \(C_s\).

Proof

For the sake of clarity, the proof of Lemma 6 is divided into several steps.

-

Integral equation representation

Solutions of the \(\mathcal {H}^s\)-valued SDE (27) are precisely the solutions of the following integral equation,

$$\begin{aligned} z(t) = x_* + \int _0^t \, d(z(u)) \, du + \sqrt{2 \tau } W(t) \qquad \forall t \in [0,T], \end{aligned}$$(28)

where \(W\) is a Brownian motion in \(\mathcal {H}^s\) with covariance operator equal to \(C_s\). We thus introduce the Itô map \({\varTheta }: C([0,T],\mathcal {H}^s) \rightarrow C([0,T],\mathcal {H}^s)\) that sends a function \(W \in C([0,T],\mathcal {H}^s)\) to the unique solution of the integral Eq. (28); solutions of (27) can therefore be represented as \({\varTheta }(W)\), where \(W\) is an \(\mathcal {H}^s\)-valued Brownian motion with covariance \(C_s\). As shown below, the map \({\varTheta }\) is continuous when \(C([0,T],\mathcal {H}^s)\) is equipped with the uniform norm \(\Vert w\Vert _{C([0,T],\mathcal {H}^s)} \overset{{\tiny {\text{ def }}}}{=}\sup \{ \Vert w(t)\Vert _{s} : t \in [0,T]\}\). Crucially, the rescaled process \(z^{\delta }\) defined in Eq. (6) satisfies \(z^{\delta } = {\varTheta }(\widehat{W}^{\delta })\) with

$$\begin{aligned} \widehat{W}^{\delta }(t)\!:= W^{\delta }(t) + \frac{1}{\sqrt{2\tau }} \int _0^t [ d^{\delta }(\bar{z}^{\delta }(u))- d(z^{\delta }(u)) ]\,du. \end{aligned}$$(29)

In Eq. (29), the quantity \(d^{\delta }\) is the approximate drift defined in Eq. (23) and \(\bar{z}^{\delta }\) is the rescaled piecewise constant interpolant of \(\{x^{k,\delta }\}_{k \ge 0}\) defined as

$$\begin{aligned} \bar{z}^{\delta }(t) = x^{k,\delta } \qquad \text {for} \qquad t_k \le t < t_{k+1}. \end{aligned}$$(30)

Substituting (29) into (28) shows that the identity \(z^{\delta } = {\varTheta }(\widehat{W}^{\delta })\) is a rewriting of \(z^{\delta }(t) = x_* + \int _0^t d^{\delta }(\bar{z}^{\delta }(u))\,du + \sqrt{2\tau }\, W^{\delta }(t)\). The proof follows from a continuous mapping argument (see below) once it is proven that \(\widehat{W}^{\delta }\) converges weakly in \(C([0,T],\mathcal {H}^s)\) to \(W\).

-

The Itô map \({\varTheta }\) is continuous

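The map \({\varTheta }\) is well defined and continuous as a mapping from \(\Big (C([0,T],\mathcal {H}^s), \Vert \cdot \Vert _{C([0,T],\mathcal {H}^s)} \Big )\) to itself. Existence and uniqueness of solutions of (28) follow from the usual Picard iteration proof of the Cauchy–Lipschitz theorem for ODEs: see [2]. For continuity, let \(L\) be the Lipschitz constant of \(d\) and let \(z_i = {\varTheta }(W_i)\) for \(i=1,2\). Subtracting the two integral equations gives \(\Vert z_1(t)-z_2(t)\Vert _s \le L \int _0^t \Vert z_1(u)-z_2(u)\Vert _s \, du + \sqrt{2\tau }\, \Vert W_1-W_2\Vert _{C([0,T],\mathcal {H}^s)}\), so that Gronwall's lemma yields \(\Vert {\varTheta }(W_1)-{\varTheta }(W_2)\Vert _{C([0,T],\mathcal {H}^s)} \le \sqrt{2\tau }\, e^{LT}\, \Vert W_1-W_2\Vert _{C([0,T],\mathcal {H}^s)}\).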

-

The sequence of processes \(\widehat{W}^{\delta }\) converges weakly to \(W\)

The process \(\widehat{W}^{\delta }\) is defined by Eq. (29), and Conditions 5 state that \(W^{\delta }\) converges weakly to \(W\) in \(C([0,T], \mathcal {H}^s)\). Consequently, to prove that \(\widehat{W}^{\delta }\) converges weakly to \(W\) in \(C([0,T], \mathcal {H}^s)\), it suffices (by Slutsky's lemma) to verify that the sequence of processes

$$\begin{aligned} (\omega ,t) \mapsto \int _0^t \big [ d^{\delta }(\bar{z}^{\delta }(u))- d(z^{\delta }(u)) \big ] \,du \end{aligned}$$(31)

converges to zero in probability with respect to the supremum norm in \(C([0,T],\mathcal {H}^s)\). Since the supremum over \(t \in [0,T]\) of the \(\Vert \cdot \Vert _s\)-norm of (31) is bounded by \(\int _0^T \Vert d^{\delta }(\bar{z}^{\delta }(u))- d(z^{\delta }(u)) \Vert _s \, du\), Markov's inequality shows that it is enough to check that \(\mathbb {E}\big [ \int _0^T \!\Vert d^{\delta }(\bar{z}^{\delta }(u))- d(z^{\delta }(u)) \Vert _s \; du \!\big ]\) converges to zero as \(\delta \) goes to zero. Conditions 5 state that there exists an integer \(p \ge 1\) such that \(\Vert d^{\delta }(x)-d(x)\Vert _s \lesssim \delta \cdot (1+\Vert x\Vert _s^{p})\), so that for any \(t_k \le u < t_{k+1}\) we have

$$\begin{aligned} \Big \Vert d^{\delta }(\bar{z}^{\delta }(u))- d(\bar{z}^{\delta }(u)) \Big \Vert _s \lesssim \delta \big ( 1+\Vert \bar{z}^{\delta }(u)\Vert _s^{p} \big ) = \delta \big ( 1+\Vert x^{k,\delta }\Vert _s^{p} \big ). \end{aligned}$$(32)

Conditions 5 also state that \(d(\cdot )\) is globally Lipschitz on \(\mathcal {H}^s\). Since \(z^{\delta }(u)\) lies on the segment between \(x^{k,\delta }\) and \(x^{k+1,\delta }\), we have \(\Vert z^{\delta }(u) - \bar{z}^{\delta }(u)\Vert _s \le \Vert x^{k+1,\delta }-x^{k,\delta }\Vert _s\), and Lemma 3 shows that

$$\begin{aligned} \mathbb {E}\big [\Vert d(\bar{z}^{\delta }(u))- d(z^{\delta }(u))\Vert _s \,\big |\, x^{k,\delta }\big ] \lesssim \mathbb {E}\big [\Vert x^{k+1,\delta }-x^{k,\delta }\Vert _s \,\big |\, x^{k,\delta }\big ] \lesssim \delta ^{\frac{1}{2}} (1+\Vert x^{k,\delta }\Vert _s). \end{aligned}$$(33)

From estimates (32) and (33), and since \(\delta \le \delta ^{\frac{1}{2}}\) and \(1+\Vert x\Vert _s \lesssim 1+\Vert x\Vert _s^p\), it follows that \(\mathbb {E}\big [\Vert d^{\delta }(\bar{z}^{\delta }(u))- d(z^{\delta }(u)) \Vert _s \,\big |\, x^{k,\delta }\big ] \lesssim \delta ^{\frac{1}{2}} (1+\Vert x^{k,\delta }\Vert ^{p}_s)\). Consequently

$$\begin{aligned} \mathbb {E}\Big [ \int _0^T \Vert d^{\delta }(\bar{z}^{\delta }(u))- d(z^{\delta }(u)) \Vert _s \; du \Big ] \lesssim \delta ^{\frac{3}{2}} \sum _{k \delta < T} \mathbb {E}\Big [ 1+\Vert x^{k,\delta }\Vert ^{p}_s \Big ]. \end{aligned}$$(34)

The right-hand side of (34) equals \(\delta ^{\frac{1}{2}} \cdot \delta \sum _{k \delta < T} \mathbb {E}\big [ 1+\Vert x^{k,\delta }\Vert ^{p}_s \big ]\), and the a priori bound of Conditions 5 shows that the second factor is bounded uniformly in \(\delta \in (0,\frac{1}{2})\). The right-hand side of (34) therefore converges to zero as \(\delta \) converges to zero, which proves that the processes (31) converge to zero in probability. This concludes the proof of \(\widehat{W}^{\delta } \Longrightarrow W\).

-

Continuous mapping argument

It has been proved that \({\varTheta }\) is continuous as a mapping from \(\Big (C([0,T],\mathcal {H}^s), \Vert \cdot \Vert _{C([0,T],\mathcal {H}^s)} \Big )\) to itself. The solutions of the \(\mathcal {H}^s\)-valued SDE (27) can be expressed as \({\varTheta }(W)\), while the rescaled continuous interpolant \(z^{\delta }\) reads \(z^{\delta } = {\varTheta }(\widehat{W}^{\delta })\). Since \(\widehat{W}^{\delta }\) converges weakly in \(C([0,T],\mathcal {H}^s)\) to \(W\) as \(\delta \) tends to zero, the continuous mapping theorem ensures that \(z^{\delta }\) converges weakly in \(C([0,T],\mathcal {H}^s)\) to the solution \({\varTheta }(W)\) of the \(\mathcal {H}^s\)-valued SDE (27). This ends the proof of Lemma 6.
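The continuity of the Itô map \({\varTheta }\) can be illustrated numerically. The following is a minimal finite-dimensional sketch, assuming that \(\mathcal {H}^s\) is replaced by \(\mathbb {R}^n\) with the Euclidean norm, that the integral equation (28) is discretized by an explicit Euler scheme, and that the drift is the hypothetical globally Lipschitz function \(d(x) = -x - \sin (x)\) (componentwise, Lipschitz constant \(L = 2\)); the names `ito_map` and `drift` are illustrative only. For the Euler scheme, a discrete Gronwall argument gives exactly the bound \(\sqrt{2\tau }\, e^{LT} \sup _k \Vert W_1(t_k) - W_2(t_k)\Vert \) whenever \(W_1(0) = W_2(0)\), and the script checks it on two nearby driving paths.

```python
import numpy as np


def ito_map(w, x0, drift, tau, T):
    """Explicit Euler approximation of Theta(W): z(t) = x0 + int_0^t d(z) du + sqrt(2 tau) W(t).

    w holds the driving path on a uniform grid of [0, T], shape (K + 1, n), with w[0] = 0.
    Returns the approximate solution z on the same grid.
    """
    K = w.shape[0] - 1
    h = T / K
    z = np.empty_like(w, dtype=float)
    z[0] = x0
    for k in range(K):
        # drift step plus the increment of the driving path
        z[k + 1] = z[k] + h * drift(z[k]) + np.sqrt(2.0 * tau) * (w[k + 1] - w[k])
    return z


def drift(x):
    """Hypothetical globally Lipschitz drift d(x) = -x - sin(x), Lipschitz constant 2."""
    return -x - np.sin(x)


rng = np.random.default_rng(0)
T, K, n, tau, L = 1.0, 1000, 5, 0.5, 2.0
h = T / K
x0 = np.ones(n)
# a Brownian-like path and a smooth perturbation of it (both start at 0)
w1 = np.vstack([np.zeros(n), np.cumsum(np.sqrt(h) * rng.standard_normal((K, n)), axis=0)])
w2 = w1 + 0.01 * np.sin(np.linspace(0.0, T, K + 1))[:, None]

z1 = ito_map(w1, x0, drift, tau, T)
z2 = ito_map(w2, x0, drift, tau, T)
lhs = np.max(np.linalg.norm(z1 - z2, axis=1))
rhs = np.sqrt(2.0 * tau) * np.exp(L * T) * np.max(np.linalg.norm(w1 - w2, axis=1))
print(lhs, rhs, lhs <= rhs)
```

The same estimate, with \(\sup _k\) replaced by the uniform norm, is what makes the continuous mapping argument work in the infinite-dimensional setting.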

In order to establish Theorem 4 as a consequence of the general diffusion approximation Lemma 6, it suffices to verify that, if Assumptions 1 hold, then Conditions 5 are satisfied by the Markov chain \(x^{\delta }\) defined in Sect. 3.1. In Sect. 4.2 we prove the following quantitative version of the approximation of the function \(d^{\delta }(\cdot )\) by the function \(d(\cdot )\), where \(d(x) = -\Big ( x + C \nabla {\varPsi }(x)\Big )\).

Lemma 7

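(Drift estimate) Let Assumptions 1 hold and let \(p \ge 1\) be an integer. Then the following estimate is satisfied,

$$\begin{aligned} \Vert d^{\delta }(x)-d(x)\Vert _s^p \lesssim \delta ^{\frac{p}{2}} \big (1+\Vert x\Vert _s^{2p}\big ). \end{aligned}$$(35)

Moreover, the approximate drift \(d^{\delta }\) is linearly bounded in the sense that

$$\begin{aligned} \Vert d^{\delta }(x)\Vert _s \lesssim 1+\Vert x\Vert _s. \end{aligned}$$(36)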

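Lemma 7, applied with \(p=1\), shows that \(\Vert d^{\delta }(x)-d(x)\Vert _s \lesssim \delta ^{\frac{1}{2}} (1+\Vert x\Vert _s^{2})\) as soon as Assumptions 1 hold. This is Eq. (25) of Conditions 5 with \(p = 2\), except that the rate \(\delta \) is replaced by \(\delta ^{\frac{1}{2}}\). This weaker rate is sufficient for the proof of Lemma 6: with \(\delta ^{\frac{1}{2}}\) in place of \(\delta \) in Eq. (32), the bound that follows Eq. (33), and hence Eq. (34), are unchanged. The invariance principle of Conditions 5 follows from the next lemma, which is proved in Sect. 4.5.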

Lemma 8

(Invariance principle) Let Assumptions 1 hold. Then the rescaled noise process \(W^{\delta }(t)\) defined in Eq. (24) satisfies

$$\begin{aligned} W^{\delta } \Longrightarrow W \qquad \text {as} \quad \delta \rightarrow 0, \end{aligned}$$

where \(\Longrightarrow \) denotes weak convergence in \(C([0,T],\mathcal {H}^s)\), and \(W\) is an \(\mathcal {H}^s\)-valued Brownian motion with covariance operator \(C_s\).

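In Sect. 4.4 it is proved that the following a priori bound is satisfied.

Lemma 9

(A priori bound) Consider a fixed time horizon \(T > 0\) and an integer \(p \ge 1\). Under Assumptions 1 the following bound holds,

$$\begin{aligned} \sup _{\delta \in \left( 0,\frac{1}{2}\right) } \Big \{ \delta \cdot \mathbb {E}\Big [ \sum _{k \delta \le T} \Vert x^{k,\delta }\Vert ^p_s \Big ] \Big \} < \infty . \end{aligned}$$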

In conclusion, Lemmas 7, 8 and 9 together show that Conditions 5 are consequences of Assumptions 1. Therefore, under Assumptions 1, the general diffusion approximation Lemma 6 can be applied: this concludes the proof of Theorem 4.

4 Key estimates

This section assembles various results which are used in the previous section. Some of the technical proofs are deferred to the appendix.

4.1 Acceptance probability asymptotics

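This section describes a first-order expansion of the acceptance probability. The approximation

$$\begin{aligned} \alpha ^{\delta }(x,\xi ) \approx \bar{\alpha }^{\delta }(x,\xi ) \overset{{\tiny {\text{ def }}}}{=} 1 - \sqrt{\frac{2\delta }{\tau }}\; \langle \nabla {\varPsi }(x), \xi \rangle \; {1\!\!1}_{\{\langle \nabla {\varPsi }(x), \xi \rangle > 0\}} \end{aligned}$$(38)

is valid for \(\delta \ll 1\). The quantity \(\bar{\alpha }^{\delta }\) has the advantage over \(\alpha ^{\delta }\) of being very simple to analyse: explicit computations are available, and these are exploited in Sect. 4.2. The quality of the approximation (38) is rigorously quantified in the next lemma.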

Lemma 10

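(Acceptance probability estimate) Let Assumptions 1 hold. For any integer \(p \ge 1\) the quantity \(\bar{\alpha }^{\delta }(x,\xi )\) satisfies

$$\begin{aligned} \mathbb {E}_x\Big [ \big | \alpha ^{\delta }(x,\xi ) - \bar{\alpha }^{\delta }(x,\xi ) \big |^p \Big ] \lesssim \delta ^{p} \big (1+\Vert x\Vert _s^{2p}\big ). \end{aligned}$$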

Proof

See Appendix 1

Recall the local mean acceptance probability \(\alpha ^{\delta }(x)\) defined in Eq. (18). Define the approximate local mean acceptance probability by \(\bar{\alpha }^{\delta }(x) \overset{{\tiny {\text{ def }}}}{=}\mathbb {E}_x[ \bar{\alpha }^{\delta }(x,\xi )]\). Lemma 10 can be used to approximate the local mean acceptance probability \(\alpha ^{\delta }(x)\).

Corollary 1

Let Assumptions 1 hold. For any integer \(p \ge 1\) the following estimates hold,

Proof

See Appendix 1

4.2 Drift estimates

Explicit computations are available for the quantity \(\bar{\alpha }^{\delta }\). We will use these results, together with quantification of the error committed in replacing \(\alpha ^{\delta }\) by \(\bar{\alpha }^{\delta }\), to estimate the mean drift (in this section) and the diffusion term (in the next section).

Lemma 11

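For any \(x \in \mathcal {H}^s\) the approximate acceptance probability \(\bar{\alpha }^{\delta }(x,\xi )\) satisfies

$$\begin{aligned} \sqrt{\frac{2\tau }{\delta }} \; \mathbb {E}_x \Big [ \bar{\alpha }^{\delta }(x,\xi ) \cdot \xi \Big ] = -C \nabla {\varPsi }(x). \end{aligned}$$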

Proof

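Let \(u = \sqrt{\frac{2\tau }{\delta }} \; \mathbb {E}_x \Big [ \bar{\alpha }^{\delta }(x,\xi ) \cdot \xi \Big ] \in \mathcal {H}^s\). To prove the lemma it suffices to verify that for all \(v \in \mathcal {H}^{-s}\) we have \( \langle u,v \rangle = -\langle C \nabla {\varPsi }(x), v \rangle \). To this end, use the decomposition \(v = \alpha \nabla {\varPsi }(x) + w\) where \(\alpha \in \mathbb {R}\) and \(w \in \mathcal {H}^{-s}\) satisfies \(\langle C \nabla {\varPsi }(x), w \rangle = 0\). Since \(\xi \overset{\mathcal {D}}{\sim }\mathrm N (0,C)\), the two Gaussian random variables \(Z_{{\varPsi }} \overset{{\tiny {\text{ def }}}}{=}\langle \nabla {\varPsi }(x), \xi \rangle \) and \(Z_{w} \overset{{\tiny {\text{ def }}}}{=}\langle w, \xi \rangle \) are independent: indeed, \((Z_{{\varPsi }}, Z_{w})\) is a Gaussian vector in \(\mathbb {R}^2\) with \(\text {Cov}(Z_{{\varPsi }}, Z_{w}) = \langle C \nabla {\varPsi }(x), w \rangle = 0\). Recall from (38) that \(\bar{\alpha }^{\delta }(x,\xi ) = 1 - \sqrt{2\delta /\tau }\, (Z_{{\varPsi }})_+\), where \((z)_+ = \max (z,0)\). Using \(\mathbb {E}[Z_{{\varPsi }}] = \mathbb {E}[Z_w] = 0\), the independence of \(Z_{{\varPsi }}\) and \(Z_w\), and the symmetry identity \(\mathbb {E}[(Z_{{\varPsi }})_+ Z_{{\varPsi }}] = \frac{1}{2}\mathbb {E}[Z_{{\varPsi }}^2]\), it thus follows that

$$\begin{aligned} \langle u,v \rangle&= \sqrt{\frac{2\tau }{\delta }}\; \mathbb {E}_x\Big [ \bar{\alpha }^{\delta }(x,\xi )\, \big (\alpha Z_{{\varPsi }} + Z_w\big ) \Big ] = -2\, \mathbb {E}_x\Big [ (Z_{{\varPsi }})_+ \big (\alpha Z_{{\varPsi }} + Z_w\big ) \Big ] \\&= -2\alpha \, \mathbb {E}_x\big [ (Z_{{\varPsi }})_+ Z_{{\varPsi }} \big ] = -\alpha \, \langle \nabla {\varPsi }(x), C \nabla {\varPsi }(x) \rangle = -\langle C \nabla {\varPsi }(x), v \rangle , \end{aligned}$$

which concludes the proof of Lemma 11.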
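Lemma 11 can be checked by Monte Carlo in finite dimensions. The sketch below assumes \(\mathcal {H}^s = \mathbb {R}^4\), a hypothetical covariance matrix \(C\), a hypothetical value \(g\) of \(\nabla {\varPsi }(x)\), and the first-order acceptance probability \(\bar{\alpha }^{\delta }(x,\xi ) = 1 - \sqrt{2\delta /\tau }\, \max (\langle g,\xi \rangle , 0)\); the function name `lemma11_estimate` is illustrative. Antithetic pairs \(\pm \xi \) are used so that the mean-zero but large contribution of the constant term \(1\) cancels, which keeps the Monte Carlo error small despite the factor \(\sqrt{2\tau /\delta }\).

```python
import numpy as np


def lemma11_estimate(C, g, tau, delta, m, seed=0):
    """Monte Carlo estimate of sqrt(2 tau / delta) * E[alpha_bar(x, xi) xi] with xi ~ N(0, C).

    Assumes alpha_bar(x, xi) = 1 - sqrt(2 delta / tau) * max(<g, xi>, 0), where g = grad Psi(x).
    """
    rng = np.random.default_rng(seed)
    chol = np.linalg.cholesky(C)
    xi = rng.standard_normal((m, len(g))) @ chol.T      # each row is a sample of N(0, C)
    xi = np.vstack([xi, -xi])                           # antithetic pairs: sample mean of xi vanishes
    z = xi @ g                                          # Z_Psi = <grad Psi(x), xi>
    alpha_bar = 1.0 - np.sqrt(2.0 * delta / tau) * np.maximum(z, 0.0)
    return np.sqrt(2.0 * tau / delta) * (alpha_bar[:, None] * xi).mean(axis=0)


# Hypothetical covariance and gradient, chosen only for illustration.
C = np.array([[1.0, 0.3, 0.0, 0.0],
              [0.3, 0.5, 0.0, 0.0],
              [0.0, 0.0, 0.25, 0.0],
              [0.0, 0.0, 0.0, 0.125]])
g = np.array([1.0, -2.0, 0.5, 3.0])

est = lemma11_estimate(C, g, tau=0.7, delta=1e-3, m=200_000)
print(est)       # Monte Carlo estimate
print(-C @ g)    # value predicted by Lemma 11: -C grad Psi(x)
```

The factor \(\frac{1}{2}\) coming from \(\mathbb {E}[(Z_{{\varPsi }})_+ Z_{{\varPsi }}] = \frac{1}{2}\mathbb {E}[Z_{{\varPsi }}^2]\) exactly compensates the \(\sqrt{2}\) factors, so the estimate should agree with \(-Cg\) up to sampling error.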

We now use this explicit computation to give a proof of the drift estimate Lemma 7.

Proof

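(Proof of Lemma 7) The function \(d^{\delta }\) defined by Eq. (23) can also be expressed as

$$\begin{aligned} d^{\delta }(x) = B_1 + B_2, \qquad B_1 = \frac{(1-2\delta )^{\frac{1}{2}} - 1}{\delta }\, \alpha ^{\delta }(x)\, x, \qquad B_2 = \sqrt{\frac{2\tau }{\delta }}\, \mathbb {E}_x\big [ \alpha ^{\delta }(x,\xi )\, \xi \big ], \end{aligned}$$(42)

where the mean local acceptance probability \(\alpha ^{\delta }(x)\) has been defined in Eq. (18), and the two terms \(B_1\) and \(B_2\) are studied below. Since \(d(x) = -\big (x + C\nabla {\varPsi }(x)\big )\), we have \(d^{\delta }(x) - d(x) = (B_1 + x) + (B_2 + C\nabla {\varPsi }(x))\), so to prove Eq. (35) it suffices to establish that

$$\begin{aligned} \Vert B_1 + x\Vert _s^p \lesssim \delta ^{\frac{p}{2}} \big (1+\Vert x\Vert _s^{2p}\big ) \qquad \text {and} \qquad \Vert B_2 + C\nabla {\varPsi }(x)\Vert _s^p \lesssim \delta ^{\frac{p}{2}} \big (1+\Vert x\Vert _s^{2p}\big ). \end{aligned}$$(43)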

We now establish these two bounds.

-

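Lemma 10 and Corollary 1 show that

$$\begin{aligned} \Vert B_1 + x \Vert _s^p&= \Big | \frac{(1-2\delta )^{\frac{1}{2}} - 1}{\delta }\alpha ^{\delta }(x) + 1 \Big |^p \Vert x\Vert ^p_s \\&\lesssim \Big \{ \big | \frac{(1-2\delta )^{\frac{1}{2}} - 1}{\delta } + 1 \big |^p + \big | \alpha ^{\delta }(x) - 1 \big |^p \Big \} \Vert x\Vert ^p_s \\&\lesssim \Big \{\delta ^p + \delta ^\frac{p}{2} (1+\Vert x\Vert _s^p) \Big \} \Vert x\Vert ^p_s \lesssim \delta ^\frac{p}{2} (1+\Vert x\Vert _s^{2p}). \end{aligned}$$(44)

The second line follows by writing \(a\,\alpha ^{\delta }(x) + 1 = (a+1)\,\alpha ^{\delta }(x) + (1-\alpha ^{\delta }(x))\) with \(a = \frac{(1-2\delta )^{1/2}-1}{\delta }\) and using \(0 \le \alpha ^{\delta }(x) \le 1\); the third line uses \(|a + 1| \le 2\delta \) for \(\delta \in (0,\frac{1}{2})\), which holds because \((1-2\delta )(1+\delta )^2 \le 1\).

-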

By the triangle inequality,

$$\begin{aligned} \Vert B_2 + C\nabla {\varPsi }(x)\Vert _s^p&= \big \Vert \sqrt{\frac{2\tau }{\delta }} \, \mathbb {E}_x[\alpha ^{\delta }(x,\xi ) \, \xi ] + C\nabla {\varPsi }(x) \big \Vert _s^p \\&\lesssim \delta ^{-\frac{p}{2}} \big \Vert \mathbb {E}_x[\{\alpha ^{\delta }(x,\xi ) - \bar{\alpha }^{\delta }(x,\xi ) \} \, \xi ] \big \Vert _s^p \\&\quad +\, \big \Vert \underbrace{ \sqrt{\frac{2\tau }{\delta }} \, \mathbb {E}_x[\bar{\alpha }^{\delta }(x,\xi ) \, \xi ] + C\nabla {\varPsi }(x)}_{=0} \big \Vert _s^p. \end{aligned}$$(45)

By Lemma 11, the second term on the right-hand side is zero. Consequently, the Cauchy–Schwarz inequality (together with \(\mathbb {E}_x\Vert \xi \Vert _s^2 < \infty \)) and Lemma 10 imply that

$$\begin{aligned} \Vert B_2 + C\nabla {\varPsi }(x)\Vert _s^p&\lesssim \delta ^{-\frac{p}{2}} \mathbb {E}_x[ \big |\alpha ^{\delta }(x,\xi ) - \bar{\alpha }^{\delta }(x,\xi )\big |^2]^{\frac{p}{2}}\\&\lesssim \delta ^{-\frac{p}{2}} \Big ( \delta ^2 (1+\Vert x\Vert _s^{4})\Big )\!^{\frac{p}{2}} \lesssim \delta ^{\frac{p}{2}} (1+\Vert x\Vert _s^{2p}). \end{aligned}$$

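Estimates (44) and (45) give Eq. (43). To complete the proof we establish the bound (36). Since \(|(1-2\delta )^{\frac{1}{2}} - 1| \le 2\delta \) and \(0 \le \alpha ^{\delta }(x) \le 1\), we have \(\Vert B_1\Vert _s \le 2\Vert x\Vert _s\); the expression (42) then shows that it suffices to verify \(\delta ^{-\frac{1}{2}} \, \Vert \mathbb {E}_x[\alpha ^{\delta }(x,\xi ) \, \xi ]\Vert _s \lesssim 1+\Vert x\Vert _s\). To this end, we use Lemma 11 and Corollary 1. By the Cauchy–Schwarz inequality,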

which concludes the proof of Lemma 7.
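The \(\delta ^p\) term in (44) comes from comparing the coefficient \(a(\delta ) = ((1-2\delta )^{1/2}-1)/\delta \) of \(\alpha ^{\delta }(x)\,x\) in \(B_1\) with its limit \(-1\). Elementary algebra gives \(0 \le -(a(\delta )+1) \le 2\delta \) on \((0,\frac{1}{2}]\): the lower bound because \((1-\delta )^2 \ge 1-2\delta \), the upper bound because \((1-2\delta )(1+\delta )^2 = 1 - 3\delta ^2 - 2\delta ^3 \le 1\). Moreover \(a(\delta ) = -1 - \delta /2 + O(\delta ^2)\). The short script below checks these facts on a grid; the name `drift_coefficient` is illustrative.

```python
import numpy as np


def drift_coefficient(delta):
    """a(delta) = ((1 - 2 delta)^(1/2) - 1) / delta, the factor multiplying alpha^delta(x) x in B_1."""
    return (np.sqrt(1.0 - 2.0 * delta) - 1.0) / delta


deltas = np.linspace(1e-4, 0.5, 5_000)
gap = -(drift_coefficient(deltas) + 1.0)   # equals (1 - delta - sqrt(1 - 2 delta)) / delta
print(gap.min() >= 0.0, np.all(gap <= 2.0 * deltas))
print(gap[0] / deltas[0])                  # close to 1/2, since a(delta) = -1 - delta/2 + O(delta^2)
```

The bound is attained with equality at \(\delta = \frac{1}{2}\), where \(a(\delta ) = -2\).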

4.3 Noise estimates

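In this section we estimate the error in the approximation \({\varGamma }^{k,\delta } \approx \mathrm N (0,C_s)\). To this end, let us introduce the covariance operator \(D^{\delta }(x)=\mathbb {E}\Big [ {\varGamma }^{k,\delta } \otimes _{\mathcal {H}^s} {\varGamma }^{k,\delta } | x^{k,\delta }=x \Big ]\) of the martingale difference \({\varGamma }^{k,\delta }\). For any \(x, u,v \in \mathcal {H}^s\) the operator \(D^\delta (x)\) satisfies

$$\begin{aligned} \langle u, D^{\delta }(x)\, v \rangle _s = \mathbb {E}\Big [ \langle {\varGamma }^{k,\delta }, u \rangle _s \, \langle {\varGamma }^{k,\delta }, v \rangle _s \,\big |\, x^{k,\delta }=x \Big ]. \end{aligned}$$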

The next lemma gives a quantitative version of the approximation of \(D^\delta (x)\) by the operator \(C_s\).

Lemma 12

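(Noise estimates) Let Assumptions 1 hold. For any pair of indices \(i,j \ge 1\), the martingale difference term \({\varGamma }^\delta (x,\xi )\) satisfies

$$\begin{aligned} \Big | \langle \hat{\varphi }_i, D^{\delta }(x)\, \hat{\varphi }_j \rangle _s - \langle \hat{\varphi }_i, C_s\, \hat{\varphi }_j \rangle _s \Big |&\lesssim \delta ^{\frac{1}{8}} \big (1+\Vert x\Vert _s\big ), \\ \Big | \mathrm {Trace}_{\mathcal {H}^s}\big (D^{\delta }(x)\big ) - \mathrm {Trace}_{\mathcal {H}^s}(C_s) \Big |&\lesssim \delta ^{\frac{1}{8}} \big (1+\Vert x\Vert _s^2\big ), \end{aligned}$$

where \(\{\hat{\varphi }_j = j^{-s} \varphi _j\}_{j \ge 1}\) is an orthonormal basis of \(\mathcal {H}^s\).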

Proof

See Appendix 1

4.4 A priori bound

Now we have all the ingredients for the proof of the a priori bound presented in Lemma 9, which states that the rescaled process \(z^{\delta }\) given by Eq. (6) does not blow up in finite time.

Proof

(Proof of Lemma 9) Without loss of generality, assume that \(p = 2n\) for some positive integer \(n \ge 1\). We now prove that there exist constants \(\alpha _1, \alpha _2, \alpha _3 > 0\) satisfying

Lemma 9 is a straightforward consequence of Eq. 48 since this implies that

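For notational convenience, let us define \(V^{k,\delta } = \mathbb {E}\big [\Vert x^{k,\delta }\Vert _s^{2n} \big ]\). To prove Eq. (48), it suffices to establish that

$$\begin{aligned} V^{k+1,\delta } - V^{k,\delta } \le K \delta \, \big (1 + V^{k,\delta }\big ), \end{aligned}$$(49)

where \(K>0\) is a constant independent of \(\delta \in (0,\frac{1}{2})\). Indeed, (49) can be rewritten as \(1+V^{k+1,\delta } \le (1+K\delta )\,(1+V^{k,\delta })\); iterating this inequality gives \(1+V^{k,\delta } \le (1+\Vert x_*\Vert _s^{2n})\, e^{K k\delta }\), which leads to the bound (48) for some computable constants \(\alpha _1, \alpha _2, \alpha _3 > 0\). The definition of \(V^{k,\delta }\) shows that

$$\begin{aligned} V^{k+1,\delta } - V^{k,\delta } = \mathbb {E}\Big [ \Big ( \Vert x^{k,\delta }\Vert _s^2 + \Vert x^{k+1,\delta }-x^{k,\delta }\Vert _s^2 + 2\langle x^{k,\delta }, x^{k+1,\delta }-x^{k,\delta } \rangle _s \Big )^n - \Vert x^{k,\delta }\Vert _s^{2n} \Big ], \end{aligned}$$(50)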

where the increment \(x^{k+1,\delta }-x^{k,\delta }\) is given by

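To bound the right-hand side of Eq. (50), we use a multinomial expansion and control each term (the term corresponding to \((i,j,k) = (n,0,0)\) cancels with \(\Vert x^{k,\delta }\Vert _s^{2n}\)). To this end, we establish the following estimate: for all integers \(i,j,k \ge 0\) satisfying \(i+j+k = n\) and \((i,j,k) \ne (n,0,0)\) the following inequality holds,

$$\begin{aligned} \mathbb {E}\Big [\Big (\Vert x^{k,\delta } \Vert _s^2\Big )^i \Big ( \Vert x^{k+1,\delta }-x^{k,\delta }\Vert _s^2 \Big )^j \Big ( \langle x^{k,\delta }, x^{k+1,\delta }-x^{k,\delta } \rangle _s \Big )^k \Big ] \lesssim \delta \, \big (1+V^{k,\delta }\big ). \end{aligned}$$(52)

To prove Eq. (52), we distinguish two cases.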

-

Let us suppose \((i,j,k) = (n-1,0,1)\). Lemma 7 states that the approximate drift has linear growth, so that

$$\begin{aligned} \Big \Vert \mathbb {E}\big [ x^{k+1,\delta } - x^{k,\delta } | x^{k,\delta } \big ] \Big \Vert _s = \delta \Vert d^{\delta }(x^{k,\delta })\Vert _s \lesssim \delta ( 1+\Vert x^{k,\delta }\Vert _s ). \end{aligned}$$

Consequently, conditioning on \(x^{k,\delta }\), we have

$$\begin{aligned} \mathbb {E}\Big [\Big (\Vert x^{k,\delta } \Vert _s^2\Big )^{n-1} \langle x^{k,\delta }, x^{k+1,\delta }\!-\!x^{k,\delta } \rangle _s \!\Big ]&= \mathbb {E}\Big [\Big (\Vert x^{k,\delta } \Vert _s^2\Big )^{n-1} \big \langle x^{k,\delta }, \mathbb {E}\big [x^{k+1,\delta }\!-\!x^{k,\delta } \,|\, x^{k,\delta }\big ] \big \rangle _s \!\Big ] \\&\lesssim \mathbb {E}\Big [ \Vert x^{k,\delta } \Vert _s^{2(n-1)} \Vert x^{k,\delta } \Vert _s \, \delta \big (1\!+\!\Vert x^{k,\delta }\Vert _s \big ) \Big ] \\&\lesssim \delta (1 + V^{k,\delta }). \end{aligned}$$

This proves Eq. (52) in the case \((i,j,k) = (n-1,0,1)\).

-

Let us suppose \((i,j,k) \not \in \Big \{ (n,0,0), (n-1,0,1) \Big \}\). For any integer \(p \ge 1\),

$$\begin{aligned} \mathbb {E}_x\Big [ \Vert x^{k+1,\delta }-x^{k,\delta }\Vert _s^p \Big ]^{\frac{1}{p}} \lesssim \delta ^{\frac{1}{2}} (1+\Vert x\Vert _s), \end{aligned}$$

so, bounding \(|\langle x^{k,\delta }, x^{k+1,\delta }-x^{k,\delta } \rangle _s| \le \Vert x^{k,\delta }\Vert _s \Vert x^{k+1,\delta }-x^{k,\delta }\Vert _s\) by the Cauchy–Schwarz inequality and conditioning on \(x^{k,\delta }\), it follows that

$$\begin{aligned} \mathbb {E}\Big [\Big (\Vert x^{k,\delta } \Vert _s^2\Big )^i \Big ( \Vert x^{k+1,\delta }-x^{k,\delta }\Vert _s^2 \Big )^j \Big ( \langle x^{k,\delta }, x^{k+1,\delta }-x^{k,\delta } \rangle _s \Big )^k \Big ] \lesssim \delta ^{j + \frac{k}{2}} (1+ V^{k,\delta }). \end{aligned}$$

Since we have supposed that \((i,j,k) \not \in \Big \{ (n,0,0), (n-1,0,1) \Big \}\) and \(i+j+k = n\), either \(j \ge 1\), or \(j = 0\) and \(k \ge 2\); in both cases \(j + \frac{k}{2} \ge 1\), so that \(\delta ^{j+\frac{k}{2}} \le \delta \). This concludes the proof of Eq. (52).

The binomial expansion of Eq. (50) and the bound (52) show that Eq. (49) holds. This concludes the proof of Lemma 9.
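The step from (49) to (48) is a discrete Gronwall argument: (49) is equivalent to \(1+V^{k+1,\delta } \le (1+K\delta )(1+V^{k,\delta })\), hence \(1 + V^{k,\delta } \le (1+V^{0,\delta })(1+K\delta )^k \le (1+V^{0,\delta })\,e^{Kk\delta }\), which is bounded uniformly in \(\delta \) for \(k\delta \le T\). The sketch below iterates the worst case of (49), that is with equality, for hypothetical values of \(K\), \(V^{0,\delta }\) and \(T\); the helper name `iterate_worst_case` is illustrative.

```python
import numpy as np


def iterate_worst_case(v0, K, delta, T):
    """Iterate V^{k+1} = V^k + K delta (1 + V^k), the worst case of (49), for k delta <= T."""
    n_steps = int(np.floor(T / delta))
    v = np.empty(n_steps + 1)
    v[0] = v0
    for k in range(n_steps):
        v[k + 1] = v[k] + K * delta * (1.0 + v[k])
    return v


v0, K, T = 3.0, 1.5, 2.0
for delta in (0.1, 0.01, 0.001):
    v = iterate_worst_case(v0, K, delta, T)
    k = np.arange(v.size)
    # exponential bound (1 + V^0) exp(K k delta) - 1, uniform in delta
    assert np.all(v <= (1.0 + v0) * np.exp(K * k * delta) - 1.0 + 1e-9)
    print(delta, v[-1], (1.0 + v0) * np.exp(K * T) - 1.0)
```

As \(\delta \rightarrow 0\) the final value increases towards \((1+V^{0,\delta })e^{KT} - 1\) but never exceeds it, which is the uniformity in \(\delta \) that the a priori bound requires.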

4.5 Invariance principle

Combining the noise estimates of Lemma 12 and the a priori bound of Lemma 9, we show that under Assumptions 1 the sequence of rescaled noise processes defined in Eq. (24) converges weakly to a Brownian motion. This is the content of Lemma 8, whose proof is now presented.

Proof

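(Proof of Lemma 8) As described in [24, Proposition \(5.1\)], in order to prove that \(W^{\delta }\) converges weakly to \(W\) in \(C([0,T], \mathcal {H}^s)\) it suffices to prove that for any \(t \in [0,T]\) and any pair of indices \(i,j \ge 1\) the following three limits hold in probability,

$$\begin{aligned} \lim _{\delta \rightarrow 0} \; \delta \sum _{k\delta < t} \mathbb {E}\Big [ \Vert {\varGamma }^{k,\delta }\Vert _s^2 \,\big |\, x^{k,\delta } \Big ] = t \; \mathrm {Trace}_{\mathcal {H}^s}(C_s), \end{aligned}$$(53)

$$\begin{aligned} \lim _{\delta \rightarrow 0} \; \delta \sum _{k\delta < t} \mathbb {E}\Big [ \langle {\varGamma }^{k,\delta }, \hat{\varphi }_i \rangle _s \langle {\varGamma }^{k,\delta }, \hat{\varphi }_j \rangle _s \,\big |\, x^{k,\delta } \Big ] = t \; \langle \hat{\varphi }_i, C_s \hat{\varphi }_j \rangle _s, \end{aligned}$$(54)

$$\begin{aligned} \lim _{\delta \rightarrow 0} \; \delta \sum _{k\delta < t} \mathbb {E}\Big [ \Vert {\varGamma }^{k,\delta }\Vert _s^2 \; {1\!\!1}_{ \{\Vert {\varGamma }^{k,\delta } \Vert _s^2 \ge \delta ^{-1} \, \varepsilon \}} \,\big |\, x^{k,\delta } \Big ] = 0 \qquad \text {for every } \varepsilon > 0. \end{aligned}$$(55)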

We now check that these three conditions are indeed satisfied.

-

Condition (53): since \(\mathbb {E}\Big [ \Vert {\varGamma }^{k,\delta }\Vert _s^2 | x^{k,\delta }\Big ] = \mathrm {Trace}_{\mathcal {H}^s}(D^{\delta }(x^{k,\delta }))\), Lemma 12 shows that

$$\begin{aligned} \mathbb {E}\Big [ \Vert {\varGamma }^{k,\delta }\Vert _s^2 | x^{k,\delta }\Big ] = \mathrm {Trace}_{\mathcal {H}^s}(C_s) + \mathbf e _1^{\delta }(x^{k,\delta }) \end{aligned}$$

where the error term \(\mathbf e _1^{\delta }\) satisfies \(| \mathbf e _1^{\delta }(x) | \lesssim \delta ^{\frac{1}{8}} (1+\Vert x\Vert _s^2)\). Consequently, to prove condition (53) it suffices to establish that

$$\begin{aligned} \lim _{\delta \rightarrow 0} \; \mathbb {E}\Big [ \big | \delta \, \sum _{k \delta < T} \mathbf e _1^{\delta }(x^{k,\delta }) \big | \Big ] = 0 . \end{aligned}$$

We have \(\mathbb {E}\big [ \big | \delta \, \sum _{k \delta < T} \mathbf e _1^{\delta }(x^{k,\delta }) \big | \big ] \lesssim \delta ^{\frac{1}{8}} \Big \{ \delta \cdot \mathbb {E}\Big [ \sum _{k \delta < T} (1+\Vert x^{k,\delta }\Vert _s^2) \Big ] \Big \}\) and the a priori bound presented in Lemma 9 shows that

$$\begin{aligned} \sup _{\delta \in \left( 0,\frac{1}{2}\right) } \quad \Big \{ \delta \cdot \mathbb {E}\Big [ \sum _{k \delta < T} (1+\Vert x^{k,\delta }\Vert _s^2) \Big ] \Big \} < \infty . \end{aligned}$$

Consequently \(\lim _{\delta \rightarrow 0} \; \mathbb {E}\big [ \big | \delta \, \sum _{k \delta < T} \mathbf e _1^{\delta }(x^{k,\delta }) \big | \big ] = 0\), and the conclusion follows.

-

Condition (54): Lemma 12 states that

$$\begin{aligned} \mathbb {E}_k \Big [ \langle {\varGamma }^{k,\delta }, \hat{\varphi }_i \rangle _s \langle {\varGamma }^{k,\delta }, \hat{\varphi }_j \rangle _s \Big ] = \langle \hat{\varphi }_i, C_s \hat{\varphi }_j \rangle _s + \mathbf e _2^{\delta }(x^{k,\delta }) \end{aligned}$$

where \(\mathbb {E}_k\) denotes expectation conditional on \(x^{k,\delta }\), and the error term \(\mathbf e _2^{\delta }\) satisfies \(| \mathbf e _2^{\delta }(x) | \lesssim \delta ^{\frac{1}{8}} (1+\Vert x\Vert _s)\). The same argument as in the proof of Condition (53) gives the conclusion.

-

Condition (55): from the Cauchy–Schwarz and Markov inequalities (applied conditionally on \(x^{k,\delta }\)) it follows that

$$\begin{aligned} \mathbb {E}\Big [\Vert {\varGamma }^{k,\delta }\Vert _s^2 \; {1\!\!1}_{ \{\Vert {\varGamma }^{k,\delta } \Vert _s^2 \ge \delta ^{-1} \, \varepsilon \}} \Big ]&\le \mathbb {E}\Big [\Vert {\varGamma }^{k,\delta }\Vert _s^4 \Big ]^{\frac{1}{2}} \cdot \mathbb {P}\Big [ \Vert {\varGamma }^{k,\delta } \Vert _s^2 \ge \delta ^{-1} \, \varepsilon \Big ]^{\frac{1}{2}}\\&\le \mathbb {E}\Big [\Vert {\varGamma }^{k,\delta }\Vert _s^4 \Big ]^{\frac{1}{2}} \cdot \Big \{ \frac{\mathbb {E}\big [\Vert {\varGamma }^{k,\delta }\Vert _s^4 \big ]}{(\delta ^{-1} \, \varepsilon )^2}\Big \}^{\frac{1}{2}}\\&\le \frac{\delta }{\varepsilon } \cdot \mathbb {E}\Big [\Vert {\varGamma }^{k,\delta }\Vert _s^4 \Big ]. \end{aligned}$$

Lemma 7 readily shows that \(\mathbb {E}\big [\Vert {\varGamma }^{k,\delta }\Vert _s^4 \,|\, x^{k,\delta }=x\big ] \lesssim 1+\Vert x\Vert _s^4\). Consequently we have

$$\begin{aligned} \mathbb {E}\Big [ \Big | \delta \, \sum _{k\delta < T} \mathbb {E}\Big [\Vert {\varGamma }^{k,\delta }\Vert _s^2 \; {1\!\!1}_{ \{\Vert {\varGamma }^{k,\delta } \Vert _s^2 \ge \delta ^{-1} \, \varepsilon \}} |x^{k,\delta } \Big ] \Big | \Big ] \le \frac{\delta }{\varepsilon } \times \Big \{ \delta \cdot \mathbb {E}\Big [ \sum _{k \delta < T} (1+\Vert x^{k,\delta }\Vert _s^4) \Big ] \Big \} \end{aligned}$$

and the conclusion again follows from the a priori bound of Lemma 9.

5 Quadratic variation

As discussed in the introduction, the SPDE (7), and the Metropolis–Hastings algorithm pCN which approximates it for small \(\delta \), do not satisfy the smoothing property, and so almost sure properties of the limit measure \(\pi ^\tau \) are not necessarily seen at finite time. To illustrate this point, we introduce in this section a functional \(V:\mathcal {H}\rightarrow \mathbb {R}\) that is well defined on a dense subset of \(\mathcal {H}\) and such that \(V(X)\) is \(\pi ^{\tau }\)-almost surely well defined and satisfies \(\mathbb {P}\big ( V(X) = \tau \big ) = 1\) for \(X \overset{\mathcal {D}}{\sim }\pi ^{\tau }\). The quantity \(V\) corresponds to the usual quadratic variation if \(\pi _0\) is the Wiener measure. We show that the quadratic-variation-like quantity \(V(x^{k,\delta })\) of a pCN Markov chain converges, as \(k \rightarrow \infty \), to the almost sure value \(\tau \). We then prove that the piecewise linear interpolant of this quantity converges, in the small \(\delta \) limit, to the solution of a linear ODE (the “fluid limit”) whose globally attractive stable state is the almost sure value \(\tau \). This quantifies the manner in which the pCN method approaches statistical equilibrium.

5.1 Definition and properties

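Under Assumptions 1, the Karhunen–Loève expansion shows that \(\pi _0\)-almost every \(x \in \mathcal {H}\) satisfies

$$\begin{aligned} \lim _{N \rightarrow \infty } \; \frac{1}{N} \sum _{j=1}^N \frac{\langle x,\varphi _j \rangle ^2}{\lambda _j^2} = 1; \end{aligned}$$

indeed, under \(\pi _0\) the coordinates \(\langle x,\varphi _j \rangle /\lambda _j\) are i.i.d. standard Gaussian random variables, so this is the strong law of large numbers. This motivates the definition of the quadratic-variation-like quantities

$$\begin{aligned} V_-(x) = \liminf _{N \rightarrow \infty } \; \frac{1}{N} \sum _{j=1}^N \frac{\langle x,\varphi _j \rangle ^2}{\lambda _j^2} \qquad \text {and} \qquad V_+(x) = \limsup _{N \rightarrow \infty } \; \frac{1}{N} \sum _{j=1}^N \frac{\langle x,\varphi _j \rangle ^2}{\lambda _j^2}. \end{aligned}$$

When these two quantities are equal, the vector \(x \in \mathcal {H}\) is said to possess a quadratic variation \(V(x)\) defined as \(V(x) = V_-(x) = V_+(x)\). Consequently, \(\pi _0\)-almost every \(x \in \mathcal {H}\) possesses a quadratic variation \(V(x)=1\). It is a straightforward consequence (\(\pi ^{\tau }\) being absolutely continuous with respect to \(\pi _0^{\tau }\)) that \(\pi _0^{\tau }\)-almost every and \(\pi ^{\tau }\)-almost every \(x \in \mathcal {H}\) possesses a quadratic variation \(V(x)=\tau \). Strictly speaking this only coincides with quadratic variation when \(C\) is the covariance of a (possibly conditioned) Brownian motion; however, we use the terminology more generally in this section. The next lemma proves that the quadratic variation \(V(\cdot )\) behaves as expected with respect to additivity.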

Lemma 13

(Quadratic variation additivity) Consider a vector \(x \in \mathcal {H}\), a Gaussian random variable \(\xi \overset{\mathcal {D}}{\sim }\pi _0\) and a real number \(\alpha \in \mathbb {R}\). Suppose that the vector \(x \in \mathcal {H}\) possesses a finite quadratic variation \(V(x)<+\infty \). Then almost surely the vector \(x+\alpha \xi \in \mathcal {H}\) possesses a quadratic variation that is equal to

$$\begin{aligned} V(x+\alpha \xi ) = V(x) + \alpha ^2. \end{aligned}$$

Proof

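Let us define \(V_N \overset{{\tiny {\text{ def }}}}{=}N^{-1} \sum _{j=1}^N \frac{\langle x,\varphi _j \rangle \cdot \langle \xi ,\varphi _j \rangle }{\lambda _j^2}\). Expanding the square \(\langle x+\alpha \xi ,\varphi _j \rangle ^2\), and using \(V(x)<\infty \) and the fact that \(\xi \) almost surely possesses the quadratic variation \(V(\xi )=1\), we see that to prove Lemma 13 it suffices to prove that almost surely the following limit holds

$$\begin{aligned} \lim _{N \rightarrow \infty } V_N = 0. \end{aligned}$$

By the Borel–Cantelli lemma, it suffices to prove that for every fixed \(\varepsilon > 0\) we have \(\sum _{N \ge 1} \; \mathbb {P}\big [ \big |V_N \big | > \varepsilon \big ] < \infty \). Since the coordinates \(\langle \xi ,\varphi _j \rangle \) are independent centred Gaussian random variables with variance \(\lambda _j^2\), \(V_N\) is a centred Gaussian random variable with variance

$$\begin{aligned} \mathrm {Var}(V_N) = \frac{1}{N^2} \sum _{j=1}^N \frac{\langle x,\varphi _j \rangle ^2}{\lambda _j^2} \lesssim \frac{1}{N}, \end{aligned}$$

where the last bound holds because \(V(x)<\infty \) implies that the averages \(N^{-1}\sum _{j=1}^N \langle x,\varphi _j \rangle ^2/\lambda _j^2\) are bounded. The Gaussian tail bound \(\mathbb {P}\big [ |V_N| > \varepsilon \big ] \le 2\exp \big (-\varepsilon ^2 / (2\,\mathrm {Var}(V_N))\big )\) then shows that \(\sum _{N \ge 1} \; \mathbb {P}\big [ \big |V_N \big | > \varepsilon \big ] < \infty \), which finishes the proof of the lemma.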
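Lemma 13 is easy to observe numerically in Karhunen–Loève coordinates. The sketch below assumes, purely for illustration, that \(\pi _0\) is the Brownian bridge measure on \([0,1]\), for which \(\varphi _j(s) = \sqrt{2}\sin (j\pi s)\) and \(\lambda _j = 1/(j\pi )\); it truncates the expansion at \(N\) modes and approximates \(V\) by the partial average \(N^{-1}\sum _{j \le N}\langle x,\varphi _j \rangle ^2/\lambda _j^2\). The helper name `qv_partial` is hypothetical.

```python
import numpy as np


def qv_partial(coeffs, lam):
    """Partial average N^{-1} sum_{j<=N} <x, phi_j>^2 / lambda_j^2 from the KL coefficients <x, phi_j>."""
    return np.mean((coeffs / lam) ** 2)


N = 200_000
rng = np.random.default_rng(2)
lam = 1.0 / (np.pi * np.arange(1, N + 1))   # Brownian bridge: lambda_j = 1 / (j pi)
x = lam * rng.standard_normal(N)            # KL coefficients of x ~ pi_0, so V(x) = 1
xi = lam * rng.standard_normal(N)           # independent xi ~ pi_0
alpha = 0.8

v_x = qv_partial(x, lam)
v_sum = qv_partial(x + alpha * xi, lam)
print(v_x, v_sum, 1.0 + alpha ** 2)         # Lemma 13 predicts V(x + alpha xi) = V(x) + alpha^2
```

The cross term \(2\alpha V_N\) is of order \(N^{-1/2}\), consistent with the variance computation in the proof.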

5.2 Large \(k\) behaviour of quadratic variation for pCN

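The pCN algorithm at temperature \(\tau >0\) and discretization parameter \(\delta >0\) proposes a move from \(x\) to \(y\) according to the dynamics

$$\begin{aligned} y = (1-2\delta )^{\frac{1}{2}} \, x + (2 \tau \delta )^{\frac{1}{2}} \, \xi , \qquad \xi \overset{\mathcal {D}}{\sim }\pi _0. \end{aligned}$$

This move is accepted with probability \(\alpha ^{\delta }(x,y)\). Lemma 13 shows that, if the quadratic variation \(V(x)\) exists, then almost surely the quadratic variation of the proposed move \(y \in \mathcal {H}\) exists and satisfies

$$\begin{aligned} V(y) - V(x) = -2\delta \, \big ( V(x) - \tau \big ). \end{aligned}$$(56)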

Consequently, one can prove that for any finite time step \(\delta > 0\) and temperature \(\tau >0\) the quadratic variation of the MCMC algorithm converges to \(\tau \).

Proposition 14

(Limiting quadratic variation) Let Assumptions 1 hold and let \(\{x^{k,\delta }\}_{k \ge 0}\) be the Markov chain of Sect. 3.1. Then almost surely the quadratic variation of the Markov chain converges to \(\tau \),

$$\begin{aligned} \lim _{k \rightarrow \infty } V(x^{k,\delta }) = \tau . \end{aligned}$$

Proof

Let us first show that the number of accepted moves is almost surely infinite. If this were not the case, the Markov chain would eventually reach a position \(x^{k,\delta } = x \in \mathcal {H}\) such that all subsequent proposals \(y^{k+l} = (1-2\delta )^{\frac{1}{2}} \, x + (2 \tau \delta )^{\frac{1}{2}} \, \xi ^{k+l}\) are rejected. This means that the Bernoulli random variables \(\gamma ^{k+l} = \hbox {Bernoulli} \big (\alpha ^{\delta }(x,y^{k+l}) \big )\), which are i.i.d. conditionally on \(x^{k,\delta } = x\), satisfy \(\gamma ^{k+l} = 0\) for all \(l \ge 0\). This can only happen with probability zero: since \(\mathbb {P}[\gamma ^{k+l} = 1 \,|\, x^{k,\delta } = x] = \alpha ^{\delta }(x) > 0\), the probability that \(\gamma ^{k+l} = 0\) for all \(0 \le l \le L\) equals \((1-\alpha ^{\delta }(x))^{L+1}\), which tends to zero as \(L \rightarrow \infty \). To conclude the proof of the Proposition, notice that, by Eq. (56), rejected moves leave the quadratic variation unchanged, while along accepted moves it evolves according to the recursion \(u_{m+1}-u_m = -2 \delta (u_m - \tau )\). Since \(u_m - \tau = (1-2\delta )^m (u_0 - \tau )\) and \(0 < 1-2\delta < 1\), this sequence converges to \(\tau \).
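The mechanism in the proof of Proposition 14 (rejected moves leave \(V\) unchanged, accepted moves contract \(V - \tau \) by the factor \(1-2\delta \)) can be observed on a truncated pCN chain. The sketch below is illustrative only: it assumes the Brownian bridge Karhunen–Loève basis with \(\lambda _j = 1/(j\pi )\), truncates to a finite number of modes, uses a hypothetical potential \(\Psi \) depending only on the first coordinate, and assumes the acceptance probability \(\min (1, \exp (-(\Psi (y)-\Psi (x))/\tau ))\), which is the Metropolis–Hastings ratio for a target with density proportional to \(\exp (-\Psi /\tau )\) with respect to \(\mathrm N(0,\tau C)\). The function name `pcn_quadratic_variation` is hypothetical.

```python
import numpy as np


def pcn_quadratic_variation(v0, tau, delta, n_steps, n_modes=20_000, seed=3):
    """Run a truncated pCN chain and record the partial quadratic variation V_N(x^k)."""
    rng = np.random.default_rng(seed)
    lam = 1.0 / (np.pi * np.arange(1, n_modes + 1))   # Brownian bridge KL scales (assumption)

    def psi(c):
        # Hypothetical potential depending only on the first KL coordinate.
        return 5.0 * (c[0] - 0.1) ** 2

    def qv(c):
        return np.mean((c / lam) ** 2)

    x = np.sqrt(v0) * lam * rng.standard_normal(n_modes)   # starting point with V(x) close to v0
    history, accepted = [qv(x)], 0
    for _ in range(n_steps):
        xi = lam * rng.standard_normal(n_modes)                                # xi ~ N(0, C), truncated
        y = np.sqrt(1.0 - 2.0 * delta) * x + np.sqrt(2.0 * tau * delta) * xi   # pCN proposal
        if rng.uniform() < np.exp(min(0.0, -(psi(y) - psi(x)) / tau)):
            x, accepted = y, accepted + 1
        history.append(qv(x))
    return np.array(history), accepted


v0, tau, delta = 3.0, 0.5, 0.005
hist, acc = pcn_quadratic_variation(v0, tau, delta, n_steps=1500)
predicted = tau + (1.0 - 2.0 * delta) ** acc * (v0 - tau)   # recursion along accepted moves only
print(acc, hist[-1], predicted)
```

The potential affects only which moves are accepted; the value of \(V\) after a given number of accepted moves is dictated by the Gaussian part of the proposal, as in Eq. (56).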

5.3 Fluid limit for quadratic variation of pCN

To gain further insight into the rate at which the limiting behaviour of the quadratic variation is observed for pCN, we derive an ODE “fluid limit” for the Metropolis–Hastings algorithm. We introduce the continuous time process \(t \mapsto v^{\delta }(t)\) defined as the continuous piecewise linear interpolant of the process \(k \mapsto V(x^{k,\delta })\),

$$\begin{aligned} v^{\delta }(t) = \frac{(k+1)\delta - t}{\delta }\, V(x^{k,\delta }) + \frac{t - k\delta }{\delta }\, V(x^{k+1,\delta }) \qquad \text {for} \qquad k\delta \le t < (k+1)\delta . \end{aligned}$$

Since the acceptance probability of pCN approaches one as \(\delta \rightarrow 0\) (see Corollary 1), Eq. (56) shows heuristically that the trajectories of the process \(t \mapsto v^{\delta }(t)\) should be well approximated by the solution of the (non-stochastic) differential equation

$$\begin{aligned} \frac{dv}{dt} = -2\, \big ( v - \tau \big ). \end{aligned}$$(58)

We prove such a result, in the sense of convergence in probability in \(C([0,T],{\mathbb R})\):

Theorem 15

(Fluid limit for quadratic variation) Let Assumptions 1 hold. Let the Markov chain \(x^{\delta }\) start at fixed position \(x_* \in \mathcal {H}^s\). Assume that \(x_* \in \mathcal {H}\) possesses a finite quadratic variation, \(V(x_*) < \infty \). Then the function \(v^{\delta }(t)\) converges in probability in \(C([0,T], \mathbb {R})\), as \(\delta \) goes to zero, to the solution of the differential Eq. (58) with initial condition \(v_0 = V(x_*)\).

As already indicated, the heart of the proof consists of showing that the acceptance probability of the algorithm converges to one as \(\delta \) goes to zero. We prove such a result as Lemma 16 below, and then proceed to prove Theorem 15. To this end we introduce \(t^{\delta }(k)\), the number of accepted moves among the first \(k\) proposals,

$$\begin{aligned} t^{\delta }(k) = \sum _{l=0}^{k-1} \gamma ^{l,\delta }, \end{aligned}$$

where \(\gamma ^{l, \delta } = \hbox {Bernoulli}(\alpha ^{\delta }(x,y))\) is the Bernoulli random variable defined in Eq. (20). Since the acceptance probability of the algorithm converges to \(1\) as \(\delta \rightarrow 0\), the approximation \(t^{\delta }(k) \approx k\) holds. In order to prove a fluid limit result on the interval \([0,T]\), one needs to prove that the quantity \(\big | t^{\delta }(k) - k \big |\) is small when compared to \(\delta ^{-1}\). The next lemma shows that such a bound holds uniformly on the interval \([0,T]\).

Lemma 16

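(Number of accepted moves) Let Assumptions 1 hold. The number of accepted moves \(t^{\delta }(\cdot )\) satisfies

$$\begin{aligned} \lim _{\delta \rightarrow 0} \; \sup \Big \{ \delta \cdot \big | t^{\delta }(k) - k \big | \; : \; 0 \le k \le T\delta ^{-1} \Big \} = 0, \end{aligned}$$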

where the convergence holds in probability.

Proof

The proof is given in Appendix 2.

We now complete the proof of Theorem 15 using the key Lemma 16.

Proof (of Theorem 15)

The proof consists of showing that the trajectory of the quadratic variation process behaves as if all the moves had been accepted. The main ingredient is the control of the number of accepted moves given by Lemma 16.

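Recall that \(v^{\delta }(k \delta ) = V(x^{k,\delta })\). Consider the piecewise linear function \(\hat{v}^{\delta }( \cdot ) \in C([0,T], \mathbb {R})\) defined by linear interpolation of the values \(\hat{v}^{\delta }(k \delta ) = u^{\delta }(k)\), where the sequence \(\{u^{\delta }(k) \}_{k \ge 0}\) satisfies \(u^{\delta }(0) = V(x_*)\) and

$$\begin{aligned} u^{\delta }(k+1) - u^{\delta }(k) = -2\delta \, \big ( u^{\delta }(k) - \tau \big ). \end{aligned}$$(60)

The value \(u^{\delta }(k) \in \mathbb {R}\) represents the quadratic variation of \(x^{k,\delta }\) if the first \(k\) moves of the MCMC algorithm had been accepted. One can readily check that, as \(\delta \) goes to zero, the sequence of continuous functions \(\hat{v}^{\delta }(\cdot )\) converges in \(C([0,T], \mathbb {R})\) to the solution \(v(\cdot )\) of the differential Eq. (58). Consequently, to prove Theorem 15 it suffices to show that for any \(\varepsilon > 0\) we have

$$\begin{aligned} \lim _{\delta \rightarrow 0} \; \mathbb {P}\Big [ \sup _{t \in [0,T]} \big | v^{\delta }(t) - \hat{v}^{\delta }(t) \big | > \varepsilon \Big ] = 0. \end{aligned}$$(59)

The definition of the number of accepted moves \(t^{\delta }(k)\) is such that \(V(x^{k,\delta }) = u^{\delta }(t^{\delta }(k))\). Note that

$$\begin{aligned} u^{\delta }(k) = \tau + (1-2\delta )^{k}\, \big ( V(x_*) - \tau \big ). \end{aligned}$$

Hence, for any integers \(t_1, t_2 \ge 0,\) we have \(\big |u^{\delta }(t_2) - u^{\delta }(t_1) \big | \le \big | u^{\delta }(|t_2-t_1|) - u^{\delta }(0) \big |\), so that

$$\begin{aligned} \sup _{0 \le k \le T\delta ^{-1}} \big | V(x^{k,\delta }) - u^{\delta }(k) \big | \le \sup _{0 \le k \le T\delta ^{-1}} \big | u^{\delta }\big (|t^{\delta }(k) - k|\big ) - u^{\delta }(0) \big | . \end{aligned}$$

Equation (60) shows that \(|u^{\delta }(k)-u^{\delta }(0)| = |V(x_*)-\tau |\, \big ( 1 - (1-2\delta )^k \big )\), which is nondecreasing in \(k\). Since \(v^{\delta }\) and \(\hat{v}^{\delta }\) are piecewise linear on the same grid, their maximal distance is attained at grid points, and this implies that

$$\begin{aligned} \sup _{t \in [0,T]} \big | v^{\delta }(t) - \hat{v}^{\delta }(t) \big | \lesssim 1 - (1-2\delta )^{S \delta ^{-1}}, \end{aligned}$$

where \(S = \sup \big \{ \delta \cdot \big | t^{\delta }(k) -k \big | \; : 0 \le k \le T \delta ^{-1} \big \}\). Since for any \(a>0\) we have \(1-(1-2\delta )^{a \delta ^{-1}} \rightarrow 1-e^{-2a}\), Eq. (59) follows if one can prove that, as \(\delta \) goes to zero, the supremum \(S\) converges to zero in probability: this is precisely the content of Lemma 16. This concludes the proof of Theorem 15.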
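The convergence of \(\hat{v}^{\delta }\) to the solution of (58), which the proof describes as readily checked, can be made quantitative. Since \(u^{\delta }(k) - \tau = (1-2\delta )^k (V(x_*) - \tau )\) and \(v(t) - \tau = e^{-2t}(V(x_*)-\tau )\), the elementary inequality \(0 \le e^{-2k\delta } - (1-2\delta )^k \le \delta \) for \(\delta \in (0,\frac{1}{2}]\) gives \(\max _k |u^{\delta }(k) - v(k\delta )| \le \delta \,|V(x_*) - \tau |\). The sketch below checks this on the grid in the case where every proposal is accepted; the helper names and parameter values are hypothetical.

```python
import numpy as np


def accepted_recursion(v0, tau, delta, T):
    """u(k+1) = u(k) - 2 delta (u(k) - tau), i.e. Eq. (60), for 0 <= k delta <= T."""
    n = int(T / delta)
    u = np.empty(n + 1)
    u[0] = v0
    for k in range(n):
        u[k + 1] = u[k] - 2.0 * delta * (u[k] - tau)
    return u


def fluid_limit(t, v0, tau):
    """Solution of dv/dt = -2 (v - tau) with v(0) = v0, i.e. Eq. (58)."""
    return tau + (v0 - tau) * np.exp(-2.0 * t)


v0, tau, T = 3.0, 0.5, 5.0
for delta in (0.1, 0.01, 0.001):
    u = accepted_recursion(v0, tau, delta, T)
    t = delta * np.arange(u.size)
    err = np.max(np.abs(u - fluid_limit(t, v0, tau)))
    print(delta, err, delta * abs(v0 - tau))   # observed error and the bound delta |v0 - tau|
```

The error decreases linearly in \(\delta \); in the actual algorithm the remaining discrepancy comes from rejected moves, which is what Lemma 16 controls.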

6 Numerical results

In this section, we present some numerical simulations demonstrating our results. We consider the minimization of a functional \(J(\cdot )\) defined on the Sobolev space \(H^1_0([0,1]).\) Note that functions \(x \in H^1_0([0,1])\) are continuous and satisfy \(x(0) = x(1) = 0;\) thus \(H^1_0([0,1]) \subset C^0([0,1]) \subset L^2(0,1)\). For a given real parameter \(\lambda > 0\), the functional \(J:H^1_0([0,1]) \rightarrow \mathbb {R}\) is composed of two competing terms, as follows:

$$\begin{aligned} J(x) = \frac{1}{2} \int _0^1 \bigl |\dot{x}(s)\bigr |^2 \, ds + \frac{\lambda }{4} \int _0^1 \big ( x(s)^2 - 1 \big )^2 \, ds. \end{aligned}$$(61)

The first term penalizes functions that deviate from being flat, whilst the second term penalizes functions that deviate from one in absolute value. Critical points of the functional \(J(\cdot )\) solve the following Euler–Lagrange equation:

$$\begin{aligned} \ddot{x} + \lambda \, x \, (1 - x^2) = 0, \qquad x(0) = x(1) = 0. \end{aligned}$$(62)

Clearly \(x \equiv 0\) is a solution for all \(\lambda \in {\mathbb R}^+.\) If \(\lambda \in (0,\pi ^2)\) then this is the unique solution of the Euler–Lagrange equation and is the global minimizer of \(J\). For each positive integer \(k\) there is a supercritical bifurcation at parameter value \(\lambda =k^2\pi ^2.\) For \(\lambda >\pi ^2\) there are two minimizers, both of one sign and one being minus the other. The three different solutions of (62) which exist for \(\lambda =2\pi ^2\) are displayed in Fig. 1; at this value the zero (blue dotted) solution is a saddle point, and the two green solutions are the global minimizers of \(J\). These properties of \(J\) are reviewed in, for example, [25]. We will show how these global minimizers can emerge from an algorithm whose only ingredients are the ability to evaluate \({\varPsi }\) and to sample from the Gaussian measure with Cameron–Martin norm \(\int _0^1 |{\dot{x}}(s)|^2 ds.\) We emphasize that we are not advocating this as the optimal method for solving the Euler–Lagrange equations (62). We have chosen this example for its simplicity, in order to illustrate the key ingredients of the theory developed in this paper.

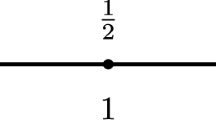

Fig. 1 The three solutions of the Euler-Lagrange Eq. (62) for \(\lambda = 2 \pi ^2\). Only the two non-zero solutions are global minimizers of the functional \(J(\cdot )\). The dotted zero solution is a saddle point of \(J(\cdot )\)
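The saddle structure of the zero solution, and the bifurcations at \(\lambda =k^2\pi ^2\), can be checked numerically from the second variation of \(J\) at \(x=0\), which is the quadratic form of the operator \(-\frac{d^2}{ds^2}-\lambda \) with Dirichlet conditions. This assumes that the potential term of (61) has second derivative \(-\lambda \) at \(x=0\), which is what places the bifurcations at \(\lambda =k^2\pi ^2\). The eigenvalues are then \(k^2\pi ^2-\lambda \), so each negative one is a descent direction of \(J\) at zero. The minimal sketch below uses a finite-difference discretization and function names of our own (hypothetical) choosing:

```python
import numpy as np


def second_variation_at_zero(lam, n):
    """Eigenvalues (ascending) of a finite-difference approximation of
    -d^2/ds^2 - lam on (0, 1) with x(0) = x(1) = 0, using n interior points."""
    h = 1.0 / (n + 1)
    lap = (np.diag(2.0 * np.ones(n))
           - np.diag(np.ones(n - 1), 1)
           - np.diag(np.ones(n - 1), -1)) / h**2          # approximates -d^2/ds^2
    return np.linalg.eigvalsh(lap - lam * np.eye(n))


def n_unstable_directions(lam, n=200):
    """Number of negative eigenvalues, i.e. descent directions of J at x = 0."""
    return int(np.sum(second_variation_at_zero(lam, n) < 0.0))


for lam in (0.5 * np.pi**2, 2.0 * np.pi**2, 5.0 * np.pi**2):
    print(f"lambda = {lam / np.pi**2:.1f} pi^2: {n_unstable_directions(lam)} unstable direction(s)")
```

The count increases by one each time \(\lambda \) crosses \(k^2\pi ^2\); at \(\lambda =2\pi ^2\) there is exactly one unstable direction, so zero is a saddle rather than a local maximum.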

The pCN algorithm to minimize \(J\) given by (61) is implemented on \(L^2([0,1])\). Recall from [17] that the Gaussian measure \(\mathrm N (0,C)\) may be identified by finding the covariance operator for which the \(H^1_0([0,1])\) norm \(\Vert x\Vert ^2_C \overset{{\tiny {\text{ def }}}}{=}\int _0^1 \bigl |\dot{x}(s)\bigr |^2 \, ds\) is the Cameron-Martin norm. In [26] it is shown that the Wiener bridge measure \(\mathbb {W}_{0 \rightarrow 0}\) on \(L^2([0,1])\) has precisely this Cameron-Martin norm; indeed it is demonstrated that \(C^{-1}\) is the densely defined operator \(-\frac{d^2}{ds^2}\) with \(D(C^{-1})=H^2([0,1])\cap H^1_0([0,1]).\) In this regard it is also instructive to adopt the physicists' viewpoint that
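
$$\begin{aligned} \mathbb {W}_{0 \rightarrow 0}(dx) \propto \exp \Bigl (-\frac{1}{2}\int _0^1 \bigl |\dot{x}(s)\bigr |^2 \, ds\Bigr )\, dx, \end{aligned}$$
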
although, of course, there is no Lebesgue measure in infinite dimensions. Using an integration by parts, together with the boundary conditions on \(H^1_0([0,1])\), then gives
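
$$\begin{aligned} \mathbb {W}_{0 \rightarrow 0}(dx) \propto \exp \Bigl (-\frac{1}{2}\int _0^1 x(s)\Bigl (-\frac{d^2 x}{ds^2}(s)\Bigr )\, ds\Bigr )\, dx, \end{aligned}$$
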
and the inverse of \(C\) is clearly identified as the differential operator above. See [27] for a basic discussion of the physicists' viewpoint on Wiener measure. For a given temperature parameter \(\tau \), the Wiener bridge measure \(\mathbb {W}_{0 \rightarrow 0}^{\tau }\) on \(L^2([0,1])\) is defined as the law of \(\big \{ \sqrt{\tau } \, W(t) \big \}_{t \in [0,1]}\) where \(\{ W(t) \}_{t \in [0,1]}\) is a standard Brownian bridge on \([0,1]\) drawn from \(\mathbb {W}_{0 \rightarrow 0}\).
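Because \(C^{-1}=-\frac{d^2}{ds^2}\) with Dirichlet conditions, its eigenfunctions are \(\varphi _k(s)=\sqrt{2}\sin (k\pi s)\) with eigenvalues \(k^2\pi ^2\), so \(C\) has eigenvalues \((k\pi )^{-2}\). This yields the Karhunen–Loève expansion \(W(s)=\sum _{k\ge 1}\zeta _k\varphi _k(s)/(k\pi )\), \(\zeta _k\) i.i.d. \(\mathrm N (0,1)\), of the standard Brownian bridge, and a draw from \(\mathbb {W}^{\tau }_{0 \rightarrow 0}\) is obtained by multiplying by \(\sqrt{\tau }\). The minimal sketch below (the truncation level and function names are hypothetical choices) samples from the truncated expansion and checks its variance against the bridge variance \(s(1-s)\):

```python
import numpy as np


def kl_bridge_variance(s, n_modes):
    """Variance at the points s of the n_modes-term Karhunen-Loeve truncation
    of a standard Brownian bridge: sum_k 2 sin^2(k pi s) / (k pi)^2."""
    k = np.arange(1, n_modes + 1)[:, None]
    return np.sum(2.0 * np.sin(k * np.pi * s) ** 2 / (k * np.pi) ** 2, axis=0)


def sample_bridge_kl(s, n_modes, tau, rng):
    """Draw sqrt(tau) * W(s), with W a standard Brownian bridge, from the truncated
    expansion W(s) = sum_k zeta_k * sqrt(2) sin(k pi s) / (k pi), zeta_k iid N(0, 1)."""
    k = np.arange(1, n_modes + 1)
    zeta = rng.standard_normal(n_modes)
    basis = np.sqrt(2.0) * np.sin(np.pi * np.outer(s, k))   # phi_k(s_j), shape (len(s), n_modes)
    return np.sqrt(tau) * basis @ (zeta / (k * np.pi))      # scale by sqrt of eigenvalues of C


s = np.linspace(0.0, 1.0, 11)
print(np.max(np.abs(kl_bridge_variance(s, 20000) - s * (1.0 - s))))
```

The truncation error in the variance is at most \(\sum _{k>K}2/(k\pi )^2 \le 2/(\pi ^2 K)\) for \(K\) modes, so the expansion converges slowly but uniformly in \(s\).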

The posterior distribution \(\pi ^{\tau }(dx)\) is defined by the change of probability formula
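
$$\begin{aligned} \frac{d\pi ^{\tau }}{d\pi ^{\tau }_0}(x) \propto \exp \bigl (-\tau ^{-1}{\varPsi }(x)\bigr ), \qquad \pi ^{\tau }_0=\mathbb {W}_{0 \rightarrow 0}^{\tau }, \qquad {\varPsi }(x)=\frac{\lambda }{4}\int _0^1 \bigl (x(s)^2-1\bigr )^2 \, ds . \end{aligned}$$
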
Notice that \(\pi ^{\tau }_0\bigl (H^1_0([0,1])\bigr ) =\pi ^{\tau }\bigl (H^1_0([0,1])\bigr ) = 0\) since a Brownian bridge is almost surely not differentiable anywhere on \([0,1]\). For this reason, the algorithm is implemented on \(L^2([0,1])\) even though the functional \(J(\cdot )\) is defined on the Sobolev space \(H^1_0([0,1])\). In terms of Assumptions 1(1) we have \(\kappa =1\) and the measure \(\pi ^{\tau }_0\) is supported on \(\mathcal{H}^r\) if and only if \(r<\frac{1}{2}\), see Remark 1; note also that \(H^1_0([0,1])=\mathcal{H}^1.\) Assumption 1(2) is satisfied for any choice \(s \in [\frac{1}{4},\frac{1}{2})\) because \(\mathcal{H}^s\) is embedded into \(L^4([0,1])\) for \(s \ge \frac{1}{4}.\) Assumptions 1(3-4), however, hold only locally, on bounded sets, rather than globally. Nevertheless, the numerical results below indicate that the theory developed in this paper remains relevant, and it could be extended, with considerable further work, to versions of Assumptions 1(3-4) that hold only locally.
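A short computation shows where the threshold \(r<\frac{1}{2}\) comes from. It assumes, as for the Hilbert scale built from \(C\), that \(\Vert x\Vert ^2_{\mathcal{H}^r}=\sum _{k\ge 1} k^{2r}\langle x,\varphi _k\rangle ^2\) with \(\varphi _k(s)=\sqrt{2}\sin (k\pi s)\), the eigenfunctions of \(C^{-1}=-\frac{d^2}{ds^2}\), whose eigenvalues are \(k^2\pi ^2\). Under \(\pi ^{\tau }_0\) the coefficients \(\langle x,\varphi _k\rangle \) are independent \(\mathrm N (0,\tau k^{-2}\pi ^{-2})\) random variables, so that

$$\begin{aligned} \mathbb {E}^{\pi ^{\tau }_0}\Vert x\Vert ^2_{\mathcal{H}^r} = \sum _{k\ge 1} k^{2r}\,\frac{\tau }{k^2\pi ^2} = \frac{\tau }{\pi ^2}\sum _{k\ge 1} k^{2r-2}, \end{aligned}$$

which is finite if and only if \(2r-2<-1\), that is \(r<\frac{1}{2}\). Finiteness of this expectation gives the 'if' direction; for \(r\ge \frac{1}{2}\) the random series \(\sum _k k^{2r}\langle x,\varphi _k\rangle ^2\) diverges almost surely, which gives the converse.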

Following Sect. 3.1, the pCN Markov chain at temperature \(\tau >0\) and time discretization \(\delta >0\) proposes moves from \(x\) to \(y\) according to
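
$$\begin{aligned} y=\bigl (1-2\delta \bigr )^{\frac{1}{2}}x+\sqrt{2\delta \tau }\,\xi \end{aligned}$$
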
where \(\xi \in C([0,1], \mathbb {R})\) is a standard Brownian bridge on \([0,1]\). The move \(x \rightarrow y\) is accepted with probability \(\alpha ^{\delta }(x,\xi ) = 1 \wedge \exp \big ( -\tau ^{-1}[{\varPsi }(y) - {\varPsi }(x)]\big )\). Figure 2 displays the convergence of the Markov chain \(\{x^{k,\delta }\}_{k \ge 0}\) to a minimizer of the functional \(J(\cdot )\). Note that this convergence is not shown with respect to the space \(H^1_0([0,1])\) on which \(J\) is defined, but rather in \(L^2([0,1])\); indeed \(J(\cdot )\) is almost surely infinite when evaluated at samples of the pCN algorithm, precisely because \(\pi ^{\tau }_0\bigl (H^1_0([0,1])\bigr ) = 0,\) as discussed above.

Fig. 2 pCN parameters: \(\lambda = 2\pi ^2\), \(\delta = 10^{-2}\), \(\tau = 10^{-2}\). The algorithm is started at the zero function, \(x^{0,\delta }(t) = 0\) for \(t \in [0,1]\). After a transient phase, the algorithm fluctuates around a global minimizer of the functional \(J(\cdot )\). The \(L^2\) error \(\Vert x^{k,\delta } - (\text {minimizer})\Vert _{L^2}\) is plotted as a function of the algorithmic time \(k\)
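The pCN chain described above can be sketched in a few lines of Python with NumPy. This is a minimal sketch under assumptions not fixed by the text: \([0,1]\) is discretized on a uniform grid of `n_grid + 1` points; \(\xi \) is drawn exactly at the grid points via \(W(s)=B(s)-sB(1)\) with \(B\) a standard Brownian motion; \({\varPsi }\) is taken to be the non-quadratic part of (61), \(\frac{\lambda }{4}\int _0^1 (x^2-1)^2\,ds\), approximated by the trapezoidal rule; and the function names are hypothetical.

```python
import numpy as np


def brownian_bridge(n, rng):
    """Standard Brownian bridge at s_j = j/n, j = 0..n, via W(s) = B(s) - s B(1)."""
    s = np.linspace(0.0, 1.0, n + 1)
    b = np.concatenate(([0.0], np.cumsum(rng.normal(0.0, np.sqrt(1.0 / n), size=n))))
    return b - s * b[-1]


def psi(x, lam):
    """Assumed Psi(x) = (lam/4) int_0^1 (x^2 - 1)^2 ds, trapezoidal rule on a uniform grid."""
    f = 0.25 * lam * (x**2 - 1.0) ** 2
    h = 1.0 / (x.size - 1)
    return h * (f.sum() - 0.5 * (f[0] + f[-1]))


def pcn_chain(lam, tau, delta, n_grid, n_steps, seed=0):
    """Run the pCN Metropolis-Hastings chain started at the zero function.

    Returns the states, shape (n_steps + 1, n_grid + 1), and the acceptance rate.
    """
    rng = np.random.default_rng(seed)
    x = np.zeros(n_grid + 1)
    psi_x = psi(x, lam)
    chain = np.empty((n_steps + 1, n_grid + 1))
    chain[0] = x
    n_accept = 0
    for k in range(n_steps):
        xi = brownian_bridge(n_grid, rng)                              # xi ~ N(0, C)
        y = np.sqrt(1.0 - 2.0 * delta) * x + np.sqrt(2.0 * delta * tau) * xi
        psi_y = psi(y, lam)
        # accept with probability 1 ∧ exp(-(Psi(y) - Psi(x)) / tau)
        if rng.uniform() < np.exp(min(0.0, -(psi_y - psi_x) / tau)):
            x, psi_x = y, psi_y
            n_accept += 1
        chain[k + 1] = x
    return chain, n_accept / n_steps


chain, acc = pcn_chain(lam=2 * np.pi**2, tau=1e-2, delta=1e-2, n_grid=100, n_steps=4000, seed=1)
print(acc, np.sqrt(np.mean(chain[-1] ** 2)))   # acceptance rate, L^2 norm of the last state
```

With the Fig. 2 parameters the chain, started at the saddle \(x\equiv 0\), should drift away from it and settle near one of the two minimizers \(\pm x^*\); monitoring the \(L^2\) norm of \(x^{k,\delta }\) is a simple diagnostic. Only \({\varPsi }\) and Gaussian draws are used, never the gradient of \(J\).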

Of course the algorithm does not converge exactly to a minimizer of \(J(\cdot ),\) but fluctuates in a neighborhood of it. As described in the introduction of this article, in a finite dimensional setting the target probability distribution \(\pi ^{\tau }\) has Lebesgue density proportional to \(\exp \big ( -\tau ^{-1} \, J(x)\big )\). This suggests, heuristically, that the fluctuations around the minimizer of the functional \(J(\cdot )\) have size proportional to \(\sqrt{\tau }\). Figure 3 shows this phenomenon on log-log scales: the asymptotic mean error \(\mathbb {E}\big [ \Vert x - (\text {minimizer})\Vert _{L^2}\big ]\) is displayed as a function of the temperature \(\tau \). Figure 4 illustrates Theorem 15: it shows the path \(\{ v^{\delta }(t) \}_{t \in [0,T]}\) for a finite time step discretization parameter \(\delta \), together with the limiting path \(\{v(t)\}_{t \in [0,T]}\), which solves the differential Eq. (58).

Fig. 4 pCN parameters: \(\lambda =2 \,\pi ^2\), \(\tau = 10^{-1}\), \(\delta = 10^{-3}\); the algorithm starts at \(x^{0,\delta }=0\). The rescaled quadratic variation process (full line) behaves as the solution of the differential equation (dotted line), as predicted by Theorem 15. The quadratic variation converges to \(\tau \), as described by Proposition 14
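The \(\sqrt{\tau }\) scaling of the fluctuations discussed above can be made heuristically precise, in finite dimensions only, by a second order (Laplace) expansion. Suppose \(x^*\) is a nondegenerate minimizer of \(J\), with positive definite Hessian \(H=D^2J(x^*)\). Since \(DJ(x^*)=0\),

$$\begin{aligned} \exp \bigl (-\tau ^{-1}J(x)\bigr ) \approx \exp \bigl (-\tau ^{-1}J(x^*)\bigr )\,\exp \Bigl (-\frac{1}{2\tau }\bigl \langle x-x^*,H(x-x^*)\bigr \rangle \Bigr ), \end{aligned}$$

so that near \(x^*\) the target is approximately the Gaussian \(\mathrm N (x^*,\tau H^{-1})\). Writing \(x-x^*\approx \sqrt{\tau }\,H^{-1/2}\zeta \) with \(\zeta \sim \mathrm N (0,I)\) gives

$$\begin{aligned} \mathbb {E}\Vert x-x^*\Vert \approx \sqrt{\tau }\;\mathbb {E}\Vert H^{-1/2}\zeta \Vert , \end{aligned}$$

which is proportional to \(\sqrt{\tau }\), the scaling reported in Fig. 3. Since \(J\) has two minimizers \(\pm x^*\), this local picture applies around whichever of them the chain is exploring.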

7 Conclusion

There are two useful perspectives on the material contained in this paper, one concerning optimization and one concerning statistics. We now detail these perspectives.

- Optimization. We have demonstrated a class of algorithms to minimize the functional \(J\) given by (1). Assumptions 1 encode the intuition that the quadratic part of \(J\) dominates. Under these assumptions we study the properties of an algorithm which requires only the evaluation of \({\varPsi }\) and the ability to draw samples from Gaussian measures with Cameron-Martin norm given by the quadratic part of \(J\). We demonstrate that, in a certain parameter limit, the algorithm behaves like a noisy gradient flow for the functional \(J\) and that, furthermore, the size of the noise can be controlled systematically. The advantage of constructing algorithms on Hilbert space is that they are robust to finite dimensional approximation. We turn to this point in the next bullet.

- Statistics. The algorithm that we use is a Metropolis–Hastings method with an Ornstein–Uhlenbeck proposal, which we refer to here as pCN, as in [14]. The proposal takes the form, for \(\xi \sim \mathrm N (0,C)\),

$$\begin{aligned} y=\bigl (1-2\delta \bigr )^{\frac{1}{2}}x+\sqrt{2\delta \tau }\xi , \end{aligned}$$
as given in (5). The proposal is constructed in such a way that the algorithm is defined on infinite dimensional Hilbert space and may be viewed as a natural analogue of a random walk Metropolis–Hastings method for measures defined via density with respect to a Gaussian. It is instructive to contrast this with the standard random walk method S-RWM, with proposal

$$\begin{aligned} y=x+\sqrt{2\delta \tau }\xi . \end{aligned}$$
Although the proposal for S-RWM differs only through a multiplicative factor in the systematic component, and thus implementation of either is practically identical, the S-RWM method is not defined on infinite dimensional Hilbert space. This turns out to matter if we compare both methods when applied in \({\mathbb R}^N\) for \(N \gg 1\), as would occur if approximating a problem in infinite dimensional Hilbert space: in this setting the S-RWM method requires the choice \(\delta =\mathcal{O}(N^{-1})\) to see the diffusion (SDE) limit [2] and so requires \(\mathcal{O}(N)\) steps to see \(\mathcal{O}(1)\) decrease in the objective function, or to draw independent samples from the target measure; in contrast the pCN produces a diffusion limit for \(\delta \rightarrow 0\) independently of \(N\) and so requires \(\mathcal{O}(1)\) steps to see \(\mathcal{O}(1)\) decrease in the objective function, or to draw independent samples from the target measure. Mathematically this last point is manifest in the fact that we may take the limit \(N \rightarrow \infty \) (and thus work on the infinite dimensional Hilbert space) followed by the limit \(\delta \rightarrow 0.\)
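The contrast between pCN and S-RWM can be seen in the simplest case \({\varPsi }=0\), where the target is the Gaussian \(\mathrm N (0,\tau C)\) itself. The minimal sketch below assumes, for illustration, that \(C\) has the Wiener bridge eigenvalues \((k\pi )^{-2}\) of Sect. 6 and works in its eigenbasis truncated to \(N\) modes; the function name is hypothetical. pCN is reversible with respect to \(\mathrm N (0,\tau C)\), so every proposal is accepted whatever \(N\) is. For S-RWM at stationarity, a direct calculation shows the log acceptance ratio has mean \(-\delta N\), so at fixed \(\delta \) the acceptance rate collapses as \(N\) grows:

```python
import numpy as np


def acceptance_rate(method, n_dim, delta, tau=1.0, n_steps=2000, seed=0):
    """Empirical acceptance rate of pCN or S-RWM for the Gaussian target N(0, tau*C)
    (the case Psi = 0), in the eigenbasis of C with eigenvalues (k*pi)^(-2)."""
    rng = np.random.default_rng(seed)
    lam_c = 1.0 / (np.pi * np.arange(1, n_dim + 1)) ** 2      # eigenvalues of C

    def neg_log_target(x):
        return 0.5 * np.sum(x**2 / lam_c) / tau               # ||x||_C^2 / (2 tau)

    x = np.sqrt(tau * lam_c) * rng.standard_normal(n_dim)      # start in stationarity
    n_accept = 0
    for _ in range(n_steps):
        xi = np.sqrt(lam_c) * rng.standard_normal(n_dim)       # xi ~ N(0, C)
        if method == "pcn":
            y = np.sqrt(1.0 - 2.0 * delta) * x + np.sqrt(2.0 * delta * tau) * xi
            log_alpha = 0.0   # reversible w.r.t. N(0, tau*C); with Psi = 0 nothing to correct
        elif method == "srwm":
            y = x + np.sqrt(2.0 * delta * tau) * xi
            log_alpha = min(0.0, neg_log_target(x) - neg_log_target(y))  # symmetric proposal
        else:
            raise ValueError(f"unknown method {method!r}")
        if rng.uniform() < np.exp(log_alpha):
            x = y
            n_accept += 1
    return n_accept / n_steps


for n in (10, 5000):
    print(n, acceptance_rate("pcn", n, 0.01), acceptance_rate("srwm", n, 0.01))
```

Restoring a non-degenerate S-RWM acceptance rate requires shrinking \(\delta \) like \(N^{-1}\), which is the \(\mathcal{O}(N)\) cost described above.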

The methods that we employ for the derivation of the diffusion (SDE) limit use a combination of ideas from numerical analysis and the weak convergence of probability measures. This approach is encapsulated in Lemma 6 which is structured in such a way that it, or variants of it, may be used to prove diffusion limits for a variety of problems other than the one considered here.

References

Tierney, L.: A note on Metropolis–Hastings kernels for general state spaces. Ann. Appl. Probab. 8(1), 1–9 (1998)

Mattingly, J., Pillai, N., Stuart, A.: SPDE limits of the random walk Metropolis algorithm in high dimensions. Ann. Appl. Probab. (2011)

Pillai, N.S., Stuart, A.M., Thiery, A.H.: Optimal scaling and diffusion limits for the Langevin algorithm in high dimensions. Ann. Appl. Probab. 22(6), 2320–2356 (2012). doi:10.1214/11-AAP828

Kirkpatrick, S., Gelatt Jr., C.D., Vecchi, M.P.: Optimization by simulated annealing. Science 220(4598), 671–680 (1983)

Černý, V.: Thermodynamical approach to the traveling salesman problem: an efficient simulation algorithm. J. Optim. Theory Appl. 45(1), 41–51 (1985)

Geman, D.: Bayesian image analysis by adaptive annealing. IEEE Trans. Geosci. Remote Sens. 1, 269–276 (1985)

Geman, S., Hwang, C.: Diffusions for global optimization. SIAM J. Control Optim. 24, 1031 (1986)

Chiang, T., Hwang, C., Sheu, S.: Diffusion for global optimization in \(\mathbb {R}^n\). SIAM J. Control Optim. 25(3), 737–753 (1987)

Holley, R., Kusuoka, S., Stroock, D.: Asymptotics of the spectral gap with applications to the theory of simulated annealing. J. Funct. Anal. 83(2), 333–347 (1989)

Bertsimas, D., Tsitsiklis, J.: Simulated annealing. Stat. Sci. 8(1), 10–15 (1993)

Hinze, M., Pinnau, R., Ulbrich, M., Ulbrich, S.: Optimization with PDE Constraints. Springer, New York (2008)

Da Prato, G., Zabczyk, J.: Ergodicity for Infinite Dimensional Systems. Cambridge University Press, Cambridge (1996)

Beskos, A., Roberts, G., Stuart, A., Voss, J.: An MCMC method for diffusion bridges. Stoch. Dyn. 8(3), 319–350 (2008)

Cotter, S., Roberts, G.O., Stuart, A., White, D.: MCMC methods for functions: modifying old algorithms to make them faster. Stat. Sci.

Hairer, M., Stuart, A.M., Voss, J.: Analysis of SPDEs arising in path sampling. Part II: the nonlinear case. Ann. Appl. Probab. 17(5–6), 1657–1706 (2007)

Dashti, M., Law, K., Stuart, A., Voss, J.: MAP estimators and their consistency in Bayesian nonparametric inverse problems. Inverse Problems

Da Prato, G., Zabczyk, J.: Stochastic Equations in Infinite Dimensions, Encyclopedia of Mathematics and Its Applications, vol. 44. Cambridge University Press, Cambridge (1992)

Stuart, A.: Inverse problems: a Bayesian perspective. Acta Numer. 19, 451–559 (2010)

Hairer, M., Stuart, A.M., Voss, J.: Signal processing problems on function space: Bayesian formulation, stochastic PDEs and effective MCMC methods. In: Crisan, D., Rozovsky, B. (eds.) The Oxford Handbook of Nonlinear Filtering (2010). To appear

Cotter, S., Dashti, M., Stuart, A.: Variational data assimilation using targetted random walks. Int. J. Numer. Methods Fluids (2011). doi:10.1002/fld.2510

Stroock, D.W., Varadhan, S.R.S.: Multidimensional Diffusion Processes, vol. 233. Springer, New York (1979)

Ethier, S.N., Kurtz, T.G.: Markov Processes: Characterization and Convergence. Wiley Series in Probability and Mathematical Statistics. Wiley, New York (1986)

Kupferman, R., Stuart, A., Terry, J., Tupper, P.: Long-term behaviour of large mechanical systems with random initial data. Stoch. Dyn. 2(4), 533–562 (2002)

Berger, E.: Asymptotic behaviour of a class of stochastic approximation procedures. Probab. Theory Relat. Fields 71(4), 517–552 (1986)

Henry, D.: Geometric Theory of Semilinear Parabolic Equations, vol. 61. Springer, New York (1981)

Hairer, M., Stuart, A.M., Voss, J., Wiberg, P.: Analysis of SPDEs arising in path sampling. Part I: the Gaussian case. Comm. Math. Sci. 3, 587–603 (2005)

Chorin, A., Hald, O.: Stochastic Tools in Mathematics and Science. Springer, New York (2006)

Acknowledgments

The authors thank an anonymous referee for constructive comments. We are grateful to David Dunson for his comments on the implications of the theory, and to Frank Pinski for helpful discussions concerning the behaviour of the quadratic variation; these discussions crystallized the need to prove Theorem 15. NSP gratefully acknowledges the NSF grant DMS 1107070. AMS is grateful to EPSRC and ERC for financial support. Parts of this work were done when AHT was visiting the Department of Statistics at Harvard University. The authors thank the Department of Statistics, Harvard University, for its hospitality.


Appendices

Appendix 1: Proofs of lemmas; Section 4

Proof

(Proof of Lemma 10) Let us introduce the two \(1\)-Lipschitz functions \(h,h_*:\mathbb {R}\rightarrow \mathbb {R}\) defined by

The function \(h_*\) is a first order approximation of \(h\) in a neighborhood of zero and we have