Abstract

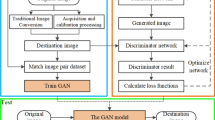

Image enhancement aims to improve image quality in terms of contrast, detail, and color by adjusting the colors of an image to match the distribution of a high-quality domain. Because images captured by portable devices often suffer from noise and color bias, this paper proposes a novel bi-directional normalization and color attention-guided generative adversarial network (BNCAGAN) for unsupervised image enhancement. Specifically, the bi-directional normalization generator is built from a feature encoder, an auxiliary attention classifier (AAC), a bi-directional normalization residual (BNR) module, and a feature fusion decoder. With the aid of the AAC and BNR modules, the generator can flexibly control the global style, local details, and color transformation constraints from the low-quality and high-quality domains. To improve the visual effect, a spatial color correction module is proposed to help the multi-scale discriminator focus on color fidelity. In addition, a mixed loss, comprising a content retention loss and an identity fidelity loss, preserves structural features while fitting the high-quality domain distribution. Extensive experiments on the MIT-Adobe FiveK dataset and the DSLR photograph enhancement dataset show that BNCAGAN outperforms existing methods and improves both the authenticity and naturalness of low-quality images; it can therefore be widely used as a preprocessing step for image retrieval to improve the understanding of image semantics. The source code is available at https://github.com/SWU-CS-MediaLab/BNCAGAN.

Change history

22 March 2024

The copyright year has been changed from 2023 to 2024.

Author information

Contributions

Shan Liu: Conceptualization, Methodology, Software, Investigation, Writing (Original Draft). ShiHao Shan: Methodology, Software. GuoQiang Xiao: Methodology, Software. XinBo Gao: Writing (Review & Editing). Song Wu: Conceptualization, Methodology, Software, Writing (Review & Editing).

Ethics declarations

Conflict of interest

The authors declare no competing interests.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Liu, S., Shan, S., Xiao, G. et al. Image enhancement with bi-directional normalization and color attention-guided generative adversarial networks. Int J Multimed Info Retr 13, 1 (2024). https://doi.org/10.1007/s13735-023-00310-8

DOI: https://doi.org/10.1007/s13735-023-00310-8