Abstract

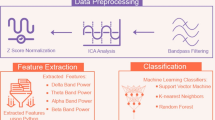

The objective of the proposed research is to classify electroencephalography (EEG) data of covert speech words. Six subjects were asked to perform covert speech tasks, that is, mental repetition of four different words: ‘left’, ‘right’, ‘up’ and ‘down’. Fifty trials of each word were recorded for every subject. A kernel-based Extreme Learning Machine (kernel ELM) was used for multiclass and binary classification of the EEG signals of covert speech words. We achieved maximum multiclass and binary classification accuracies of 49.77% and 85.57%, respectively. The kernel ELM achieves significantly higher accuracy than some of the most commonly used classification algorithms in Brain–Computer Interfaces (BCIs). Our findings suggest that covert speech EEG signals can be successfully classified using kernel ELM. This research on the classification of covert speech words could potentially lead to real-time silent speech BCI research.
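As a rough illustration of the classifier named above, the following is a minimal sketch of a kernel ELM using the standard closed-form solution, in which the output weights are obtained by solving (K + I/C) β = T for a kernel matrix K and one-vs-all targets T. It is not the authors' implementation: the Gaussian (RBF) kernel, the values of `C` and `gamma`, the feature dimension, and the synthetic four-class data standing in for EEG features are all assumptions made here for demonstration, and the names `KernelELM`, `rbf_kernel` and `demo_accuracy` are hypothetical.

```python
import numpy as np


def rbf_kernel(A, B, gamma):
    """Gaussian (RBF) kernel matrix between the rows of A and the rows of B."""
    sq_dists = (
        np.sum(A ** 2, axis=1)[:, None]
        + np.sum(B ** 2, axis=1)[None, :]
        - 2.0 * A @ B.T
    )
    # Clip tiny negative values caused by floating-point round-off.
    return np.exp(-gamma * np.maximum(sq_dists, 0.0))


class KernelELM:
    """Kernel ELM sketch; C and gamma are assumed hyperparameters."""

    def __init__(self, C=1.0, gamma=1.0):
        self.C = C          # regularisation strength
        self.gamma = gamma  # RBF kernel width

    def fit(self, X, y):
        self.X_train = np.asarray(X, dtype=float)
        self.classes_ = np.unique(y)
        # One-vs-all targets: +1 for the true class, -1 for the others.
        T = np.where(np.asarray(y)[:, None] == self.classes_[None, :], 1.0, -1.0)
        K = rbf_kernel(self.X_train, self.X_train, self.gamma)
        # Closed-form output weights: solve (K + I/C) beta = T.
        self.beta = np.linalg.solve(K + np.eye(K.shape[0]) / self.C, T)
        return self

    def decision_function(self, X):
        K = rbf_kernel(np.asarray(X, dtype=float), self.X_train, self.gamma)
        return K @ self.beta

    def predict(self, X):
        # The class with the largest output score wins.
        return self.classes_[np.argmax(self.decision_function(X), axis=1)]


def demo_accuracy(seed=0):
    """Train and test on synthetic, well-separated clusters (not EEG data)."""
    rng = np.random.default_rng(seed)
    words = np.array(["left", "right", "up", "down"])
    n_trials, n_features = 50, 8  # 50 trials per word; 8 features is arbitrary
    X_parts, y_parts = [], []
    for k, word in enumerate(words):
        mean = np.zeros(n_features)
        mean[2 * k: 2 * k + 2] = 4.0  # shift two features per class
        X_parts.append(rng.normal(loc=mean, scale=1.0, size=(n_trials, n_features)))
        y_parts.append(np.full(n_trials, word))
    X, y = np.vstack(X_parts), np.concatenate(y_parts)
    order = rng.permutation(len(y))
    train, test = order[:150], order[150:]
    model = KernelELM(C=10.0, gamma=0.1).fit(X[train], y[train])
    return float(np.mean(model.predict(X[test]) == y[test]))


print(demo_accuracy())
```

The same class handles the binary case unchanged: fitting on trials from only two words yields a two-column target matrix. Because the synthetic clusters are deliberately easy to separate, the accuracy printed here says nothing about the difficulty of real covert speech EEG.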

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Ethical statement

All procedures performed in studies involving human participants were in accordance with the ethical standards of the institutional and/or national research committee and with the 1964 Helsinki declaration and its later amendments or comparable ethical standards.

Human participants informed consent

Informed consent was obtained from all human participants involved in the study.

About this article

Cite this article

Pawar, D., Dhage, S. Multiclass covert speech classification using extreme learning machine. Biomed. Eng. Lett. 10, 217–226 (2020). https://doi.org/10.1007/s13534-020-00152-x

DOI: https://doi.org/10.1007/s13534-020-00152-x