Abstract

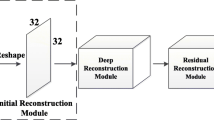

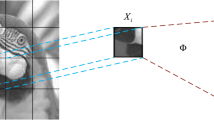

Detecting and reconstructing transparent objects remains challenging because such objects lack distinctive features and their local features change with illumination. In this paper, compressive sensing (CS) and a super-resolution convolutional neural network (SRCNN) are combined to image transparent objects. With the proposed method, the details of a transparent object are extracted accurately using a single-pixel detector during surface reconstruction. The images obtained from the experimental setup are low in quality because of speckle and deformations on the object. The SRCNN algorithm mitigates these drawbacks and produces visually plausible reconstructions: it locates the deformities in the resultant images and improves the image quality. In addition, compressive sensing reduces the number of measurements required for reconstruction, thereby reducing image post-processing and hardware requirements during network training. The results indicate that the visual quality of the reconstructed images improves from a structural similarity index (SSIM) value of 0.2 to 0.53. This work demonstrates that a compressive single-pixel imaging technique can image and reconstruct transparent objects, and that the SRCNN algorithm improves the image quality to a satisfactory level.
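The measurement reduction that compressive sensing offers can be illustrated with a small simulation. The sketch below is not the authors' pipeline: it assumes a scene that is sparse in the pixel basis, random ±1 illumination patterns (which a real modulator would typically realize as pairs of 0/1 masks), and orthogonal matching pursuit as a stand-in solver, since the abstract does not name the reconstruction algorithm. All function and variable names are hypothetical.

```python
import numpy as np

def measure(scene, patterns):
    """Simulate a single-pixel detector: one scalar reading per pattern."""
    return patterns @ scene.ravel()

def omp(patterns, readings, sparsity):
    """Orthogonal matching pursuit (assumed solver, not the paper's)."""
    residual = readings.copy()
    support = []
    coeffs = np.zeros(0)
    for _ in range(sparsity):
        # Pick the pixel whose pattern column best explains the residual.
        correlations = np.abs(patterns.T @ residual)
        if support:
            correlations[support] = 0.0  # never pick the same pixel twice
        support.append(int(np.argmax(correlations)))
        # Refit all selected pixels jointly by least squares.
        coeffs, *_ = np.linalg.lstsq(patterns[:, support], readings, rcond=None)
        residual = readings - patterns[:, support] @ coeffs
    estimate = np.zeros(patterns.shape[1])
    estimate[support] = coeffs
    return estimate

rng = np.random.default_rng(0)
n_pixels = 16 * 16      # flattened 16 x 16 scene (assumed size)
n_measurements = 128    # half as many readings as pixels
sparsity = 6            # number of bright pixels in the toy scene

# Toy scene: a handful of bright pixels on a dark background.
scene = np.zeros(n_pixels)
bright = rng.choice(n_pixels, size=sparsity, replace=False)
scene[bright] = rng.uniform(1.0, 2.0, size=sparsity)

# Random +/-1 patterns, scaled so each column has unit norm.
patterns = rng.choice([-1.0, 1.0], size=(n_measurements, n_pixels))
patterns /= np.sqrt(n_measurements)

readings = measure(scene, patterns)
recovered = omp(patterns, readings, sparsity)
relative_error = np.linalg.norm(recovered - scene) / np.linalg.norm(scene)
print(f"{n_measurements} readings for {n_pixels} pixels, "
      f"relative error {relative_error:.2e}")
```

In this toy setting the scene is recovered from half as many detector readings as there are pixels. Natural images are rarely sparse in the pixel basis, so a practical system would usually reconstruct in a transform domain and, as in the paper, rely on a subsequent enhancement stage such as SRCNN to raise the image quality.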

Acknowledgment

This research was funded by the Ministry of Higher Education, Malaysia (Grant No. FRGS/1/2020/ICT02/MUSM/02/1). The authors thank Monash University Malaysia for its support.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Mathai, A., Mengdi, L., Lau, S. et al. Transparent Object Reconstruction Based on Compressive Sensing and Super-Resolution Convolutional Neural Network. Photonic Sens 12, 220413 (2022). https://doi.org/10.1007/s13320-022-0653-x

DOI: https://doi.org/10.1007/s13320-022-0653-x