Abstract

Evolutionary neural networks (ENNs) are an adaptive approach that combines the adaptive mechanism of evolutionary algorithms (EAs) with the learning mechanism of artificial neural networks (ANNs). Because deep neural networks (DNNs) are difficult to design and develop, ENNs can optimize and supplement deep learning algorithms and may help build more powerful neural network systems. Many valuable conclusions and results have been obtained in this field, especially in the construction of automated deep learning systems. This study presents a systematic review of the literature on ENNs conducted using the PRISMA protocol. First, the basic principles and development background of ENNs are introduced. Second, the main research techniques are presented in terms of connection weights, architecture design, and learning rules; existing research results are summarized, and the advantages and disadvantages of the different methods are analyzed. Third, the key technologies and related research progress of ENNs are summarized. Finally, the applications of ENNs are summarized and directions for future work are proposed.

References

Hinton GE, Salakhutdinov RR (2006) Reducing the dimensionality of data with neural networks. Science 313(5786):504–507

Yang F, Zhang L, Yu S et al (2020) Feature pyramid and hierarchical boosting network for pavement crack detection. IEEE Trans Intell Transp Syst 21(4):1525–1535

Guo Y, Liu Y, Oerlemans A et al (2016) Deep learning for visual understanding: a review. Neurocomputing 187:27–48

Ahmad F, Abbasi A, Li J et al (2020) A deep learning architecture for psychometric natural language processing. ACM Trans Inf Syst (TOIS) 38(1):1–29

Knoll F, Hammernik K, Yi Z (2019) Assessment of the generalization of learned image reconstruction and the potential for transfer learning. Magn Reson Med 81(1):116–128

Mahindru A, Sangal AL (2021) MLDroid—framework for Android malware detection using machine learning techniques. Neural Comput Appl 33:5183–5240

Mahindru A, Sangal AL (2021) DeepDroid: feature selection approach to detect android malware using deep learning. In: Proceedings of the 2019 IEEE 10th International Conference on software engineering and service science (ICSESS). https://doi.org/10.1109/ICSESS47205.2019.9040821

Mahindru A, Sangal AL (2020) PerbDroid: effective malware detection model developed using machine learning classification techniques. J Towards Bio-inspir Tech Softw Eng. https://doi.org/10.1007/978-3-030-40928-9_7

Sun Y, Yen GG et al (2019) Evolving unsupervised deep neural networks for learning meaningful representations. IEEE Trans Evol Comput 23(1):89–103

Liang J, Meyerson E, Hodjat B, Fink D, Mutch K, Miikkulainen R (2019) Evolutionary neural AutoML for deep learning. In: Proceedings of the genetic and evolutionary computation conference (GECCO), pp 401–409

Fernandez-Blanco E, Rivero D, Gestal M et al (2015) A Hybrid evolutionary system for automated artificial neural networks generation and simplification in biomedical applications. Curr Bioinform 10(5):672–691

Lehman J, Chen J, Clune J, Stanley KO (2018) Safe mutations for deep and recurrent neural networks through output gradients. In: Proceedings of the genetic and evolutionary computation conference (GECCO), pp 117–124

Lu G, Li J, Yao X (2014) Fitness landscapes and problem difficulty in evolutionary algorithms: from theory to applications. In: Richter H (ed) Recent advances in the theory and application of fitness landscapes. Springer Berlin Heidelberg, Berlin, pp 133–152

Whitley D (2001) An overview of evolutionary algorithms: practical issues and common pitfalls. Inf Softw Technol 43(14):817–831

Stanley KO, Clune J, Lehman J et al (2019) Designing neural networks through neuroevolution. Nat Mach Intell 1(1):24–35

Alvaro M, Joaquin D, Ronald J et al (2002) Systematic learning of gene functional classes from DNA array expression data by using multilayer perceptrons. Genome Res 12(11):1703–1715

Rumelhart DE, Hinton GE, Williams RJ (1986) Learning representations by back-propagating errors. Nature 323(6088):533–536

Krizhevsky A, Sutskever I, Hinton GE (2017) ImageNet classification with deep convolutional neural networks. Commun ACM 60(6):84–90

Zeiler MD, Fergus R (2013) Visualizing and understanding convolutional networks. arXiv preprint, arXiv:1311.2901

Simonyan K, Zisserman A (2014) very deep convolutional networks for large-scale image recognition. arXiv preprint, arXiv 1409.1556

Szegedy C, Liu W, Jia Y, Sermanet P, Reed S, Anguelov D, Erhan D, Vanhoucke V, Rabinovich A (2015) Going deeper with convolutions. In: Proceedings of the IEEE conference on computer vision and pattern recognition (CVPR)

He K, Zhang X, Ren S, Sun J (2016) Deep residual learning for image recognition. In: Proceedings of the IEEE conference on computer vision and pattern recognition (CVPR)

Silver D, Huang A, Maddison CJ et al (2016) Mastering the game of Go with deep neural networks and tree search. Nature 529(7587):484–489

He KM, Sun J (2015) Convolutional neural networks at constrained time cost. In: Proceedings of the IEEE conference on computer vision and pattern recognition (CVPR)

Lecun Y, Bengio Y, Hinton G (2015) Deep learning. Nature 521(7553):436

Ding S, Li H, Su C et al (2013) Evolutionary artificial neural networks: a review. Artif Intell Rev 39(3):251–260

Jin Y, Jürgen B (2005) Evolutionary optimization in uncertain environments-a survey. IEEE Trans Evol Comput 9(3):303–317

Sharkey N (2002) Evolutionary computation: the fossil record. IEE Rev 45(1):40–40

Sun Y, Xue B, Zhang M (2017) Evolving deep convolutional neural networks for image classification. IEEE Trans Evol Comput 24(2):394–407

Bonissone PP, Subbu R, Eklund N et al (2006) Evolutionary algorithms + domain knowledge = real-world evolutionary computation. IEEE Trans Evol Comput 10(3):256–280

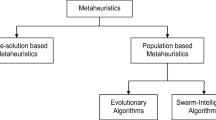

Ojha VK, Abraham A, Snasel V (2017) Metaheuristic design of feedforward neural networks: a review of two decades of research. Eng Appl Artif Intell 60:97–116

Grefenstette JJ (1988) Genetic algorithms and machine learning. Mach Learn 3(2):95–99

Hansen N (2006) The CMA evolution strategy: a comparing review. Stud Fuzziness Soft Comput 192:75–102

Banzhaf W, Koza JR (2000) Genetic programming. IEEE Intell Syst 15(3):74–84

Lehman J, Miikkulainen R (2013) Neuroevolution. Scholarpedia 8(6):30977

Dufourq E, Bassett B (2017) Automated problem identification: regression vs classification via evolutionary deep networks. In: Proceedings of the South African institute of computer scientists and information technologists

Sharma D, Deb K et al (2011) Domain-specific initial population strategy for compliant mechanisms using customized genetic algorithm. Struct Multidiscip Optim 43(4):541–554

Madeira JA, Rodrigues HC et al (2006) Multiobjective topology optimization of structures using genetic algorithms with chromosome repairing. Struct Multidiscip Optim 32(1):31–39

Lorenzo PR, Nalepa J (2018) Memetic evolution of deep neural networks. In: Proceedings of the genetic and evolutionary computation conference (GECCO)

Liu Y, Sun Y, Xue B, Zhang M, Yen GG, Tan KC (2021) A survey on evolutionary neural architecture search. IEEE Trans Neural Netw Learn Syst. https://doi.org/10.1109/TNNLS.2021.3100554

Sapra D, Pimentel AD (2020) An evolutionary optimization algorithm for gradually saturating objective functions. In: Proceedings of GECCO

Montana D, Davis L et al (1989) Training feedforward neural networks using genetic algorithms. In: Proceedings of the international joint conference on Artificial intelligence (IJCAI). vol 1, pp 762–767

Yao X (1999) Evolving artificial neural networks. In: Proceedings of the IEEE, 87(9):1423–1447

Lehman J, Chen J, Clune J et al (2018) ES is more than just a traditional finite-difference approximator. In: Proceedings of the genetic and evolutionary computation conference (GECCO)

Salimans T, Ho J, Chen X, et al. (2017) evolution strategies as a scalable alternative to reinforcement learning. arXiv preprint, arXiv:1703.03864

Such F P, Madhavan V, Conti E, et al. (2017) Deep neuroevolution: genetic algorithms are a competitive alternative for training deep neural networks for reinforcement learning. arXiv preprint, arXiv: 1712.06567

Singh GAP, Gupta PK (2018) Performance analysis of various machine learning-based approaches for detection and classification of lung cancer in humans. Neural Comput Appl 31:6863–6877

Cui X, Zhang W, Tüske Z, Picheny M (2018) Evolutionary stochastic gradient descent for optimization of deep neural networks. In: Proceedings of the 32nd international conference on neural information processing systems (NIPS)

Khadka S, Tumer K (2018) Evolution-guided policy gradient in reinforcement learning. In: In: Proceedings of the 32nd international conference on neural information processing systems (NIPS)

Houthooft R, Chen Y, Isola P, Stadie B, Wolski F, Jonathan H, OpenAI, Abbeel P (2018) Evolved policy gradients. In: Proceedings of the 32nd international conference on neural information processing systems (NIPS)

Yang S, Tian Y, He C, Zhang X, Tan KC, Jin Y (2021) A Gradient-guided evolutionary approach to training deep neural networks. IEEE Trans Neural Netw Learn Syst. https://doi.org/10.1109/TNNLS.2021.3061630

Liu H, Simonyan K, Vinyals O, et al (2018) Genetic programming approach to designing convolutional Architecture Search. In: Proceedings of the ICLR

Hu H, Peng R, Tai YW, et al (2016) Network trimming: a data-driven neuron pruning approach towards efficient deep architectures. arXiv preprint, arXiv:1607.03250

Liu J, Gong M, Miao Q et al (2018) Structure learning for deep neural networks based on multiobjective optimization. IEEE Trans Neural Netw Learn Syst 29(99):2450–2463

Kim YH, Reddy B, Yun S, Seo C (2017) Nemo: neuro-evolution with multiobjective optimization of deep neural network for speed and accuracy. In: Proceedings of the international conference on machine learning (ICML)

Zhou Y, Jin Y, Ding J (2020) Surrogate-assisted evolutionary search of spiking neural architectures in liquid state machines. Neurocomputing 406:12–23

Probst P, Bischl B, Boulesteix AL (2018) Tunability: Importance of hyperparameters of machine learning algorithms. arXiv preprint, arXiv:1802.09596

Ghawi R, Pfeffer J (2019) Efficient hyperparameter tuning with grid search for text categorization using kNN approach with BM25 similarity. Open Comput Sci 9(1):160–180

Bergstra J, Bengio Y (2012) Random search for hyper-parameter optimization. J Mach Learn Res 13(1):281–305

Loshchilov I, Hutter F (2016) CMA-ES for hyperparameter optimization of deep neural networks. arXiv preprint, arXiv:1604.07269

ZahediNasaba R, Mohsenia H (2020) Neuroevolutionary based convolutional neural network with adaptive activation functions. Neurocomputing 381:306–313

Stanley KO, Miikkulainen R (2002) Evolving neural networks through augmenting topologies. Evol Comput 10(2):99–127

Abraham A (2004) Meta learning evolutionary artificial neural networks. Neurocomputing 56:1–38

Miikkulainen R, Liang J, Meyerson E, et al (2017) Evolving deep neural networks. arXiv preprint, arXiv:1703.00548

Assunção F, Lourenço N, Machado P et al (2018) DENSER: deep evolutionary network structured representation. Genet Program Evol Mach 20:5–35

Assuno F, Loureno N, Machado P et al (2019) Fast DENSER: efficient deep neuroevolution. Genetic Programming 11451:197–212

Minar M R, Naher J (2018) Recent advances in deep learning: an overview. arXiv preprint, arXiv:1807.08169

Suganuma M, Shirakawa S, Nagao T (2017) A genetic programming approach to designing convolutional neural network architectures. In: Proceedings of the genetic and evolutionary computation conference, pp 497–504

Ma B, Li X, Xia Y et al (2020) Autonomous deep learning: a genetic DCNN designer for image classification. Neurocomputing 379:152–161

Xie L, Yuille A (2017) Genetic CNN. In: Proceedings of the IEEE international conference on computer vision (ICCV), pp 1379–1388

Suganuma M, Kobayashi M, Shirakawa S et al (2020) Evolution of deep convolutional neural networks using Cartesian genetic programming. Evol Comput 28(1):141–163

Sun Y, Xue B, Zhang M et al (2019) Completely automated CNN architecture design based on blocks. IEEE Trans Neural Netw Learn Syst 31(4):1–13

Real E, Moore S, Selle A, Saxena S, Suematsu YL, Tan J, Le QV, Kurakin A (2017) Large-scale evolution of image classifiers. In: Proceedings of the international conference on machine learning (ICML)

Zhang H, Kiranyaz S, Gabbouj M (2018) Finding better topologies for deep convolutional neural networks by evolution. arXiv preprint, arXiv:1809.03242

Desell T (2017) Large scale evolution of convolutional neural networks using volunteer computing. In: Proceedings of the the genetic and evolutionary computation conference companion (GECCO)

ElSaid A, Wild B, Higgins J, Desell T (2016) Using LSTM recurrent neural networks to predict excess vibration events in aircraft engines. In: Proceedings of 2016 IEEE 12th international conference on e-Science (e-Science), pp 260–269

Ororbia A, ElSaid A E, Desell T (2019) Investigating recurrent neural network memory structures using neuro-evolution. In: Proceedings of the genetic and evolutionary computation conference (GECCO)

Real E, Aggarwal A, Huang Y, Le QV (2018) Regularized evolution for image classifier architecture search. In: Proceedings of the AAAI conference on artificial intelligence. vol 33(01), pp 4780–4789

Rawal A, Miikkulainen R (2018) From nodes to networks: evolving recurrent neural networks. arXiv preprint, arXiv:1803.04439

Zoph B, Le Q V (2016) Neural architecture search with reinforcement learning. arXiv preprint, arXiv:1611.01578

Pham H, Guan M, Zoph B, Le Q, Dean J (2018) Efficient neural architecture search via parameters sharing. In: Proceedings of the 35th International conference on machine learning, PMLR 80, pp 4095–4104

Zhong Z, Yang Z, Deng B, Yan J, Wu W, Shao J, Liu C (2021) Blockqnn: efficient block-wise neural network architecture generation. IEEE Trans Pattern Anal Mach Intell 43(7):2314–2328

Baker B, Gupta O, Naik N, Raskar R (2016) Designing neural network architectures using reinforcement learning. arXiv preprint arXiv:1611.02167

Dong J, Cheng A, Juan D, Wei W, Sun M (2018) Dpp-net: device-aware progressive search for pareto-optimal neural architectures. In: Proceedings of the 6th international conference on learning representations (ICLR). https://openreview.net/forum?id=B1NT3TAIM

Dong J, Cheng A, Juan D, Wei W, Sun M (2018) Ppp-net: platform-aware progressive search for pareto-optimal neural architectures. In: Proceedings of 6th international conference on learning representations (ICLR)

Cai H, Zhu L, Han S (2018) Proxylessnas: direct neural architecture search on target task and hardware. arXiv preprint arXiv:1812.00332

Liu H, Simonyan K, Yang Y (2018) Darts: differentiable architecture search. arXiv preprint arXiv:1806.09055

Jin X, Wang J, Slocum J, Yang M, Dai S, Yan S, Feng J (2019) Rc-darts: resource constrained differentiable architecture search. arXiv preprint arXiv:1912.12814

Xie S, Zheng H, Liu C, Lin L (2018) Snas: stochastic neural architecture search. arXiv preprint arXiv:1812.09926

Sun Y, Xue B, Zhang M, Yen GG, Lv J (2020) Automatically designing CNN architectures using the genetic algorithm for image classification. IEEE Trans Cybern 50(9):3840–3854

Sun Y, Wang H, Xue B, Jin Y, Yen GG, Zhang M (2019) Surrogate-assisted evolutionary deep learning using an end-to-end random forest-based performance predictor. IEEE Trans Evol Comput 24(2):350–364

Zhang H, Jin Y, Cheng R, Hao K (2020) Efficient evolutionary search of attention convolutional networks via sampled training and node inheritance. IEEE Trans Evol Comput 25(2):371–385

Howard AG, Zhu M, Chen B, Kalenichenko D, Wang W, Weyand T, Andreetto M, Adam H (2017) MobileNets: efficient convolutional neural networks for mobile vision applications. arXiv preprint. arXiv:1704.04861

Ming F, Gong W, Wang L (2022) A two-stage evolutionary algorithm with balanced convergence and diversity for many-objective optimization. IEEE Trans Syst Man Cybern Syst. https://doi.org/10.1109/TSMC.2022.3143657

Sun Y, Xue B, Zhang M, Yen GG (2019) A new two-stage evolutionary algorithm for many-objective optimization. IEEE Trans Evol Comput 23(5):748–761

Huang J, Sun W, Huang L (2020) Deep neural networks compression learning based on multi-objective evolutionary algorithms. Neurocomputing 378:260–269

Loni M, Sinaei S, Zoljodi A, Daneshtalab M, Sjödin M (2020) DeepMaker: a multi-objective optimization framework for deep neural networks in embedded systems. Microprocess Microsyst 73:102989

Cetto T, Byrne J, Xu X et al (2019) Size/accuracy trade-off in convolutional neural networks: an evolutionary approach. In: Proceedings of the INNSBDDL

Nolfi S, Miglino O, Parisi D (1994) Phenotypic plasticity in evolving neural networks. In: Proceedings of the PerAc'94. From perception to action, pp 146–157

Soltoggio A, Stanley KO, Risi S (2018) Born to learn: the inspiration, progress, and future of evolved plastic artificial neural networks. Neural Netw 108:48–67

Chalmers DJ (1991) The evolution of learning: An experiment in genetic connectionism. In: Connectionist models. Morgan Kaufmann, Elsevier, pp 81–90

Kim HB, Jung SH, Kim TG et al (1996) Fast learning method for back-propagation neural network by evolutionary adaptation of learning rates. Neurocomputing 11(1):101–106

Niv Y, Joel D, Meilijson I et al (2002) Evolution of reinforcement learning in uncertain environments: a simple explanation for complex foraging behaviors. Adapt Behav 10(1):5–24

Hebb DO (1949) The organization of behavior: a neuropsychological theory. Wiley, New York

Bienenstock EL, Cooper LN, Munro PW (1982) Theory for the development of neuron selectivity: orientation specificity and binocular interaction in visual cortex. J Neurosci 2(1):32–48

Babinec Š, Pospíchal J (2007) Improving the prediction accuracy of echo state neural networks by anti-Oja’s learning. In: Proceedings of the International Conference on artificial neural networks, Springer, pp 19–28

Bi GQ, Poo MM (1998) Synaptic modifications in cultured hippocampal neurons: dependence on spike timing, synaptic strength, and postsynaptic cell type. J Neurosci 18(24):10464–10472

Triesch J (2005) A gradient rule for the plasticity of a neuron’s intrinsic excitability. In: Proceedings of the artificial neural networks: biological inspirations(ICANN). vol 3696, Springer, pp 65–70

Coleman OJ, Blair AD (2012) Evolving plastic neural networks for online learning: review and future directions. In: Proceedings of the Australasian joint conference on artificial intelligence, pp 326–337

Stanley KO (2017) Neuroevolution: a different kind of deep learning. Obtenido de. 27(04):2019

Risi S, Stanley KO (2014) Guided self-organization in indirectly encoded and evolving topographic maps. In: Proceedings of the 2014 annual conference on genetic and evolutionary computation. ACM, pp 713–720

Risi S, Stanley KO (2010) Indirectly encoding neural plasticity as a pattern of local rules. In: Proceedings of the international conference on simulation of adaptive behavior, pp 533–543

Stanley KO, Ambrosio DBD, Gauci J (2009) A hypercube-based encoding for evolving large-scale neural networks. Artif Life 15(2):185–212

Wang X, Jin Y, Hao K (2021) Synergies between synaptic and intrinsic plasticity in echo state networks. Neurocomputing 432:32–43

Guirguis D et al (2020) Evolutionary black-box topology optimization: challenges and promises. IEEE Trans Evol Comput 24(4):613–633

Azevedo FAC, Carvalho LRB, Grinberg LT et al (2010) Equal numbers of neuronal and nonneuronal cells make the human brain an isometrically scaled-up primate brain. J Comp Neurol 513(5):532–541

Boichot R et al (2016) A genetic algorithm for topology optimization of area-to-point heat conduction problem. Int J Therm Sci 108:209–217

Aulig N, Olhofer M (2016) Evolutionary computation for topology optimization of mechanical structures: An overview of representation. In: Proceedings of the 2016 IEEE congress on evolutionary computation (CEC), pp 1948–1955

Gruau F (1993) Genetic synthesis of modular neural networks. In: Proceedings of the GECCO

Gruau F, Whitley D, Pyeatt L (1996) A comparison between cellular encoding and direct encoding for genetic neural networks. In: Proceedings of the 1st annual conference on genetic programming, pp 81–89

Stanley KO (2007) Compositional pattern producing networks: a novel abstraction of development. Genet Program Evolvable Mach 8(2):131–162

Pugh JK, Stanley KO (2013) Evolving multimodal controllers with hyperneat. In: Proceedings of the 15th annual conference on Genetic and evolutionary computation, pp 735–742

Fernando C, Banarse D, Reynolds M et al (2016) Convolution by evolution: differentiable pattern producing networks. In: Proceedings of the Genetic and Evolutionary Computation Conference (GECCO), 109-116

Stork J, Zaefferer M, Bartz-Beielstein T (2019) Improving neuroevolution efficiency by surrogate model-based optimization with phenotypic distance kernels. In: Proceedings of the international conference on the applications of evolutionary computation (Part of EvoStar), Springer, pp 504–519

Lehman J, Stanley KO (2011) Abandoning objectives: evolution through the search for novelty alone. Evol Comput 19(2):189–223

Lehman J, Stanley KO (2008) Exploiting Open-endedness to solve problems through the search for novelty. In: Proceedings of the ALIFE

Risi S, Hughes CE, Stanley KO (2010) Evolving plastic neural networks with novelty search. Adapt Behav 18(6):470–491

Risi S, Vanderbleek SD, Hughes CE et al (2009) How novelty search escapes the deceptive trap of learning to learn. In: Proceedings of the 11th Annual conference on Genetic and evolutionary computation, pp 153-160

Conti E, Madhavan V, Such FP et al (2018) Improving exploration in evolution strategies for deep reinforcement learning via a population of novelty-seeking agents. In: Proceedings of the 32nd international conference on neural information processing systems (NIPS) pp 5032–5043

Reisinger J, Stanley K O, Miikkulainen R (2004) Evolving reusable neural modules. In: Proceedings of the genetic and evolutionary computation conference (GECCO), Springer, pp 69–81

Mouret J B, Doncieux S (2009) Evolving modular neural-networks through exaptation. In: Proceedings of the IEEE congress on evolutionary computation (CEC), IEEE, pp 1570–1577

Gomez F, Schmidhuber J, Miikkulainen R (2008) Accelerated neural evolution through cooperatively coevolved synapses. J Mach Learn Res 9:937–965

Praczyk T (2016) Cooperative co–evolutionary neural networks. J Intell Fuzzy Syst 30(5):2843–2858

Liang J, Meyerson E, Miikkulainen R (2018) Evolutionary architecture search for deep multitask networks. In: Proceedings of the genetic and evolutionary computation conference (GECCO), pp 466–473

Ellefsen KO, Mouret JB, Clune J (2015) Neural modularity helps organisms evolve to learn new skills without forgetting old skills. PLoS Comput Biol 11(4):e1004128

Velez R, Clune J (2017) Diffusion-based neuromodulation can eliminate catastrophic forgetting in simple neural networks. PLoS ONE 12(11):e0187736

Ellefsen KO, Huizinga J, Torresen J (2019) Guiding neuroevolution with structural objectives. Evol Comput 28(1):115–140

Knippenberg M V, Menkovski V, Consoli S (2019) Evolutionary construction of convolutional neural networks. arXiv preprint, arXiv:1903.01895

Liu P, El Basha MD, Li Y et al (2019) Deep evolutionary networks with expedited genetic algorithms for medical image denoising. Med Image Anal 54:306–315

Assunção F, Lourenço N, Machado P, et al (2019) Fast-DENSER++: evolving fully-trained deep artificial neural networks. arXiv preprint, arXiv:1905.02969

Li S, Sun Y, Yen GG, Zhang M (2021) Automatic design of convolutional neural network architectures under resource constraints. IEEE Trans Neural Netw Learn Syst. https://doi.org/10.1109/TNNLS.2021.3123105

Kyriakides G, Margaritis K (2022) Evolving graph convolutional networks for neural architecture search. Neural Comput Appl 34:899–909

Deng B, Yan J, Lin D (2017) Peephole: Predicting network performance before training. arXiv preprint arXiv:1712.03351

Istrate R, Scheidegger F, Mariani G, Nikolopoulos D, Bekas C, Malossi A C I (2019) Tapas: Train-less accuracy predictor for architecture search. In: Proceedings of the AAAI Conference on artificial intelligence 33: 3927–3934

Sun Y, Sun X, Fang Y, Yen GG, Liu Y (2021) A novel training protocol for performance predictors of evolutionary neural architecture search algorithms. IEEE Trans Evol Comput 25(3):524–536

Domhan T, Springenberg J T, Hutter F (2015) Speeding up automatic hyperparameter optimization of deep neural networks by extrapolation of learning curves. In: Proceedings of the Twenty-fourth International Joint Conference on artificial intelligence

Klein A, Falkner S, Springenberg TJ, Hutter F Learning curve prediction with Bayesian neural networks. In: Proceedings of the Fifth International Conference on learning representations, ICLR

Baker B, Gupta O, Raskar R, Naik N (2017) Accelerating neural architecture search using performance prediction. arXiv preprint arXiv:1705.10823

Zhang H, Jin Y, Jin Y, Hao K (2022) Evolutionary search for complete neural network architectures with partial weight sharing. IEEE Trans Evol Comput. https://doi.org/10.1109/TEVC.2022.3140855

Elsken T, Metzen J H, et al (2019) Efficient multi-objective neural architecture search via Lamarckian evolution. In: 7th International Conference on learning representations

Liang JZ, Miikkulainen R (2015) Evolutionary bilevel optimization for complex control tasks. In: Proceedings of the 2015 annual conference on genetic and evolutionary computation, pp 871–878

MacKay M, Vicol P, Lorraine J et al (2019) Self-tuning networks: Bilevel optimization of hyperparameters using structured best-response functions. arXiv preprint, arXiv:1903.03088

Sinha A, Malo P, Xu P et al (2014) A bilevel optimization approach to automated parameter tuning. In: Proceedings of the GECCO

Baldominos A, Saez Y, Isasi P (2018) Evolutionary convolutional neural networks: an application to handwriting recognition. Neurocomputing 283:38–52

Huang PC, Sentis L, Lehman J et al (2019) Tradeoffs in neuroevolutionary learning-based real-time robotic task design in the imprecise computation framework. ACM Trans Cyber-Phys Syst 3(2):14

Lipson H, Pollack JB (2000) Automatic design and manufacture of robotic lifeforms. Nature 406(6799):974

Durán-Rosal AM, Fernández JC, Casanova-Mateo C et al (2018) Efficient fog prediction with multi-objective evolutionary neural networks. Appl Soft Comput 70:347–358

Mason K, Duggan M, Barret E et al (2018) Predicting host CPU utilization in the cloud using evolutionary neural networks. Futur Gener Comput Syst 86:162–173

Khan GM, Arshad R (2016) Electricity peak load forecasting using CGP based neuro evolutionary techniques. Int J Comput Intell Syst 9(2):376–395

Grisci BI, Feltes BC, Dorn M (2019) Neuroevolution as a tool for microarray gene expression pattern identification in cancer research. J Biomed Inf 89:122–133

Abdikenov B, Iklassov Z, Sharipov A et al (2019) Analytics of heterogeneous breast cancer data using neuroevolution. IEEE Access 7:18050–18060

Wu Y, Tan H, Jiang Z, et al. (2019) ES-CTC: A deep neuroevolution model for cooperative intelligent freeway traffic control. arXiv preprint, arXiv:1905.04083

Trasnea B, Marina LA, Vasilcoi A, Pozna CR, Grigorescu SM (2019) GridSim: a vehicle kinematics engine for deep neuroevolutionary control in autonomous driving. In: Proceedings of the 2019 third IEEE international conference on robotic computing (IRC), IEEE, pp 443–444

Grigorescu S, Trasnea B, Marina L et al (2019) NeuroTrajectory: a neuroevolutionary approach to local state trajectory learning for autonomous vehicles. arXiv preprint, arXiv:1906.10971

Han F, Zhao MR, Zhang JM et al (2017) An improved incremental constructive single-hidden-layer feedforward networks for extreme learning machine based on particle swarm optimization. Neurocomputing 228:133–142

ElSaid A, Ororbia A, Desell T (2019) The ant swarm neuro-evolution procedure for optimizing recurrent networks. arXiv preprint, arXiv:1909.11849

Zhu W, Yeh WC, Chen J, Chen D, Li A, Lin Y (2019) Evolutionary convolutional neural networks using ABC. In: Proceedings of the 2019 11th international conference on machine learning and computing (ICMLC), pp 156–162

Acknowledgements

This work is supported by the National Natural Science Foundation of China (NSFC) (grant numbers 62066041 and 41861047) and the Northwest Normal University young teachers’ scientific research capability upgrading program (NWNU-LKQN-17-6).

About this article

Cite this article

Ma, Y., Xie, Y. Evolutionary neural networks for deep learning: a review. Int. J. Mach. Learn. & Cyber. 13, 3001–3018 (2022). https://doi.org/10.1007/s13042-022-01578-8

DOI: https://doi.org/10.1007/s13042-022-01578-8