Abstract

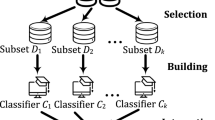

Ensemble learning has attracted considerable attention from researchers studying variable selection because it can improve selection accuracy and stabilize selection results. In this paper, we present a novel ensemble pruning technique, Pruned-ST2E, for obtaining more effective variable selection ensembles. The technique rearranges the order in which the individuals generated by the ST2E algorithm (Xin and Zhu in J Comput Graph Stat 21(2):275–294, 2012) are aggregated, and only the members ranked at the front are retained to estimate the importance of each candidate variable. Experiments with simulated and real-world data show that the performance of Pruned-ST2E is comparable or superior to that of several other benchmark methods. An analysis of the accuracy–diversity pattern in both ST2E and Pruned-ST2E reveals that the inserted pruning step excludes less accurate members and that the retained members become more concentrated around the true importance vector. Moreover, Pruned-ST2E is easy to implement. Pruned-ST2E can therefore be considered an alternative for tackling variable selection tasks in practice.
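
The reorder-then-truncate idea described above can be illustrated with a minimal Python sketch. It is not the paper's implementation: the abstract does not state the ranking criterion, so the sketch assumes each ensemble member comes with a hypothetical score (lower is better), and the function name `pruned_importance`, the toy selection matrix, and the number of retained members are all illustrative assumptions. Each member is represented as a 0/1 vector marking the variables it selected, and importance is estimated as the selection frequency among the retained members.

```python
from typing import List, Sequence


def pruned_importance(
    selections: Sequence[Sequence[int]],
    scores: Sequence[float],
    keep: int,
) -> List[float]:
    """Average the 0/1 selection vectors of the `keep` best-scored members.

    selections: one row per ensemble member, one 0/1 entry per variable.
    scores: hypothetical per-member ranking scores (lower is better); the
        actual criterion used by Pruned-ST2E is not given in the abstract.
    keep: number of top-ranked members to retain.
    """
    n_members = len(selections)
    if n_members == 0 or len(scores) != n_members:
        raise ValueError("selections and scores must be non-empty and aligned")
    if not 1 <= keep <= n_members:
        raise ValueError("keep must be between 1 and the ensemble size")

    # Rearrange the aggregation order: best-scored members come first.
    order = sorted(range(n_members), key=lambda b: scores[b])
    kept = order[:keep]

    # Importance of variable j = fraction of retained members selecting it.
    n_vars = len(selections[0])
    return [sum(selections[b][j] for b in kept) / keep for j in range(n_vars)]


# Toy ensemble: 4 members, 4 candidate variables (illustrative data only).
selections = [
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 1, 1, 1],
    [1, 1, 0, 1],
]
scores = [0.2, 0.5, 0.9, 0.3]  # assumed scores, lower is better

full = pruned_importance(selections, scores, keep=4)    # no pruning
pruned = pruned_importance(selections, scores, keep=2)  # keep the top 2
print(full)
print(pruned)
```

In this toy example, discarding the two worst-scored members changes the estimated importance of the third variable from 0.25 to 0.0 and that of the first two from 0.75 to 1.0. The number of retained members is a tuning choice in this sketch.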

References

Breiman L (1996) Heuristics of instability and stabilization in model selection. Ann Stat 24(6):2350–2383

Cai J, Luo JW, Wang SL, Yang S (2018) Feature selection in machine learning: a new perspective. Neurocomputing 300:70–79

Che JL, Yang YL (2017) Stochastic correlation coefficient ensembles for variable selection. J Appl Stat 44(10):1721–1742

Che JL, Yang YL, Li L, Bai XY, Zhang SH, Deng CZ (2017) Maximum relevance minimum common redundancy feature selection for nonlinear data. Inf Sci 409–410:68–86

Chung D, Kim H (2015) Accurate ensemble pruning with PL-bagging. Comput Stat Data Anal 83:1–13

Efron B, Hastie T, Johnstone I, Tibshirani R (2004) Least angle regression. Ann Stat 32(2):407–499

Fan JQ, Li RZ (2001) Variable selection via nonconcave penalized likelihood and its oracle properties. J Am Stat Assoc 96(456):1348–1360

Fan JQ, Lv JC (2008) Sure independence screening for ultrahigh dimensional feature space (with discussions). J R Stat Soc B 70(5):849–911

Fan JQ, Lv JC (2010) A selective overview of variable selection in high dimensional feature space. Stat Sin 20(1):101–148

Fakhraei S, Soltanian-Zadeh H, Fotouhi F (2014) Bias and stability of single variable classifiers for feature ranking and selection. Exp Syst Appl 41(15):6945–6958

Genuer R, Poggi JM, Tuleau-Malot C (2010) Variable selection using random forests. Pattern Recognit Lett 31(14):2225–2236

Griffin J, Brown P (2017) Hierarchical shrinkage priors for regression models. Bayes Anal 12(1):135–159

Kuncheva LI (2014) Combining pattern classifiers: methods and algorithms, 2nd edn. Wiley, Hoboken

Dua D, Graff C (2019) UCI machine learning repository. http://archive.ics.uci.edu/ml. Accessed Dec 2016

Martínez-Muñoz G, Suárez A (2007) Using boosting to prune boosting ensembles. Pattern Recognit Lett 28(1):156–165

Martínez-Muñoz G, Hernández-Lobato D, Suárez A (2009) An analysis of ensemble pruning techniques based on ordered aggregation. IEEE Trans Pattern Anal Mach Intell 31(2):245–259

Meinshausen N, Bühlmann P (2010) Stability selection (with discussion). J R Stat Soc B 72(4):417–473

Mendes-Moreira J, Soares C, Jorge AM, de Sousa JF (2012) Ensemble approaches for regression: a survey. ACM Comput Surv 45(1):40 Article 10

Miller A (2002) Subset selection in regression, 2nd edn. Chapman & Hall/CRC Press, New York

Nan Y, Yang YH (2014) Variable selection diagnostics measures for high-dimensional regression. J Comput Graph Stat 23(3):636–656

Peng HC, Long FH, Ding C (2005) Feature selection based on mutual information: criteria of max-dependency, max-relevance, and min-redundancy. IEEE Trans Pattern Anal Mach Intell 27(8):1226–1238

Rokach L (2016) Decision forest: twenty years of research. Inf Fus 27:111–125

Sauerbrei W, Buchholz A, Boulesteix AL, Binder H (2015) On stability issues in deriving multivariable regression models. Biometrical J 57(4):531–555

Subrahmanya N, Shin YC (2013) A variational Bayesian framework for group feature selection. Int J Mach Learn Cybern 4(6):609–619

Tibshirani R (1996) Regression shrinkage and selection via the lasso. J R Stat Soc B 58(1):267–288

Tibshirani R, Walther G, Hastie T (2001) Estimating the number of clusters in a data set via the gap statistic. J R Stat Soc B 63(2):411–423

Wang SJ, Nan B, Rosset S, Zhu J (2011) Random lasso. Ann Appl Stat 5(1):468–485

Xin L, Zhu M (2012) Stochastic stepwise ensembles for variable selection. J Comput Graph Stat 21(2):275–294

Zhang CX, Wang GW, Liu JM (2015) RandGA: injecting randomness into parallel genetic algorithm for variable selection. J Appl Stat 42(3):630–647

Zhang CX, Zhang JS, Kim SW (2016a) PBoostGA: pseudo-boosting genetic algorithm for variable ranking and selection. Comput Stat 31(4):1237–1262

Zhang CX, Ji NN, Wang GW (2016b) Randomizing outputs to increase variable selection accuracy. Neurocomputing 218:91–102

Zhang CX, Zhang JS, Yin QY (2017) A ranking-based strategy to prune variable selection ensembles. Knowl Based Syst 125:13–25

Zhou ZH, Wu JX, Tang W (2002) Ensembling neural networks: many could be better than all. Artif Intell 137(1–2):239–263

Zhu M, Chipman HA (2006) Darwinian evolution in parallel universes: a parallel genetic algorithm for variable selection. Technometrics 48(4):491–502

Zhu M, Fan GZ (2011) Variable selection by ensembles for the Cox model. J Stat Comput Simul 81(12):1983–1992

Zou H (2006) The adaptive lasso and its oracle properties. J Am Stat Assoc 101(476):1418–1429

Acknowledgements

The authors would like to thank the editor and reviewers for their useful comments which helped to improve the paper. This research was supported by the National Natural Science Foundation of China (Nos. 11671317, 61572393) and the National Research Foundation of Korea (No. NRF-2012R1A1A2041661).

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Cite this article

Zhang, CX., Kim, SW. & Zhang, JS. On selective learning in stochastic stepwise ensembles. Int. J. Mach. Learn. & Cyber. 11, 217–230 (2020). https://doi.org/10.1007/s13042-019-00968-9

DOI: https://doi.org/10.1007/s13042-019-00968-9