Abstract

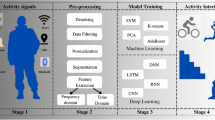

As the age of ambient intelligence approaches, contactless devices are being widely applied to recognize human states. This study aims to design critical motion features for building artificial intelligence (AI) models that identify user activities in front of a computer. Eight participants were recruited to perform four daily computer activities: playing games, surfing the web, typing words, and watching videos. While the participants performed the experimental tasks, their upper bodies were videotaped, and the recorded videos were processed to obtain four designed features: (1) the eye-opening size, (2) the mouth-opening size, (3) the number of optical-fence pixels, and (4) the standard deviation of optical-fence pixels. After their importance was confirmed, these motion features were used to establish three recurrent neural network (RNN) models: a simple RNN, a gated recurrent unit (GRU), and a long short-term memory (LSTM) network. Comparing the model predictions showed that the GRU model performed best (accuracy = 76%), ahead of the LSTM model (accuracy = 70%) and the simple RNN model (accuracy = 59%). The four tested computer activities had significant effects on the four designed features, so these features could be applied to build AI models for recognizing activities in front of a computer. Limitations are discussed to guide future studies in extending the methodology to other applications.
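
The abstract describes feeding per-frame motion features into recurrent models. As a minimal sketch, assuming each video frame is summarized by a vector of the four designed features and that a single GRU layer followed by a softmax output scores the four activities, the forward pass might look like the following. The class name `GRUClassifier`, the hidden size of 8, the random (untrained) weights, and the 30-frame input window are hypothetical illustrations, not the study's actual configuration or training procedure. The cell uses the standard update-gate/reset-gate GRU formulation.

```python
import math
import random

# Hypothetical setup: each frame yields the four features named in the abstract
# (eye-opening size, mouth-opening size, optical-fence pixel count, optical-fence
# pixel standard deviation). Weights are random and untrained; illustration only.
ACTIVITIES = ["playing games", "surfing the web", "typing words", "watching videos"]
N_FEATURES = 4
HIDDEN = 8  # hypothetical hidden size


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def matvec(w, v):
    """Multiply matrix w (a list of rows) by vector v."""
    return [sum(wi * vi for wi, vi in zip(row, v)) for row in w]


def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


class GRUClassifier:
    def __init__(self, n_in, n_hidden, n_classes, seed=0):
        rng = random.Random(seed)
        # Input-to-hidden (W) and hidden-to-hidden (U) weights plus biases for
        # the update gate z, reset gate r, and candidate state n.
        self.W = {g: rand_matrix(n_hidden, n_in, rng) for g in "zrn"}
        self.U = {g: rand_matrix(n_hidden, n_hidden, rng) for g in "zrn"}
        self.b = {g: [0.0] * n_hidden for g in "zrn"}
        # Output layer mapping the final hidden state to class scores.
        self.V = rand_matrix(n_classes, n_hidden, rng)
        self.c = [0.0] * n_classes
        self.n_hidden = n_hidden

    def step(self, x, h):
        """One GRU time step: returns the new hidden state."""
        def pre(g, h_in):
            # Pre-activation W_g x + U_g h_in + b_g for gate g.
            return [a + b + c for a, b, c in
                    zip(matvec(self.W[g], x), matvec(self.U[g], h_in), self.b[g])]
        z = [sigmoid(v) for v in pre("z", h)]
        r = [sigmoid(v) for v in pre("r", h)]
        # Candidate state is computed from the reset-gated previous state.
        n = [math.tanh(v) for v in pre("n", [ri * hi for ri, hi in zip(r, h)])]
        # Interpolate between the previous state and the candidate.
        return [zi * hi + (1.0 - zi) * ni for zi, hi, ni in zip(z, h, n)]

    def predict_proba(self, sequence):
        """Run the GRU over a feature sequence and softmax the final state."""
        h = [0.0] * self.n_hidden
        for x in sequence:
            h = self.step(x, h)
        logits = [a + b for a, b in zip(matvec(self.V, h), self.c)]
        m = max(logits)  # subtract the max for numerical stability
        exps = [math.exp(v - m) for v in logits]
        total = sum(exps)
        return [e / total for e in exps]


# Usage with a hypothetical 30-frame sequence of normalized feature vectors.
rng = random.Random(42)
seq = [[rng.random() for _ in range(N_FEATURES)] for _ in range(30)]
model = GRUClassifier(N_FEATURES, HIDDEN, len(ACTIVITIES))
probs = model.predict_proba(seq)
print(ACTIVITIES[probs.index(max(probs))])
```

Replacing `step` with a plain tanh recurrence or with an LSTM cell (input, forget, and output gates plus a separate cell state) would give the other two model types compared in the abstract. Training, for example by backpropagation through time, is omitted from this sketch.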

Acknowledgements

We acknowledge the grant support from the Taiwan Ministry of Science and Technology (MOST107-2221-E-155-033-MY3), which funded this paper submission.

About this article

Cite this article

Lee, TY., Shen, WY., Lin, R.F. et al. Validation of four designed motion features for recognizing computer user activities. J Ambient Intell Human Comput 14, 14467–14476 (2023). https://doi.org/10.1007/s12652-020-02479-w

DOI: https://doi.org/10.1007/s12652-020-02479-w