Abstract

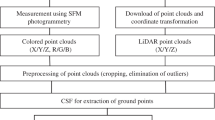

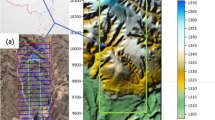

Settlements are among the main types of ground objects in mountainous environments and an important target for monitoring aimed at preventing geological disasters. Accurate, rapid identification of mountain settlements is therefore essential for disaster monitoring and rural planning. The fusion of multi-source heterogeneous data has attracted widespread attention in remote sensing. An unmanned aerial vehicle (UAV) equipped with a high-definition camera captures imagery rich in spectral and textural information, while airborne light detection and ranging (LiDAR) with high-precision sensors can locate objects precisely. Integrating the two kinds of data lets the spatial information of LiDAR and the optical information of UAV imagery complement each other. This paper addresses two shortcomings of existing mountain settlement extraction methods: inaccurate boundary extraction, and the limited ability of manually designed features to express image information. A mountain settlement classification method is proposed that combines feature information from LiDAR data and UAV image data. Qualitative and quantitative analyses of the experimental results show that, when point cloud data fused with spectral information is used for the precise identification of settlements in mountainous areas, the root-mean-square error (RMSE) of the building boundaries, obtained by comparing the calculated results with the actual data, is relatively stable and ranges between 0 and 0.2 m. The overall accuracy of the extracted building area exceeds 80%, which is higher than that obtained when mountain settlement information is extracted from either of the two single data sources. The extracted contour lines are also clearer, which indicates the classification advantage of point cloud data fused with image information. These results demonstrate that the proposed classification method supports the accurate identification of mountain settlements and has practical value for that task.
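
The two evaluation figures quoted above, boundary RMSE and overall accuracy, can be illustrated with a minimal Python sketch. It assumes the RMSE is taken over planar distances between matched extracted and reference boundary points, and that overall accuracy is the share of correctly labelled samples; the abstract does not give the exact definitions used in the paper. The function names `boundary_rmse` and `overall_accuracy` and all sample values are hypothetical and are not data from the study.

```python
import math


def boundary_rmse(extracted, reference):
    """RMSE (same unit as the inputs, e.g. metres) between matched point pairs.

    Assumption: each extracted boundary point has already been matched to one
    reference point, and the error is the planar Euclidean distance.
    """
    if len(extracted) != len(reference) or not extracted:
        raise ValueError("point lists must be non-empty and of equal length")
    squared_errors = [
        (xe - xr) ** 2 + (ye - yr) ** 2
        for (xe, ye), (xr, yr) in zip(extracted, reference)
    ]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


def overall_accuracy(predicted, truth):
    """Fraction of samples whose predicted class equals the reference class."""
    if len(predicted) != len(truth) or not predicted:
        raise ValueError("label lists must be non-empty and of equal length")
    correct = sum(p == t for p, t in zip(predicted, truth))
    return correct / len(predicted)


# Hypothetical building corners (metres): extracted vs. surveyed reference.
extracted_corners = [(0.0, 0.0), (10.1, 0.0), (10.0, 8.1), (0.0, 8.0)]
reference_corners = [(0.0, 0.0), (10.0, 0.0), (10.0, 8.0), (0.0, 8.0)]
rmse = boundary_rmse(extracted_corners, reference_corners)

# Hypothetical per-sample class labels.
predicted_labels = ["building", "building", "ground", "vegetation", "building"]
reference_labels = ["building", "ground", "ground", "vegetation", "building"]
accuracy = overall_accuracy(predicted_labels, reference_labels)

print(f"boundary RMSE: {rmse:.3f} m, overall accuracy: {accuracy:.0%}")
```

With these made-up inputs the RMSE is roughly 0.07 m and the overall accuracy is 80%. The values only show how the two indices are read and do not reproduce the paper's results.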

Funding

This work was funded by the National Natural Science Foundation of China (NSFC) (Grant No. 41561083).

Ethics declarations

Conflict of interest

All authors declare no conflict of interest.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Gao, S., Yuan, X., Gan, S. et al. Experimental Study on Precise Recognition of Settlements in Mountainous Areas Based on UAV Image and LIDAR Point Cloud. J Indian Soc Remote Sens 50, 1827–1840 (2022). https://doi.org/10.1007/s12524-022-01548-1

DOI: https://doi.org/10.1007/s12524-022-01548-1