Abstract

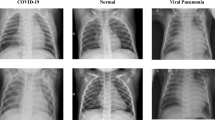

Diagnosing COVID-19 from an individual's chest X-ray (CXR) was difficult in early research because CXR data from COVID-19 patients were hard to identify and, at the beginning of the study, scarce. Meanwhile, the combination of artificial intelligence (AI) and medical diagnosis has advanced and become popular. To address these difficulties, interpretability analysis of an AI model was used to explore the pathological characteristics of CXR samples from individuals infected with COVID-19 and to assist medical diagnosis. The dataset was expanded by data augmentation to avoid overfitting. Transfer learning was used to test different pre-trained models, and custom output layers were designed so that model training could be completed with few samples. In this study, the outputs of four pre-trained models were compared across three different output layers, and the results after data augmentation were compared with those on the original dataset. The control variable method was used to conduct 24 groups of independent tests. Finally, an accuracy of 99.23% and a recall of 98% were obtained, and the visual results of the CXR interpretability analysis are presented. The network of the COVID-19 interpretable diagnosis algorithm generalizes well and is lightweight, so it can be quickly applied to other urgent tasks with insufficient experimental data. At the same time, interpretability analysis brings new possibilities for medical diagnosis.
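The 24 test groups mentioned in the abstract are consistent with a full grid of four pre-trained models, three output layers, and two dataset variants (original and augmented). The Python sketch below shows that reading along with the standard definitions of accuracy and recall. It is an illustration under assumptions, not the authors' code: the abstract names neither the models nor the output layers, so the labels `model_A`, `head_1`, and so on are hypothetical placeholders, and the confusion-matrix counts in the usage lines are made up for demonstration.

```python
from itertools import product

# Hypothetical placeholder labels: the abstract does not name the
# pre-trained models or the output-layer designs.
PRETRAINED_MODELS = ["model_A", "model_B", "model_C", "model_D"]
OUTPUT_LAYERS = ["head_1", "head_2", "head_3"]
DATASETS = ["original", "augmented"]


def experiment_grid():
    """Return every (model, output layer, dataset) combination.

    Varying one factor while the other two stay fixed is the usual
    reading of the control variable method.
    """
    return list(product(PRETRAINED_MODELS, OUTPUT_LAYERS, DATASETS))


def accuracy(tp, fp, tn, fn):
    """Fraction of all samples that were classified correctly."""
    return (tp + tn) / (tp + fp + tn + fn)


def recall(tp, fn):
    """Fraction of truly positive samples that were detected."""
    return tp / (tp + fn)


if __name__ == "__main__":
    grid = experiment_grid()
    print(len(grid), "test groups")
    # Illustrative counts only, not taken from the paper.
    print("accuracy:", accuracy(tp=49, fp=0, tn=50, fn=1))
    print("recall:", recall(tp=49, fn=1))
```

In a diagnostic setting, recall is reported alongside accuracy because a missed positive case (a false negative) is usually the costlier error.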

Additional information

Foundation item: the Southwest Minzu University Graduate Innovative Research Project (No. CX2020SZ95, Master Program); the National Natural Science Foundation of China (No. 62073270); the State Ethnic Affairs Commission Innovation Research Team Project, and Innovative Research Team Project of the Education Department of Sichuan Province (No. 15TD0050)

About this article

Cite this article

Bu, R., Xiang, W. & Cao, S. COVID-19 Interpretable Diagnosis Algorithm Based on a Small Number of Chest X-Ray Samples. J. Shanghai Jiaotong Univ. (Sci.) 27, 81–89 (2022). https://doi.org/10.1007/s12204-021-2393-2

DOI: https://doi.org/10.1007/s12204-021-2393-2

Key words

- COVID-19

- chest X-ray (CXR)

- interpretability

- data augmentation

- transfer learning

- convolutional neural network