Abstract

This paper presents an open-source utility for analyzing eye-tracking data recorded on interactive web maps. The tool simplifies the labor-intensive frame-by-frame analysis of screen recordings with overlaid gaze data that current eye-tracking systems require. Its main function is to convert the screen coordinates of the participant's gaze to real-world coordinates and to export the results in commonly used spatial data formats. The paper reviews the state of the art in eye-tracking analysis of dynamic cartographic products, as well as research and technology aimed at improving analysis techniques. The developed software, called ET2Spatial, is tested in depth for performance and accuracy. The capabilities of GIS software for visualizing and analyzing the recorded eye-tracking data are also investigated. The tool aims to enhance research capabilities in the field of eye tracking in geovisualization.
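To make the core idea of converting screen coordinates to real-world coordinates concrete, the following is a minimal sketch and not the ET2Spatial implementation. It assumes that the map fills a viewport of known pixel size, that the map extent at the moment of each gaze sample is known in Web Mercator metres (on an interactive map this extent changes with every pan and zoom), and that a plain GeoJSON point collection is an acceptable export. All function names and the sample layout `(timestamp, px, py)` are hypothetical.

```python
import json
import math

# Radius of the sphere used by Web Mercator (EPSG:3857), in metres.
R = 6378137.0


def screen_to_mercator(px, py, width, height, extent):
    """Linearly interpolate a pixel (origin at top-left) into the map extent.

    extent is (xmin, ymin, xmax, ymax) in Web Mercator metres and is assumed
    to describe exactly what the viewport showed when the gaze was recorded.
    """
    xmin, ymin, xmax, ymax = extent
    x = xmin + (px / width) * (xmax - xmin)
    # Screen y grows downwards, map y grows upwards.
    y = ymax - (py / height) * (ymax - ymin)
    return x, y


def mercator_to_wgs84(x, y):
    """Inverse spherical Mercator: metres to (longitude, latitude) in degrees."""
    lon = math.degrees(x / R)
    lat = math.degrees(2.0 * math.atan(math.exp(y / R)) - math.pi / 2.0)
    return lon, lat


def gaze_to_geojson(samples, width, height, extent):
    """Convert (timestamp, px, py) gaze samples to a GeoJSON FeatureCollection."""
    features = []
    for timestamp, px, py in samples:
        x, y = screen_to_mercator(px, py, width, height, extent)
        lon, lat = mercator_to_wgs84(x, y)
        features.append({
            "type": "Feature",
            "geometry": {"type": "Point", "coordinates": [lon, lat]},
            "properties": {"timestamp": timestamp},
        })
    return {"type": "FeatureCollection", "features": features}


if __name__ == "__main__":
    # Hypothetical viewport and extent, chosen only for illustration.
    extent = (-1000.0, -1000.0, 1000.0, 1000.0)
    samples = [(0.0, 960, 540), (0.016, 0, 0)]
    print(json.dumps(gaze_to_geojson(samples, 1920, 1080, extent), indent=2))
```

A single fixed extent is used here for brevity; for a recording in which the participant pans or zooms, each gaze sample would need to be paired with the extent that was valid at its timestamp.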

References

Andrienko G, Andrienko N, Burch M, Weiskopf D (2012) Visual analytics methodology for eye movement studies. IEEE Trans Visual Comput Graphics 18(12):2889–2898. https://doi.org/10.1109/TVCG.2012.276

Beitlova M, Popelka S, Vozenilek V (2020) Differences in thematic map reading by students and their geography teacher. ISPRS Int J Geo Inf 9(9):492

Burch M (2019) Interaction graphs: visual analysis of eye movement data from interactive stimuli. In: Proceedings of the 11th ACM Symposium on Eye Tracking Research & Applications. Denver, Colorado. https://doi.org/10.1145/3317960.3321617

Burch M, Kurzhals K, Kleinhans N, Weiskopf D (2018) EyeMSA: exploring eye movement data with pairwise and multiple sequence alignment. In: Proceedings of the 2018 ACM Symposium on Eye Tracking Research & Applications. Warsaw, Poland. https://doi.org/10.1145/3204493.3204565

Dolezalova J, Popelka S (2016) ScanGraph: a novel scanpath comparison method using visualisation of graph cliques. J Eye Mov Res 9(4):Article 5. https://doi.org/10.16910/jemr.9.4.5

Dong W, Liao H, Liu B, Zhan Z, Liu H, Meng L, Liu Y (2020) Comparing pedestrians’ gaze behavior in desktop and in real environments. Cartogr Geogr Inf Sci 47(5):432–451. https://doi.org/10.1080/15230406.2020.1762513

Enoch JM (1959) Effect of the size of a complex display upon visual search. JOSA 49(3):280–285. https://doi.org/10.1364/JOSA.49.000280

Eraslan S, Yaneva V, Yesilada Y, Harper S (2019) Web users with autism: eye tracking evidence for differences. Behav Inf Technol 38(7):678–700

Giannopoulos I, Kiefer P, Raubal M (2012) GeoGazemarks: providing gaze history for the orientation on small display maps. In: Proceedings of the 14th ACM international conference on Multimodal interaction. Santa Monica, California, USA. https://doi.org/10.1145/2388676.2388711

Göbel F, Kiefer P, Raubal M (2019) FeaturEyeTrack: automatic matching of eye tracking data with map features on interactive maps. GeoInformatica 2019(23):663–687. https://doi.org/10.1007/s10707-019-00344-3

Havelková L, Gołębiowska IM (2020) What went wrong for bad solvers during thematic map analysis? Lessons learned from an eye-tracking study. ISPRS Int J Geo Inf 9(1):9

Herman L, Popelka S, Hejlova V (2017) Eye-tracking analysis of interactive 3D geovisualization. J Eye Mov Res 10(3):1–15. https://doi.org/10.16910/jemr.10.3.2. Article 2

Holmqvist K, Nyström M, Andersson R, Dewhurst R, Jarodzka H, Van de Weijer J (2011) Eye tracking: a comprehensive guide to methods and measures. Oxford University Press, Oxford

Jenks GF (1973) Visual integration in thematic mapping: fact or fiction? International Yearbook of Cartography, 13 pp 27–35

Jenks GF (1974) The average map-reader lives. Paper presented at Annual meeting of the Association of American Geographers, Seattle, Washington

Kiefer P, Giannopoulos I (2012) Gaze map matching: mapping eye tracking data to geographic vector features. In: Proceedings of the 20th International Conference on Advances in Geographic Information Systems. Redondo Beach, California. https://doi.org/10.1145/2424321.2424367

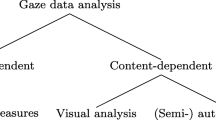

Krassanakis V, Cybulski P (2019) A review on eye movement analysis in map reading process: the status of the last decade. Geod Cartogr 68(1):191–209

Krassanakis V, Filippakopoulou V, Nakos B (2014) EyeMMV toolbox: an eye movement post-analysis tool based on a two-step spatial dispersion threshold for fixation identification. J Eye Mov Res 7(1). https://doi.org/10.16910/jemr.7.1.1

Ooms K, De Maeyer P, Fack V, Van Assche E, Witlox F (2012) Interpreting maps through the eyes of expert and novice users. Int J Geogr Inf Sci 26(10):1773–1788. https://doi.org/10.1080/13658816.2011.642801

Ooms K, Coltekin A, De Maeyer P, Dupont L, Fabrikant S, Incoul A, Kuhn M, Slabbinck H, Vansteenkiste P, Van der Haegen L (2015) Combining user logging with eye tracking for interactive and dynamic applications. Behav Res Methods 47(4):977–993. https://doi.org/10.3758/s13428-014-0542-3

Papenmeier F, Huff M (2010) DynAOI: a tool for matching eye-movement data with dynamic areas of interest in animations and movies. Behav Res Methods 42(1):179–187. https://doi.org/10.3758/BRM.42.1.179

Pfeiffer T (2012) Measuring and visualizing attention in space with 3D attention volumes. Symposium on Eye Tracking research & applications (ETRA 2012), Santa Barbara, California, USA

Popelka S, Beitlova M (2022) Scanpath Comparison using ScanGraph for Education and Learning Purposes: Summary of previous educational studies performed with the use of ScanGraph. 2022 Symposium on Eye Tracking Research and Applications, Seattle, WA, USA. https://doi.org/10.1145/3517031.3529243

Popelka S, Burian J, Beitlova M (2022) Swipe versus multiple view: a comprehensive analysis using eye-tracking to evaluate user interaction with web maps. Cartography and Geographic Information Science, pp 1–19. https://doi.org/10.1080/15230406.2021.2015721

Popelka S, Dolezalova J, Beitlova M (2018) New features of scangraph: a tool for revealing participants’ strategy from eye-movement data. In: Proceedings of the 2018 ACM Symposium on Eye Tracking Research & Applications. Warsaw, Poland. https://doi.org/10.1145/3204493.3208334

Růžička O (2012) Profil uživatele webových map. Palacký University Olomouc, Olomouc

Šašinka Č, Morong K, Stachoň Z (2017) The hypothesis platform: an online tool for experimental research into work with maps and behavior in electronic environments. ISPRS Int J Geo Inf 6(12):1–22

Šašinka Č, Stachoň Z, Čeněk J, Šašinková A, Popelka S, Ugwitz P, Lacko D (2021) A comparison of the performance on extrinsic and intrinsic cartographic visualizations through correctness, response time and cognitive processing. PLoS ONE 16(4):e0250164. https://doi.org/10.1371/journal.pone.0250164

Skrabankova J, Popelka S, Beitlova M (2020) Students’ ability to work with graphs in physics studies related to three typical student groups. J Balt Sci Educ 19(2):298–316

Słomska K (2018) Types of maps used as a stimuli in cartographical empirical research. Misc Geogr 22(3):157–171. https://doi.org/10.2478/mgrsd-2018-0014

Unrau R, Kray C (2019) Usability evaluation for geographic information systems: a systematic literature review. Int J Geogr Inf Sci 33(4):645–665. https://doi.org/10.1080/13658816.2018.1554813

Funding

This study was carried out as part of the first author's master's thesis, supported by the Erasmus+ Programme of the European Union. The paper was also supported by the Internal Grant Agency of Palacký University Olomouc (grant number IGA_PrF_2022_027) and by the Ministry of Culture of the Czech Republic (grant number DG18P02OVV017).

Ethics declarations

Competing interests

The authors have no competing interests to declare that are relevant to the content of this article.

Additional information

Communicated by: H. Babaie

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Sultan, M.N., Popelka, S. & Strobl, J. ET2Spatial – software for georeferencing of eye movement data. Earth Sci Inform 15, 2031–2049 (2022). https://doi.org/10.1007/s12145-022-00832-5

DOI: https://doi.org/10.1007/s12145-022-00832-5