Abstract

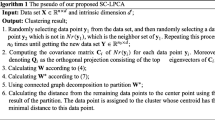

Many classical clustering algorithms perform well when their underlying assumptions hold, but they do not scale well to very large data sets (VLDS). In this work, a novel clustering method based on data division and partition (DP) is proposed to address this problem. DP first cuts the source data set into data blocks and extracts the eigenvector of each data block; together these form the local feature set. The local feature set is then used in a second round of feature aggregation over the source data to find the global eigenvector. Finally, every data point is assigned according to the global eigenvector by the minimum-distance criterion. The experimental results show that DP is more robust than conventional clustering algorithms. Because it is insensitive to the data dimensionality, the data distribution and the number of natural clusters, DP is widely applicable to clustering VLDS.
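The abstract describes DP only at a high level, so the following is a minimal sketch of one possible reading, not the authors' implementation. It assumes that the "eigenvector" of a block is a set of representative center vectors, and that both the per-block extraction and the second-round aggregation are done with k-means (Lloyd's algorithm with farthest-first seeding). The names `dp_cluster`, `block_size` and `local_k` are hypothetical.

```python
import random
from typing import List, Sequence, Tuple

Vector = List[float]


def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest(p: Sequence[float], centers: Sequence[Sequence[float]]) -> int:
    """Index of the center closest to p (minimum-distance criterion)."""
    return min(range(len(centers)), key=lambda i: sq_dist(p, centers[i]))


def farthest_first(points: Sequence[Vector], k: int, rng: random.Random) -> List[Vector]:
    """Seed k centers: one random point, then repeatedly the point farthest from all chosen centers."""
    centers = [list(rng.choice(points))]
    while len(centers) < k:
        far = max(points, key=lambda p: min(sq_dist(p, c) for c in centers))
        centers.append(list(far))
    return centers


def kmeans(points: Sequence[Vector], k: int, rng: random.Random, iters: int = 100) -> List[Vector]:
    """Lloyd's k-means (assumed stand-in for DP's feature extraction); returns k center vectors."""
    centers = farthest_first(points, k, rng)
    for _ in range(iters):
        groups: List[List[Vector]] = [[] for _ in range(k)]
        for p in points:
            groups[nearest(p, centers)].append(p)
        # New center = mean of its group; an empty group keeps its old center.
        new_centers = [
            [sum(col) / len(g) for col in zip(*g)] if g else c
            for c, g in zip(centers, groups)
        ]
        if new_centers == centers:
            break
        centers = new_centers
    return centers


def dp_cluster(data: List[Vector], k: int, block_size: int, local_k: int,
               seed: int = 0) -> Tuple[List[Vector], List[int]]:
    """Hypothetical DP-style pipeline: divide, extract local features, aggregate, assign."""
    rng = random.Random(seed)
    # Step 1 (division): cut the data set into consecutive blocks.
    blocks = [data[i:i + block_size] for i in range(0, len(data), block_size)]
    # Step 2: extract up to local_k representative vectors per block -> local feature set.
    local_features: List[Vector] = []
    for block in blocks:
        local_features.extend(kmeans(block, min(local_k, len(block)), rng))
    if k > len(local_features):
        raise ValueError("k exceeds the number of local feature vectors")
    # Step 3: second round of aggregation over the local features only -> global vectors.
    global_centers = kmeans(local_features, k, rng)
    # Step 4 (partition): assign every original point to its nearest global vector.
    labels = [nearest(p, global_centers) for p in data]
    return global_centers, labels
```

In this sketch only the block-level vectors enter the second round, so that step works on roughly `len(data) / block_size * local_k` vectors instead of the full data set, and the final minimum-distance pass is a single linear scan over the data.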

Additional information

Foundation item: Projects(60903082, 60975042) supported by the National Natural Science Foundation of China; Project(20070217043) supported by the Research Fund for the Doctoral Program of Higher Education of China

About this article

Cite this article

Lu, Zm., Liu, C., Massinanke, S. et al. Clustering method based on data division and partition. J. Cent. South Univ. 21, 213–222 (2014). https://doi.org/10.1007/s11771-014-1932-5

DOI: https://doi.org/10.1007/s11771-014-1932-5