Abstract

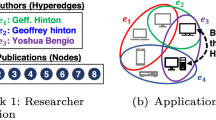

Graph neural networks have been shown to be very effective in exploiting pairwise relationships across samples. Recently, several proposals have successfully generalized graph neural networks to hypergraph neural networks in order to exploit more complex relationships. In particular, hypergraph collaborative networks yield superior results compared with other hypergraph neural networks on various semi-supervised learning tasks. The collaborative network provides high-quality vertex embeddings and hyperedge embeddings together by formulating them as a joint optimization problem and by using their consistency in reconstructing the given hypergraph. In this paper, we establish the algorithmic stability of the core layer of the collaborative network and provide generalization guarantees. The analysis sheds light on the design of hypergraph filters in collaborative networks, for instance, how the data and hypergraph filters should be scaled to achieve uniform stability of the learning process. Experimental results on real-world datasets are presented to illustrate the theory.

Acknowledgements

We would like to thank the editors and reviewers for their comments and suggestions, which greatly helped to improve the quality of this paper.

Ng was supported in part by Hong Kong Research Grant Council General Research Fund (GRF), China (Nos. 12300218, 12300519, 17201020, 17300021, CRF C1013-21GF, C7004-21GF and Joint NSFC-RGC NHKU76921). Wu was supported by National Natural Science Foundation of China (No. 62206111), Young Talent Support Project of Guangzhou Association for Science and Technology, China (No. QT-2023-017), Guangzhou Basic and Applied Basic Research Foundation, China (No. 2023A04J1058), Fundamental Research Funds for the Central Universities, China (No. 21622326), and China Postdoctoral Science Foundation (No. 2022M721343).

Ethics declarations

The authors declare that they have no conflicts of interest regarding this work.

Additional information

Colored figures are available in the online version at https://link.springer.com/journal/11633

Michael K. Ng received the B. Sc. and M. Phil. degrees in mathematics from The University of Hong Kong, China in 1990 and 1992, respectively, and the Ph. D. degree in mathematics from The Chinese University of Hong Kong, China in 1995. He was a research fellow at the Computer Sciences Laboratory, Australian National University, Australia from 1995 to 1997, and an assistant/associate professor at The University of Hong Kong, China from 1997 to 2005. He was a professor/chair professor in the Department of Mathematics at Hong Kong Baptist University, China from 2006 to 2019. He is currently a chair professor in the Research Division of Mathematical and Statistical Science at The University of Hong Kong, China. He was selected for the 2017 class of fellows of the Society for Industrial and Applied Mathematics. He received the Feng Kang Prize for his significant contributions to scientific computing. He serves on the editorial boards of several international journals.

His research interests include bioinformatics, image processing, scientific computing and data mining.

Hanrui Wu received the B. Sc. and Ph. D. degrees in software engineering from the School of Software Engineering, South China University of Technology, China in 2013 and 2020, respectively. He is currently an associate professor with the Department of Computer Science, Jinan University, China. Before that, he was a postdoctoral research fellow with the Department of Mathematics, The University of Hong Kong, China from 2020 to 2021.

His research interests include transfer learning, zero-shot learning, hypergraph learning, recommendation systems and brain-computer interactions.

Andy Yip received the Ph. D. degree in mathematics from the University of California at Los Angeles, USA in 2005. He is currently a senior researcher with The University of Hong Kong, China. He previously served as an assistant professor with the National University of Singapore, Singapore, and as a lecturer with Hong Kong Baptist University, China. Since 2017, he has worked in the data science and finance industries.

His research interests include data science and image processing.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made.

The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder.

To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Ng, M.K., Wu, H. & Yip, A. Stability and Generalization of Hypergraph Collaborative Networks. Mach. Intell. Res. 21, 184–196 (2024). https://doi.org/10.1007/s11633-022-1397-1

DOI: https://doi.org/10.1007/s11633-022-1397-1