Abstract

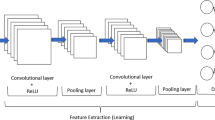

To meet the needs of embedded intelligent forest fire monitoring systems on unmanned aerial vehicles (UAVs), this study proposes a deep learning fire recognition algorithm designed around model compression and lightweight requirements. A lightweight MobileNetV3 model is adopted to reduce the complexity of the conventional YOLOv4 network structure. Redundant channels are eliminated through channel-level sparsity-induced regularization, and knowledge distillation is used to improve the detection accuracy of the pruned model. The experimental results show that the proposed architecture has only 2.64 million parameters, a reduction of 95.87% compared with YOLOv4, and that the inference time decreases from 153.8 ms to 37.4 ms, a reduction of 75.68%. Compared with existing algorithms, the proposed approach has fewer parameters, lower memory requirements and faster inference. The method is specifically tailored to deep learning forest fire monitoring on a UAV platform.
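The abstract names two compression steps without showing their mechanics. The sketch below illustrates one common way each is realized, under assumptions not stated in the abstract: the channel-level sparsity is assumed to follow the network-slimming recipe (an L1 penalty on BatchNorm scaling factors, then pruning channels below a global threshold), and the distillation term is assumed to be the Hinton-style soft-target loss. All function names, the `prune_ratio` and `temperature` parameters and the default temperature of 4.0 are hypothetical and chosen for illustration only; the paper's actual training code and hyperparameters may differ. A small helper also reproduces the reported inference-time reduction.

```python
import math

# Hypothetical helpers sketching the compression steps named in the abstract.
# Assumptions (not taken from the paper): channel-level sparsity follows the
# network-slimming recipe (L1 penalty on BatchNorm scaling factors "gamma",
# then global-threshold pruning); distillation uses Hinton-style soft targets.


def sparsity_penalty(bn_gammas, lam):
    """L1 term lam * sum(|gamma|), added to the task loss during sparse training."""
    return lam * sum(abs(g) for layer in bn_gammas for g in layer)


def channel_masks(bn_gammas, prune_ratio):
    """Per-layer keep masks; channels with |gamma| <= a global threshold are pruned."""
    all_g = sorted(abs(g) for layer in bn_gammas for g in layer)
    k = int(len(all_g) * prune_ratio)
    # Threshold is the k-th smallest |gamma|; k == 0 prunes nothing.
    threshold = all_g[k - 1] if k > 0 else float("-inf")
    masks = []
    for layer in bn_gammas:
        mask = [abs(g) > threshold for g in layer]
        if layer and not any(mask):
            # Keep the strongest channel so no layer is removed entirely.
            best = max(range(len(layer)), key=lambda i: abs(layer[i]))
            mask[best] = True
        masks.append(mask)
    return masks


def softmax(logits, temperature=1.0):
    """Numerically stable softmax with a temperature."""
    m = max(logits)
    exps = [math.exp((z - m) / temperature) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=4.0):
    """Soft-target term: T^2 * KL(teacher_T || student_T). T = 4.0 is an assumption."""
    p_t = softmax(teacher_logits, temperature)
    p_s = softmax(student_logits, temperature)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_t, p_s) if pt > 0)
    return temperature ** 2 * kl


def reduction_pct(before, after):
    """Relative reduction in percent, the form used for the reported savings."""
    return 100.0 * (before - after) / before


if __name__ == "__main__":
    gammas = [[0.9, 0.01, 0.5], [0.02, 0.03]]
    print(channel_masks(gammas, prune_ratio=0.4))
    print(round(reduction_pct(153.8, 37.4), 2))
```

Running the script prints `[[True, False, True], [False, True]]` (the two channels with the smallest |gamma| are pruned) and `75.68`, matching the inference-time reduction reported above. In a real detector the masks would be used to rebuild narrower convolution and BatchNorm layers before fine-tuning the pruned student against the unpruned teacher.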

Data availability statement

All data generated or used in this study are available from the corresponding author upon request.

Acknowledgements

Data processing was supported by the Ningxia Technology Innovative Team of advanced intelligent perception & control and the Key Laboratory of Intelligent Perception Control at North Minzu University.

Funding

This research was funded by the National Natural Science Foundation of China (No. 61861001) and the Postgraduate Innovation Project of North Minzu University (No. YCX20111).

Author information

Contributions

S.W. and H.W. designed the experiments; S.W., J.Z., and X.Z. processed the data; S.W., M.X., and N.T. analyzed the data and wrote the original draft of the paper. All authors have read and agreed to the published version of the manuscript.

Ethics declarations

Conflict of interest

The authors declare no conflict of interest.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Wang, S., Zhao, J., Ta, N. et al. A real-time deep learning forest fire monitoring algorithm based on an improved Pruned + KD model. J Real-Time Image Proc 18, 2319–2329 (2021). https://doi.org/10.1007/s11554-021-01124-9