Abstract

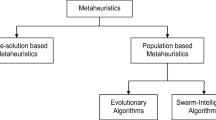

Evolutionary algorithms (EAs) are a class of nature-inspired metaheuristics that are widely applied to practical optimization problems. In these problems, objective evaluations are usually inaccurate because noise is almost inevitable in the real world, so reducing the negative effect of noise is a crucial issue. Sampling is a popular strategy: it evaluates the objective multiple times and uses the mean of the evaluation results as an estimate of the objective value. In this work, we introduce a novel sampling method, median sampling, into EAs, and illustrate its properties and usefulness theoretically by solving OneMax, the problem of maximizing the number of 1s in a bit string. Instead of the mean, median sampling uses the median of the evaluation results as the estimate. Through rigorous theoretical analysis on OneMax under the commonly used one-bit noise, we show that median sampling reduces the expected runtime exponentially. Next, through two special noise models, we show that when the 2-quantile (i.e., the median) of the noisy fitness increases with the true fitness, median sampling can be better than mean sampling; otherwise, it may fail and mean sampling can be better. These results may guide the proper use of median sampling in practical applications.
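The difference between the two sampling strategies can be illustrated with a minimal Python sketch. It is not the authors' implementation. It assumes the standard definition of one-bit noise (with probability `p`, the fitness is evaluated on a copy of the solution in which one uniformly chosen bit is flipped), and the names `noisy_onemax`, `sampled_fitness`, `k`, and `p` are hypothetical and chosen only for illustration.

```python
import random
import statistics


def onemax(bits):
    """True fitness: the number of 1s in the bit string."""
    return sum(bits)


def noisy_onemax(bits, p, rng):
    """One noisy evaluation under one-bit noise (assumed definition):
    with probability p, one uniformly chosen bit is flipped before
    the evaluation; otherwise the true fitness is returned."""
    if rng.random() < p:
        i = rng.randrange(len(bits))
        # Flipping a 1 lowers the fitness by 1; flipping a 0 raises it by 1.
        return onemax(bits) + (1 - 2 * bits[i])
    return onemax(bits)


def sampled_fitness(bits, k, p, rng, aggregate):
    """Evaluate the solution k times independently and aggregate the results.
    aggregate=statistics.mean gives mean sampling;
    aggregate=statistics.median gives median sampling."""
    samples = [noisy_onemax(bits, p, rng) for _ in range(k)]
    return aggregate(samples)


if __name__ == "__main__":
    rng = random.Random(0)
    x = [1] * 10  # the OneMax optimum for n = 10
    print("mean estimate:  ", sampled_fitness(x, 5, 0.3, rng, statistics.mean))
    print("median estimate:", sampled_fitness(x, 5, 0.3, rng, statistics.median))
```

In this sketch, the median estimate equals the true fitness whenever more than half of the `k` evaluations are unaffected by noise, whereas the mean estimate is shifted by every noisy evaluation.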

Acknowledgements

This work was supported by the National Key Research and Development Program of China (Grant No. 2017YFB1003102), the National Natural Science Foundation of China (Grant Nos. 62022039, 61672478, 61876077), and the MOE University Scientific-Technological Innovation Plan Program. The authors would like to thank the anonymous reviewers for their helpful comments and suggestions on this work.

Cite this article

Bian, C., Qian, C., Yu, Y. et al. On the robustness of median sampling in noisy evolutionary optimization. Sci. China Inf. Sci. 64, 150103 (2021). https://doi.org/10.1007/s11432-020-3114-y

DOI: https://doi.org/10.1007/s11432-020-3114-y