Abstract

This study presents statistical procedures for monitoring the functioning of items over time. We propose generalized likelihood ratio tests that monitor multiple item parameters jointly and implement them with various sampling techniques to perform continuous or intermittent monitoring. The procedures examine the stability of item parameters across time and signal possible compromise as soon as they identify a significant parameter shift. The performance of the monitoring procedures was validated using simulated and real-assessment data. The empirical evaluation suggests that the proposed procedures perform adequately in identifying parameter drift: they showed satisfactory detection power and gave timely signals while keeping error rates reasonably low. The procedures also outperformed existing methods in the comparison. The findings suggest that multivariate parametric monitoring can provide an efficient and powerful control tool for maintaining the quality of items. The procedures allow joint monitoring of multiple item parameters and achieve sufficient power through likelihood-ratio tests. Based on the empirical findings, we suggest some practical strategies for performing online item monitoring.

Notes

Previous studies defined the reference sample as \({\mathcal {R}} = \{i: \, i = 1 , \, \ldots \, , \,t - m \}\), so that it grows as the monitoring progresses. This study uses a fixed reference sample to mitigate the likely impact of false negatives in an expanding reference sample.
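The contrast between the two reference samples can be illustrated with a minimal sketch. The expanding set follows the index definition above; the size of the fixed set (`n_ref`) and the example values of `t` and `m` are hypothetical, since this note does not state them.

```python
def expanding_reference(t, m):
    """Indices 1, ..., t - m: grows by one at every new time point t."""
    return list(range(1, t - m + 1))


def fixed_reference(n_ref):
    """Indices 1, ..., n_ref: identical at every time point (n_ref is hypothetical)."""
    return list(range(1, n_ref + 1))


m, n_ref = 3, 5  # illustrative values only, not taken from the study
for t in (8, 9, 10):
    print(t, expanding_reference(t, m), fixed_reference(n_ref))
```

In the expanding version, any drifted observation that escapes detection is absorbed into the reference set at later time points, which is the concern the fixed sample is meant to address.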

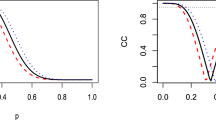

Under the simulation design in Sect. 4, sequential testing based on \({\mathcal {T}}^2\) showed an average false positive rate of 16.88%. The procedure also tended to flag drift items prematurely, before the actual parameter shift, with an early detection rate of 9.70%. The chart seemed overly sensitive to small fluctuations that arise from sampling and calibration error. Note that, unlike standard Shewhart control charts, which examine manifest variables, the Shewhart chart based on \({\mathcal {T}}^2\) examines estimated parameters and can therefore be influenced by sampling and estimation error.
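To make the sensitivity concrete, the following is a minimal sketch of a Shewhart-type rule based on a Hotelling-type \({\mathcal {T}}^2\) statistic for two item parameters. It is not the study's implementation: the reference values, the covariance matrix, the sequence of estimates, and the use of a chi-square limit with 2 degrees of freedom are all assumptions made for illustration.

```python
import math


def t2_statistic(est, ref, cov):
    """Hotelling-type T^2 for two parameters: d' * inv(cov) * d."""
    d0, d1 = est[0] - ref[0], est[1] - ref[1]
    (s00, s01), (s10, s11) = cov
    det = s00 * s11 - s01 * s10
    # Quadratic form using the closed-form inverse of a 2x2 matrix.
    return (s11 * d0 * d0 - (s01 + s10) * d0 * d1 + s00 * d1 * d1) / det


def chi2_limit_df2(alpha):
    """Upper-alpha quantile of a chi-square with 2 df, which is -2 * ln(alpha)."""
    return -2.0 * math.log(alpha)


# Hypothetical reference parameters and sampling covariance.
ref = (1.0, 0.0)
cov = ((0.04, 0.0), (0.0, 0.09))
limit = chi2_limit_df2(0.05)

# Hypothetical parameter estimates at successive time points.
estimates = [(1.1, 0.1), (1.2, 0.3), (1.5, 0.6)]
for t, est in enumerate(estimates, start=1):
    t2 = t2_statistic(est, ref, cov)
    print(t, round(t2, 3), t2 > limit)
```

Because each time point is judged on its own, one noisy estimate is enough to trigger a signal, which is consistent with the oversensitivity described in this note.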

We note that there are other ways of constructing a multivariate chart (e.g., Healy, 1987; Pignatiello & Runger, 1990; Woodall & Ncube, 1985). These procedures, however, either make impractical assumptions (e.g., known directions or multiple univariate charts) or make little difference in the monitoring statistics in the present setting because only one observation is evaluated each time.

In sequential testing, Type I error can be defined in three ways: across the event times, across the items, or across both the event times and the items.
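One possible reading of the three definitions is sketched below for a matrix of signals on null (non-drifting) items. The exact definitions used in the study are not given in this note, so the three summaries computed here, and the name `error_rates`, are assumptions for illustration.

```python
def error_rates(flags):
    """flags[i][t] is True if null item i signalled at event time t."""
    n_items = len(flags)
    n_times = len(flags[0])
    # Across items at each event time: share of items flagged at time t.
    per_time = [sum(row[t] for row in flags) / n_items for t in range(n_times)]
    # Across event times for each item: share of items flagged at least once.
    per_item = sum(any(row) for row in flags) / n_items
    # Across both: share of all item-by-time cells that carry a flag.
    overall = sum(sum(row) for row in flags) / (n_items * n_times)
    return per_time, per_item, overall


# Hypothetical signals for two null items over three event times.
flags = [
    [False, True, False],
    [False, False, False],
]
print(error_rates(flags))
```

The item-level rate is the most stringent of the three, since an item counts as a false positive if it signals at any event time.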

Recall that the charting statistics are obtained by subtracting the reference value (k). The larger the k, the smaller the null charting statistics and, thus, the smaller the decision limit.
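The effect of k can be seen in a minimal sketch that uses a generic one-sided CUSUM recursion, \(C_t = \max(0, C_{t-1} + x_t - k)\). This recursion and the input values are assumptions for illustration and are not necessarily the charting statistic defined in the study.

```python
def cusum_path(values, k):
    """Upper one-sided CUSUM: C_t = max(0, C_{t-1} + x_t - k)."""
    path, c = [], 0.0
    for x in values:
        c = max(0.0, c + x - k)
        path.append(c)
    return path


# Hypothetical statistics observed under no drift.
null_stats = [0.4, 1.1, 0.2, 0.9, 1.3, 0.5, 0.8, 1.0]
for k in (0.5, 1.0):
    path = cusum_path(null_stats, k)
    print(k, round(max(path), 3))
```

For the same input, the path under the larger k never exceeds the path under the smaller k, so a limit calibrated to the null paths shrinks as k grows.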

We also considered simulation for obtaining the threshold values. The resulting values, however, did not generally agree with the statistics in the real data, possibly due to differences in sampling (e.g., content balancing and item exposure control in real testing).

References

Armstrong, R. D., & Shi, M. (2009). A parametric cumulative sum statistic for person fit. Applied Psychological Measurement, 33, 391–410.

Ban, J. C., Hanson, B. A., Wang, T., Yi, Q., & Harris, D. J. (2001). A comparative study of on-line pretest item-calibration/scaling methods in computerized adaptive testing. Journal of Educational Measurement, 38(3), 191–212.

Basseville, M., & Nikiforov, I. V. (1993). Detection of abrupt changes: Theory and applications. Prentice-Hall Inc.

Birnbaum, A. (1968). Some latent trait models and their use in inferring an examinee’s ability. In F. M. Lord & M. R. Novick (Eds.), Statistical theories of mental test scores (pp. 397–479). Reading, MA: Addison-Wesley.

Bock, R., Muraki, E., & Pfeiffenberger, W. (1988). Item pool maintenance in the presence of item parameter drift. Journal of Educational Measurement, 25, 275–285.

Choe, E. M., Zhang, J., & Chang, H.-H. (2018). Sequential detection of compromised items using response times in computerized adaptive testing. Psychometrika, 83, 650–673.

Clark, A. (2013). Review of parameter drift methodology and implications for operational testing. Retrieved from https://www.ncbex.org/statistics-and-research/covington-award

Cohen, J. (1992). A power primer. Psychological Bulletin, 112, 155–159.

Crosier, R. B. (1988). Multivariate generalizations of cumulative sum quality-control schemes. Technometrics, 30, 291–303.

DeMars, C. E. (2004). Detection of item parameter drift over multiple test administrations. Applied Measurement in Education, 17, 265–300.

Donoghue, J. R., & Isham, S. P. (1998). A comparison of procedures to detect item parameter drift. Applied Psychological Measurement, 22(1), 33–51.

Goldstein, H. (1983). Measuring changes in educational attainment over time: Problems and possibilities. Journal of Educational Measurement, 20, 369–377.

Guo, H., Robin, F., & Dorans, N. (2017). Detecting item drift in large-scale testing. Journal of Educational Measurement, 54, 265–284.

Healy, J. D. (1987). A note on multivariate CUSUM procedures. Technometrics, 29, 409–412.

Hotelling, H. (1931). The generalization of Student’s ratio. Annals of Mathematical Statistics, 2, 360–378. https://doi.org/10.1214/aoms/1177732979

Huggins-Manley, A. C. (2017). Psychometric consequences of subpopulation item parameter drift. Educational and Psychological Measurement, 77, 143–164. https://doi.org/10.1177/0013164416643369

Kang, H.-A., Zheng, Y., & Chang, H.-H. (2020). Online calibration of a joint model of item responses and response times in computerized adaptive testing. Journal of Educational and Behavioral Statistics, 45, 175–208.

Klein Entink, R. H., Kuhn, J.-T., Hornke, L. F., & Fox, J.-P. (2009). Evaluating cognitive theory: A joint modeling approach using responses and response times. Psychological Methods, 14, 54–75.

Lai, T. (1991). Asymptotic optimality of generalized sequential likelihood ratio tests in some classical sequential testing problems. In B. K. Ghosh & P. K. Sen (Eds.), Handbook of sequential analysis (pp. 121–144). New York: Marcel Dekker Inc.

Lee, Y.-H., & Lewis, C. (2021). Monitoring item performance with CUSUM statistics in continuous testing. Journal of Educational and Behavioral Statistics, 46, 611–648. https://doi.org/10.3102/1076998621994563

Liu, C., Han, K. T., & Li, J. (2019). Compromised item detection for computerized adaptive testing. Frontiers in Psychology, 10, 829. https://doi.org/10.3389/fpsyg.2019.00829

Lowry, C. A., Woodall, W. H., Champ, C. W., & Rigdon, S. E. (1992). A multivariate EWMA control chart. Technometrics, 34, 46–53.

Marianti, S., Fox, J.-P., Avetisyan, M., Veldkamp, B. P., & Tijmstra, J. (2014). Testing for aberrant behavior in response time modeling. Journal of Educational and Behavioral Statistics, 39, 426–451.

Page, E. S. (1954). Continuous inspection schemes. Biometrika, 41, 100–115. https://doi.org/10.1093/biomet/41.1-2.100

Pignatiello, J. J., & Runger, G. C. (1990). Comparisons of multivariate CUSUM charts. Journal of Quality Technology, 22, 173–186.

Segall, D. O. (2002). An item response model for characterizing test compromise. Journal of Educational and Behavioral Statistics, 27, 163–179.

Segall, D. O. (2004). A sharing item response theory model for computerized adaptive testing. Journal of Educational and Behavioral Statistics, 29, 439–460.

Shu, Z., Henson, R., & Luecht, R. (2013). Using deterministic, gated item response theory model to detect test cheating due to item compromise. Psychometrika, 78, 481–497.

Sinharay, S., & Johnson, M. S. (2020). The use of item scores and response times to detect examinees who may have benefited from item preknowledge. British Journal of Mathematical and Statistical Psychology, 73, 397–419.

Tendeiro, J. N., Meijer, R. R., Schakel, L., & Maij-de Meij, A. M. (2013). Using cumulative sum statistics to detect inconsistencies in unproctored internet testing. Educational and Psychological Measurement, 73, 143–161.

van der Linden, W. J. (2006). A lognormal model for response times on test items. Journal of Educational and Behavioral Statistics, 31, 181–204.

van der Linden, W. J. (2007). A hierarchical framework for modeling speed and accuracy on test items. Psychometrika, 72, 287–308.

van der Linden, W. J., & Guo, F. (2008). Bayesian procedures for identifying aberrant response-time patterns in adaptive testing. Psychometrika, 73(3), 365–384.

van Krimpen-Stoop, E. M. L. A., & Meijer, R. R. (2001). CUSUM-based person-fit statistics for adaptive testing. Journal of Educational and Behavioral Statistics, 26, 199–218.

Veerkamp, W. J. J., & Glas, C. A. W. (2000). Detection of known items in adaptive testing with a statistical quality control method. Journal of Educational and Behavioral Statistics, 25, 373–389.

Wang, X., & Liu, Y. (2020). Detecting compromised items using information from secure items. Journal of Educational and Behavioral Statistics, 45, 667–689.

Wells, C. S., Subkoviak, M. J., & Serlin, R. C. (2002). The effect of item parameter drift on examinee ability estimates. Applied Psychological Measurement, 26, 77–87.

Wilks, S. S. (1938). The large-sample distribution of the likelihood ratio for testing composite hypotheses. The Annals of Mathematical Statistics, 9, 60–62.

Woodall, W. H., & Ncube, M. M. (1985). Multivariate CUSUM quality control procedures. Technometrics, 27, 285–292.

Yang, Y., Ferdous, A., & Chin, T. Y. (2007). Exposed items detection in personnel selection assessment: An exploration of new item statistic. Paper presented at the annual meeting of the National Council on Measurement in Education, Chicago, IL.

Zhang, J. (2014). A sequential procedure for detecting compromised items in the item pool of CAT system. Applied Psychological Measurement, 38, 87–104.

Zhang, J., & Li, J. (2016). Monitoring items in real time to enhance CAT security. Journal of Educational Measurement, 53, 131–151.

Zhang, J., Li, Z., & Wang, Z. (2010). A multivariate control chart for simultaneously monitoring process mean and variability. Computational Statistics and Data Analysis, 54, 2244–2252.

Zopluoglu, C. (2019). Detecting examinees with item preknowledge in large-scale testing using extreme gradient boosting (XGBoost). Educational and Psychological Measurement, 79, 931–961.

Cite this article

Kang, HA. Sequential Generalized Likelihood Ratio Tests for Online Item Monitoring. Psychometrika 88, 672–696 (2023). https://doi.org/10.1007/s11336-022-09871-9

DOI: https://doi.org/10.1007/s11336-022-09871-9