Abstract

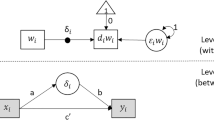

The use of multilevel VAR(1) models to unravel within-individual process dynamics is gaining momentum in psychological research. These models accommodate the structure of intensive longitudinal datasets in which repeated measurements are nested within individuals. They estimate within-individual auto- and cross-regressive relationships while incorporating and using information about the distributions of these effects across individuals. An important quality feature of the obtained estimates is how well they generalize to unseen data. Bulteel and colleagues (Psychol Methods 23(4):740–756, 2018a) showed that this feature can be assessed through a cross-validation approach, yielding a predictive accuracy measure. In this article, we follow up on their results by performing three simulation studies that allow us to systematically study five factors that likely affect the predictive accuracy of multilevel VAR(1) models: (i) the number of measurement occasions per person, (ii) the number of persons, (iii) the number of variables, (iv) the contemporaneous collinearity between the variables, and (v) the distributional shape of the individual differences in the VAR(1) parameters (i.e., normal versus multimodal distributions). Simulation results show that pooling information across individuals and using multilevel techniques prevent overfitting. We also show that when variables are expected to show strong contemporaneous correlations, performing multilevel VAR(1) in a reduced variable space can be useful. Furthermore, results reveal that multilevel VAR(1) models with random effects have a better predictive performance than person-specific VAR(1) models when the sample includes groups of individuals that share similar dynamics.

Notes

In addition to the mean squared prediction error (MSPE), we also used the out-of-sample coefficient of determination \(\text {R}^2\) (Campbell and Thompson, 2008) to assess the predictive accuracy of the considered models. For a variable j, the out-of-sample \(\text {R}_j^2\) represents the proportion of variance of \(Y_j\) that is explained by a predictive model that was estimated using other data. In our cross-validation context, the out-of-sample \(\text {R}_j^2\) can be computed as follows:

$$\begin{aligned} \text {R}_j^2 = 1 - \frac{\sum _{k=1}^K \sum _{i=1}^N \sum _{t =1}^{T_{i}^{(k)}} (Y_{i t j k} - {\hat{Y}}_{i t j k})^2}{\sum _{k=1}^K \sum _{i=1}^N \sum _{t =1}^{T_{i}^{(k)}} (Y_{i t j k} - {\bar{Y}}_{i t j k})^2} \end{aligned}$$ (4)

where \({\hat{Y}}_{i t j k}\) indicates the predicted values based on the training data, and \({\bar{Y}}_{i t j k}\) is the mean of variable j. The overall \(\text {R}^2\) is computed as the average of the P \(\text {R}_j^2\)-values. The out-of-sample \(\text {R}^2\) takes values in the interval \((-\infty ,1]\). Negative values indicate that the predictive model predicts unseen data worse than the mean of the variable does, whereas a value equal to one shows that the model perfectly predicts unseen data. In most cases, we expect the out-of-sample \(\text {R}^2\) to be smaller than its in-sample counterpart. Since this measure shows the same patterns as the MSPE, we did not include the results in the paper, but interested readers can consult them in the supplementary material: https://osf.io/rs6un/.
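As an illustration of Eq. 4, the computation can be sketched in a few lines of Python. This is a minimal sketch, not the code used in the simulations: the function name `out_of_sample_r2`, the stacked array layout, and the choice to let the caller supply the benchmark mean `y_bar` are all assumptions made for the example.

```python
import numpy as np

def out_of_sample_r2(y_true, y_pred, y_bar):
    """Per-variable and overall out-of-sample R^2 (sketch of Eq. 4).

    y_true, y_pred: arrays of shape (n_obs, P). Rows stack every test
    observation across folds k, persons i and occasions t; columns are
    the P variables.
    y_bar: benchmark mean of each variable, broadcastable to (n_obs, P).
    How this mean is obtained is left to the caller (an assumption here).
    """
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    y_bar = np.broadcast_to(np.asarray(y_bar, dtype=float), y_true.shape)

    ss_model = np.sum((y_true - y_pred) ** 2, axis=0)  # numerator of Eq. 4
    ss_mean = np.sum((y_true - y_bar) ** 2, axis=0)    # denominator of Eq. 4
    r2_j = 1.0 - ss_model / ss_mean                    # one value per variable
    return r2_j, r2_j.mean()                           # overall R^2 = average over j

# Toy data: 200 stacked test observations of P = 3 variables.
rng = np.random.default_rng(0)
y = rng.normal(size=(200, 3))
y_bar = np.zeros(3)

r2_perfect, _ = out_of_sample_r2(y, y, y_bar)             # perfect predictions
r2_mean, _ = out_of_sample_r2(y, np.zeros_like(y), y_bar)  # predicting the mean
r2_bad, overall_bad = out_of_sample_r2(y, -y, y_bar)       # worse than the mean
```

In this toy setup, perfect predictions give a value of one, predicting the benchmark mean gives zero, and predictions that are worse than the mean give a negative value, which matches the interpretation of the measure given above.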

In a previous version of the manuscript, we conducted the analysis while varying the regressive parameters within each design cell. Those simulation results are available on the OSF page of the project and are comparable to the ones presented in this paper.

For each of the data generating models, we conducted a mixed ANOVA to investigate how the MSPE values of the six proposed methods are affected by the number of variables, the number of measurement occasions within persons, and the number of persons. Results show that there are large main effects of the number of variables and the estimation model. These main effects are qualified by interactions between the number of variables and the estimation model, and the number of variables and the number of measurement occasions. The results are included in the supplementary material.

References

Asparouhov, T., Hamaker, E. L., & Muthén, B. (2018). Dynamic structural equation models. Structural Equation Modeling: A Multidisciplinary Journal, 25(3), 359–388.

Babyak, M. A. (2004). What you see may not be what you get: A brief, nontechnical introduction to overfitting in regression-type models. Psychosomatic Medicine, 66(3), 411–421.

Barr, D. J., Levy, R., Scheepers, C., & Tily, H. J. (2013). Random effects structure for confirmatory hypothesis testing: Keep it maximal. Journal of Memory and Language, 68(3), 255–278.

Bates, D., Kelman, T., Simon, A. B., Noack, A., Hatherly, M., & Bouchet-Valat, M. (2016). Dmbates/Mixedmodels.Jl: Drop Julia V0.4.X and earlier support. Zenodo.

Bates, D., Kliegl, R., Vasishth, S., & Baayen, H. (2015a). Parsimonious mixed models. arXiv preprint arXiv:1506.04967.

Bates, D., Mächler, M., Bolker, B., & Walker, S. (2015b). Fitting linear mixed-effects models using lme4. Journal of Statistical Software, 67(1), 1–48.

Bezanson, J., Edelman, A., Karpinski, S., & Shah, V. B. (2017). Julia: A fresh approach to numerical computing. SIAM Review, 59(1), 68–98.

Borsboom, D., & Cramer, A. O. (2013). Network analysis: An integrative approach to the structure of psychopathology. Annual Review of Clinical Psychology, 9, 91–121.

Bringmann, L. F., Pe, M. L., Vissers, N., Ceulemans, E., Borsboom, D., Vanpaemel, W., & Kuppens, P. (2016). Assessing temporal emotion dynamics using networks. Assessment, 23(4), 425–435.

Bringmann, L. F., Vissers, N., Wichers, M., Geschwind, N., Kuppens, P., Peeters, F., & Tuerlinckx, F. (2013). A network approach to psychopathology: New insights into clinical longitudinal data. PLoS ONE, 8(4), e60188.

Brose, A., Voelkle, M. C., Lövdén, M., Lindenberger, U., & Schmiedek, F. (2015). Differences in the between-person and within-person structures of affect are a matter of degree. European Journal of Personality, 29(1), 55–71.

Browne, M. W., & Nesselroade, J. R. (2005). Representing psychological processes with dynamic factor models: Some promising uses and extensions of autoregressive moving average time series models. In A. Maydeu-Olivares & J. J. McArdle (Eds.), Contemporary psychometrics: A festschrift for Roderick P. McDonald (pp. 415–452). Mahwah, NJ: Lawrence Erlbaum Associates.

Bulteel, K., Mestdagh, M., Tuerlinckx, F., & Ceulemans, E. (2018a). VAR(1) based models do not always outpredict AR(1) models in typical psychological applications. Psychological Methods, 23(4), 740–756.

Bulteel, K., Tuerlinckx, F., Brose, A., & Ceulemans, E. (2016a). Clustering vector autoregressive models: Capturing qualitative differences in within-person dynamics. Frontiers in Psychology, 7, 1540.

Bulteel, K., Tuerlinckx, F., Brose, A., & Ceulemans, E. (2016b). Using raw VAR regression coefficients to build networks can be misleading. Multivariate Behavioral Research, 51(2–3), 330–344.

Bulteel, K., Tuerlinckx, F., Brose, A., & Ceulemans, E. (2018b). Improved insight into and prediction of network dynamics by combining VAR and dimension reduction. Multivariate Behavioral Research, 53(6), 853–875.

Campbell, J. Y., & Thompson, S. B. (2008). Predicting excess stock returns out of sample: Can anything beat the historical average? The Review of Financial Studies, 21(4), 1509–1531.

Cattell, R. B. (1966). The scree test for the number of factors. Multivariate Behavioral Research, 1(2), 245–276.

Ceulemans, E., & Kiers, H. A. (2009). Discriminating between strong and weak structures in three-mode principal component analysis. British Journal of Mathematical and Statistical Psychology, 62(3), 601–620.

Ceulemans, E., & Kiers, H. A. L. (2006). Selecting among three-mode principal component models of different types and complexities: A numerical convex hull based method. British Journal of Mathematical and Statistical Psychology, 59(1), 133–150.

Ceulemans, E., Timmerman, M. E., & Kiers, H. A. (2011). The CHull procedure for selecting among multilevel component solutions. Chemometrics and Intelligent Laboratory Systems, 106(1), 12–20.

Ceulemans, E., & Van Mechelen, I. (2005). Hierarchical classes models for three-way three-mode binary data: Interrelations and model selection. Psychometrika, 70(3), 461–480.

Ceulemans, E., Wilderjans, T. F., Kiers, H. A. L., & Timmerman, M. E. (2016). MultiLevel simultaneous component analysis: A computational shortcut and software package. Behavior Research Methods, 48, 1008–1020.

Clark, T. S., & Linzer, D. A. (2015). Should I use fixed or random effects? Political Science Research and Methods, 3(2), 399–408.

Cohen, J., Cohen, P., West, S. G., & Aiken, L. S. (2013). Applied multiple regression/correlation analysis for the behavioral sciences. Milton Park: Routledge.

Crawford, A. V., Green, S. B., Levy, R., Lo, W. J., Scott, L., Svetina, D., & Thompson, M. S. (2010). Evaluation of parallel analysis methods for determining the number of factors. Educational and Psychological Measurement, 70(6), 885–901.

Eisele, G., Lafit, G., Vachon, H., Kuppens, P., Houben, M., Myin-Germeys, I., & Viechtbauer, W. (2020). Affective structure, measurement invariance, and reliability across different experience sampling protocols.

Ernst, A. F., Timmerman, M. E., Jeronimus, B. F., & Albers, C. J. (2019). Insight into individual differences in emotion dynamics with clustering. Assessment, first online.

Friedman, J., Hastie, T., & Tibshirani, R. (2001). The elements of statistical learning. Springer series in statistics (Vol. 1(10)). New York: Springer.

Friedman, J. H. (1997). On bias, variance, 0/1-loss, and the curse-of-dimensionality. Data Mining and Knowledge Discovery, 1(1), 55–77.

Gates, K. M., & Molenaar, P. C. (2012). Group search algorithm recovers effective connectivity maps for individuals in homogeneous and heterogeneous samples. NeuroImage, 63(1), 310–319.

Gelman, A. (2005). Analysis of variance-why it is more important than ever. Annals of Statistics, 33(1), 1–53.

Goldstein, H. (2011). Multilevel statistical models (Vol. 922). Hoboken: Wiley.

Hamaker, E., Ceulemans, E., Grasman, R., & Tuerlinckx, F. (2015). Modeling affect dynamics: State of the art and future challenges. Emotion Review, 7(4), 316–322.

Hamilton, J. (1994). Time series analysis (Vol. 2). Princeton: Princeton University Press.

Horn, J. L. (1965). A rationale and test for the number of factors in factor analysis. Psychometrika, 30(2), 179–185.

Hox, J. J. (2010). Multilevel analysis: Techniques and applications. New York, NY: Routledge.

Jongerling, J., Laurenceau, J. P., & Hamaker, E. L. (2015). A multilevel AR(1) model: Allowing for inter-individual differences in trait-scores, inertia, and innovation variance. Multivariate Behavioral Research, 50(3), 334–349.

Kiers, H. A., & Smilde, A. K. (2007). A comparison of various methods for multivariate regression with highly collinear variables. Statistical Methods and Applications, 16(2), 193–228.

Kiers, H. A. L., & ten Berge, J. M. F. (1994a). Hierarchical relations between methods for simultaneous component analysis and a technique for rotation to a simple simultaneous structure. British Journal of Mathematical and Statistical Psychology, 47, 109–126.

Kiers, H. A. L., & ten Berge, J. M. F. (1994b). The Harris-Kaiser independent cluster rotation as a method for rotation to simple component weights. Psychometrika, 59, 81–90.

Krone, T., Albers, C. J., Kuppens, P., & Timmerman, M. E. (2018). A multivariate statistical model for emotion dynamics. Emotion, 18(5), 739–754.

Krone, T., Albers, C. J., & Timmerman, M. E. (2016). Comparison of estimation procedures for multilevel AR(1) models. Frontiers in Psychology, 7, 486.

Krone, T., Albers, C. J., & Timmerman, M. E. (2017). A comparative simulation study of AR(1) estimators in short time series. Quality & Quantity, 51(1), 1–21.

Kuppens, P., Allen, N. B., & Sheeber, L. B. (2010). Emotional inertia and psychological maladjustment. Psychological Science, 21(7), 984–991.

Kuppens, P., Champagne, D., & Tuerlinckx, F. (2012). The dynamic interplay between appraisal and core affect in daily life. Frontiers in Psychology, 3, 380.

Lafit, G., Adolf, J., Dejonckheere, E., Myin-Germeys, I., Viechtbauer, W., & Ceulemans, E. (2021). Selection of the number of participants in intensive longitudinal studies: A user-friendly shiny app and tutorial for performing power analysis in multilevel regression models that account for temporal dependencies. In Advances in methods and practices in psychological science.

Larson, R., & Csikszentmihalyi, M. (1983). The experience sampling method. In H. T. Reis (Ed.), New directions for methodology of social and behavioral science (pp. 41–56). San Francisco: Jossey-Bass.

Liu, S. (2017). Person-specific versus multilevel autoregressive models: Accuracy in parameter estimates at the population and individual levels. British Journal of Mathematical and Statistical Psychology, 70(3), 480–498.

Lorenzo-Seva, U., Timmerman, M. E., & Kiers, H. A. (2011). The Hull method for selecting the number of common factors. Multivariate Behavioral Research, 46(2), 340–364.

Lütkepohl, H. (2005). New introduction to multiple time series analysis. Berlin: Springer.

Mansueto, A. C., Wiers, R., van Weert, J. C., Schouten, B. C., & Epskamp, S. (2020). Investigating the feasibility of idiographic network models.

McNeish, D., & Hamaker, E. L. (2020). A primer on two-level dynamic structural equation models for intensive longitudinal data in Mplus. Psychological Methods, 25(5), 610.

Merz, E. L., & Roesch, S. C. (2011). Modeling trait and state variation using multilevel factor analysis with PANAS daily diary data. Journal of Research in Personality, 45(1), 2–9.

Molenaar, P. (2004). A manifesto on psychology as idiographic science: Bringing the person back into scientific psychology, this time forever. Measurement, 2(4), 201–218.

Morren, M., Van Dulmen, S., Ouwerkerk, J., & Bensing, J. (2009). Compliance with momentary pain measurement using electronic diaries: a systematic review. European Journal of Pain, 13(4), 354–365.

Müller, S., Scealy, J. L., & Welsh, A. H. (2013). Model selection in linear mixed models. Statistical Science, 28(2), 135–167.

Muthén, B., & Muthén, B. O. (2009). Statistical analysis with latent variables. New York, NY: Wiley.

Myin-Germeys, I., Kasanova, Z., Vaessen, T., Vachon, H., Kirtley, O., Viechtbauer, W., & Reininghaus, U. (2018). Experience sampling methodology in mental health research: New insights and technical developments. World Psychiatry, 17(2), 123–132.

Ono, M., Schneider, S., Junghaenel, D. U., & Stone, A. A. (2019). What affects the completion of ecological momentary assessments in chronic pain research? An individual patient data meta-analysis. Journal of Medical Internet Research, 21(2), e11398.

Pe, M. L., Kircanski, K., Thompson, R. J., Bringmann, L. F., Tuerlinckx, F., Mestdagh, M., & Kuppens, P. (2015). Emotion-network density in major depressive disorder. Clinical Psychological Science, 3(2), 292–300.

R Core Team. (2020). R: A language and environment for statistical computing. Vienna: R Foundation for Statistical Computing.

Raudenbush, S. W., & Bryk, A. S. (2002). Hierarchical linear models: Applications and data analysis methods (2nd ed.). Thousand Oaks, CA: Sage.

Schepers, J., Ceulemans, E., & Van Mechelen, I. (2008). Selecting among multi-mode partitioning models of different complexities: A comparison of four model selection criteria. Journal of Classification, 25(1), 67.

Schultzberg, M., & Muthén, B. (2018). Number of subjects and time points needed for multilevel time-series analysis: A simulation study of dynamic structural equation modeling. Structural Equation Modeling: A Multidisciplinary Journal, 25(4), 495–515.

Schuurman, N. K., & Hamaker, E. L. (2019). Measurement error and person-specific reliability in multilevel autoregressive modeling. Psychological Methods, 24(1), 70.

Sels, L., Ceulemans, E., Bulteel, K., & Kuppens, P. (2016). Emotional interdependence and well-being in close relationships. Frontiers in Psychology, 7, 283.

Song, H., & Zhang, Z. (2014). Analyzing multiple multivariate time series data using multilevel dynamic factor models. Multivariate Behavioral Research, 49(1), 67–77.

Timmerman, M. E., & Kiers, H. A. L. (2003). Four simultaneous component models of multivariate time series for more than one subject to model intraindividual and interindividual differences. Psychometrika, 68, 105–122.

Trull, T. J., & Ebner-Priemer, U. (2013). Ambulatory assessment. Annual Review of Clinical Psychology, 9, 151–176.

Vachon, H., Viechtbauer, W., Rintala, A., & Myin-Germeys, I. (2019). Compliance and retention with the experience sampling method over the continuum of severe mental disorders: Meta-analysis and recommendations. Journal of Medical Internet Research, 21(12), e14475.

Wainer, H. (1976). Estimating coefficients in linear models: It don't make no nevermind. Psychological Bulletin, 83(2), 213.

Watson, D., Clark, L. A., & Tellegen, A. (1988). Development and validation of brief measures of positive and negative affect: The PANAS scales. Journal of Personality and Social Psychology, 54(6), 1063.

Wichers, M. (2014). The dynamic nature of depression: A new micro-level perspective of mental disorder that meets current challenges. Psychological Medicine, 44(7), 1349–1360.

Wigman, J. T. W., Van Os, J., Borsboom, D., Wardenaar, K. J., Epskamp, S., Klippel, A., & Wichers, M. (2015). Exploring the underlying structure of mental disorders: Cross-diagnostic differences and similarities from a network perspective using both a top-down and a bottom-up approach. Psychological Medicine, 45(11), 2375–2387.

Wilderjans, T. F., Ceulemans, E., & Meers, K. (2013). CHull: A generic convex hull based model selection method. Behavior Research Methods, 45(1), 1–15.

Yarkoni, T., & Westfall, J. (2017). Choosing prediction over explanation in psychology: Lessons from machine learning. Perspectives on Psychological Science, 12(6), 1100–1122.

Zautra, A. J., Affleck, G. G., Tennen, H., Reich, J. W., & Davis, M. C. (2005). Dynamic approaches to emotions and stress in everyday life: Bolger and Zuckerman reloaded with positive as well as negative affects. Journal of Personality, 73(6), 1511–1538.

Acknowledgements

The resources and services used in this work were provided by the VSC (Flemish Supercomputer Center), funded by the Research Foundation - Flanders (FWO) and the Flemish Government.

Funding

The research presented in this article was supported by research grants from the Fund for Scientific Research-Flanders (FWO; Project No. G0C9821N) and from the Research Council of KU Leuven (C14/19/054; iBOF/21/090) awarded to E. Ceulemans.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix

Performance Measures to Evaluate Estimation Accuracy. While we cannot directly estimate the mean squared bias or variance of the predictions in our CV setting, an easy way to shed some light on the relation between predictive accuracy and estimation accuracy is to investigate how accurately person-specific and multilevel VAR(1) models estimate the elements of the true transition matrix \(\varvec{\Psi }_i\) underlying our simulated data. Specifically, using the complete data sets (i.e., without further splitting them into a training and a test part), we compute the mean squared estimation error for the autoregressive effects, that is, the mean of the squared differences between \(\psi _{i j j}\) and \({\hat{\psi }}_{i j j}\), which denote the true and estimated autoregressive effects.

Additionally, we compute the mean squared estimation error for the cross-regressive effects, that is, the mean of the squared differences between \(\psi _{i j k}\) and \({\hat{\psi }}_{i j k}\), which denote the true and estimated cross-regressive effects (\(j \ne k\)). We expect these two MSE measures to be strongly correlated with how well a considered modeling approach estimates the target function \(f(\mathbf{Y }_{it-1})\). We do this for Study I and report the results below (Tables 9 and 10). Figures 5–12 display the histograms of the distribution of the auto- and cross-regressive effects across persons when data are generated from a multilevel VAR(1) model with random effects, a person-specific VAR(1) model, or a cluster-specific VAR(1) model.
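The two estimation-error measures can be illustrated with a short Python sketch. It assumes that the squared errors are averaged over all persons and over all diagonal (autoregressive) or off-diagonal (cross-regressive) elements of the transition matrices; this normalization, the function name `transition_mse`, and the array layout are assumptions made for the example rather than a description of the authors' code.

```python
import numpy as np

def transition_mse(psi_true, psi_hat):
    """Mean squared estimation error of VAR(1) transition matrices.

    psi_true, psi_hat: arrays of shape (N, P, P), holding one P x P
    transition matrix per person (true and estimated, respectively).
    Returns (mse_auto, mse_cross), averaged over persons and effects
    (the averaging scheme is an assumption of this sketch).
    """
    psi_true = np.asarray(psi_true, dtype=float)
    psi_hat = np.asarray(psi_hat, dtype=float)
    sq_err = (psi_hat - psi_true) ** 2

    n_vars = sq_err.shape[-1]
    on_diag = np.eye(n_vars, dtype=bool)      # marks autoregressive effects

    mse_auto = sq_err[:, on_diag].mean()      # elements psi_ijj
    mse_cross = sq_err[:, ~on_diag].mean()    # elements psi_ijk with j != k
    return mse_auto, mse_cross

# Toy example: N = 2 persons, P = 2 variables, true effects all zero.
psi_true = np.zeros((2, 2, 2))
psi_hat = np.tile(np.array([[0.1, 0.2],
                            [0.2, 0.1]]), (2, 1, 1))
mse_auto, mse_cross = transition_mse(psi_true, psi_hat)
```

In the toy example every autoregressive estimate is off by 0.1 and every cross-regressive estimate by 0.2, so the two measures come out at roughly 0.01 and 0.04.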

Cite this article

Lafit, G., Meers, K. & Ceulemans, E. A Systematic Study into the Factors that Affect the Predictive Accuracy of Multilevel VAR(1) Models. Psychometrika 87, 432–476 (2022). https://doi.org/10.1007/s11336-021-09803-z

DOI: https://doi.org/10.1007/s11336-021-09803-z