Abstract

Horn’s parallel analysis is a widely used method for assessing the number of principal components and common factors. We discuss the theoretical foundations of parallel analysis for principal components based on a covariance matrix by making use of arguments from random matrix theory. In particular, we show that (i) for the first component, parallel analysis is an inferential method equivalent to the Tracy–Widom test, (ii) its use to test higher-order eigenvalues is equivalent to the use of the joint distribution of the eigenvalues, and thus should be discouraged, and (iii) a formal test for higher-order components can be obtained based on a Tracy–Widom approximation. We illustrate the performance of the two testing procedures using simulated data generated under both a principal component model and a common factor model. For the principal component model, the Tracy–Widom test performs consistently in all conditions, while parallel analysis shows unpredictable behavior for higher-order components. For the common factor model, including major and minor factors, both procedures are heuristic approaches, with variable performance. We conclude that the Tracy–Widom procedure is preferred over parallel analysis for statistically testing the number of principal components based on a covariance matrix.

Abbreviations

- \({\mathbf {X}}\): Matrices (bold font, uppercase)
- \(x_{ij}\): Element of \({\mathbf {X}}\) in the i-th row, j-th column
- \({\varvec{\Sigma }}\): Population covariance matrix
- \({\mathbf {C}}\): Sample covariance matrix
- \(\lambda _k\): kth eigenvalue of the population covariance matrix
- \(l_k\): kth eigenvalue of the sample covariance matrix
- \(L_k\): Tracy–Widom statistic for the \(l_k\)
- s: Argument of the Tracy–Widom cdf and pdf

References

Airy, G. (1838). On the intensity of light in the neighbourhood of a caustic. Transactions of the Cambridge Philosophical Society, 6, 379–402.

Baik, J., Ben Arous, G., & Péché, S. (2005). Phase transition of the largest eigenvalue for nonnull complex sample covariance matrices. Annals of Probability, 33, 1643–1697.

Baik, J., & Silverstein, J. W. (2006). Eigenvalues of large sample covariance matrices of spiked population models. Journal of Multivariate Analysis, 97(6), 1382–1408.

Bao, Z., Pan, G., & Zhou, W. (2012). Tracy-Widom law for the extreme eigenvalues of sample correlation matrices. Electronic Journal of Probability, 17(88), 1–32.

Barelds, D. P., & Dijkstra, P. (2010). Narcissistic personality inventory: Structure of the adapted Dutch version. Scandinavian Journal of Psychology, 51(2), 132–138.

Bartlett, M. S. (1950). Tests of significance in factor analysis. British Journal of Statistical Psychology, 3(2), 77–85.

Bornemann, F. (2009). On the numerical evaluation of distributions in random matrix theory: A review. arXiv:0904.1581.

Bornemann, F. (2010). On the numerical evaluation of Fredholm determinants. Mathematics of Computation, 79(270), 871–915.

Buja, A., & Eyübğolu, N. (1992). Remarks on parallel analysis. Multivariate Behavioral Research, 27(4), 509–540.

Cattell, R. B. (1966). The scree test for the number of factors. Multivariate Behavioral Research, 1(2), 245–276.

Ceulemans, E., & Kiers, H. A. (2006). Selecting among three-mode principal component models of different types and complexities: A numerical convex hull based method. British Journal of Mathematical and Statistical Psychology, 59(1), 133–150.

Chiani, M. (2012). Distribution of the largest eigenvalue for real Wishart and Gaussian random matrices and a simple approximation for the Tracy–Widom distribution. arXiv:1209.3394.

Crawford, A. V., Green, S. B., Levy, R., Lo, W.-J., Scott, L., Svetina, D., et al. (2010). Evaluation of parallel analysis methods for determining the number of factors. Educational and Psychological Measurement, 70(6), 885–901.

Deming, W. E. (1966). Some theory of sampling. New York: Courier Dover Publications.

DiCiccio, T. J., & Efron, B. (1996). Bootstrap confidence intervals. Statistical Science, 11, 189–212.

Dinno, A. (2009). Exploring the sensitivity of Horn’s parallel analysis to the distributional form of random data. Multivariate Behavioral Research, 44(3), 362–388.

Efron, B., & Tibshirani, R. J. (1993). The bootstrap estimate of standard error. In An introduction to the bootstrap (pp. 45–59). New York: Springer.

Efron, B. (1994). Missing data, imputation, and the bootstrap. Journal of the American Statistical Association, 89(426), 463–475.

Fabrigar, L. R., Wegener, D. T., MacCallum, R. C., & Strahan, E. J. (1999). Evaluating the use of exploratory factor analysis in psychological research. Psychological Methods, 4(3), 272.

Ford, J. K., MacCallum, R. C., & Tait, M. (1986). The application of exploratory factor analysis in applied psychology: A critical review and analysis. Personnel Psychology, 39(2), 291–314.

Garrido, L. E., Abad, F. J., & Ponsoda, V. (2013). A new look at Horn’s parallel analysis with ordinal variables. Psychological Methods, 18(4), 454.

Glorfeld, L. W. (1995). An improvement on Horn’s parallel analysis methodology for selecting the correct number of factors to retain. Educational and Psychological Measurement, 55(3), 377–393.

Green, S. B., Levy, R., Thompson, M. S., Lu, M., & Lo, W.-J. (2012). A proposed solution to the problem with using completely random data to assess the number of factors with parallel analysis. Educational and Psychological Measurement, 72(3), 357–374. http://epm.sagepub.com/content/72/3/357.abstract doi:10.1177/0013164411422252

Guttman, L. (1954). Some necessary conditions for common-factor analysis. Psychometrika, 19(2), 149–161.

Harding, M. C. (2008). Explaining the single factor bias of arbitrage pricing models in finite samples. Economics Letters, 99(1), 85–88.

Hastings, S., & McLeod, J. (1980). A boundary value problem associated with the second Painlevé transcendent and the Korteweg-de Vries equation. Archive for Rational Mechanics and Analysis, 73(1), 31–51.

Hattie, J. (1985). Methodology review: Assessing unidimensionality of tests and items. Applied Psychological Measurement, 9(2), 139–164.

Hayton, J. C., Allen, D. G., & Scarpello, V. (2004). Factor retention decisions in exploratory factor analysis: A tutorial on parallel analysis. Organizational Research Methods, 7(2), 191–205.

Horn, J. L. (1965). A rationale and test for the number of factors in factor analysis. Psychometrika, 30(2), 179–185.

Humphreys, L. G., & Montanelli, R. G., Jr. (1975). An investigation of the parallel analysis criterion for determining the number of common factors. Multivariate Behavioral Research, 10(2), 193–205.

Jackson, D. A. (1993). Stopping rules in principal components analysis: A comparison of heuristical and statistical approaches. Ecology, 74(8), 2204–2214.

Johnstone, I. M. (2006). High dimensional statistical inference and random matrices. arXiv:math/0611589.

Johnstone, I. M., Ma, Z., Perry, P. O., & Shahram, M. (2009). RMTstat: Distributions, statistics and tests derived from random matrix theory [Computer software manual]. (R package version 0.2)

Johnstone, I. M. (2001). On the distribution of the largest eigenvalue in principal components analysis. Annals of Statistics, 29(2), 295–327.

Jolliffe, I. (2005). Principal component analysis. New York: Wiley.

Karoui, N. E. (2003). On the largest eigenvalue of Wishart matrices with identity covariance when n, p and p/n tend to infinity. arXiv:math/0309355.

Karoui, N. E. (2007). Tracy–Widom limit for the largest eigenvalue of a large class of complex sample covariance matrices. The Annals of Probability, 35, 663–714.

Kendall, M. G., & Yule, G. U. (1950). An introduction to the theory of statistics. London: Charles Griffin & Company.

Koster, M., Timmerman, M. E., Nakken, H., Pijl, S. J., & van Houten, E. J. (2009). Evaluating social participation of pupils with special needs in regular primary schools. European Journal of Psychological Assessment, 25(4), 213–222.

Kritchman, S., & Nadler, B. (2008). Determining the number of components in a factor model from limited noisy data. Chemometrics and Intelligent Laboratory Systems, 94(1), 19–32.

Kuppens, P., Ceulemans, E., Timmerman, M. E., Diener, E., & Kim-Prieto, C. (2006). Universal intracultural and intercultural dimensions of the recalled frequency of emotional experience. Journal of Cross-Cultural Psychology, 37(5), 491–515.

Ledesma, R. D., & Valero-Mora, P. (2007). Determining the number of factors to retain in EFA: an easy-to-use computer program for carrying out parallel analysis. Practical Assessment, Research & Evaluation, 12(2), 1–11.

Lorenzo-Seva, U., Timmerman, M. E., & Kiers, H. A. (2011). The hull method for selecting the number of common factors. Multivariate Behavioral Research, 46(2), 340–364.

Pan, G. (2012). Comparison between two types of large sample covariance matrices. In Institut Henri Poincaré: Ann.

Patterson, N., Price, A. L., & Reich, D. (2006). Population structure and eigenanalysis. PLoS Genetics, 2(12), e190.

Paul, D. (2007). Asymptotics of sample eigenstructure for a large dimensional spiked covariance model. Statistica Sinica, 17(4), 1617.

Paul, D., & Aue, A. (2014). Random matrix theory in statistics: A review. Journal of Statistical Planning and Inference, 150, 1–29.

Peres-Neto, P. R., Jackson, D. A., & Somers, K. M. (2005). How many principal components? Stopping rules for determining the number of non-trivial axes revisited. Computational Statistics & Data Analysis, 49(4), 974–997.

Pillai, N. S., & Yin, J. (2012). Edge universality of correlation matrices. The Annals of Statistics, 40(3), 1737–1763.

Raskin, R., & Hall, C. (1979). A narcissistic personality inventory. Psychological Reports, 45(2), 590–590.

Raskin, R., & Terry, H. (1988). A principal-components analysis of the narcissistic personality inventory and further evidence of its construct validity. Journal of Personality and Social Psychology, 54(5), 890.

Rice, S., & Church, M. (1996). Sampling surficial fluvial gravels: the precision of size distribution percentile estimates. Journal of Sedimentary Research, 66(3), 654–665.

Saccenti, E., Smilde, A. K., Westerhuis, J. A., & Hendriks, M. M. (2011). Tracy-Widom statistic for the largest eigenvalue of autoscaled real matrices. Journal of Chemometrics, 25(12), 644–652.

Saccenti, E., & Camacho, J. (2015). Determining the number of components in principal components analysis: A comparison of statistical, crossvalidation and approximated methods. Chemometrics and Intelligent Laboratory Systems, 149(Part A), 99–116.

Saccenti, E., & Timmerman, M. E. (2016). Approaches to sample size determination for multivariate data: Applications to PCA and PLS-DA of omics data. Journal of Proteome Research, 15, 2379–2393.

Smits, I. A., Timmerman, M. E., & Meijer, R. R. (2012). Exploratory Mokken scale analysis as a dimensionality assessment tool: Why scalability does not imply unidimensionality. Applied Psychological Measurement, 36(6), 516–539.

Soshnikov, A. (2002). A note on universality of the distribution of the largest eigenvalues in certain sample covariance matrices. Journal of Statistical Physics, 108(5), 1033–1056.

Thompson, B. (2004). Exploratory and confirmatory factor analysis: Understanding concepts and applications. Washington, DC: American Psychological.

Timmerman, M. E., & Lorenzo-Seva, U. (2011). Dimensionality assessment of ordered polytomous items with parallel analysis. Psychological Methods, 16(2), 209.

Tracy, C. A., & Widom, H. (2009). The distributions of random matrix theory and their applications. In New trends in mathematical physics (pp. 753–765). New York: Springer.

Tracy, C. A., & Widom, H. (1993). Level-spacing distributions and the Airy kernel. Physics Letters B, 305(1), 115–118.

Tracy, C. A., & Widom, H. (1994). Level-spacing distributions and the Airy kernel. Communications in Mathematical Physics, 159(1), 151–174.

Tracy, C. A., & Widom, H. (1996). On orthogonal and symplectic matrix ensembles. Communications in Mathematical Physics, 177(3), 727–754.

Tucker, L. R., Koopman, R. F., & Linn, R. L. (1969). Evaluation of factor analytic research procedures by means of simulated correlation matrices. Psychometrika, 34(4), 421–459.

Wilderjans, T. F., Ceulemans, E., & Meers, K. (2013). CHull: A generic convex-hull-based model selection method. Behavior Research Methods, 45(1), 1–15.

Wishart, J. (1928). The generalised product moment distribution in samples from a normal multivariate population. Biometrika pp. 32–52.

Zwick, W. R., & Velicer, W. F. (1982). Factors influencing four rules for determining the number of components to retain. Multivariate Behavioral Research, 17(2), 253–269.

Acknowledgments

The authors thank Dick Barelds, Marloes Koster, and Sip Jan Pijl for sharing their data. This work was partly supported by the European Commission-funded FP7 project INFECT (Contract No. 305340).

Appendices

1.1 Appendix 1: The Tracy–Widom distribution

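The Tracy–Widom distribution (Tracy & Widom, 1993, 1994, 1996) is defined as

$$\begin{aligned} \mathrm {TW}_1(s) = \exp \left( -\frac{1}{2} \int _s^{\infty } \left[ q(x) + (x-s)\, q^2(x) \right] \mathrm {d}x \right) . \end{aligned}$$

The function q(x) is the unique Hastings–McLeod solution (Hastings & McLeod, 1980) of the nonlinear Painlevé differential equation

$$\begin{aligned} q''(x) = x\, q(x) + 2\, q^3(x) \end{aligned}$$

satisfying the boundary condition

$$\begin{aligned} q(x) \sim \mathrm {Ai}(x) \quad \text {as } x \rightarrow \infty , \end{aligned}$$

where Ai(t) is the Airy function (Airy, 1838) and

$$\begin{aligned} \mathrm {Ai}(t) = \frac{1}{\pi } \int _0^{\infty } \cos \left( \frac{u^3}{3} + t u \right) \mathrm {d}u . \end{aligned}$$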

This distribution was found to be the limiting law for the largest eigenvalue of Gaussian symmetric \(n \times n\) matrices (the so-called Gaussian Orthogonal Ensemble, GOE). Johnstone (2001) showed that the same limiting distribution holds for the largest eigenvalue of the covariance matrix of a rectangular \(n \times p\) data matrix when both n and p are large.
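As a minimal sketch of how a largest eigenvalue can be normalized before it is compared with the Tracy–Widom law, the snippet below applies the centering and scaling constants given by Johnstone (2001). It assumes that `l1` is the largest eigenvalue of the unnormalized cross-product matrix \({\mathbf {X}}^{T}{\mathbf {X}}\) of an \(n \times p\) matrix with independent standard normal entries; an eigenvalue of a sample covariance matrix would first have to be rescaled accordingly. The function names are illustrative and do not come from this paper.

```python
import math


def johnstone_constants(n, p):
    """Centering (mu) and scaling (sigma) constants of Johnstone (2001).

    Assumes an n x p data matrix with i.i.d. N(0, 1) entries and the
    largest eigenvalue taken from X^T X (not divided by n).
    """
    if n < 2 or p < 1:
        raise ValueError("need n >= 2 and p >= 1")
    a = math.sqrt(n - 1) + math.sqrt(p)
    mu = a ** 2
    sigma = a * (1.0 / math.sqrt(n - 1) + 1.0 / math.sqrt(p)) ** (1.0 / 3.0)
    return mu, sigma


def tw_statistic(l1, n, p):
    """Normalize l1 so that, under the null, it is approximately TW_1."""
    mu, sigma = johnstone_constants(n, p)
    return (l1 - mu) / sigma
```

The resulting value would then be compared with an upper percentile of \(\mathrm {TW}_1\) obtained from a numerical toolbox; that lookup is deliberately left out of the sketch.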

1.2 Appendix 2: The Baik–Ben Arous–Péché phase transition

Baik et al. (2005) provided the asymptotics of the distribution of the largest eigenvalue(s) of a sample covariance matrix under the spiked population model. These results were proved for complex data, but there is strong evidence that they also hold for real data. For this reason, the results in Baik et al. (2005) can be stated in the form of a conjecture, the so-called Baik–Ben Arous–Péché (BBP) conjecture.

Conjecture 1

Let \(\lambda _1\) be the leading eigenvalue of the population covariance matrix with \(\lambda _k = 1\) for \(2\le k \le p\) and let \(l_1\) be the largest sample eigenvalue. In the asymptotic regime \((n,p) \rightarrow \infty \) with finite limit ratio p / n, it holds that

(1) If

$$\begin{aligned} \lambda _1 < 1 + \sqrt{\frac{p}{n}} \end{aligned}\tag{17}$$

then \(l_1\), when properly normalized to \(L_1\), will have the same distribution as when \(\lambda _1=1\), that is, it will be Tracy–Widom distributed.

(2) If

$$\begin{aligned} \lambda _1 \ge 1 + \sqrt{\frac{p}{n}} \end{aligned}\tag{18}$$

then \(L_1\) will be almost surely unbounded.

Statement (2) was proved for real data in Baik and Silverstein (2006), and Paul (2007) showed that in the real case, \(L_1\) will exhibit Gaussian fluctuations.

The behavior of \(L_1\) differs depending on the size of \(\lambda _1\), hence the name phase transition. As \((n,p) \rightarrow \infty \), the phase transition becomes arbitrarily sharp. Stated otherwise, if \(\lambda _1\) is below the BBP limit there will be little chance to detect structure in the data, as the eigenvalues are distributed according to the Tracy–Widom distribution, that is, as noise eigenvalues. On the contrary, if \(\lambda _1\) is above the BBP limit, detection of structure will be easier (Patterson et al., 2006). This phenomenon has recently been used to explain some problems arising in eigenanalysis when applied to population genetic studies (Patterson et al., 2006) and economics (Harding, 2008). Similar results hold for higher-order eigenvalues: it is enough to replace \(l_1\) and \(\lambda _1\) with \(l_k\) and \(\lambda _k\) in Equations (17) and (18). For more details, see Karoui (2007), Johnstone (2006), Paul (2007), Paul & Aue (2014), and Tracy & Widom (2009).
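The threshold in Equations (17) and (18) is simple to evaluate. The following sketch assumes, as in Conjecture 1, that the noise eigenvalues of the population covariance matrix equal 1, and it applies the same threshold to each candidate \(\lambda _k\); the function names are hypothetical.

```python
import math


def bbp_threshold(n, p):
    """BBP limit 1 + sqrt(p / n) for unit-variance noise (assumption)."""
    if n <= 0 or p <= 0:
        raise ValueError("n and p must be positive")
    return 1.0 + math.sqrt(p / n)


def above_bbp_limit(population_eigenvalues, n, p):
    """Flag each population eigenvalue that satisfies Equation (18)."""
    limit = bbp_threshold(n, p)
    return [lam >= limit for lam in population_eigenvalues]
```

For example, with \(n = 400\) and \(p = 100\) the limit is 1.5, so a population eigenvalue of 3.0 lies above it while one of 1.4 does not.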

An interesting aspect of the BBP phase transition is that it provides one of the few examples of power analysis in the multivariate setting (Saccenti & Timmerman, 2016). For a given problem with a fixed number of variables p, if \(\lambda _1\) were known, Equation (18) would give a direct estimate of the sample size needed to detect the presence of structure in the data. It also follows from Equation (18) that increasing the sample size, rather than the number of variables, is advantageous for detecting structure above the BBP threshold, but if \(\lambda _1\) is below the BBP threshold there is no gain in increasing the sample size (Patterson et al., 2006).
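Solving Equation (18) for n gives \(n \ge p / (\lambda _1 - 1)^2\) whenever \(\lambda _1 > 1\). A minimal sketch of this sample size calculation is given below, under the assumptions of unit noise eigenvalues and a known \(\lambda _1\); the function name is illustrative only.

```python
import math


def min_sample_size(lambda_1, p):
    """Smallest integer n with lambda_1 >= 1 + sqrt(p / n).

    Obtained by rearranging Equation (18); assumes unit noise
    eigenvalues and a known leading population eigenvalue.
    """
    if lambda_1 <= 1.0:
        raise ValueError("lambda_1 must exceed 1 for a finite sample size")
    if p <= 0:
        raise ValueError("p must be positive")
    return math.ceil(p / (lambda_1 - 1.0) ** 2)
```

Because the result is an asymptotic bound evaluated in floating point, it is best read as an order-of-magnitude guide rather than an exact requirement.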

1.3 Appendix 3: A Note on Percentile Estimation

Here, we briefly examine the quality of the estimates of the percentiles of the null distribution obtained using parallel analysis. Consistent with the rest of the paper, we take the percentiles of the Tracy–Widom distribution as the target against which to compare the estimates obtained using parallel analysis. For the Tracy–Widom distribution, the percentiles can be calculated with almost arbitrary precision using computational toolboxes (see Bornemann, 2009, 2010). In contrast, obtaining reliable estimates of the percentiles of a population density function from an empirical distribution is a known problem even when the parameters of the population density (i.e., mean and variance) are known (Efron & Tibshirani, 1993; Efron, 1994; Rice & Church, 1996; DiCiccio & Efron, 1996). Using simulations, we found that the error in the percentile estimates decreases, as expected, with the number N of realizations used to build the empirical distribution. This is also indicated by the formula

where \(y_{100-\alpha } = \mathrm{TW}^{-1}(x_{100-\alpha })\), \(\sigma _\mathrm{TW}\) is the standard deviation of \(\mathrm{TW}_1(s)\), and P is the proportion \(100-\alpha \) (see e.g., Kendall & Yule, 1950; Deming, 1966; Rice & Church, 1996).

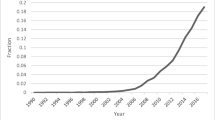

Figure 6 shows the results of a simulation in which the 95 and \(99\,\%\) percentiles of the Tracy–Widom distribution were estimated using different numbers of realizations N, with 10 replicates. As anticipated, the error decreases with N. Another aspect to be considered is the sampling variability: for a fixed N, different realizations may lead to (slightly) different percentile estimates, and this variability can be large if the number of realizations is limited. This is clearly shown in Figure 6: the estimates tend to be very unstable for small numbers of realizations (\(N<10^3\)) (panel a), and even for \(N = 10^5\) some instability of the estimates can still be observed (panel d).

With \(N= 10^5\), the relative error is slightly below \(1\,\%\). In the literature, some authors have recommended \(N= 300\) (Ledesma & Valero-Mora, 2007) or \(N = 2500\) (Buja & Eyübğolu, 1992), the latter being a number that can still lead to a relative error in the range of \(10\,\%\). Theoretical estimates of the estimation error can be obtained using the Yule–Kendall formula. This is outside the scope of this paper, but preliminary simulations seem to indicate that \(N>10^7\) realizations are needed to obtain a relative error lower than \(0.1\,\%\).
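The dependence of percentile estimates on N can be illustrated with a small simulation. The sketch below is not the simulation reported in Figure 6: to stay self-contained it draws from a standard normal distribution as a stand-in for the Tracy–Widom null distribution, and it compares the spread of the empirical 95th percentile over 10 replicates with the standard large-sample approximation \(\sqrt{P(1-P)/N}/f(q_P)\) for the standard error of a sample quantile, where f is the density at the true quantile \(q_P\). All names and default values are assumptions made for illustration.

```python
import math
import random
import statistics


def empirical_percentile(sample, prob):
    """Order-statistic estimate of the prob-quantile (no interpolation)."""
    ordered = sorted(sample)
    idx = min(len(ordered) - 1, max(0, math.ceil(prob * len(ordered)) - 1))
    return ordered[idx]


def percentile_spread(n_draws, prob=0.95, replicates=10, seed=0):
    """Standard deviation of the percentile estimate across replicates.

    Draws come from N(0, 1), a stand-in for the null distribution.
    """
    rng = random.Random(seed)
    estimates = [
        empirical_percentile(
            [rng.gauss(0.0, 1.0) for _ in range(n_draws)], prob
        )
        for _ in range(replicates)
    ]
    return statistics.stdev(estimates)


def asymptotic_se(n_draws, prob=0.95):
    """Large-sample standard error of a sample quantile for N(0, 1)."""
    dist = statistics.NormalDist()
    q = dist.inv_cdf(prob)
    return math.sqrt(prob * (1.0 - prob) / n_draws) / dist.pdf(q)


if __name__ == "__main__":
    for n_draws in (100, 1000, 10000):
        print(n_draws, percentile_spread(n_draws), asymptotic_se(n_draws))
```

Under this approximation the standard error shrinks in proportion to \(1/\sqrt{N}\), so each additional digit of precision costs roughly a hundredfold increase in the number of realizations.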

Cite this article

Saccenti, E., Timmerman, M.E. Considering Horn’s Parallel Analysis from a Random Matrix Theory Point of View. Psychometrika 82, 186–209 (2017). https://doi.org/10.1007/s11336-016-9515-z

DOI: https://doi.org/10.1007/s11336-016-9515-z