Abstract

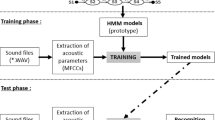

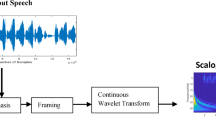

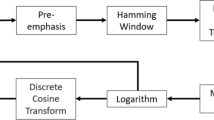

Dysarthric speech recognition requires a learning technique that can capture features specific to dysarthric speech. Dysarthric speech can be regarded as speech with source distortion, or as noisy speech. Hence, as a first step, speech enhancement is performed using variational mode decomposition (VMD) and wavelet thresholding. The reconstructed signals are then fed as input to convolutional neural networks, which learn dysarthric-speech-specific features and produce a speech model that supports dysarthric speech recognition. The performance of the proposed method is evaluated using the UA-Speech database. The average accuracy obtained across speakers with different intelligibility levels is 95.95% with VMD-based enhancement and 91.80% without enhancement. The proposed method also achieves higher accuracy than existing methods based on generative models and artificial neural networks.
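To make the wavelet-thresholding step more concrete, the following is a minimal sketch of soft thresholding in the sense of Donoho (1995), applied to a one-level Haar transform. The abstract does not state the wavelet family, the number of decomposition levels, or the threshold rule, so the Haar wavelet, the single level, and the universal threshold used here are assumptions made for illustration only. The VMD step is not shown, and all function names are hypothetical.

```python
import math
import statistics


def soft_threshold(coeffs, t):
    """Soft thresholding: shrink each coefficient toward zero by t."""
    return [math.copysign(max(abs(c) - t, 0.0), c) for c in coeffs]


def haar_forward(signal):
    """One-level Haar transform of an even-length sequence -> (approx, detail)."""
    s = 1.0 / math.sqrt(2.0)
    approx = [(signal[i] + signal[i + 1]) * s for i in range(0, len(signal), 2)]
    detail = [(signal[i] - signal[i + 1]) * s for i in range(0, len(signal), 2)]
    return approx, detail


def haar_inverse(approx, detail):
    """Invert haar_forward, interleaving the reconstructed sample pairs."""
    s = 1.0 / math.sqrt(2.0)
    out = []
    for a, d in zip(approx, detail):
        out.extend([(a + d) * s, (a - d) * s])
    return out


def denoise(signal):
    """Threshold the detail coefficients and reconstruct the signal.

    Assumption: noise level estimated from the median absolute detail
    coefficient, combined with the universal threshold sigma * sqrt(2 ln N).
    """
    approx, detail = haar_forward(signal)
    sigma = statistics.median([abs(d) for d in detail]) / 0.6745
    t = sigma * math.sqrt(2.0 * math.log(len(signal)))
    return haar_inverse(approx, soft_threshold(detail, t))
```

In a pipeline like the one described, a routine of this kind would presumably be applied to the decomposed modes before reconstruction, but how the authors combine it with VMD is not specified in the abstract.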

Acknowledgements

The authors acknowledge the support of the Biomedical Device and Technology Development, Department of Science and Technology, India. The authors would like to thank Professor Mark Hasegawa-Johnson of the University of Illinois for kindly allowing access to the UA-Speech database. The authors would also like to thank Bharathiar University for providing the necessary support.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

About this article

Cite this article

Rajeswari, R., Devi, T. & Shalini, S. Dysarthric Speech Recognition Using Variational Mode Decomposition and Convolutional Neural Networks. Wireless Pers Commun 122, 293–307 (2022). https://doi.org/10.1007/s11277-021-08899-x

DOI: https://doi.org/10.1007/s11277-021-08899-x