Abstract

If well designed, the results of a Randomised Clinical Trial (RCT) can justify a causal claim linking treatment and effect in the study population; however, additional information might be needed to carry this result over to another population. RCTs have been criticized precisely for failing to provide this sort of information (Cartwright and Stegenga, in: Dawid, Twining, Vasilaki (eds) Evidence, inference and enquiry. Oxford University Press, New York, 2011), as well as for black-boxing important details regarding the mechanisms underpinning the causal law instantiated by the RCT result. On the other hand, so-called In Silico Clinical Trials (ISCTs) face the same criticisms levelled against standard modelling and simulation techniques, and cannot be equated to experiments (see, e.g., Boem and Ratti in: Boniolo, Nathan (eds) Philosophy of molecular medicine: foundational issues in research and practice, Routledge, New York, 2017; Parker in Synthese 169(3):483–496, 2009; Parke in Philos Sci 81(4):516–536, 2014; Diez Roux in Am J Epidemiol 181(2):100–102, 2015 and related discussions in Frigg and Reiss in Synthese 169(3):593–613, 2009; Winsberg in Synthese 169(3):575–592, 2009; Beisbart and Norton in Int Stud Philos Sci 26(4):403–422, 2012). We undertake a formal analysis of both methods in order to identify their distinct contributions to causal inference in the clinical setting. Britton et al.’s study (Proc Natl Acad Sci 110(23):E2098–E2105, 2013) on the impact of ion current variability on cardiac electrophysiology is used for illustrative purposes. We deduce that, by predicting variability through interpolation, ISCTs help with problems regarding the extrapolation of RCT results, and therefore with assessing their external validity. Furthermore, ISCTs can be said to encode “thick” causal knowledge (knowledge about the biological mechanisms underpinning the causal effects at the clinical level), as opposed to the “thin” difference-making information inferred from RCTs. 
Hence, ISCTs and RCTs cannot replace one another; rather, they are complementary, in that the former provide information about the determinants of variability of causal effects, while the latter can, under certain conditions, establish causality in the first place.

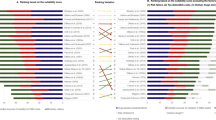

[Reproduced with permission from Britton et al. 2013, Fig. 9]

[Reproduced with permission from Britton et al. 2013, Fig. 10]

[Reproduced with permission from Britton et al. 2013, Fig. 6]


Notes

For the sake of clarity, we will distinguish the two terms as follows: by “computer model” we mean the algorithm or computer program that is built in order to capture the mechanism of the phenomenon under study; by “simulation” instead we mean the actual run of the program.

Keller’s stance has been questioned by Rowbottom (2009), who points out the importance of analogy also in the extrapolation of results from animal models to humans (or, more generally, from species to species). However, this misses the point of distinguishing the two kinds of inference: even if analogy is also used in inference from animal models, it aids extrapolation in a different way. Analogical inferences from organ system to organ system rely on ontological assumptions regarding the affinity of various biological species. Computational models, instead, are intended to reproduce the target system by modelling its hypothesised underlying structure; analogical inference is therefore based here on isomorphism (structural similarity).

Heterogeneity is explicitly taken into account in the evaluation of meta-analyses, where it is used to upgrade or downgrade study quality, but it is rarely considered in its own right. However, heterogeneity is one of the main issues when predicting the effects of drugs in specific individuals or target groups, since these effects result from the causal interaction between the drug and various combinations of triggering factors, which may not be equally represented in the study population, the target population, or the individual user.
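
One common way of quantifying the between-study heterogeneity mentioned above is Cochran's Q together with the I² statistic. The sketch below is illustrative only: the function name `heterogeneity` and the effect sizes and variances are hypothetical, and nothing here implies that this is the measure the authors have in mind.

```python
# Minimal sketch: between-study heterogeneity via Cochran's Q and I^2.
# All effect sizes and variances are hypothetical, not from any real trial.

def heterogeneity(effects, variances):
    """Return (pooled fixed-effect estimate, Q, I^2) for per-study effects."""
    if len(effects) != len(variances) or len(effects) < 2:
        raise ValueError("need at least two studies with matching variances")
    weights = [1.0 / v for v in variances]  # inverse-variance weights
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I^2: share of total variation attributed to heterogeneity rather than chance
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0
    return pooled, q, i2

# Hypothetical log risk ratios from four studies of the same drug
effects = [-0.30, -0.10, 0.05, -0.45]
variances = [0.010, 0.020, 0.015, 0.012]
pooled, q, i2 = heterogeneity(effects, variances)
print(f"pooled={pooled:.3f}  Q={q:.2f}  I^2={i2:.0%}")
```

A high I² signals that the studies disagree beyond what sampling error explains, which is exactly the information relevant to predicting effects in populations that differ from the study populations.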

Random allocation of the treatment to the experimental group should not be confused with random sampling. The latter refers to the sampling procedure and aims to guarantee a sample that is representative of the population from which the study sample is drawn. Hence, whereas the purpose of random sampling is to obtain a study population as close as possible to the sampled population, the goal of random allocation is to obtain two groups (“treatment” and “control” group) that are as close as possible to each other, except for the treatment itself. This should guarantee that any observed difference in the outcome is due to the treatment and to the treatment alone.
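
The distinction between the two procedures can be sketched in a few lines. The population, the single covariate (age), the sample size and the seed are all hypothetical; the point is only that sampling compares sample with population, while allocation compares the two arms with each other.

```python
# Minimal sketch contrasting random sampling with random allocation.
# Population, covariate (age), sizes and seed are illustrative assumptions.
import random
import statistics

def random_sample(population, n, rng):
    """Random sampling: draw a study sample meant to represent the population."""
    return rng.sample(population, n)

def random_allocation(sample, rng):
    """Random allocation: split the study sample into treatment and control arms."""
    shuffled = sample[:]
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

def mean_age(group):
    return statistics.mean(p["age"] for p in group)

rng = random.Random(42)
population = [{"id": i, "age": rng.randint(18, 90)} for i in range(10_000)]
sample = random_sample(population, 200, rng)
treatment, control = random_allocation(sample, rng)

# Sampling targets sample ~ population; allocation targets treatment ~ control.
print(f"population {mean_age(population):.1f}, sample {mean_age(sample):.1f}")
print(f"treatment {mean_age(treatment):.1f}, control {mean_age(control):.1f}")
```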

Random allocation of the treatment to the experimental group putatively has two main roles: (1) in the long run, it should allow the investigator to approach the true mean difference between treatment and control groups; however, it is unclear what this true underlying population probability denotes when we are dealing not with populations of, for instance, molecules, but with populations of patients undergoing medical interventions, where heterogeneity among individuals allows at most for an aggregate average measure. Furthermore, it is obviously unethical and unfeasible to re-sample the same subjects of an experiment again and again; and even if this were possible, the subjects who received the drug in the first round would undergo physiological changes, so that the successive trial population would no longer be the “same” (Worrall 2007); (2) random allocation (together with intervention and blinding) should guarantee the internal validity of the study by severing any common cause, or common effect, between the investigated treatment and its putative effects (i.e., avoidance of confounders and (self-)selection bias). This property is supposed to justify the primary role assigned to randomised evidence by so-called evidence hierarchies (see Osimani 2014).
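
Point (1) can be illustrated under a strong, hypothetical assumption: each subject has fixed outcomes under control and under treatment, and the same subjects can be re-randomised indefinitely (which, as the note stresses, is impossible in practice). Averaging the difference-in-means estimates over many re-randomisations then approaches the true mean difference, even though any single trial may miss it considerably. All outcome values are invented.

```python
# Minimal sketch of the "long run" claim in point (1). Outcomes are hypothetical;
# in a real trial only one allocation is realised and subjects cannot be re-used.
import random
import statistics

# Hypothetical outcomes for each subject under control (y0) and treatment (y1)
y0 = [120, 135, 128, 140, 118, 150, 132, 125]
y1 = [112, 130, 126, 128, 117, 138, 131, 119]
true_mean_difference = statistics.mean(b - a for a, b in zip(y0, y1))

def one_trial(rng):
    """Randomly allocate half the subjects to treatment; return the estimate."""
    idx = list(range(len(y0)))
    rng.shuffle(idx)
    half = len(idx) // 2
    treated, controls = idx[:half], idx[half:]
    return (statistics.mean(y1[i] for i in treated)
            - statistics.mean(y0[i] for i in controls))

rng = random.Random(0)
estimates = [one_trial(rng) for _ in range(20_000)]
print(f"true {true_mean_difference:.2f}, long-run average {statistics.mean(estimates):.2f}")
print(f"spread of single-trial estimates (SD): {statistics.stdev(estimates):.2f}")
```

The large spread of single-trial estimates is a reminder that the long-run guarantee says little about any one realised allocation.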

That is, modulo the absence of random and systematic error.

See also Poellinger (forthcoming) for a discussion of the ramifications of theory choice in causal assessment.

By analogy, for instance, with similar laws in macroeconomics, such as the expectations-augmented Phillips curve, which is used to predict the rate of inflation at time \(t\) given that a particular level of unemployment has persisted for some time: \(Y_t = b_0 + b_1 X_{2t} + b_2 X_{3t} + e_t\), where \(Y_t\) is the actual rate of inflation, \(X_{2t}\) the unemployment rate, \(X_{3t}\) the expected inflation rate at time \(t\), and \(e_t\) an error term (see Hoover 2008).
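
To make the form of such a population-level law concrete, the sketch below evaluates its systematic part and the residual \(e_t\). The coefficient defaults (b0 = 2.0, b1 = -0.5, b2 = 1.0) are hypothetical placeholders, not estimates from Hoover (2008) or from any dataset.

```python
# Minimal sketch of Y_t = b0 + b1*X2t + b2*X3t + e_t.
# Coefficient defaults are hypothetical placeholders, not fitted values.

def predicted_inflation(unemployment, expected_inflation, b0=2.0, b1=-0.5, b2=1.0):
    """Systematic part of Y_t (the error term e_t is not predicted)."""
    return b0 + b1 * unemployment + b2 * expected_inflation

def residual(actual_inflation, unemployment, expected_inflation, **coeffs):
    """e_t: the part of actual inflation the law does not account for."""
    return actual_inflation - predicted_inflation(unemployment, expected_inflation, **coeffs)

print(predicted_inflation(6.0, 3.0))  # 2.0 - 3.0 + 3.0 = 2.0
print(residual(2.5, 6.0, 3.0))        # 2.5 - 2.0 = 0.5
```

Like an RCT result, such a law relates aggregate quantities and says nothing about the mechanisms that generate them.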

Clearly, the simulation of a complete human physiology (the ‘virtual patient’) remains a distant objective of the VPH project. What is possible, however, is the simulation of parts of human physiology, aimed at reproducing the possible response of the targeted organ or system to a new intervention. An early example of this kind of complex computational model of physiology was a model of cardiac physiology aimed at simulating the electrical activity of the heart (Winslow et al. 2002). Winslow and colleagues used different kinds of data (imaging techniques and measurements of electrical activity) to construct a full three-dimensional model of the cardiac ventricle. See also the case study illustrated below.

Hence, the proposed model is based on a deterministic approach with no probabilistic or machine learning methods.

Current functionality includes tissue and cell level electrophysiology, discrete tissue modelling, and soft tissue modelling. The package is being developed by a team mainly based in the Computational Biology Group at the Department of Computer Science, University of Oxford, and the development draws on expertise from software engineering, high performance computing, mathematical modelling and scientific computing.

To be precise, dofetilide is a blocker of the rapid component of the delayed rectifier potassium current, Ikr. The authors of the study explicitly chose this kind of intervention because Ikr block is the main assay required in safety pharmacology assessment, owing to its importance in long QT-related arrhythmias (Britton et al. 2013, p E2099).
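
In computational models, drug block of a current such as Ikr is often represented by scaling the channel's maximal conductance. The sketch below uses a conventional Hill-type "simple pore block" factor, which is assumed here for illustration; the note does not state how the block was implemented by Britton et al. (2013). The IC50, Hill coefficient and concentrations are hypothetical values, not dofetilide data.

```python
# Minimal sketch, assuming a Hill-type "simple pore block" of Ikr.
# IC50, Hill coefficient and concentrations are hypothetical, not dofetilide data.

def ikr_conductance_fraction(concentration_nm, ic50_nm, hill=1.0):
    """Fraction of maximal Ikr conductance remaining at a given drug concentration."""
    if concentration_nm < 0 or ic50_nm <= 0:
        raise ValueError("concentration must be >= 0 and IC50 > 0")
    return 1.0 / (1.0 + (concentration_nm / ic50_nm) ** hill)

G_KR_BASELINE = 1.0  # normalised baseline conductance (hypothetical units)
for conc in (0.0, 10.0, 30.0, 100.0):
    remaining = ikr_conductance_fraction(conc, ic50_nm=10.0)
    print(f"{conc:6.1f} nM -> g_Kr = {G_KR_BASELINE * remaining:.2f} "
          f"({1 - remaining:.0%} block)")
```

Applying the same scaling to every model in a population isolates how differences in the other parameters shape each individual's response to the block.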

See, e.g., Carusi et al. (2012) for a systematic overview of the processes and constituents involved in the construction of multiscale models of cardiac electrophysiology.

The choice of Ikr block as an intervention to evaluate the predictive power of the population of models is motivated by the fact that Ikr block is the main assay required in safety pharmacology assessment, due to its link to QT-related arrhythmias.

This is also known as the “potential outcome approach” to causal inference.
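
In the potential-outcome framework, each individual has an outcome under treatment, Y(1), and one under control, Y(0), but an RCT reveals only one of them per patient (the "fundamental problem of causal inference" discussed by Holland 1986). A deterministic model, by contrast, can be run under both conditions for the same virtual individual. The `VirtualPatient` type, its two parameters and the response rule below are hypothetical.

```python
# Minimal sketch: with a deterministic model, both potential outcomes
# Y(0) and Y(1) are computable for every virtual individual.
# The parameters and response rule are hypothetical.
from dataclasses import dataclass

@dataclass(frozen=True)
class VirtualPatient:
    sensitivity: float  # hypothetical parameter scaling the drug effect
    baseline: float     # hypothetical outcome without treatment

def outcome(patient, treated):
    """Hypothetical deterministic model: returns Y(1) if treated, else Y(0)."""
    return patient.baseline + (patient.sensitivity * 10.0 if treated else 0.0)

cohort = [VirtualPatient(0.2, 300.0), VirtualPatient(1.0, 280.0), VirtualPatient(2.5, 310.0)]
# Individual effects Y(1) - Y(0): unobservable in an RCT, directly computed here
effects = [outcome(p, True) - outcome(p, False) for p in cohort]
print("individual effects:", effects)
print("average effect:", sum(effects) / len(effects))
```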

Possibly with the addition of some bounded random error to express finer-grained natural variation, in the sense of a Monte Carlo simulation.
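
A population of models can be sketched as a set of parameter vectors, one per virtual individual, each perturbed by a small bounded random error in Monte Carlo fashion. The channel labels, the 0 to 2 scaling range and the ±5% noise bound are illustrative assumptions, not the settings reported by Britton et al. (2013); the experimental calibration step referred to in that study's title is omitted.

```python
# Minimal sketch of sampling a "population of models" with bounded noise.
# Channel labels, scaling range and noise bound are illustrative assumptions.
import random

CHANNELS = ("g_Na", "g_CaL", "g_Kr", "g_Ks", "g_K1", "g_to")  # hypothetical labels

def sample_population(n, low=0.0, high=2.0, noise=0.05, seed=None):
    """Return n dicts mapping each channel to a conductance scaling factor."""
    rng = random.Random(seed)
    population = []
    for _ in range(n):
        scales = {}
        for ch in CHANNELS:
            base = rng.uniform(low, high)
            jitter = rng.uniform(-noise, noise)  # bounded random error
            # Multiplicative perturbation keeps non-negative factors non-negative
            scales[ch] = base * (1.0 + jitter)
        population.append(scales)
    return population

pop = sample_population(1000, seed=1)
print(len(pop), "virtual individuals; first:", {k: round(v, 2) for k, v in pop[0].items()})
```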

Averaging would now be possible, but an aggregate effect size is not the goal of the investigation here, since the value of the functional model lies precisely in the fact that its mechanistic core can treat input vectors individually and generate predictions that are sensitive to the input details.
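
One reason individual treatment of input vectors matters is that, for a nonlinear model, the output computed for an "average" input differs from the average of the individual outputs, and neither aggregate reveals which individuals respond abnormally. The response function, its threshold and the inputs are hypothetical.

```python
# Minimal sketch: for a nonlinear model, f(mean(x)) != mean(f(x)).
# The response function, threshold and inputs are hypothetical.
import statistics

def response(x):
    """Hypothetical nonlinear input-output map, steep above a threshold of 1.0."""
    return x if x < 1.0 else x ** 3

inputs = [0.5, 0.8, 1.0, 1.9]
outputs = [response(x) for x in inputs]
print("output of mean input:", response(statistics.mean(inputs)))
print("mean of outputs:     ", statistics.mean(outputs))
print("individual outputs:  ", outputs)
```

Only the individual outputs show that one input (1.9) drives most of the aggregate.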

See also Poellinger (forthcoming) for an analysis of analogy-based inference in pharmacology.

The main difference is that in some cases (e.g., neural networks) the model is not explicitly known by the users but is formed during the network's learning phase. All the conclusions presented here are also valid, with different formulations, for models of biological processes based on discrete mathematics (graphs, combinatorics) and on probabilistic and optimization methods, such as Markov chains and Markov fields, Monte Carlo simulation, maximum-likelihood estimation, entropy and information. Applications can be drawn from epidemiology, inheritance and genetic drift, combinatorics and sequence alignment of nucleic acids, energy optimization in protein structure prediction, and the topology of biological molecules.
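
As an example of the Markov-chain models listed in the note, genetic drift can be simulated with a Wright–Fisher model, in which the next generation's allele count depends only on the current one. This is a standard textbook model chosen here for illustration; the population size, initial count and number of generations are hypothetical, and the population is assumed haploid for simplicity.

```python
# Minimal sketch: genetic drift as a Markov chain (Wright-Fisher model),
# simulated by Monte Carlo. Sizes and counts are hypothetical; haploid population.
import random

def wright_fisher(n_individuals, initial_count, generations, seed=None):
    """Return the trajectory of allele counts in a population of fixed size."""
    rng = random.Random(seed)
    count = initial_count
    trajectory = [count]
    for _ in range(generations):
        p = count / n_individuals
        # Markov property: the next state depends only on the current frequency p
        # (binomial resampling of n_individuals offspring).
        count = sum(1 for _ in range(n_individuals) if rng.random() < p)
        trajectory.append(count)
        if count in (0, n_individuals):  # loss or fixation: absorbing states
            break
    return trajectory

traj = wright_fisher(50, 25, 500, seed=3)
print(f"final count {traj[-1]} after {len(traj) - 1} generations")
```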

References

Anjum RL, Mumford S (2012) Causal dispositionalism. In: Bird A, Ellis B, Sankey H (eds) Properties, powers and structure, chap. 7. Routledge, New York, pp 101–118

Beisbart C, Norton JD (2012) Why Monte Carlo simulations are inferences and not experiments. Int Stud Philos Sci 26(4):403–422

Bertolaso M (2013) On the structure of biological explanations: beyond functional ascriptions in cancer research. Epistemologia 36(1):112–130

Bertolaso M, Ratti E (2018) Conceptual challenges in the theoretical foundations of systems biology. In: Bizzarri M (ed) Systems biology. Springer, Humana Press, New York, pp 1–13

Bertolaso M, Campaner R (2018) Scientific practice in modelling diseases: stances from cancer research and neuropsychiatry. J Med Philos (forthcoming)

Bertolaso M, Macleod M (eds) (2016) In silico modeling: the human factor. Humana Mente 30:III–XV

Boem F, Ratti E (2017) Toward a notion of intervention in Big-data biology and molecular medicine. In: Boniolo G, Nathan MJ (eds) Philosophy of molecular medicine: foundational issues in research and practice. Routledge, New York

Britton OJ, Bueno-Orovio A, Van Ammel K, Lu HR, Towart R, Gallacher DJ, Rodriguez B (2013) Experimentally calibrated population of models predicts and explains intersubject variability in cardiac cellular electrophysiology. Proc Natl Acad Sci 110(23):E2098–E2105

Cartwright N (2007) Are RCTs the Gold Standard? Biosocieties 2:11–20. https://doi.org/10.1017/S1745855207005029

Cartwright N, Stegenga J (2011) A theory of evidence for evidence-based policy, chapter 11. In: Dawid P, Twining W, Vasilaki M (eds) Evidence, inference and enquiry. Oxford University Press, New York, pp 291–322

Carusi A (2014) Validation and variability: dual challenges on the path from systems biology to systems medicine. Stud Hist Philos Sci C 48:28–37

Carusi A, Burrage K, Rodriguez B (2012) Bridging experiments, models and simulations: an integrative approach to validation in computational cardiac electrophysiology. Am J Physiol Heart Circ Physiol 303(2):H144–H155

Clarke B, Gillies D, Illari P, Russo F, Williamson J (2014) Mechanisms and the evidence hierarchy. Topoi 33(2):339–360

Corrias A, Giles W, Rodriguez B (2011) Ionic mechanisms of electrophysiological properties and repolarization abnormalities in rabbit Purkinje fibers. Am J Physiol Heart Circ Physiol 300(5):H1806–H1813

Davies MR, Mistry HB, Hussein L, Pollard CE, Valentin JP, Swinton J, Abi-Gerges N (2012) An in silico canine cardiac midmyocardial action potential duration model as a tool for early drug safety assessment. Am J Physiol Heart Circ Physiol 302(7):H1466–H1480

Dawid P, Twining W, Vasilaki M (eds) (2011) Evidence, inference and enquiry, chap. 11. Oxford University Press, New York, pp 291–322

Diez Roux AV (2015) The virtual epidemiologist—promise and peril. Am J Epidemiol 181(2):100–102

Dowe P (1992) Wesley Salmon’s process theory of causality and the conserved quantity theory. Philos Sci 59(2):195–216

Dowe P (2000) Physical causation. Cambridge University Press, Cambridge

Frigg R, Reiss J (2009) The philosophy of simulation: hot new issues or same old stew? Synthese 169(3):593–613

Holland PW (1986) Statistics and causal inference. J Am Statist Assoc 81(396):945–960. https://doi.org/10.1080/01621459.1986.10478354

Hoover KD (2008) “Phillips curve.” The concise encyclopedia of economics. Library of economics and liberty. http://www.econlib.org/library/Enc/PhillipsCurve.html. Accessed 29 Aug 2017

Keller EF (2003) Making sense of life: explaining biological development with models, metaphors, and machines. Harvard University Press, Cambridge

Landes J, Osimani B, Poellinger R (2017) Epistemology of causal inference in pharmacology. Towards a framework for the assessment of harms. Eur J Philos Sci. https://doi.org/10.1007/s13194-017-0169-1

Lewis D (1973a) Counterfactuals. Blackwell Publishers, Oxford (Reprinted with revisions, 1986)

Lewis D (1973b) Causation. J Philos 70(17):556–567

Lewis D (2000) Causation as influence. J Philos 97(4):182–197

Mackie JL (1980) The cement of the universe: a study of causation. Oxford University Press, New York

MacLeod M, Nersessian NJ (2013) Coupling simulation and experiment: the bimodal strategy in integrative systems biology. Stud Hist Philos Sci C 44(4):572–584. https://doi.org/10.1016/j.shpsc.2013.07.001

Morrison M (2015) Reconstructing reality: models, mathematics, and simulations. Oxford University Press, New York

Mumford S (2009) Causal powers and capacities, chap. 12. In: Beebee H, Hitchcock C, Menzies P (eds) The Oxford handbook of causation. Oxford University Press, New York, pp 265–278

Osimani B (2014) Hunting side effects and explaining them: should we reverse evidence hierarchies upside down? Topoi 33(2):295–312. https://doi.org/10.1007/s11245-013-9194-7

Osimani B, Poellinger R (forthcoming) A protocol for model validation and causal inference from computer simulation. Stud Hist Philos Sci C

Parke EC (2014) Experiments, simulations, and epistemic privilege. Philos Sci 81(4):516–536

Parker WS (2009) Does matter really matter? Computer simulations, experiments, and materiality. Synthese 169(3):483–496

Pearl J (2000) Causality: models, reasoning, and inference, 1st edn. Cambridge University Press, Cambridge

Poellinger R (forthcoming) On the ramifications of theory choice in causal assessment: indicators of causation and their conceptual relationships. Philos Sci

Poellinger R (forthcoming) Analogy-based inference patterns in pharmacological research. In: La Caze A, Osimani B (eds) Uncertainty in pharmacology: epistemology, methods, and decisions. Boston studies in philosophy of science. Springer, New York

Reichenbach H (1956) The direction of time. University of California Press, Berkeley-Los Angeles

Romero L, Pueyo E, Fink M, Rodríguez B (2009) Impact of ionic current variability on human ventricular cellular electrophysiology. Am J Physiol Heart Circ Physiol 297(4):H1436–H1445

Rowbottom DP (2009) Models in biology and physics: What’s the difference? Found Sci 14(4):281–294

Rubin DB (2005) Causal inference using potential outcomes. J Am Statist Assoc 100(469):322–331. https://doi.org/10.1198/016214504000001880

Rubin DB (1974) Estimating causal effects of treatments in randomized and nonrandomized studies. J Educ Psychol 66(5):688–701

Salmon W (1984) Scientific explanation and the causal structure of the world. Princeton University Press, Princeton

Salmon W (1997) Causality and explanation: a reply to two critiques. Philos Sci 64:461–477

Sarkar AX, Sobie EA (2011) Quantification of repolarization reserve to understand interpatient variability in the response to proarrhythmic drugs: a computational analysis. Heart Rhythm 8(11):1749–1755

Spirtes P, Glymour C, Scheines R (2000) Causation, prediction, and search. Adaptive computation and machine learning. MIT Press, Cambridge, MA

Sprenger J (2016) The probabilistic no miracles argument. Eur J Philos Sci 6:173–189

Viceconti M, Henney A, Morley-Fletcher E (2016) In silico clinical trials: how computer simulation will transform the biomedical industry. Int J Clin Trials 3(2):37–46

Wang R-S, Maron BA, Loscalzo J (2015) Systems medicine: evolution of systems biology from bench to bedside. Wiley Interdiscip Rev Syst Biol Med 7(4):141–161

Winsberg E (2009) A tale of two methods. Synthese 169(3):575–592

Winslow RL, Helm P, Baumgartner W, Peddi S, Ratnanather T, McVeigh E, Miller MI (2002) Imaging-based integrative models of the heart: closing the loop between experiment and simulation. In: In silico simulation of biological processes. Novartis Foundation Symposium 247, pp 129–143

Worrall J (2007) Evidence in medicine and evidence-based medicine. Philos Compass 2(6):981–1022. https://doi.org/10.1111/j.1747-9991.2007.00106.x

Funding

This study was funded by the European Research Council (Grant Number: GA 639276).


Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Ethical Approval

This article does not contain any studies with human participants or animals performed by any of the authors.

Informed Consent

Informed consent was obtained from all individual participants included in the study.


About this article

Cite this article

Osimani, B., Bertolaso, M., Poellinger, R. et al. Real and Virtual Clinical Trials: A Formal Analysis. Topoi 38, 411–422 (2019). https://doi.org/10.1007/s11245-018-9563-3


DOI: https://doi.org/10.1007/s11245-018-9563-3