Abstract

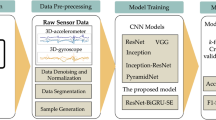

Human activity recognition (HAR) plays an indispensable role in ubiquitous computing scenarios, from smart homes to game console design, elderly care, and fitness tracking. Manually extracting the most suitable activity features from raw sensor time series is very hard. Because convolutional neural networks (CNNs) can extract features automatically, they have been widely used for activity recognition. Despite their exceptional performance, CNNs are computation-intensive and memory-demanding because of their large number of internal parameters. As a result, research attention in HAR implementations on resource-limited embedded platforms has turned to computationally lightweight convolution architectures. In this paper, we offer a contribution in this direction. Simple, low-cost linear transformations are combined with a convolution-based HAR classifier to decrease the overall computational and memory overhead while establishing an efficient classification method with satisfactory performance. Our new method is evaluated against standard convolution-based and residual counterparts on several popular HAR benchmark datasets. Experimental results verify that the cheap linear operations significantly reduce computational and memory cost while still producing satisfactory recognition performance, which enables faster inference on mobile devices. Our new method could be a strong candidate for real HAR implementations on embedded platforms with limited computing resources.
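The saving from replacing part of a convolution with cheap linear operations can be illustrated with a rough weight count. The sketch below is an assumption, not the paper's exact layer: it follows the general GhostNet-style idea in which only a fraction of the output feature maps comes from a standard convolution and the rest are generated from them by small per-channel linear filters. The function names, the `ratio` parameter, and the kernel sizes are hypothetical and chosen only for illustration.

```python
def standard_conv_params(c_in, c_out, k_h, k_w):
    """Number of weights in a standard convolution (bias ignored)."""
    return c_in * c_out * k_h * k_w


def cheap_linear_conv_params(c_in, c_out, k_h, k_w, ratio=2, d_h=3, d_w=1):
    """Number of weights when only c_out / ratio feature maps come from a
    standard convolution and the remaining maps are produced by cheap
    per-channel (depthwise) linear filters of size d_h x d_w.

    This is an assumed, illustrative scheme, not the authors' exact design.
    """
    if c_out % ratio != 0:
        raise ValueError("c_out must be divisible by ratio")
    intrinsic = c_out // ratio
    # Standard convolution that produces only the intrinsic feature maps.
    primary = c_in * intrinsic * k_h * k_w
    # Each intrinsic map yields (ratio - 1) extra maps, one small filter each.
    cheap = intrinsic * (ratio - 1) * d_h * d_w
    return primary + cheap


def compression_ratio(c_in, c_out, k_h, k_w, **kwargs):
    """How many times fewer weights the cheap variant needs."""
    full = standard_conv_params(c_in, c_out, k_h, k_w)
    light = cheap_linear_conv_params(c_in, c_out, k_h, k_w, **kwargs)
    return full / light
```

For 64 input channels, 128 output channels, and a 6×1 kernel along the time axis, this counts 49,152 weights for the standard layer and 24,768 for the cheap variant, roughly a twofold reduction under these assumed settings. Larger values of `ratio` reduce the count further, at the cost of deriving more feature maps from fewer learned ones.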




Cite this article

Liu, T., Wang, S., Liu, Y. et al. A lightweight neural network framework using linear grouped convolution for human activity recognition on mobile devices. J Supercomput 78, 6696–6716 (2022). https://doi.org/10.1007/s11227-021-04140-5


DOI: https://doi.org/10.1007/s11227-021-04140-5