Abstract

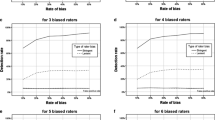

Although there has long been a consensus that intercoder reliability is crucial to the validity of a content analysis study, the choice among intercoder reliability indices has been debated. This study reviewed the most popular indices and, seeking the one most robust against prevalence and rater bias, tested their relationships with these factors empirically by applying response surface methodology to data from a Monte Carlo experiment. Maxwell's R.E. was found to be superior to Krippendorff's α, Scott's π, Cohen's κ, Perreault and Leigh's I_r, and Gwet's AC1. Response surface plots also revealed more nuanced relationships among prevalence, sensitivity, specificity, and the intercoder reliability indices. Theoretical and practical implications are discussed at the end.
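To make the compared indices concrete, the following is a minimal Python sketch for the simplest case, which is assumed here for illustration: two coders, one binary category, summarized as a 2×2 table. The κ, π, α, AC1, and I_r formulas are the standard two-coder, two-category forms. Treating Maxwell's R.E. as 2p_o − 1 (its binary form, which coincides with Bennett et al.'s S) is an assumption of this sketch, as is the simple data-generating process in `simulate`, where prevalence, sensitivity, and specificity drive each coder's decisions. The names `Table2x2`, `indices`, and `simulate` are hypothetical; this is not the author's R implementation or experimental design.

```python
import math
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Table2x2:
    """Two coders, one binary category (1 = present, 0 = absent).

    a: both code 1            b: coder A codes 1, coder B codes 0
    c: A codes 0, B codes 1   d: both code 0
    """
    a: int
    b: int
    c: int
    d: int

    @property
    def n(self) -> int:
        return self.a + self.b + self.c + self.d


def chance_corrected(p_o: float, p_e: float) -> float:
    """Generic (p_o - p_e) / (1 - p_e); undefined (NaN) when p_e == 1."""
    return (p_o - p_e) / (1 - p_e) if p_e < 1 else math.nan


def indices(t: Table2x2) -> dict:
    n = t.n
    p_o = (t.a + t.d) / n            # observed proportion of agreement
    pa = (t.a + t.b) / n             # coder A's rate of coding 1
    pb = (t.a + t.c) / n             # coder B's rate of coding 1
    m = (pa + pb) / 2                # pooled rate of coding 1

    # Cohen: chance agreement from each coder's own marginals.
    kappa = chance_corrected(p_o, pa * pb + (1 - pa) * (1 - pb))
    # Scott: chance agreement from the pooled marginals.
    pi = chance_corrected(p_o, m ** 2 + (1 - m) ** 2)
    # Gwet's AC1 for two categories: p_e = 2 m (1 - m).
    ac1 = chance_corrected(p_o, 2 * m * (1 - m))

    # Krippendorff's alpha (nominal, two coders, no missing data) from the
    # coincidence matrix: n1 and n0 are the pairable values per category.
    n1 = 2 * t.a + t.b + t.c
    n0 = 2 * t.d + t.b + t.c
    alpha = 1 - (2 * n - 1) * (t.b + t.c) / (n1 * n0) if n1 * n0 else math.nan

    # Assumed binary form of Maxwell's R.E.: 2 p_o - 1 (equals Bennett's S).
    re = 2 * p_o - 1
    # Perreault and Leigh's I_r with k = 2: sqrt(2 p_o - 1), set to 0 below p_o = 0.5.
    i_r = math.sqrt(re) if re >= 0 else 0.0

    return {"p_o": p_o, "kappa": kappa, "pi": pi, "alpha": alpha,
            "ac1": ac1, "re": re, "i_r": i_r}


def simulate(n_units, prevalence, sens_a, spec_a, sens_b, spec_b, seed=0):
    """Hypothetical generating process: each unit is truly 1 with probability
    `prevalence`; each coder independently codes a true 1 as 1 with probability
    equal to its sensitivity, and a true 0 as 0 with probability equal to its
    specificity. Unequal sensitivities/specificities can induce rater bias
    (different marginal rates between the two coders)."""
    rng = random.Random(seed)
    counts = {(1, 1): 0, (1, 0): 0, (0, 1): 0, (0, 0): 0}
    for _ in range(n_units):
        truth = rng.random() < prevalence
        code_a = int(rng.random() < (sens_a if truth else 1 - spec_a))
        code_b = int(rng.random() < (sens_b if truth else 1 - spec_b))
        counts[(code_a, code_b)] += 1
    return Table2x2(counts[(1, 1)], counts[(1, 0)], counts[(0, 1)], counts[(0, 0)])


if __name__ == "__main__":
    # Same coder accuracy, balanced vs. high prevalence.
    for prev in (0.5, 0.9):
        table = simulate(10_000, prev, 0.9, 0.9, 0.9, 0.9, seed=1)
        summary = ", ".join(f"{k}={v:.3f}" for k, v in indices(table).items())
        print(f"prevalence={prev}: {summary}")
```

With both coders at sensitivity and specificity 0.9, the expected p_o is 0.82 (so R.E. is about 0.64) at either prevalence, whereas the expected κ falls from about 0.64 at prevalence 0.5 to about 0.39 at prevalence 0.9; simulated values scatter slightly around these. Sweeping prevalence, sensitivity, and specificity over a grid in this way yields the kind of data a response surface model can then be fitted to.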


References

Agresti A.: Modelling patterns of agreement and disagreement. Stat. Methods Med. Res. 1(2), 201–218 (1992). doi:10.1177/096228029200100205

Agresti A.: Categorical Data Analysis. Wiley, New York (2002)

Agresti A., Lang J.B.: Quasi-symmetric latent class models, with application to rater agreement. Biometrics 49(1), 131–139 (1993). http://www.jstor.org/stable/2532608

Agresti A., Ghosh A., Bini M.: Raking kappa: describing potential impact of marginal distributions on measures of agreement. Biometrical J. 37(7), 811–820 (1995). doi:10.1002/bimj.4710370705

Artstein R., Poesio M.: Inter-coder agreement for computational linguistics. Comput. Linguistics 34(4), 555–596 (2008)

Bakeman R.: Behavioral Observation and Coding. Cambridge University Press, New York (2000)

Banerjee M., Capozzoli M., McSweeney L., Sinha D.: Beyond kappa: a review of interrater agreement measures. Can. J. Stat. 27(1), 3–23 (1999)

Barlow W., Lai M.Y., Azen S.P.: A comparison of methods for calculating a stratified kappa. Stat. Med. 10(9), 1465–1472 (1991). doi:10.1002/sim.4780100913

Bennett E.M., Alpert R., Goldstein A.C.: Communications through limited-response questioning. Public Opin. Q. 18(3), 303–308 (1954). doi:10.1086/266520

Bewick V., Cheek L., Ball J.: Statistics review 13: receiver operating characteristic curves. Crit. Care 8(6), 508–512 (2004). doi:10.1186/cc3000

Bhapkar V.P.: A note on the equivalence of two test criteria for hypotheses in categorical data. J. Am. Stat. Assoc. 61(313), 228–235 (1966). http://www.jstor.org/stable/2283057

Bishop Y., Fienberg S., Holland P.: Discrete Multivariate Analysis: Theory and Practice. Springer, Cambridge (2007)

Bloch D.A., Kraemer H.C.: 2 × 2 kappa coefficients: measures of agreement or association. Biometrics 45(1), 269–287 (1989). http://www.jstor.org/stable/2532052

Box G., Draper N.: Response surfaces, mixtures, and ridge analyses, vol. 527, 2nd edn. Wiley-Interscience, New Jersey (2007)

Brennan R., Prediger D.: Coefficient kappa: some uses, misuses, and alternatives. Educ. Psychol. Meas. 41(3), 687–699 (1981)

Broemeling L.: Bayesian Methods for Measures of Agreement. Chapman & Hall/CRC biostatistics series, CRC Press, Boca Raton (2009)

Byrt T., Bishop J., Carlin J.B.: Bias, prevalence and kappa. J. Clin. Epidemiol. 46(5), 423–429 (1993). doi:10.1016/0895-4356(93)90018-V

Choudhary P., Yin K.: Bayesian and frequentist methodologies for analyzing method comparison studies with multiple methods. Stat. Biopharm. Res. 2(1), 122–132 (2010)

Cicchetti D., Feinstein A.: High agreement but low kappa: II. Resolving the paradoxes. J. Clin. Epidemiol. 43(6), 551–558 (1990)

Cohen J.: A coefficient of agreement for nominal scales. Educ. Psychol. Meas. 20(1), 37–46 (1960). doi:10.1177/001316446002000104

Cohen J.: Weighted kappa: nominal scale agreement provision for scaled disagreement or partial credit. Psychol. Bull. 70(4), 213–220 (1968). doi:10.1037/h0026256

Conger A.: Integration and generalization of kappas for multiple raters. Psychol. Bull. 88(2), 322–328 (1980). doi:10.1037/0033-2909.88.2.322

Coughlin S.S., Pickle L.W.: Sensitivity and specificity-like measures of the validity of a diagnostic test that are corrected for chance agreement. Epidemiology 3(2), 178–181 (1992). http://www.jstor.org/stable/3702898

Dahl D.: xtable: export tables to LaTeX or HTML. R package version 1(6) (2009)

de Mast J.: Agreement and kappa-type indices. Am. Stat. 61(2), 148–153 (2007)

Dewey M.: Coefficients of agreement. Br. J. Psychiatry 143(5), 487–489 (1983). doi:10.1192/bjp.143.5.487

Feinstein A.R., Cicchetti D.V.: High agreement but low kappa: I. The problems of two paradoxes. J. Clin. Epidemiol. 43(6), 543–549 (1990). doi:10.1016/0895-4356(90)90158-L

Feuerman M., Miller A.R.: Relationships between statistical measures of agreement: sensitivity, specificity and kappa. J. Eval. Clin. Pract. 14(5), 930–933 (2008). doi:10.1111/j.1365-2753.2008.00984.x

Finn R.: A note on estimating the reliability of categorical data. Educ. Psychol. Meas. 30(1), 70–76 (1970). doi:10.1177/001316447003000106

Fleiss J.: Measuring nominal scale agreement among many raters. Psychol. Bull. 76(5), 378–382 (1971)

Fleiss J.: Measuring agreement between two judges on the presence or absence of a trait. Biometrics 31(3), 651–659 (1975). http://www.jstor.org/stable/2529549

Fleiss J.L., Levin B., Paik M.C.: The measurement of interrater agreement. In: Statistical Methods for Rates and Proportions, 3rd edn., chap. 18, pp. 598–626. Wiley, New York (2004). doi:10.1002/0471445428.ch18

Gamer M., Lemon J., Singh I.F.P.: irr: various coefficients of interrater reliability and agreement (2010). http://cran.r-project.org/web/packages/irr/index.html

Guggenmoos-Holzmann I.: How reliable are chance-corrected measures of agreement? Stat. Med. 12(23), 2191–2205 (1993). doi:10.1002/sim.4780122305

Guggenmoos-Holzmann I.: The meaning of kappa: probabilistic concepts of reliability and validity revisited. J. Clin. Epidemiol. 49(7), 775–782 (1996)

Gwet K.: Inter-rater reliability: dependency on trait prevalence and marginal homogeneity. Stat. Methods Inter-Rater Reliab. Assess. Ser. 2, 1–9 (2002)

Gwet K.: Computing inter-rater reliability and its variance in the presence of high agreement. Br. J. Math. Stat. Psychol. 61(1), 29–48 (2008)

Gwet K.: Handbook of Inter-Rater Reliability: A Definitive Guide to Measuring the Extent of Agreement Among Multiple Raters. Advanced Analytics, LLC, Gaithersburg (2010)

Hayes A., Krippendorff K.: Answering the call for a standard reliability measure for coding data. Commun. Methods Meas. 1(1), 77–89 (2007)

Hoehler F.K.: Bias and prevalence effects on kappa viewed in terms of sensitivity and specificity. J. Clin. Epidemiol. 53(5), 499–503 (2000). doi:10.1016/S0895-4356(99)00174-2

Holley J., Guilford J.: A note on the G index of agreement. Educ. Psychol. Meas. 24(4), 749–753 (1964). doi:10.1177/001316446402400402

Holsti O.: Content Analysis for the Social Sciences and Humanities. Addison-Wesley, Reading (1969)

Hoyt W.T.: Rater bias in psychological research: when is it a problem and what can we do about it? Psychol. Methods 5(1), 64–86 (2000)

Hripcsak G., Heitjan D.F.: Measuring agreement in medical informatics reliability studies. J. Biomed. Inf. 35(2), 99–110 (2002). doi:10.1016/S1532-0464(02)00500-2

Hsu L., Field R.: Interrater agreement measures: comments on κ_n, Cohen's κ, Scott's π, and Aickin's α. Underst. Stat. 2, 205–219 (2003)

Hutchinson T.P.: Kappa muddles together two sources of disagreement: tetrachoric correlation is preferable. Res. Nurs. Health 16(4), 313–316 (1993). doi:10.1002/nur.4770160410

Janes C.: An extension of the random error coefficient of agreement to n × n tables. Br. J. Psychiatry 134(6), 617–619 (1979). doi:10.1192/bjp.134.6.617

Janson S., Vegelius J.: On generalizations of the G index and the phi coefficient to nominal scales. Multivar. Behav. Res. 14(2), 255–269 (1979). doi:10.1207/s15327906mbr1402_9

Kirk J.: rmac: calculate RMAC or FMAC agreement coefficients (2010). http://cran.r-project.org/web/packages/rmac/index.html

Kraemer H.: Ramifications of a population model for κ as a coefficient of reliability. Psychometrika 44(4), 461–472 (1979). doi:10.1007/BF02296208

Krippendorff K.: Bivariate agreement coefficients for reliability of data. Sociol. Methodol. 2, 139–150 (1970). http://www.jstor.org/stable/270787

Krippendorff K.: Content Analysis: An Introduction to its Methodology, 2nd edn. Sage Publications, Inc, Thousand Oaks (2004)

Krippendorff K.: Reliability in content analysis. Some common misconceptions and recommendations. Human Commun. Res. 30(3), 411–433 (2004). doi:10.1111/j.1468-2958.2004.tb00738.x

Krippendorff K.: Computing Krippendorff's alpha-reliability (2007). http://repository.upenn.edu/cgi/viewcontent.cgi?article=1043&context=asc_papers

Lamport L.: LaTeX: A Document Preparation System. Addison-Wesley Longman Publishing Co., Inc, Boston (1986)

Langenbucher J., Labouvie E., Morgenstern J.: Measuring diagnostic agreement. J. Consult. Clin. Psychol. 64(6), 1285–1289 (1996)

Lantz C.A., Nebenzahl E.: Behavior and interpretation of the κ statistic: resolution of the two paradoxes. J. Clin. Epidemiol. 49(4), 431–434 (1996). doi:10.1016/0895-4356(95)00571-4

Leisch F.: Sweave: Dynamic Generation of Statistical Reports Using Literate Data Analysis (2002). ISBN 3-7908-1517-9. http://www.stat.uni-muenchen.de/leisch/Sweave

Lemon J., Fellows I.: concord: concordance and reliability (2007). http://cran.r-project.org/web/packages/concord/index.html

Lenth R.V.: rsm: Response-Surface Analysis (2010). http://cran.r-project.org/web/packages/rsm/index.html

Light R.J.: Measures of response agreement for qualitative data: some generalizations and alternatives. Psychol. Bull. 76(5), 365–377 (1971)

Lin L.: A concordance correlation coefficient to evaluate reproducibility. Biometrics 45(1), 255–268 (1989)

Lin L., Hedayat A.S., Wu W.: A unified approach for assessing agreement for continuous and categorical data. J. Biopharm. Stat. 17(4), 629–652 (2007). doi:10.1080/10543400701376498

Lombard M.: A call for standardization in content analysis reliability. Human Commun. Res. 30(3), 434–437 (2004)

Mason R., Gunst R., Hess J.: Statistical Design and Analysis of Experiments: With Applications to Engineering and Science, vol. 356. Wiley-Interscience, New York (2003)

Maxwell A.: Comparing the classification of subjects by two independent judges. Br. J. Psychiatry 116(535), 651–655 (1970)

Maxwell A.E.: Coefficients of agreement between observers and their interpretation. Br. J. Psychiatry 130(1), 79–83 (1977). doi:10.1192/bjp.130.1.79

May S.: Modelling observer agreement—an alternative to kappa. J. Clin. Epidemiol. 47(11), 1315–1324 (1994)

McNemar Q.: Note on the sampling error of the difference between correlated proportions or percentages. Psychometrika 12, 153–157 (1947). doi:10.1007/BF02295996

Myers R., Montgomery D., Anderson-Cook C.: Response Surface Methodology: Process and Product Optimization Using Designed Experiments, vol. 705, 3rd edn. Wiley, Hoboken (2009)

Osgood C.: The representational model and relevant research methods. In: Trends in Content Analysis, pp. 33–88. UK (1959)

Perreault W.D. Jr., Leigh L.E.: Reliability of nominal data based on qualitative judgments. J. Mark. Res. 26(2), 135–148 (1989). http://www.jstor.org/stable/3172601

R Development Core Team: R: A Language and Environment for Statistical Computing (2011). http://www.R-project.org/, ISBN 3-900051-07-0

Revolution Analytics: doSMP: foreach parallel adaptor for the revoIPC package (2011). http://cran.r-project.org/web/packages/doSMP/index.html

Rosenbaum J.: Bayesian methods for measures of agreement (book review). J. R. Stat. Soc. Ser. A (Stat. Soc.) 173(1), 270 (2010). doi:10.1111/j.1467-985X.2009.00624_2.x

Sarkar D.: lattice: Lattice Graphics (2012). http://cran.r-project.org/web/packages/lattice/index.html

Scott W.: Reliability of content analysis: the case of nominal scale coding. Public Opin. Q. 19, 321–325 (1955). doi:10.1086/266577

Shrout P., Fleiss J.: Intraclass correlations: uses in assessing rater reliability. Psychol. Bull. 86(2), 420–428 (1979)

Shrout P., Spitzer R., Fleiss J.: Quantification of agreement in psychiatric diagnosis revisited. Arch. Gen. Psychiatry 44(2), 172–177 (1987)

Siegel S., Castellan N.: Nonparametric Statistics for the Behavioral Sciences. McGraw-Hill Book Company, New York (1988)

Sim J., Wright C.: The kappa statistic in reliability studies: use, interpretation, and sample size requirements. Phys. Ther. 85(3), 257–268 (2005)

Spitznagel E., Helzer J.: A proposed solution to the base rate problem in the kappa statistic. Arch. Gen. Psychiatry 42(7), 725–728 (1985)

Stevenson M., Sanchez J., Thornton R.: epiR: functions for analysing epidemiological data (2011). http://cran.r-project.org/web/packages/epiR/index.html

Stewart A.: Basic Statistics and Epidemiology: A Practical Guide. Radcliffe Pub., Oxford (2007)

Stuart A.: A test for homogeneity of the marginal distributions in a two-way classification. Biometrika 42(3–4), 412–416 (1955)

Tanner M.A., Young M.A.: Modeling agreement among raters. J. Am. Stat. Assoc. 80(389), 175–180 (1985). http://www.jstor.org/stable/2288068

Thompson W., Walter S.D.: A reappraisal of the kappa coefficient. J. Clin. Epidemiol. 41(10), 949–958 (1988). doi:10.1016/0895-4356(88)90031-5

Tinsley H.E., Weiss D.J.: Interrater reliability and agreement of subjective judgments. J. Couns. Psychol. 22(4), 358–376 (1975). doi:10.1037/h0076640

Tinsley H.E., Weiss D.J.: Interrater reliability and agreement. In: Tinsley, H., Brown, S. (eds.) Handbook of Applied Multivariate Statistics and Mathematical Modeling, chap 4, pp. 95–124. Academic Press, San Diego (2000)

Uebersax J.: Diversity of decision-making models and the measurement of interrater agreement. Psychol. Bull. 101(1), 140–146 (1987)

Uebersax J.: The myth of chance-corrected agreement (2009). http://www.john-uebersax.com/stat/kappa2.htm

Uebersax J., Grove W.: Latent class analysis of diagnostic agreement. Stat. Med. 9(5), 559–572 (1990). http://www.psych.umn.edu/faculty/grove/053latentclassanalysisof.pdf

Vach W.: The dependence of Cohen's kappa on the prevalence does not matter. J. Clin. Epidemiol. 58(7), 655–661 (2005). doi:10.1016/j.jclinepi.2004.02.021

von Eye A., Sörensen S.: Models of chance when measuring interrater agreement with kappa. Biometrical J. 33(7), 781–787 (1991). doi:10.1002/bimj.4710330704

von Eye A., von Eye M.: On the marginal dependency of Cohen’s κ. Eur. Psychol. 13(4), 305–315 (2008)

Warrens M.: A formal proof of a paradox associated with Cohen's kappa. J. Classif. 27(3), 322–332 (2010). doi:10.1007/s00357-010-9060-x, https://openaccess.leidenuniv.nl/bitstream/handle/1887/16310/Warrens_2010_JoC_27_322_332.pdf?sequence=2

Yu Y., Lin L.: Agreement: statistical tools for measuring agreement (2011). http://cran.r-project.org/web/packages/Agreement/Agreement.pdf

Zhao X.: When to use Scott's π or Krippendorff's α, if ever. Paper presented at the Annual Conference of the Association for Education in Journalism and Mass Communication, St. Louis (2011). http://www.allacademic.com/meta/p517973_index.html

Zhao X., Liu J.S., Deng K.: Assumptions behind inter-coder reliability indices, vol. 36. Routledge, New York (2012)

Zwick R.: Another look at interrater agreement. Psychol. Bull. 103(3), 374–378 (1988). doi:10.1037/0033-2909.103.3.374

Cite this article

Feng, G.C. Factors affecting intercoder reliability: a Monte Carlo experiment. Qual Quant 47, 2959–2982 (2013). https://doi.org/10.1007/s11135-012-9745-9