Occam’s Razor produces hypotheses that with high probability will be predictive for future observations.

Anselm Blumer, Andrzej Ehrenfeucht, David Haussler and Manfred K. Warmuth, “Occam’s Razor”

Abstract

Lalley and Weyl (Quadratic voting, 2016) propose a mechanism for binary collective decisions, Quadratic Voting (QV), and prove its approximate efficiency in large populations in a stylized environment. They motivate their proposal substantially based on its greater robustness when compared with pre-existing efficient collective decision mechanisms. However, these suggestions are based purely on discussion of structural properties of the mechanism. In this paper, I study these robustness properties quantitatively in an equilibrium model. Given the mathematical challenges with establishing results on QV fully formally, my analysis relies on a number of structural conjectures that have been proven in analogous settings in the literature, but not in the models I consider here. While most of the factors I study reduce the efficiency of QV to some extent, it is reasonably robust to all of them and quite robustly outperforms one-person-one-vote. Collusion and fraud, except on a very large scale, are deterred either by unilateral deviation incentives or by the reactions of non-participants to the possibility of their occurring. I am able to study aggregate uncertainty only for particular parametric distributions, but using the most canonical structures in the literature I find that such uncertainty reduces limiting efficiency, but never by a large magnitude. Voter mistakes or non-instrumental motivations for voting, so long as they are uncorrelated with values, may either enhance or harm efficiency depending on the setting. These findings contrast with existing (approximately) efficient mechanisms, all of which are highly sensitive to at least one of these factors.

Notes

LW actually use a slightly different form of QV with a smoothed rather than sharp majority rule and, thus, the relevant marginal pivotality for them is slightly different. I ignore these details to maximize expositional clarity.

See Experian Marketing’s 2013 Lesbian, Gay, Bisexual, Transgender Demographic Report.

The reason is that the utility of the group depends only on the aggregate number of votes it buys and the aggregate payments it makes. Conditional on the first, the second is minimized when all individuals split the aggregate votes equally, because the quadratic function is convex.
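The convexity argument can be checked on a toy case. This is a minimal sketch assuming a hypothetical group of three members who jointly buy 12 votes, each paying the square of their own votes; it brute-forces every integer split and confirms that the equal split is cheapest. In general, \(\sum_i v_i^2 \geq (\sum_i v_i)^2/M\) by the Cauchy–Schwarz inequality, with equality exactly at the equal split.

```python
from itertools import product


def total_cost(split):
    """Aggregate QV payment when member i buys split[i] votes at cost v**2."""
    return sum(v * v for v in split)


def equal_split(total_votes, members):
    """Every member buys the same share of the group's vote total."""
    return [total_votes / members] * members


def best_integer_split(total_votes, members):
    """Brute-force the cheapest integer split of total_votes among members."""
    best = None
    for split in product(range(total_votes + 1), repeat=members):
        if sum(split) != total_votes:
            continue
        cost = total_cost(split)
        if best is None or cost < best[0]:
            best = (cost, split)
    return best


print(best_integer_split(12, 3))        # (48, (4, 4, 4))
print(total_cost(equal_split(12, 3)))   # 48.0
print(total_cost([6, 4, 2]))            # 56
```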

In a previous analysis, I also considered an average case with randomly drawn individuals. This case seems fairly unrealistic, however, and my results are strictly better for QV in this case, so I did not consider it worth including.

Note that here I implicitly use the idea that EI can be decomposed linearly across these two cases, which is not precisely correct because the welfare created in the two cases may differ. It can easily, but very tediously, be shown that this does not change the results; this analysis is available on request, but in what follows I continue to use this decomposition.

These results are extremely conservative for a variety of reasons (the collusive group would be significantly less pivotal and would also have a significantly smaller impact on welfare than is given by the approximation of constant pivotality), so this is likely an exaggeration, but not a great one.

Note, however, that this is only true of “type-symmetric” collusive strategies where it is individuals of particular types, rather than individuals of particular names, that collude.

However, as McLean and Postlewaite (2015) argue, it may be an efficient mechanism given aggregate certainty that provides correct incentives for individuals to reveal their information to the group and thus create this aggregate certainty. Thus, aggregate certainty may be the appropriate framework for analysis of a robust mechanism like QV even if it would admit other, non-robust mechanisms.

An alternative model that I have also considered but do not report here for brevity is one wherein individuals overestimate the chance of being pivotal unless they pay a cost to obtain a better estimate. In this case QV behaves more like 1p1v, thus losing some of its efficiency benefits over the latter. However, it may perform better for finite populations as this is the case that most effectively deters extremists and it always continues to outperform 1p1v, at least if the costs of acquiring information about p are excluded. If these are included, 1p1v may perform better.

The statistics of all of these events are dominated by variations in values rather than in \(\epsilon\) by my assumption that \(\epsilon\) has a bounded support distribution, so I ignore the distribution of \(\epsilon\) in what follows.

References

Adler, A. (2006). Exact laws for sums of ratios of order statistics from the Pareto distribution. Central European Journal of Mathematics, 4(1), 1–4.

Arrow, K. J. (1979). The property rights doctrine and demand revelation under incomplete information. In M. Boskin (Ed.), Economics and human welfare (pp. 23–39). New York: Academic Press.

Ausubel, L. M., & Milgrom, P. (2005). The lovely but lonely Vickrey auction. In P. Cramton, R. Steinberg, & Y. Shoham (Eds.), Combinatorial auctions (pp. 17–40). Cambridge, MA: MIT Press.

Blais, A. (2000). To vote or not to vote: The merits and limits of rational choice theory. Pittsburgh, PA: University of Pittsburgh Press.

Blumer, A., Ehrenfeucht, A., Haussler, D., & Warmuth, M. K. (1987). Occam’s razor. Information Processing Letters, 24(6), 377–380.

Cárdenas, J. C., Mantilla, C., & Zárate, R. D. (2014). Purchasing votes without cash: Implementing quadratic voting outside the lab. http://www.aeaweb.org/aea/2015conference/program/retrieve.php?pdfid=719.

Chamberlain, G., & Rothschild, M. (1981). A note on the probability of casting a decisive vote. Journal of Economic Theory, 25(1), 152–162.

Chandar, B., & Weyl, E. G. (2016). The efficiency of quadratic voting in finite populations. http://ssrn.com/abstract=2571026.

Clarke, E. H. (1971). Multipart pricing of public goods. Public Choice, 11(1), 17–33.

d’Aspremont, C., & Gérard-Varet, L. A. (1979). Incentives and incomplete information. Journal of Public Economics, 11(1), 25–45.

DellaVigna, S., List, J. A., Malmendier, U., & Rao, G. (2017). Voting to tell others. Review of Economic Studies, 84(1), 143–181.

Downs, A. (1957). An economic theory of democracy. New York: Harper.

Fiorina, M. P. (1976). The voting decision: Instrumental and expressive aspects. Journal of Politics, 38(2), 390–413.

Gelman, A., Silver, N., & Edlin, A. (2010). What is the probability your vote will make a difference? Economic Inquiry, 50(2), 321–326.

Goeree, J. K., & Zhang, J. (Forthcoming). One man, one bid. Games and Economic Behavior.

Good, I. J., & Mayer, L. S. (1975). Estimating the efficacy of a vote. Behavioral Science, 20(1), 25–33.

Groves, T. (1973). Incentives in teams. Econometrica, 41(4), 617–631.

Kahneman, D., & Tversky, A. (1979). Prospect theory: An analysis of decision under risk. Econometrica, 47(2), 263–292.

Kawai, K., & Watanabe, Y. (2013). Inferring strategic voting. American Economic Review, 103(2), 624–662.

Klüppelberg, C., & Mikosch, T. (1997). Large deviations of heavy-tailed random sums with applications in insurance and finance. Journal of Applied Probability, 34(2), 293–308.

Krishna, V., & Morgan, J. (2001). Asymmetric information and legislative rules: Some amendments. American Political Science Review, 95(2), 435–452.

Krishna, V., & Morgan, J. (2012). Majority rule and utilitarian welfare. http://papers.ssrn.com/sol3/papers.cfm?abstract_id=2083248.

Krishna, V., & Morgan, J. (2015). Majority rule and utilitarian welfare. American Economic Journal: Microeconomics, 7(4), 339–375.

Laffont, J. J., & Martimort, D. (1997). Collusion under asymmetric information. Econometrica, 65(4), 875–911.

Laffont, J. J., & Martimort, D. (2000). Mechanism design with collusion and correlation. Econometrica, 68(2), 309–342.

Laine, C. R. (1977). Strategy in point voting: A note. Quarterly Journal of Economics, 91(3), 505–507.

Lalley, S. P., & Weyl, E. G. (2016). Quadratic voting. http://papers.ssrn.com/sol3/papers.cfm?abstract_id=2003531.

Ledyard, J. (1984). The pure theory of large two-candidate elections. Public Choice, 44(1), 7–41.

Ledyard, J. O., & Palfrey, T. R. (1994). Voting and lottery drafts as efficient public goods mechanisms. Review of Economic Studies, 61(2), 327–355.

Ledyard, J. O., & Palfrey, T. R. (2002). The approximation of efficient public good mechanisms by simple voting schemes. Journal of Public Economics, 83(2), 153–171.

McLean, R. P., & Postlewaite, A. (2015). Implementation with interdependent values. Theoretical Economics, 10(3), 923–952.

Milgrom, P. R. (1981). Good news and bad news: Representation theorems and applications. Bell Journal of Economics, 12(2), 380–391.

Milgrom, P. R., & Weber, R. J. (1982). A theory of auctions and competitive bidding. Econometrica, 50(5), 1089–1122.

Mueller, D. C. (1973). Constitutional democracy and social welfare. Quarterly Journal of Economics, 87(1), 60–80.

Mueller, D. C. (1977). Strategy in point voting: Comment. Quarterly Journal of Economics, 91(3), 509.

Myatt, D. P. (2012). A rational choice theory of voter turnout. http://www2.warwick.ac.uk/fac/soc/economics/news_events/conferences/politicalecon/turnout-2012.pdf.

Myerson, R. B. (2000). Large Poisson games. Journal of Economic Theory, 94(1), 7–45.

Spenkuch, J. L. (2015). (Ir)rational voters? http://www.kellogg.northwestern.edu/faculty/spenkuch/research/voting.pdf.

Stigler, G. J. (1964). A theory of oligopoly. Journal of Political Economy, 72(1), 44–61.

Thompson, E. A. (1966). A Pareto-efficient group decision process. Public Choice, 1(1), 133–140.

Tideman, N. N., & Tullock, G. (1976). A new and superior process for making social choices. Journal of Political Economy, 84(6), 1145–1159.

Vickrey, W. (1961). Counterspeculation, auctions and competitive sealed tenders. Journal of Finance, 16(1), 8–37.

Acknowledgements

This paper was spun off from an earlier draft of the paper “Quadratic voting”, and all acknowledgements in that paper implicitly extend here. However, I additionally thank participants at the “Quadratic Voting and the Public Good” conference at the Becker Friedman Institute, and I am particularly grateful to Steve Lalley and Itai Sher for detailed comments. All errors are my own.

Appendices

Appendix 1: Collusion and fraud

Proof of Proposition 2

First consider the \(\mu =0\) case. As in the proof of Proposition 1, I use a two-case analysis, though I proceed through it more rapidly here because the reader is, I hope, already acquainted with the schematic there. The two cases are now based on whether \(\left| u_{M}\right|\) is greater or less than \(k N^{\frac{2}{2 +\alpha }} M^{\frac{\alpha -1}{2 +\alpha }}\) for any fixed \(k >0\). In the former case, the probability of this event is, by Fact 1, in

In the complementary case, by Conjecture 2 part 2, the optimal votes for any individual, even if she is a member of the collusive group, must be in \(O \left( a_{N} \vert u\vert \right)\), where u is the individual’s value. By the quadratic form of the payoff function, this must yield a value relative to not voting at all in \(O \left( a_{N}^{2} u^{2}\right)\). By the same argument, to keep the incentives to deviate to the unilateral optimal action within a constant fraction of this, the votes of each individual must be in \(O \left( a_{N} \vert u\vert \right)\). Thus, we must have \(v_{M} \in O \left( a_{N} \left| u_{M}\right| \right)\). Combining this with Conjecture 2 part 2 requires

completing the schematic from the proof of Proposition 1 necessary to establish this case.
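The quadratic-payoff step can be illustrated with a toy individual problem. This is a sketch under an assumed linearization: the payoff is \(p u v - v^{2}\), where the hypothetical parameter p stands in for the marginal pivotality whose order the text writes as \(a_N\). The optimum is \(v^{\star} = p u/2\), the gain over abstaining is \((p u)^{2}/4\), and the gain from abandoning an agreed vote level v for \(v^{\star}\) is \((v - v^{\star})^{2}\). Keeping that deviation gain within a constant fraction c of the gain from voting caps \(|v|\) at \((1+\sqrt{c})|v^{\star}|\), i.e. in \(O(p|u|)\).

```python
import math


def payoff(v, u, p):
    """Hypothetical QV payoff: expected benefit p*u*v minus quadratic cost v**2."""
    return p * u * v - v ** 2


def optimal_votes(u, p):
    """First-order condition p*u - 2*v = 0, so votes scale like p*|u|."""
    return p * u / 2


def gain_over_abstaining(u, p):
    """Value of voting optimally relative to not voting; equals (p*u)**2 / 4."""
    return payoff(optimal_votes(u, p), u, p) - payoff(0.0, u, p)


def deviation_incentive(v, u, p):
    """Gain from abandoning an agreed vote level v for the unilateral optimum.

    Because the payoff is quadratic, this equals (v - v_star)**2.
    """
    return payoff(optimal_votes(u, p), u, p) - payoff(v, u, p)


def max_sustainable_votes(u, p, c):
    """Largest |v| whose deviation incentive is at most c times the gain from voting."""
    return (1 + math.sqrt(c)) * abs(optimal_votes(u, p))


u, p = -8.0, 0.01                      # a strongly negative value, small pivotality
v_star = optimal_votes(u, p)
print(v_star, gain_over_abstaining(u, p))
# Agreeing to buy 10x the unilateral optimum creates a deviation gain 81x the voting gain.
print(deviation_incentive(10 * v_star, u, p) / gain_over_abstaining(u, p))
```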

The results for the \(\mu >0\) case follow from the fact that, given that all other voters’ behavior is assumed unchanged, the colluders cannot accomplish more when they are more constrained and thus the inefficiency they create is upper bounded by that in the unconstrained case. \(\square\)

Proof of Proposition 3

By Conjecture 3, \(E I \in O (r) =O \left(\frac{M {\mathbb {E}} \left[ \left. \left| u_{M}\right| \right| C\right] }{N}\right)\). By Fact 1, we know that \(u_{M}\) follows a power law. Additionally, because any collusive group whose collective utility is more extreme than that of a collusive group that does implement an extremist strategy will also wish to implement one, we know that a threshold \(u_{M}^{ \star }\) exists such that any coalition with utility below this threshold (above it in absolute value) will act as extremists. Thus, because the expectation of a Pareto-distributed variable above a threshold is in constant proportion to that threshold regardless of other parameters, we have that

Furthermore, by Fact 1, \(r \in \varTheta \left( N \left( \left| u_{M}^{ \star }\right| \right) ^{ -\alpha } M^{\alpha -1}\right)\). Solving this jointly with the previous equation yields the result. \(\square\)
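The joint solution can be written out explicitly. This is a sketch under one assumption: that the display omitted after "we have that" amounts to \(r \in \varTheta \left( M \left| u_{M}^{ \star }\right| /N\right)\), which is what the first relation of the proof gives once \({\mathbb {E}} \left[ \left. \left| u_{M}\right| \right| C\right] \in \varTheta \left( \left| u_{M}^{ \star }\right| \right)\). Here \(\asymp\) denotes equality up to constant factors.

```latex
\frac{M \left| u_{M}^{\star} \right|}{N} \asymp N \left| u_{M}^{\star} \right|^{-\alpha} M^{\alpha-1}
\;\Longrightarrow\;
\left| u_{M}^{\star} \right|^{1+\alpha} \asymp N^{2} M^{\alpha-2}
\;\Longrightarrow\;
\left| u_{M}^{\star} \right| \asymp N^{\frac{2}{1+\alpha}} M^{\frac{\alpha-2}{1+\alpha}},
\qquad
r \asymp \frac{M}{N} \left| u_{M}^{\star} \right|
  = N^{-\frac{\alpha-1}{\alpha+1}} M^{\frac{2\alpha-1}{\alpha+1}}.
```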

Proof of Proposition 4

For the \(\mu =0\) case, I again use a two-case analysis based on the utility of the most extreme individual being above or below \(\left(\frac{N}{L}\right)^{\frac{2}{2 +\alpha }}\). I leave out the details, given that it repeats much of the previous proof, except to note that the votes bought by the fraudulent individual are in \(O \left( a_{N} L u_{L}\right)\) and the probability that \(u_{L}\) exceeds threshold u is in \(\varTheta \left( N u^{ -\alpha }\right)\). Both of these are special cases of earlier reasoning.

For the \(\mu >0\) case with no strategic reactions by other agents, by the logic of the proof of Proposition 2 the fraudulent individual will be willing to act as an extremist if \(u_{L} \in \varOmega \left(\frac{N^{\frac{2}{1 +\alpha }}}{L}\right)\), which by the logic of the preceding paragraph happens with probability in \(O \left( N^{ -\frac{\alpha -1}{\alpha +1}} L^{\alpha }\right)\), yielding the result in the proposition’s statement.

Finally, following the proof of Proposition 3, I combine \(q \in \varTheta \left(\frac{\left| u_{L}\right| L}{N}\right)\) with \(q \in \varTheta \left( N \left| u_{L}\right| ^{ -\alpha }\right)\) to obtain the result in the text. \(\square\)
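The exponent bookkeeping in the last two paragraphs can be checked mechanically. A sketch: treat each \(\varTheta\) relation as an equality of powers of N and L, write \(|u_L| = N^{a} L^{b}\) and \(q = N^{c} L^{d}\), and solve with exact fractions; the function names are hypothetical. The second function reproduces the exponents of the extremist probability quoted for the \(\mu >0\) case, using the tail \(\varTheta(N u^{-\alpha})\) from the \(\mu =0\) paragraph.

```python
from fractions import Fraction


def fraud_exponents(alpha):
    """Solve |u_L| L / N = N |u_L|**(-alpha) for powers of N and L.

    Writes |u_L| = N**a * L**b and q = N**c * L**d and returns (a, b, c, d).
    """
    alpha = Fraction(alpha)
    # Equating the two expressions gives |u_L|**(1 + alpha) = N**2 * L**(-1).
    a = Fraction(2) / (1 + alpha)
    b = Fraction(-1) / (1 + alpha)
    # Substitute back into q = |u_L| * L / N.
    c, d = a - 1, b + 1
    return a, b, c, d


def extremist_probability_exponents(alpha):
    """Powers of N and L in N * u**(-alpha) evaluated at u = N**(2/(1+alpha)) / L."""
    alpha = Fraction(alpha)
    return 1 - 2 * alpha / (1 + alpha), alpha


for alpha in (Fraction(3, 2), 2, 3):
    a, b, c, d = fraud_exponents(alpha)
    # Consistency: the other expression, N * |u_L|**(-alpha), has the same exponents.
    assert (c, d) == (1 - Fraction(alpha) * a, -Fraction(alpha) * b)
    print(f"alpha={alpha}: q ~ N^({c}) L^({d})")
```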

Appendix 2: Aggregate uncertainty

I begin by calculating the form of equilibrium under the assumptions that all chance of being pivotal occurs when \(\gamma\) is very close to \(\gamma ^{ \star }\) and that every voter therefore buys votes \(v (u) \approx k g \left( \gamma ^{ \star }\vert u\right) u\). Let \(E (\gamma ) \equiv {\mathbb {E}} \left[ \left. g \left( \gamma ^{ \star }\vert u\right) u\right| \gamma \right]\) and \(V (\gamma ) \equiv {\mathbb {V}} \left[ \left. g \left( \gamma ^{ \star }\vert u\right) u\right| \gamma \right]\). If this is the case, then for values of \(\gamma\) close to \(\gamma ^{ \star }\) Taylor’s theorem states that

\(E (\gamma ) \approx E \left( \gamma ^{ \star }\right) +E' \left( \gamma ^{ \star }\right) \left( \gamma -\gamma ^{ \star }\right) =E' \left( \gamma ^{ \star }\right) \left( \gamma -\gamma ^{ \star }\right)\)

because \(\gamma ^{ \star }\) is defined by \({\mathbb {E}}\left[ v (u)\vert \gamma ^{ \star }\right] =0\). A similar Taylor approximation applies to \(V (\gamma )\), but I neglect all terms above the zeroth order because, unlike in the expansion of E, the zeroth-order term does not vanish and therefore dominates. Thus, I approximate \({\mathbb {E}}\left[ V\vert \gamma \right] \approx k N E' \left( \gamma ^{ \star }\right) \left( \gamma -\gamma ^{ \star }\right)\) and \({\mathbb {V}}\left[ V\vert \gamma \right] \approx k^{2} N V \left( \gamma ^{ \star }\right)\) for \(\gamma\) near \(\gamma ^{ \star }\) by conditional i.i.d. sampling of the values.

Using the central limit theorem approximation, this implies that the density of a tie conditional on \(\gamma\) is

The chance of being pivotal from the perspective of an individual with value u is this quantity integrated over possible values of \(\gamma\). For \(\gamma\) far from \(\gamma ^{ \star }\) this quantity is tiny and can thus be neglected in the calculation. For \(\gamma\) close to \(\gamma ^{ \star }\), the density of \(\gamma\) from this individual’s perspective is approximately constant at \(g \left( \gamma ^{ \star }\vert u\right)\). Thus, the chance of being pivotal from u’s perspective is approximately

For this to be consistent with the hypothesis that \(v (u) \approx k g \left( \gamma ^{ \star }\vert u\right) u\), we must therefore have

which yields the central tendency of Hypothesis 2. The \(\frac{1}{N}\) decay rate towards this is hypothesized on the basis of the decay rates towards limiting approximations that CW derive for the \(\mu =0\) case.
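The integration step can be checked numerically under stated assumptions: the tie density is the CLT normal density of the vote total at zero, with mean \(k N E'(\gamma^{\star})(\gamma - \gamma^{\star})\) and variance \(k^{2} N V(\gamma^{\star})\), and the density of \(\gamma\) is flat at \(g(\gamma^{\star}\vert u)\) over the window where ties are likely. Integrating then gives \(g(\gamma^{\star}\vert u)/(k N |E'(\gamma^{\star})|)\), with the variance term canceling. The parameter values below are arbitrary illustrations, and the function names are hypothetical.

```python
import math


def tie_density(gamma, gamma_star, k, n, e_prime, var):
    """CLT density that the vote total is exactly zero, given aggregate state gamma.

    Assumes mean k*n*E'(gamma*)*(gamma - gamma*) and variance k**2*n*V(gamma*).
    """
    mean = k * n * e_prime * (gamma - gamma_star)
    sd = k * math.sqrt(n * var)
    return math.exp(-0.5 * (mean / sd) ** 2) / (sd * math.sqrt(2 * math.pi))


def pivot_probability(g_at_star, gamma_star, k, n, e_prime, var, steps=20_000):
    """Trapezoid rule over gamma, treating the density of gamma as flat at g(gamma*|u)."""
    half_width = 10 * math.sqrt(var / n) / abs(e_prime)  # about 10 sd of the tie window
    lo = gamma_star - half_width
    h = 2 * half_width / steps
    values = [tie_density(lo + i * h, gamma_star, k, n, e_prime, var)
              for i in range(steps + 1)]
    integral = h * (sum(values) - 0.5 * (values[0] + values[-1]))
    return g_at_star * integral


def closed_form(g_at_star, k, n, e_prime):
    return g_at_star / (k * n * abs(e_prime))


args = dict(g_at_star=1.3, gamma_star=0.2, k=0.05, n=10_000, e_prime=0.7)
# The two numerical results agree with each other and with the closed form.
print(pivot_probability(var=2.0, **args), pivot_probability(var=0.5, **args))
print(closed_form(1.3, 0.05, 10_000, 0.7))
```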

Example 1

Suppose that u is equal to \(\gamma\) plus normally distributed noise with variance \(\sigma _{1}^{2}\) and that \(\gamma\) is normally distributed with mean \(\mu\) and variance \(\sigma _{2}^{2}\).

I assume, throughout and without loss of generality given the symmetry of the normal distribution, that \(\mu >0\). The marginal distribution of u is \({\mathcal {N}} \left( \mu ,\sigma _{1}^{2} +\sigma _{2}^{2}\right)\) while the \(\gamma\)-conditional distribution is \({\mathcal {N}} \left( \gamma ,\sigma _{1}^{2}\right)\) by standard properties of the normal distribution. I use this to solve for \(\gamma ^{ \star }\):

for some constants a and b independent of u as this is the only quadratic form symmetric about 0, and symmetry about 0 is clearly necessary to yield a 0 expectation given the normal form of the density.

Thus, \(\gamma ^{ \star }\) solves

In a large population, the first-best welfare is proportional to \({\mathbb {E}} \left[ \left| \gamma \right| \right] =\sigma _{2} \sqrt{\frac{2}{\pi }} e^{ -\frac{\mu ^{2}}{2 \sigma _{2}^{2}}} +\mu \left[ 1 -2 \varPhi \left( -\frac{\mu }{\sigma _{2}}\right) \right]\). Welfare loss relative to this occurs in a large population when \(\gamma \in \left( 0 ,\gamma ^{ \star }\right)\) and, in these cases, is proportional to \(\left| \gamma \right|\). This loss equals

which clearly is increasing monotonically in \(\frac{\sigma _{1}^{2}}{2 \left( \sigma _{1}^{2} +\sigma _{2}^{2}\right) } \mu\), which in turn increases monotonically in \(\sigma _{1}^{2}\). I can further compute analytically using Mathematica that

Thus, EI is

where \(x \equiv \frac{\mu }{\sigma _{2}}\). In the limit as \(\sigma _{1} \rightarrow \infty\), this becomes

and when \(\sigma _{1} =\sigma _{2}\)

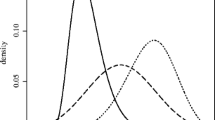

Figure 1 shows the EI expression in both of these cases. Note that 1p1v is limit-efficient by symmetry (1p1v always chooses the sign of the median, which is the same as the sign of the mean), while QV is not, because \(\gamma ^{ \star } =\frac{\sigma _{1}^{2}}{2 \left( \sigma _{1}^{2} +\sigma _{2}^{2}\right) } \mu >\gamma _{0} =0\). For large N and a fixed \(\sigma _{2}^{2}\), maximal limit-EI occurs as \(\sigma _{1}^{2} \rightarrow \infty\); globally maximal limit-EI occurs when \(\frac{\mu }{\sigma _{2}} \approx \pm 1.6\) and equals approximately 2.2%. Typically, it is much smaller; for example, if \(\sigma _{1}^{2} \rightarrow \infty\) but \(\frac{\mu }{\sigma _{2}}\) is less than \({3}/{4}\) or greater than 3, inefficiency is below 1%, and if \(\sigma _{1}^{2} =\sigma _{2}^{2}\), inefficiency is always below half a percent. A natural intuition is that as the noise of individual values, \(\sigma _{1}^{2}\), grows large, and value heterogeneity thus becomes important relative to the aggregate uncertainty, QV should become perfectly efficient. This turns out to be wrong: the greater is \(\sigma _{1}^{2}\), the lower is efficiency, presumably because the combination of extreme values and clearly separated likelihood ratios leads to greater over-weighting of the underdogs.
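Two ingredients of this example can be checked against direct numerical integration: the closed form for \({\mathbb {E}} \left[ \left| \gamma \right| \right]\) stated above, and the welfare-loss integral of \(\gamma\) times its density over \(\left( 0 ,\gamma ^{ \star }\right)\). The closed form used here for the loss comes from the substitution \(\gamma = \mu + \sigma_2 z\) and is an addition, not taken from the text. This is a sketch with arbitrary parameter values and hypothetical function names; it deliberately stops short of computing EI, whose normalization sits in the omitted displays.

```python
import math


def phi(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2 * math.pi)


def Phi(z):
    return 0.5 * (1 + math.erf(z / math.sqrt(2)))


def normal_pdf(x, mu, sd):
    return phi((x - mu) / sd) / sd


def mean_abs_gamma(mu, sigma2):
    """Closed form from the text for E|gamma|, gamma ~ N(mu, sigma2**2)."""
    return (sigma2 * math.sqrt(2 / math.pi) * math.exp(-mu ** 2 / (2 * sigma2 ** 2))
            + mu * (1 - 2 * Phi(-mu / sigma2)))


def gamma_star_example1(mu, sigma1, sigma2):
    """gamma* = sigma1**2 * mu / (2 (sigma1**2 + sigma2**2)), as stated in the text."""
    return sigma1 ** 2 * mu / (2 * (sigma1 ** 2 + sigma2 ** 2))


def loss_integral(mu, sigma2, upper):
    """Integral of gamma times its normal density over (0, upper)."""
    a, b = -mu / sigma2, (upper - mu) / sigma2
    return mu * (Phi(b) - Phi(a)) - sigma2 * (phi(b) - phi(a))


def trapezoid(f, lo, hi, steps=100_000):
    h = (hi - lo) / steps
    return h * (0.5 * f(lo) + 0.5 * f(hi) + sum(f(lo + i * h) for i in range(1, steps)))


mu, sigma1, sigma2 = 1.6, 1.0, 1.0
numeric_abs = trapezoid(lambda g: abs(g) * normal_pdf(g, mu, sigma2),
                        mu - 12 * sigma2, mu + 12 * sigma2)
g_star = gamma_star_example1(mu, sigma1, sigma2)
numeric_loss = trapezoid(lambda g: g * normal_pdf(g, mu, sigma2), 0.0, g_star)
print(mean_abs_gamma(mu, sigma2), numeric_abs)
print(loss_integral(mu, sigma2, g_star), numeric_loss)
```

Because \(\gamma ^{ \star }\) rises toward \(\mu /2\) as \(\sigma _{1}^{2}\) grows, the loss interval widens, which is the monotonicity the text relies on.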

I now consider an example based on a set-up proposed by Krishna and Morgan (2012) to study costly voting.

Example 2

Suppose that \(\gamma\) is the fraction of individuals who have positive values, but that the distribution of the value magnitudes conditional on their sign is fixed and commonly known. Let \(\mu _+\) and \(\mu _-\) be the mean magnitudes of values for those with positive and negative values, respectively. The average value conditional on \(\gamma\) is \(\gamma \mu _{ +} -(1 -\gamma ) \mu _{ -}\), so that \(\gamma _{0} =\frac{\mu _{ -}}{\mu _{ -} +\mu _{ +}}\). Up to the fixed density of value magnitudes, \(f (u\vert \gamma ) =\gamma\) for \(u >0\) and \(f (u\vert \gamma ) =1 -\gamma\) for \(u <0\). As a result, \(f (u) ={\mathbb {E}} [\gamma ]\) for \(u >0\) and \(f (u) =1 -{\mathbb {E}} [\gamma ]\) for \(u <0\). Thus \(\gamma ^{ \star }\) solves

where \(k \equiv \frac{\mu _{ +} \left( 1 -{\mathbb {E}} [\gamma ]\right) }{\mu _{ -} {\mathbb {E}} [\gamma ]}\). Solving this quadratic equation yields

The solution must be in the interval [0, 1], which the negative solution never is and the positive solution always is. Thus,

Efficiency results if and only if \(\gamma _{0} =\gamma ^{ \star }\), that is, if

that is, the election is an expected-welfare tie ex ante.

so that \(\frac{\mu _{ +}}{\mu _{ -}}>(<)\sqrt{k} \Longleftrightarrow \mu _{ +} E [\gamma ] >( <)\mu _{ -} \left( 1 -E [\gamma ]\right)\). Thus,

the Bayesian Underdog Effect (BUE).
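A sketch of the threshold calculation, under an assumption about the omitted displays: by Bayes’ rule, the posterior density of \(\gamma\) at \(\gamma ^{ \star }\) is proportional to \(\gamma ^{ \star }/{\mathbb {E}} [\gamma ]\) for \(u >0\) and to \(\left( 1 -\gamma ^{ \star }\right) /\left( 1 -{\mathbb {E}} [\gamma ]\right)\) for \(u <0\), so the condition \({\mathbb {E}} \left[ \left. g \left( \gamma ^{ \star }\vert u\right) u\right| \gamma ^{ \star }\right] =0\) reduces to \(k \left( \gamma ^{ \star }\right) ^{2} =\left( 1 -\gamma ^{ \star }\right) ^{2}\), whose only root in [0, 1] is \(\gamma ^{ \star } =1/\left( 1 +\sqrt{k}\right)\). The code checks this root, the efficiency condition \(\gamma _{0} =\gamma ^{ \star }\), and the direction of the BUE; the function names are hypothetical.

```python
import math


def gamma_zero(mu_plus, mu_minus):
    """Efficient threshold: gamma*mu_plus - (1 - gamma)*mu_minus = 0."""
    return mu_minus / (mu_minus + mu_plus)


def k_param(mu_plus, mu_minus, mean_gamma):
    """k = mu_plus (1 - E[gamma]) / (mu_minus E[gamma]), as defined in the text."""
    return mu_plus * (1 - mean_gamma) / (mu_minus * mean_gamma)


def qv_threshold(mu_plus, mu_minus, mean_gamma):
    """Root in [0, 1] of k*g**2 = (1 - g)**2 (assumed form of the omitted display)."""
    return 1 / (1 + math.sqrt(k_param(mu_plus, mu_minus, mean_gamma)))


for mu_plus, mu_minus, mean_gamma in [(4, 1, 0.5), (1, 4, 0.8), (1, 3, 0.5)]:
    g0 = gamma_zero(mu_plus, mu_minus)
    g_star = qv_threshold(mu_plus, mu_minus, mean_gamma)
    # Positive: the positive side is ahead ex ante, and QV raises its bar (g_star > g0).
    advantage = mu_plus * mean_gamma - mu_minus * (1 - mean_gamma)
    print(f"gamma_0={g0:.3f} gamma*={g_star:.3f} ex ante advantage={advantage:+.2f}")
```

The middle case is an ex ante expected-welfare tie, so the two thresholds coincide; in the other two, \(\gamma ^{ \star }\) is shifted toward the side that is behind ex ante.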

Under 1p1v, the threshold in \(\gamma\) for implementing the alternative with high probability is \({1}/{2}\). For each regime, I compute EI as

where \(\gamma _{t}\) is the appropriate threshold value of \(\gamma\). Using this method and explicit integration in Mathematica, I computed the efficiency of QV and 1p1v relative to the first best, assuming that g follows a Beta distribution. Note that, if one divides the numerator and denominator by \(\mu _{ -}\),

So EI depends only on the ratio \(r \equiv \frac{\mu _{ +}}{\mu _{ -}}\) and on the parameters of the Beta distribution, not on \(\mu _{ +}\) and \(\mu _{ -}\) separately.

Figure 2: EI of QV compared with 1p1v in the Krishna and Morgan (2012) example with \(\gamma\) distributed uniformly (left), \(\beta (15,10)\) (center) and \(\beta (10,1)\) (right). Both axes are on a log scale, though labeled linearly; the x-axis measures \(r=\frac{\mu _{+}}{\mu _{-}}\).

Figure 2 shows three examples that are representative of the more than 100 cases with which I experimented. Whenever \(\alpha =\beta\) (the distribution of \(\gamma\) is symmetric), QV always dominates 1p1v, as it does in the left panel, where \(\alpha =\beta =1\), the uniform distribution. 1p1v obviously performs best when r, shown on the horizontal axis, is near unity. When \(\alpha\) is larger than \(\beta\), 1p1v may outperform QV near \(r =1\). This is shown in the center and right panels, where \((\alpha ,\beta ) =(15 ,10)\) and (10, 1), respectively. The larger \(\alpha\) is relative to \(\beta\), the wider the region over which voting outperforms QV. However, it is precisely in these cases that, if r is very small, voting is most dramatically inefficient. Intuitively, majority rule may outperform QV by blindly favoring the majority, which almost always favors taking the action for \(\alpha \gg \beta\), while QV may be a bit too conservative in favoring the action because of the BUE. However, this blind favoritism towards the majority view can be highly destructive under 1p1v, but not under QV, when the minority has an intense preference. In fact, while voting becomes highly inefficient when the minority preference becomes very intense, QV actually comes closer to the first best. In all cases (those shown here and those I have sampled), QV’s efficiency is above 95% and usually well above that.

In summary, EI is never greater than 5% for QV and can be arbitrarily large for majority-rule voting. In the special case in which \(\gamma\) has a uniform distribution, QV dominates 1p1v, which may have EI as large as 25%, while QV’s EI is never greater than 3%. For “most” parameter ranges, QV appears to outperform majority-rule voting, often quite significantly.
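For the uniform case the comparison has a closed form, but only under an assumption: the EI display is omitted in this version, so the sketch takes EI to be the expected welfare lost between \(\gamma _{0}\) and the threshold, divided by the expected absolute average value, with average value \(w(\gamma) =\gamma \mu _{ +} -(1 -\gamma ) \mu _{ -}\), and takes the QV threshold to be \(1/(1 +\sqrt{k})\), with \(k =r\) when \({\mathbb {E}} [\gamma ] =1/2\). Under that assumption the QV threshold always lies between \(\gamma _{0}\) and 1/2, so QV dominates 1p1v; on the grid, the maximum QV inefficiency is just under 3% and 1p1v’s approaches but never reaches 25%, in line with the bounds stated above. This is a consistency check, not the author’s Mathematica computation, and the function names are hypothetical.

```python
import math


def ei_uniform(threshold, g0):
    """Assumed EI with gamma ~ U[0, 1] and average value (mu_plus + mu_minus)(gamma - g0).

    Welfare lost between g0 and the threshold, divided by E|average value|;
    the common factor (mu_plus + mu_minus) cancels, leaving only r.
    """
    return (threshold - g0) ** 2 / (g0 ** 2 + (1 - g0) ** 2)


def ei_pair(r):
    """(QV, 1p1v) inefficiency for r = mu_plus / mu_minus with uniform gamma."""
    g0 = 1 / (1 + r)               # efficient threshold gamma_0
    g_qv = 1 / (1 + math.sqrt(r))  # QV threshold with k = r when E[gamma] = 1/2
    return ei_uniform(g_qv, g0), ei_uniform(0.5, g0)


grid = [10 ** (i / 100) for i in range(-400, 401)]   # r from 1e-4 to 1e4
pairs = [ei_pair(r) for r in grid]
worst_qv = max(qv for qv, _ in pairs)
worst_1p1v = max(mv for _, mv in pairs)
print(f"max QV EI {worst_qv:.4f}, max 1p1v EI {worst_1p1v:.4f}")
```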

Finally I consider an example similar to the previous one but calibrated to the evaluation of Proposition 8 in California from Sect. 2.4.

Example 3

Suppose that 4% of the electorate is commonly known to oppose the alternative and is willing to pay on average $34k to see it defeated. Suppose that the other 96% of the electorate is willing to pay on average $5k to either support or oppose the alternative, with the intensity of their values being independent of \(\gamma\). Here, \(\gamma\) is the fraction of the 96% that support the alternative and is assumed to have a Beta distribution with parameters set so that the mean fraction of the population in favor of the alternative is 52%. The average value from implementing the alternative is therefore \(\$4800 (2 \gamma -1) -1360\) and \(\gamma _{0} =0.64\).

Individuals in the 4% receive no signal about \(\gamma\), and thus \(f (u\vert \gamma )\) for this group is simply f(u). For proponents of the alternative among the 96%, \(f (u\vert \gamma )\) is, by the logic of the previous example, \(0.96 f (u) \gamma\), and for opponents among the 96% it is \(0.96 f (u) (1 -\gamma )\); \(f (u)\) is formed by taking expectations over \(\gamma\) as in the previous example. Using the same derivations as there, I can solve for \(\gamma ^{ \star }\).

To calibrate, I assume that \(\gamma\) has a Beta distribution and that

\(0.96 \frac{\alpha }{\alpha +\beta } =0.52\)

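Solving this out implies that \(\alpha =1.18 \beta\). The variance of \(\gamma\) is given by the standard formula for the variance of a Beta-distributed variable:

\({\mathbb {V}} [\gamma ] =\frac{\alpha \beta }{(\alpha +\beta )^{2} (\alpha +\beta +1)} =\frac{1.18 \beta ^{2}}{(2.18 \beta )^{2} (2.18 \beta +1)} \approx \frac{0.25}{2.18 \beta +1}\)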

Thus the standard deviation of the total fraction of the population supporting the alternative is \(\frac{0.96 \cdot .5}{\sqrt{2.18 \beta +1}} =\frac{0.48}{\sqrt{2.18 \beta +1}}\) and thus if the standard deviation of the vote share for the alternative under standard voting is \(\sigma\) then \(\beta =\frac{0.45 (0.23 -\sigma ^{2})}{\sigma ^{2}}\). Figure 3 shows the dominance of QV.

QV thus is always superior to 1p1v, and the gap is larger the smaller is the standard deviation of the vote share. When the standard deviation of the population share supporting the alternative is 5 percentage points (well above the margin of error in most individual polls), QV has 4% EI and 1p1v 47% EI. Even when the standard deviation is 20 percentage points, QV achieves 1.2% EI while 1p1v has 7%.
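The calibration arithmetic of this example can be reproduced as a sketch; the constant and function names are hypothetical. It recovers \(\gamma _{0} \approx 0.64\) and \(\alpha /\beta \approx 1.18\), and inverts the standard-deviation formula exactly rather than with rounded constants. The exact inverse is \(\beta =\left( 1 -{\mathbb {E}} [\gamma ]\right) (0.2288 -\sigma ^{2})/\sigma ^{2}\) with \(1 -{\mathbb {E}} [\gamma ] \approx 0.458\), so the 0.45 and 0.23 in the text are rounded constants; the two versions agree to within 2% for \(\sigma\) between 5 and 20 percentage points.

```python
import math

OPPONENT_SHARE = 0.04      # commonly known opponents
OTHER_SHARE = 0.96
OPPONENT_WTP = 34_000      # average willingness to pay of the 4%
OTHER_WTP = 5_000          # average willingness to pay of the 96%


def average_value(gamma):
    """Average value of the alternative: 4800 (2 gamma - 1) - 1360."""
    return OTHER_SHARE * OTHER_WTP * (2 * gamma - 1) - OPPONENT_SHARE * OPPONENT_WTP


def gamma_zero():
    """Root of average_value, i.e. the efficient threshold gamma_0."""
    slope = OTHER_SHARE * OTHER_WTP
    return 0.5 * (1 + OPPONENT_SHARE * OPPONENT_WTP / slope)


def beta_parameters(sd_support, mean_support=0.52):
    """Beta(alpha, beta) for gamma matching mean and sd of total support 0.96*gamma."""
    m = mean_support / OTHER_SHARE                 # E[gamma]
    # Var[0.96 gamma] = 0.96**2 m (1 - m) / (alpha + beta + 1); solve for alpha + beta.
    concentration = OTHER_SHARE ** 2 * m * (1 - m) / sd_support ** 2 - 1
    return m * concentration, (1 - m) * concentration


def support_sd(alpha, beta):
    """Standard deviation of total support 0.96*gamma under Beta(alpha, beta)."""
    var_gamma = alpha * beta / ((alpha + beta) ** 2 * (alpha + beta + 1))
    return OTHER_SHARE * math.sqrt(var_gamma)


alpha, beta = beta_parameters(sd_support=0.05)
print(round(gamma_zero(), 4), round(alpha / beta, 4), round(beta, 2))
```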

Appendix 3: Voter behavior

Proof of Proposition 6

In the counterfactual version of the expressivist model contemplated in Hypothesis 3, unless there exists a single voter with a negative value larger in magnitude than the sum of the values of the rest of the population, standard large deviations results (Klüppelberg and Mikosch 1997) imply that V and U are both positive with probability whose complement is in \(O \left( N^{ -\gamma }\right)\) for any \(\gamma >1\). Thus, the probability of this event, which is in \(O \left( N^{ -\left( \alpha -1\right) }\right)\) by Fact 1, asymptotically dominates any other contributions to \(\widehat{E I}\). Hence \(\widehat{E I} \in O \left( N^{ -\left( \alpha -1\right) }\right)\) and thus \(E I \in O \left( N^{ -\left( \alpha -1\right) }\right)\) by Hypothesis 3. Tighter bounds may be possible, as in some events in which one individual is very influential, efficiency may nonetheless arise, but I do not attempt to quantify what happens in that case.

In the misperception model, if there exists neither an extremist nor an individual whose value is comparable in size to the aggregate value of all other individuals, then by the same reasoning as in the last paragraph EI is exponentially small. Thus, we can upper bound EI by the probability of the union of two events: that an extremist exists, and that an individual who is very influential on the aggregate value exists. By the logic of the previous paragraph, the probability of the second event is in \(\varTheta \left( N^{ -\left( \alpha -1\right) }\right)\) and, by Hypothesis 3, the probability of the first event is in \(\varTheta \left( N^{ -\frac{1 +\beta -2 \alpha \beta }{1 +\beta +2 \alpha }}\right)\). One of these events or the other will dominate asymptotically, implying the result. In this case, I do not believe that tighter bounds are possible: the rationality of extremists will, I believe, often imply that, for the large values of \(\beta\) where the possibility of an individual who is influential on the aggregate value dominates inefficiency, that individual will often be out-voted despite being the utilitarian optimal winner; the outcome thus will be inefficient in many of these cases. \(\square\)

About this article

Cite this article

Weyl, E.G. The robustness of quadratic voting. Public Choice 172, 75–107 (2017). https://doi.org/10.1007/s11127-017-0405-4

DOI: https://doi.org/10.1007/s11127-017-0405-4