Abstract

Experiments demonstrate that elites can influence public opinion through framing. Yet outside laboratories or surveys, real-world constraints are likely to limit elites’ ability to reshape public opinion. Additionally, it is difficult to distinguish framing from related processes empirically. This paper uses the 2009–2010 health care debate, coupled with automated content analyses of elite- and mass-level language, to study real-world framing effects. Multiple empirical tests uncover limited but real evidence of elite influence. The language Americans use to explain their opinions proves generally stable, although there is also evidence that the public adopts the language of both parties’ elites symmetrically. Elite rhetoric does not appear to have strong effects on Americans’ overall evaluations of health care reform, but it can influence the reasons they provide for their evaluations. Methodologically, the automated analysis of elite rhetoric and open-ended questions shows promise in distinguishing framing from other communication effects and illuminating elite-mass interactions.

Notes

The three journals in question are the American Journal of Political Science, American Political Science Review, and Journal of Politics.

The KFF data was obtained from the Roper Center’s iPoll database. The Pew Research Center’s surveys were downloaded from http://www.pewresearch.org/data/download-datasets/. All other code and data to replicate the research reported herein is available at https://dataverse.harvard.edu/dataverse/DJHopkins.

To be sure, those changes in accessibility may be fleeting—and elite framing could influence public opinion even without influencing public word choice, especially if its effects do not rely on memory-based processing (see also Druckman and Leeper 2012). Put differently, in an online processing model of cognition, frames have the potential to influence attitudes even if the individual does not remember or employ the words used in the frame.

The fact that the Dirichlet distribution is conjugate to the Multinomial enables researchers to fit the model using either a Gibbs sampler or variational inference (Blei et al. 2003).

LDA allows words to be characteristic of multiple topics. In theory, however, LDA has a potential limitation in this application. Political rhetoric commonly combines frames, but LDA’s use of the Dirichlet distribution to model topic generation means that the topics are assumed to be independent of one another. We thus confirm our analyses of elite rhetoric with the Correlated Topic Model (Blei and Lafferty 2006), which replaces the Dirichlet distribution giving rise to the topic probabilities with a logistic normal distribution.

The search terms were “healthcare,” “health care,” “obamacare,” “health reform,” and “health insurance reform.”

Lexis–Nexis keyword searches of CNN and Fox News transcripts indicate that attention to health care reform rose to a peak of 603 stories in August 2009, remained high through the fall and winter, and reached its maximum of 667 stories in March 2010. According to the Gallup Most Important Problem series (Baumgartner and Jones 2013), the share of the public citing health care as the nation’s most important problem rose from 7% in the second quarter of 2009 to 16% in the third quarter, and remained above 13% in the first quarter of 2010.

Like the significant majority of automated analyses in political science to date, our analyses focus only on single words or unigrams, although incorporating common bigrams (such as “public option”) would be a useful extension.

For the press releases, we remove 170 such text strings. Given the differing format of the press appearances, we need remove only a small set of 11 names, typically those of the shows’ hosts.
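The preprocessing described in the preceding notes (unigrams only, with selected text strings and stop words removed) can be sketched as follows. This is a minimal illustration, not the author's pipeline: the stop-word set and the removed strings below are hypothetical placeholders, and stemming is omitted.

```python
import re
from collections import Counter

# Hypothetical, abbreviated lists. The actual stop-word list and the 170
# removed strings are not reproduced in the text.
STOP_WORDS = {"the", "a", "an", "of", "to", "and", "in", "is", "for", "on", "that", "will"}
REMOVE_STRINGS = ["Senator Jane Doe", "For Immediate Release"]

def unigram_counts(text, remove_strings=REMOVE_STRINGS, stop_words=STOP_WORDS):
    """Return a Counter of lower-cased unigrams after string and stop-word removal."""
    for s in remove_strings:
        text = text.replace(s, " ")          # drop boilerplate strings first
    tokens = re.findall(r"[a-z]+", text.lower())
    return Counter(t for t in tokens if t not in stop_words)

doc = "For Immediate Release: Senator Jane Doe said the public option will lower costs."
counts = unigram_counts(doc)
```

Because the counts are over single words, a phrase such as “public option” is recorded only as the separate tokens “public” and “option”.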

Specifically, with the topicmodels package for R, we fit LDA using a collapsed Gibbs sampler. The Gibbs sampler was run for 200,000 iterations after discarding 30,000 burn-in iterations, and then thinned by 1/1000. The prior for \(\alpha\) is set to 50/K, or 4.1667. Estimation using Variational Expectation-Maximization yields highly similar results. We fit the CTM using Variational Expectation-Maximization, with the two tolerance parameters set to \(10^{-5}\). The convergence of the Gibbs sampler was checked informally by running it three times with varying starting points and observing essentially identical clustering patterns.
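To make the estimation settings concrete, the following is a minimal pure-Python sketch of a collapsed Gibbs sampler for LDA. It is not the topicmodels implementation used in the paper. The toy corpus, the value of `beta`, and the small iteration count are assumptions chosen for illustration; only the rule \(\alpha = 50/K\) and the run lengths in the first lines come from the note above.

```python
import random

# Settings reported in the note: K = 12 topics, alpha = 50 / K,
# 200,000 post-burn-in iterations thinned by 1/1000.
K_paper = 12
alpha_paper = 50 / K_paper
retained_draws = 200_000 // 1000

def lda_gibbs(docs, K, V, alpha, beta, n_iter, seed=0):
    """Collapsed Gibbs sampler for LDA; docs is a list of lists of word ids in [0, V)."""
    rng = random.Random(seed)
    ndk = [[0] * K for _ in docs]        # topic counts per document
    nkw = [[0] * V for _ in range(K)]    # word counts per topic
    nk = [0] * K                         # total tokens per topic

    def bump(d, k, w, delta):
        ndk[d][k] += delta
        nkw[k][w] += delta
        nk[k] += delta

    # Random initial topic assignment for every token
    z = [[rng.randrange(K) for _ in doc] for doc in docs]
    for d, doc in enumerate(docs):
        for k, w in zip(z[d], doc):
            bump(d, k, w, +1)

    for _ in range(n_iter):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                bump(d, z[d][i], w, -1)  # remove the token's current assignment
                # Full conditional for the token's topic given all other assignments
                weights = [(ndk[d][j] + alpha) * (nkw[j][w] + beta) / (nk[j] + V * beta)
                           for j in range(K)]
                z[d][i] = rng.choices(range(K), weights=weights)[0]
                bump(d, z[d][i], w, +1)

    # Smoothed topic proportions per document from the final state
    theta = [[(ndk[d][k] + alpha) / (len(doc) + K * alpha) for k in range(K)]
             for d, doc in enumerate(docs)]
    return theta, nkw

# Toy corpus (hypothetical): 3 documents over a 5-word vocabulary, 2 topics
toy_docs = [[0, 1, 2, 0], [3, 4, 3, 4], [0, 1, 3, 4]]
theta, nkw = lda_gibbs(toy_docs, K=2, V=5, alpha=50 / 2, beta=0.1, n_iter=200)
```

With a prior as large as 50/K, the estimated document-topic proportions are pulled strongly toward uniformity, which is worth keeping in mind when reading topic shares.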

CTM also returns a \((K-1) \times (K-1)\) matrix \(\Sigma\) indicating the covariances between the transformed topic probabilities.
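As an illustration of why the covariance matrix has \(K-1\) rows and columns, the sketch below maps a \((K-1)\)-dimensional Gaussian draw to \(K\) topic probabilities, using the last topic as a reference category fixed at zero. That parameterization is an assumption made here for illustration, as are the function names and the values of `mu`, `sd`, and `rho`; the example covers only the \(K = 3\) case.

```python
import math
import random

def eta_to_theta(eta):
    """Map K-1 unconstrained values to K probabilities (last topic is the reference)."""
    full = list(eta) + [0.0]
    m = max(full)                               # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in full]
    total = sum(exps)
    return [e / total for e in exps]

def draw_theta_k3(mu, sd, rho, rng):
    """Draw topic probabilities for K = 3 from a logistic normal with correlation rho."""
    z1, z2 = rng.gauss(0, 1), rng.gauss(0, 1)
    eta = [mu[0] + sd[0] * z1,
           mu[1] + sd[1] * (rho * z1 + math.sqrt(1 - rho ** 2) * z2)]
    return eta_to_theta(eta)

rng = random.Random(1)
theta = draw_theta_k3(mu=[0.0, 0.0], sd=[1.0, 1.0], rho=0.8, rng=rng)
```

A positive `rho` makes the first two topics tend to rise and fall together relative to the reference topic, which is the kind of co-occurrence a Dirichlet prior cannot express.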

The results from models fit with K set to 10 and 14 are available in Figs. 7 and 8 in Online Appendix, respectively.

For the robustness check using CTM, see Fig. 9 in Online Appendix.

For a more detailed discussion of interpreting the output from topic models, see Boyd-Graber et al. (2009).

Given the prior for \(\alpha\), it is not surprising that the LDA model distributes the probability relatively evenly across the twelve categories. The lowest-probability frame still had 0.074 of the total probability mass, while the highest-probability frame had only 0.095.

While most of these clusters are legitimate frames, some are procedural clusters without significant framing value. The cluster labeled with the stems “law,” “report,” “act,” and “fraud” (top right) appears to be one such cluster.

These analyses include television appearances by figures of both parties—including Senators, Representatives, and members of the Obama administration—that dealt with health care reform. Such press appearances are widely seen as attempts at agenda-setting, and present prime opportunities to offer health care-related frames. We collected such press appearances from five media outlets: Fox News, CNN, ABC, NBC, and CBS.

We exclude a small number of names—typically those of the shows’ hosts—as well as common English-language stop words.

As with the press releases, we also observe a few catch-all clusters without substantive interpretations.

Pew asked respondents, “what would you say is the main reason you favor/oppose the health care proposals being discussed in Congress?”

The Kaiser question asked, “Can you tell me in your own words what is the main reason you have a favorable/an unfavorable opinion of the health care reform law?”

For the Kaiser surveys, the comparable error rates were 1–2%.

Although “death panel” is technically a bigram, the absence of these unigrams implies its absence as a two-word phrase.

The Kullback-Leibler divergence between two distributions \(P_{pr}\) and \(P_{sur}\) is defined as \(\sum_i P_{pr}(i) \log \big( \frac{P_{pr}(i)}{P_{sur}(i)} \big)\). Because this quantity is asymmetric, we symmetrize it by adding the divergence from \(P_{pr}\) to \(P_{sur}\) to the divergence from \(P_{sur}\) to \(P_{pr}\).
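The symmetrized divergence can be computed in a few lines. The sketch below is illustrative only: the function names and the two toy distributions are assumptions, and it presumes that every category with positive probability under one distribution also has positive probability under the other (otherwise some smoothing would be needed).

```python
import math

def kl(p, q):
    """Kullback-Leibler divergence sum_i p(i) * log(p(i) / q(i))."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def symmetric_kl(p, q):
    """Sum of the divergences taken in both directions."""
    return kl(p, q) + kl(q, p)

# Toy word-share distributions for press releases and survey responses
p_pr = [0.5, 0.5]
p_sur = [0.25, 0.75]
d = symmetric_kl(p_pr, p_sur)
```

For these two toy distributions the result works out analytically to \(\tfrac{1}{4}\log 3 \approx 0.275\), and swapping the arguments leaves it unchanged.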

Keep in mind that Pearson’s correlation is inversely related to distance, so upward arrows in the right panel of Fig. 10 indicate an increasingly close relationship.

The Euclidean distance detects statistically significant declines in the first time period from late July to November 2009, with p-values of 0.004 for Democratic press releases and 0.003 for Republican press releases.
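For reference, the two alternative similarity measures mentioned in these notes, Euclidean distance and Pearson’s correlation, can be sketched as below. The function names and example vectors are illustrative assumptions; the significance tests reported above are not reproduced.

```python
import math

def euclidean(p, q):
    """Euclidean distance between two equal-length vectors of word shares."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))

def pearson(p, q):
    """Pearson correlation between two equal-length vectors."""
    n = len(p)
    mean_p, mean_q = sum(p) / n, sum(q) / n
    cov = sum((a - mean_p) * (b - mean_q) for a, b in zip(p, q))
    var_p = sum((a - mean_p) ** 2 for a in p)
    var_q = sum((b - mean_q) ** 2 for b in q)
    return cov / math.sqrt(var_p * var_q)

# Toy word-share vectors (hypothetical)
elite = [0.40, 0.35, 0.25]
public_early = [0.20, 0.30, 0.50]
public_late = [0.35, 0.35, 0.30]

dist_early, dist_late = euclidean(elite, public_early), euclidean(elite, public_late)
corr_early, corr_late = pearson(elite, public_early), pearson(elite, public_late)
```

In this toy case the distance falls while the correlation rises from the early to the late vector, so the two measures move in opposite directions as language converges.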

Prior to listwise deletion, there are 43,887 respondents. The results remain quite similar when omitting the measure of respondents’ income, which itself accounts for 47% of the missingness.

After the bill’s passage, the language was modified to ask about conditions “under the new health reform law.”

Not all respondents over 65 report insurance through Medicare, although a significant majority do.

References

Arceneaux, K., Johnson, M., & Murphy, C. (2012). Polarized political communication, oppositional media hostility, and selective exposure. The Journal of Politics, 74(1), 174–186.

Barabas, J., & Jerit, J. (2010). Are survey experiments externally valid? American Political Science Review, 104(2), 226–242.

Baumgartner, F. R., & Jones, B. D. (2013). Policy Agendas Project. http://www.policyagendas.org/.

Baumgartner, F. R., Berry, J. M., Hojnacki, M., Leech, B. L., & Kimball, D. C. (2009). Lobbying and policy change: Who wins, who loses, and why. Chicago: University of Chicago Press.

Baumgartner, F. R., De Boef, S., & Boydstun, A. E. (2008). The decline of the death penalty and the discovery of innocence. New York: Cambridge University Press.

Berinsky, A. J. (2007). Assuming the costs of war: Events, elites, and American public support for military conflict. Journal of Politics, 69(4), 975–997.

Blei, D. M., & Lafferty, J. D. (2006). Correlated topic models. Advances in Neural Information Processing Systems, 18, 147.

Blei, D. M., Ng, A. Y., & Jordan, M. I. (2003). Latent Dirichlet allocation. Journal of Machine Learning Research, 3, 993–1022.

Boyd-Graber, J., Chang, J., Gerrish, S., Wang, C., & Blei, D. (2009). Reading tea leaves: How humans interpret topic models. In Proceedings of the 23rd annual conference on neural information processing systems.

Bullock, J. G. (2011). Elite influence on public opinion in an informed electorate. American Political Science Review, 105(3), 496–515.

Campbell, A. L. (2011). Policy feedbacks and the impact of policy designs on public opinion. Journal of Health Politics, Policy, and Law, 36(6), 961–973.

Chong, D., & Druckman, J. N. (2007a). A theory of framing and opinion formation. Journal of Communication, 57, 99–118.

Chong, D., & Druckman, J. N. (2007b). Framing public opinion in competitive democracies. American Political Science Review, 101(4), 637–655.

Chong, D., & Druckman, J. N. (2010). Dynamic public opinion: Communication effects over time. American Political Science Review, 104(4), 663–680.

Chong, D., & Druckman, J. N. (2011). Public-elite interactions: Puzzles in search of researchers. In R. Y. Shapiro & L. Jacobs (Eds.), The Oxford Handbook of American Public Opinion and the Media. New York: Oxford University Press.

De Vreese, C. H. (2003). Framing Europe: Television news and European integration. Amsterdam: Het Spinhuis.

Druckman, J. N. (2003). On the limits of framing effects: Who can frame? Journal of Politics, 63(4), 1041–1066.

Druckman, J. N. (2004). Political preference formation: Competition, deliberation, and the (ir)relevance of framing effects. American Political Science Review, 98(4), 671–686.

Druckman, J. N., & Leeper, T. J. (2012). Learning more from political communication experiments: Pretreatment and its effects. American Journal of Political Science, 56(4), 875–896.

Druckman, J. N., Fein, J., & Leeper, T. J. (2012). A source of bias in public opinion stability. American Political Science Review, 106(2), 430–454.

Edwards, G. C. (2009). The strategic president: Persuasion and opportunity in presidential leadership. Princeton, NJ: Princeton University Press.

Eisenstein, J., O’Connor, B., Smith, N. A., & Xing, E. P. (2010). A latent variable model for geographic lexical variation. In Proceedings of the 2010 conference on empirical methods in natural language processing. Association for Computational Linguistics, pp. 1277–1287.

Entman, R. M. (2004). Projections of power: Framing news, public opinion, and U.S. Foreign Policy. Chicago: University of Chicago Press.

Gamson, W. A. (1992). Talking politics. New York: Cambridge University Press.

Gelman, A., & Hill, J. (2006). Data analysis using regression and multilevel/hierarchical models. New York: Cambridge University Press.

Grimmer, J. (2010). A Bayesian hierarchical topic model for political texts: Measuring expressed agendas in senate press releases. Political Analysis, 18(1), 1–35.

Grimmer, J., & King, G. (2011). General purpose computer-assisted clustering and conceptualization. Proceedings of the National Academy of Sciences, 108(7), 2643–2650.

Grimmer, J., & Stewart, B. (2013). Text as data: The promise and pitfalls of automatic content analysis methods for political texts. Political Analysis (Forthcoming).

Hayes, D. (2008). Does the messenger matter? Candidate-media agenda convergence and its effects on voter issue salience. Political Research Quarterly, 61(1), 134–146.

Henderson, M., & Sunshine Hillygus, D. (2011). The dynamics of health care opinion, 2008–2010: Partisanship, self-interest, and racial resentment. Journal of Health Politics, Policy, and Law, 36(6), 945–960.

Hill, S. J., Lo, J., Vavreck, L., & Zaller, J. (2013). How quickly we forget: The duration of persuasion effects from mass communication. Political Communication, 30, 521–547.

Hopkins, D. J., & King, G. (2010). A method of automated nonparametric content analysis for social science. American Journal of Political Science, 54(1), 229–247.

Huber, G. A., & Paris, C. (2012). Assessing the programmatic equivalence assumption in question wording experiments: Understanding why Americans like assistance to the poor more than welfare. Public Opinion Quarterly, 77, 385–397.

Iyengar, S., & Kinder, D. R. (1987). News that matters. Chicago: University of Chicago Press.

Jacobs, L. R., & Burns, M. (2004). The second face of the public presidency: Presidential polling and the shift from policy to personality polling. Presidential Studies Quarterly, 34(3), 536–556.

Jacobs, L. R., & Shapiro, R. Y. (2000). Politicians don’t pander: Political manipulation and the loss of democratic responsiveness. Chicago: University of Chicago Press.

Jacobs, L. R., Page, B. I., Burns, M., McAvoy, G., & Ostermeier, E. (2003). What presidents talk about: The Nixon case. Presidential Studies Quarterly, 33(4), 751–771.

Kellstedt, P. M. (2003). The mass media and the dynamics of American racial attitudes. New York: Cambridge University Press.

Kriner, D. L., & Reeves, A. (2014). Responsive partisanship: Public support for the Clinton and Obama health care plans. Journal of Health Politics, Policy and Law, 39(4), 717–749.

Lauderdale, B. E., & Clark, T. S. (2014). Scaling politically meaningful dimensions using texts and votes. American Journal of Political Science, 58(3), 754–771.

Lecheler, S., de Vreese, C., & Slothuus, R. (2009). Issue importance as a moderator of framing effects. Communication Research, 36(3), 400–425.

Leeper, T., & Slothuus, R. (2015). Can citizens be framed? How information, not emphasis, changes opinions. Aarhus C: Aarhus University.

Lenz, G. S. (2013). Follow the leader?: How voters respond to politicians’ policies and performance. Chicago: University of Chicago Press.

Lodge, M., & Taber, C. S. (2013). The rationalizing voter. Cambridge: Cambridge University Press.

Lynch, J., & Gollust, S. E. (2010). Playing fair: Fairness beliefs and health policy preferences in the United States. Journal of Health Politics, Policy and Law, 35(6), 849–887.

Mutz, D. C. (1994). Contextualizing personal experience: The role of mass media. The Journal of Politics, 56(3), 689–714.

Nelson, T. (2011). Issue framing. In R. Y. Shapiro & L. Jacobs (Eds.), The Oxford Handbook of American Public Opinion and the Media (pp. 189–203). New York: Oxford University Press.

Nelson, T. E., Clawson, R. A., & Oxley, Z. M. (1997). Media framing of a civil liberties conflict and its effect on tolerance. American Political Science Review, 91(3), 567–583.

Noel, H. (2014). Political ideologies and political parties in America. New York: Cambridge University Press.

Nyhan, B. (2010). Why the “Death Panel” myth wouldn’t die: Misinformation in the health care reform debate. The Forum, 8(1), 1–24.

Payne, S. L. (1951). The art of asking questions. Princeton, NJ: Princeton University Press.

Porter, M. F. (1980). An algorithm for suffix stripping. Program: Electronic Library and Information Systems, 14(3), 130–137.

Prior, M. (2007). Post-broadcast democracy: How media choice increases inequality in political involvement and polarizes elections. New York: Cambridge University Press.

Quinn, K. M., Monroe, B. L., Colaresi, M., Crespin, M. H., & Radev, D. R. (2010). How to analyze political attention with minimal assumptions and costs. American Journal of Political Science, 54(1), 209–228.

Roberts, M. E., Stewart, B. M., Tingley, D., Lucas, C., Leder-Luis, J., Gadarian, S. K., et al. (2014). Structural topic models for open-ended survey responses. American Journal of Political Science, 58(4), 1064–1082.

Scherer, M. (2010). The White House scrambles to tame the news cyclone. Time, March 4.

Scheufele, D. A., & Iyengar, S. (2012). The state of framing research: A call for new directions. In K. Kenski & K. H. Jamieson (Eds.), The Oxford handbook of political communication theories (pp. 1–26). New York: Oxford University Press.

Shapiro, R. Y., & Jacobs, L. (2010). Simulating representation: Elite mobilization and political power in health care reform. The Forum, 8(1), 1–15.

Slothuus, R., & de Vreese, C. H. (2010). Political parties, motivated reasoning, and issue framing effects. Journal of Politics, 72(3), 630–645.

Smith, M. A. (2007). The right talk: How conservatives transformed the great society into the economic society. Princeton, NJ: Princeton University Press.

Sniderman, P. M., & Theriault, S. M. (2004). The structure of political argument and the logic of issue framing. Studies in Public Opinion: Attitudes, Nonattitudes, Measurement Error, and Change (pp. 133–65). Princeton, NJ: Princeton University Press.

Steinhauer, J., & Pear, R. (2011). G.O.P. Newcomers set out to undo Obama victories. New York Times.

Taber, C. S., & Lodge, M. (2006). Motivated skepticism in the evaluation of political beliefs. American Journal of Political Science, 50(3), 755–769.

Acknowledgements

The author gratefully acknowledges feedback or recommendations from Michael Bailey, Andrea L. Campbell, Lee Drutman, Bill Gormley, Justin Grimmer, Justin Gross, Jacob Hacker, Danny Hayes, Gary King, David Konisky, Jonathan M. Ladd, Ben Lauderdale, Thomas Leeper, Marc Meredith, Burt Monroe, Hans Noel, Brendan Nyhan, Kalind Parish, Andrew Reeves, John Sides, and Brandon Stewart.

Additional information

Prior versions of this manuscript were presented at the 2011 Summer Meeting of the Society for Political Methodology at Princeton University, the 2012 “Analyzing Text as Data” conference at Harvard University, the McCourt School of Public Policy at Georgetown University in 2014, and the Northeastern Methods Meeting at New York University in April 2016. This manuscript would not have been possible without the tireless and insightful research assistance of Robert Biemesderfer, Tiger Brown, Patrick Gavin, Saleel Huprikar, Douglas Kovel, Louis Lin, Owen O’Hare, Jake Sticka, Anton Strezhnev, Clare Tilton, and Marzena Zukowska. The author gratefully acknowledges support for his research into the Affordable Care Act from the Russell Sage Foundation (94-17-01).

Appendix: Framing and Sub-group Effects

Frames and Sub-group Opinion

Here, we proceed as analyses of real-world framing typically do and examine closed-ended survey data. Doing so allows us to assess the stability of public opinion on health care reform, which might place an upper bound on framing effects: variable elite frames cannot explain stable opinions. Analyzing citizens’ closed-ended assessments of health care reform also enables us to measure sub-group trends that provide tests of targeted framing effects. The results advance the claim that real-world framing effects are limited, as we find no evidence that sub-groups targeted by frames subsequently shift their attitudes. At the same time, the limitations of this common approach help motivate the use of open-ended responses to measure public opinion and mass-elite interactions.

To analyze public health care attitudes, we turn to 32 telephone surveys of American adults conducted by the Kaiser Family Foundation between February 2009 and January 2012. With at least 1,200 respondents each, the Kaiser surveys jointly provide us with information on 30,370 Americans’ attitudes toward health care reform. For this analysis, we focus on a single question: “Do you think the country as a whole would be better off or worse off if the president and Congress passed health care reform, or don’t you think it would make much difference?” This question was asked consistently throughout the debate, providing us with a common metric of respondents’ health care reform attitudes. The responses are coded on a scale from 1 to 3, with 1 indicating that the country will be worse off, 2 indicating no difference, and 3 indicating that the country will be better off.

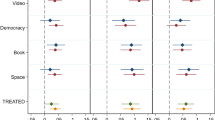

Drawing on Gelman and Hill (2006), the analysis proceeds by estimating a separate linear model of attitudes toward health care reform for each month’s survey. The models include basic demographics, such as a respondent’s gender, race, ethnicity, age and education in years, and income in thousands of dollars. They also include measures for self-reported Republicans and Independents, although in most administrations the survey did not push Independents to “lean” to a party. In addition, the models employ a few health care-related measures that gauge respondents’ self-interest in the debate. These include indicator variables for receiving Medicare or Medicaid as well as an indicator for lacking health insurance and a five-category measure of self-reported health. To track shifts in public opinion over time, we then aggregate each variable’s monthly coefficients into a single plot, presented in Fig. 6. The x-axis indicates time while the y-axis indicates the size of each coefficient. Note that the scale of the y-axis differs by variable. Each dot indicates the corresponding month’s coefficient estimate, while the surrounding line depicts the 95% confidence interval.

Fig. 6. Data: 30,370 American adults interviewed in 32 monthly Kaiser Family Foundation surveys between February 2009 and January 2012. The x-axis indicates the month. The dependent variable is each respondent’s assessment that health care reform is good for the country as a whole, measured on a scale from 1 (“worse off”) to 3 (“better off”). Each dot indicates the mean coefficient estimate for an OLS model of that month’s data, with lines reflecting 95% confidence intervals.
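The month-by-month estimation behind this figure can be sketched in simplified form. The example below is an assumption-laden illustration rather than the paper's model: it uses a single predictor (a Republican indicator) instead of the full covariate set, a normal-approximation interval, and made-up responses for two months.

```python
import math
from collections import defaultdict

def ols_slope_ci(x, y, z=1.96):
    """Bivariate OLS slope with an approximate 95% confidence interval."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y)) / sxx
    intercept = mean_y - slope * mean_x
    rss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    se = math.sqrt(rss / (n - 2) / sxx)       # standard error of the slope
    return slope, (slope - z * se, slope + z * se)

# Hypothetical rows: (survey month, Republican indicator, attitude on the 1-3 scale)
rows = [
    ("2009-06", 0, 3), ("2009-06", 0, 2), ("2009-06", 1, 2),
    ("2009-06", 1, 1), ("2009-06", 0, 3), ("2009-06", 1, 2),
    ("2009-08", 0, 3), ("2009-08", 0, 3), ("2009-08", 1, 1),
    ("2009-08", 1, 1), ("2009-08", 0, 2), ("2009-08", 1, 2),
]

by_month = defaultdict(lambda: ([], []))
for month, republican, attitude in rows:
    by_month[month][0].append(republican)
    by_month[month][1].append(attitude)

# One (slope, interval) pair per month, ready to plot against time
monthly = {m: ols_slope_ci(x, y) for m, (x, y) in sorted(by_month.items())}
```

Plotting each month's slope with its interval against time yields one panel of a figure like the one described in the caption; the full analysis would repeat this for every covariate in a multiple regression.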

The first thing to notice: partisanship is a uniquely powerful predictor of attitudes toward health care reform, and increasingly so as the debate went on (see also Kriner and Reeves 2014). From the coefficient magnitudes, we see that identifying as a Republican or as an Independent is associated with markedly reduced assessments of health care reform’s benefits. There are small but discernible changes over time in these coefficients, with increasing evidence of partisan polarization as the debate unfolds. We see, for example, that Republican identifiers are less negative on health care reform during the spring and summer of 2009, when President Obama was still enjoying a honeymoon and when health care had yet to take center stage. GOP assessments of health care reform drop notably in August of 2009, as angry town hall meetings captured national media attention. They then remain at a plateau during the fall of 2009 before dropping again in January 2010, just after the ACA passed the U.S. Senate. Both the GOP and Independent coefficients reach their nadir around the spring of 2010, when the ACA was passed and signed into law. As with several other covariates, including the indicators for race and ethnicity, the pattern for partisanship shows initial polarization in the early months of the debate and then impressive stability thereafter. Notice as well that the objective measures of self-interest, such as self-reported health or receipt of Medicare or Medicaid, have very little predictive power at any point during the debate. Those without health care coverage are mildly more positive in their assessments, but there is little evidence those assessments are bolstered by Democrats’ use of related frames just after the law’s passage. This contrasts with findings for subjective measures of self-interest reported in Henderson and Hillygus (2011). If anything, Medicare recipients appear slightly more positive about health care reform in early 2010, when Republican Senators were emphasizing its reductions in Medicare spending (but see Campbell 2011).

Figure 6 also demonstrates the stability of opinions, especially after the issue of health care reform became salient in August and September of 2009. The changes afterward tend to be small, such as the potential uptick in positive views among the well-educated in late 2011, the gradual increase in support among the elderly, or the gradual decline in the baseline respondent. This stability of sub-group opinion contrasts with the punctuation in frames identified above: the sudden shifts in elite framing do not match up with the stability of sub-group opinion. In that way, these results amplify the conclusion of Druckman et al. (2012) that choosing to be exposed to certain initial health care frames induces opinion stability thereafter.

Granger Tests of Framing Effects

Expecting a single frame to produce a homogeneous effect across the population might set the bar for framing too high. Prior research shows that partisans respond to frames or arguments in quite different ways, and Americans’ responses to health care frames might also hinge on the frames’ personal relevance. We thus consider whether three of the pronounced shifts in framing identified in the article disproportionately influenced the sub-groups targeted by those frames. First, if the sudden spike in Medicare-related rhetoric by Republicans in January 2010 was influential, those on Medicare might become less sanguine about health care reform just afterward. Second, if the Democratic emphasis on extending coverage and increasing its affordability after the law’s passage was influential, we might expect the prominence of that frame to be especially powerful among those without health insurance. Third, the Republicans drew from a frame about rising taxes and increased business costs at multiple times, a frame that might have been more influential among well-to-do respondents.

To test these possibilities, we begin with the 20 months for which we have Senators’ press releases and public opinion data, which cover the period from February 2009 to December 2010. We extract the relevant coefficient from the regressions conducted above which predict assessments about health care reform’s impact on the country as a whole. We then use Granger tests to examine the sequencing of the frames and any shifts in sub-group opinion during the subsequent month. To measure frames, we consider both the difference in partisan usage of the frame and the share of usage by the party deploying the frame strategically, as Table 1 shows. On the Medicare frame, for example, we consider the difference between Republican and Democratic usage as well as Republican usage alone. Frames are measured based on the maximum share of a party’s discourse that drew on related language in the prior month, although the results are robust to the use of means instead.
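The logic of a one-lag Granger test can be sketched as a comparison of two nested regressions: one predicting this month's sub-group coefficient from its own lag, and one that adds the lagged frame share. The code below is an illustrative sketch under that assumption, with made-up series and hypothetical function names. It returns only the F statistic; a p-value would require the F distribution, which the Python standard library does not provide.

```python
def solve(A, b):
    """Solve the linear system A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for j in range(c, n + 1):
                M[r][j] -= f * M[c][j]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x

def rss(X, y):
    """Residual sum of squares from an OLS fit of y on the columns of X."""
    k = len(X[0])
    XtX = [[sum(r[i] * r[j] for r in X) for j in range(k)] for i in range(k)]
    Xty = [sum(r[i] * yi for r, yi in zip(X, y)) for i in range(k)]
    beta = solve(XtX, Xty)
    return sum((yi - sum(b * v for b, v in zip(beta, r))) ** 2 for r, yi in zip(X, y))

def granger_f(x, y, lag=1):
    """F statistic for H0: lagged x adds nothing to a model of y on its own lag."""
    rows = range(lag, len(y))
    target = [y[t] for t in rows]
    restricted = [[1.0, y[t - lag]] for t in rows]
    unrestricted = [[1.0, y[t - lag], x[t - lag]] for t in rows]
    rss_r, rss_u = rss(restricted, target), rss(unrestricted, target)
    df_resid = len(target) - 3               # observations minus unrestricted parameters
    return (rss_r - rss_u) / (rss_u / df_resid)

# Made-up monthly series: a frame share and a sub-group coefficient built to follow it
frame = [0.1, 0.4, 0.2, 0.5, 0.3, 0.6, 0.2, 0.7, 0.1, 0.5, 0.3, 0.8]
noise = [0.0, 0.01, -0.02, 0.015, -0.01, 0.02, -0.015, 0.01, -0.02, 0.005, 0.01, -0.01]
opinion = [0.2] + [0.5 * frame[t - 1] + noise[t] for t in range(1, len(frame))]

f_frames_lead = granger_f(frame, opinion)    # frames leading opinion
f_opinion_leads = granger_f(opinion, frame)  # opinion leading frames
```

Because the toy opinion series is constructed from the lagged frame series, the first statistic is large here; the appendix reports the opposite pattern in the real data, with no significant relationship in either direction.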

As the consistently high p-values indicate, there is no evidence that increased use of a frame shifts the opinion of the relevant sub-group in the subsequent month. This same pattern of null results holds if we increase the lag to 2 months. It also holds when we multiply the frame shares by the total number of press releases in each month, which allows us to measure the frames’ salience. Groups targeted by frames are not differentially responsive to those frames.

By contrast, if political rhetoric is responsive to changes in public opinion, it is less clear precisely whose opinions rhetoric should track—should it track overall opinions or those of key sub-groups? Still, it is noteworthy that when we repeat these analyses allowing sub-group opinion to lead political rhetoric on related issues, we do not find significant relationships either, as illustrated by Table 2.

Cite this article

Hopkins, D.J. The Exaggerated Life of Death Panels? The Limited but Real Influence of Elite Rhetoric in the 2009–2010 Health Care Debate. Polit Behav 40, 681–709 (2018). https://doi.org/10.1007/s11109-017-9418-4

DOI: https://doi.org/10.1007/s11109-017-9418-4