Abstract

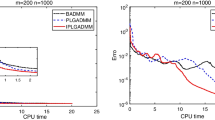

Based on a sparse estimate of the Hessian obtained from a recent diagonal quasi-Newton update, a conjugacy condition is derived, and a class of conjugate gradient methods is then developed as modifications of the Hestenes–Stiefel method. Owing to this sparse approximation, the curvature condition is guaranteed regardless of the line search technique. The convergence analysis is carried out without a convexity assumption, using a nonmonotone Armijo line search in which a forgetting factor is embedded to increase the probability of using the most recent available information. The practical advantages of the method are demonstrated numerically on a set of CUTEr test functions and on well-known signal processing problems such as sparse recovery and nonnegative matrix factorization.
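
To make the ingredients named in the abstract concrete, the following is a minimal sketch and not the authors' algorithm. It combines the classical Hestenes–Stiefel parameter with a nonmonotone Armijo backtracking rule whose reference value is a weighted average of past function values (a Zhang–Hager-style rule). The function name `hs_cg_nonmonotone`, the parameters `eta`, `delta`, `rho`, and the steepest-descent restart are all assumptions made for illustration. In particular, `eta` only plays a role loosely analogous to a forgetting factor, and the diagonal quasi-Newton modification of the paper is not reproduced here.

```python
from typing import Callable, List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    """Euclidean inner product of two vectors."""
    return sum(x * y for x, y in zip(a, b))


def axpy(alpha: float, x: Vector, y: Vector) -> Vector:
    """Return alpha * x + y."""
    return [alpha * xi + yi for xi, yi in zip(x, y)]


def hs_cg_nonmonotone(
    f: Callable[[Vector], float],
    grad: Callable[[Vector], Vector],
    x0: Vector,
    eta: float = 0.85,    # weight on past values (assumed, illustrative)
    delta: float = 1e-4,  # Armijo sufficient-decrease constant
    rho: float = 0.5,     # backtracking factor
    tol: float = 1e-6,
    max_iter: int = 1000,
) -> Vector:
    """Plain Hestenes-Stiefel CG with a nonmonotone Armijo line search."""
    x = list(x0)
    g = grad(x)
    d = [-gi for gi in g]
    c, q = f(x), 1.0  # reference value and its accumulated weight
    for _ in range(max_iter):
        if dot(g, g) ** 0.5 <= tol:
            break
        gtd = dot(g, d)
        if gtd >= 0.0:
            # Hypothetical safeguard: restart along steepest descent
            # whenever the CG direction is not a descent direction.
            d = [-gi for gi in g]
            gtd = -dot(g, g)
        # Nonmonotone Armijo: accept t if f(x + t d) <= c + delta * t * g^T d,
        # where c may exceed f(x), so occasional increases are tolerated.
        t = 1.0
        x_new = axpy(t, d, x)
        while f(x_new) > c + delta * t * gtd and t > 1e-20:
            t *= rho
            x_new = axpy(t, d, x)
        g_new = grad(x_new)
        y = [a - b for a, b in zip(g_new, g)]
        dty = dot(d, y)
        # Classical Hestenes-Stiefel parameter, set to zero if ill-defined.
        beta = dot(g_new, y) / dty if abs(dty) > 1e-12 else 0.0
        d = axpy(beta, d, [-gi for gi in g_new])
        # Update the reference value as a weighted average of the history;
        # a smaller eta gives more weight to the most recent value.
        q_new = eta * q + 1.0
        c = (eta * q * c + f(x_new)) / q_new
        q = q_new
        x, g = x_new, g_new
    return x
```

Setting `eta = 0` in this sketch reduces the rule to the usual monotone Armijo condition, since the reference value then equals the current function value; values closer to 1 retain more of the history.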

Data Availability

The authors confirm that the data supporting the findings of this study are available within the manuscript. Raw data that support the findings of this study are available from the corresponding author upon reasonable request.

Acknowledgements

The authors thank the anonymous reviewers for their valuable comments and suggestions, which helped to improve the quality of this work. They also owe a major debt of gratitude to Professor Michael Navon for the line search code.

Funding

This research was supported in part by the Research Council of Semnan University and in part by grant no. 4005578 from the Iran National Science Foundation (INSF).

Ethics declarations

Consent for publication

The participants have consented to the submission of the manuscript to the Numerical Algorithms journal.

Conflict of interest

The authors declare no competing interests.

Additional information

Author contribution

The authors confirm contribution to the manuscript as follows:

- study conception and design: S. Babaie–Kafaki;

- convergence analysis: S. Babaie–Kafaki and Z. Aminifard;

- performing numerical tests and interpretation of results: Z. Aminifard and F. Dargahi;

- draft manuscript preparation: S. Babaie–Kafaki and Z. Aminifard.

All authors reviewed the results and approved the final version of the manuscript.

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Aminifard, Z., Babaie–Kafaki, S. & Dargahi, F. Nonmonotone Quasi–Newton-based conjugate gradient methods with application to signal processing. Numer Algor 93, 1527–1541 (2023). https://doi.org/10.1007/s11075-022-01477-7

DOI: https://doi.org/10.1007/s11075-022-01477-7

Keywords

- Nonlinear optimization

- Conjugate gradient method

- Nonmonotone line search

- Forgetting factor

- Sparse recovery

- Nonnegative matrix factorization