Abstract

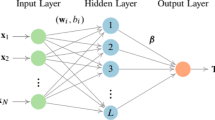

The extreme learning machine (ELM) algorithm is well suited to regression modeling owing to its simple structure, fast computation, and good generalization performance. However, the standard ELM algorithm uses an \(l_{2}\)-norm loss function, which is sensitive to outliers and therefore lacks robustness. In addition, some existing robust loss functions are not flexible enough to accurately describe the relationship between sample points and loss values, resulting in unsatisfactory ELM performance. To address these problems, this study proposed a robust ELM algorithm based on an adaptive loss function (ALFELM). First, an adaptive loss function with two tunable hyperparameters was introduced; by varying these parameters, the function can be transformed into several robust loss functions, which overcomes the limitations of fixed robust loss functions. Then, a Bayesian optimization strategy was used to determine the optimal parameters of the loss function. Furthermore, the classical iteratively reweighted least squares method was used to solve for the output weights, with a weight function corresponding to the loss function and a regularization parameter to prevent overfitting. Finally, the proposed method was tested on several artificial and benchmark datasets, and its effectiveness was verified on a real engineering case. The results indicated that the proposed ALFELM algorithm is more robust and accurate than the compared methods, especially when the data contain a large number of outliers. In addition, the algorithm can be used to establish effective regression models for actual processes.
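
The reweighting idea described above can be illustrated with a minimal sketch. The abstract does not state the exact form of the adaptive loss, so the code assumes a Barron-style general robust loss with a shape parameter `alpha` and a scale parameter `c`, whose IRLS weight is proportional to \(\left((r/c)^{2}/|\alpha-2|+1\right)^{\alpha/2-1}\). The function names, the sigmoid activation, the uniform random initialization, and the default hyperparameter values are all illustrative assumptions, and the Bayesian optimization of `alpha` and `c` is omitted (they are fixed by hand here).

```python
import numpy as np


def adaptive_weight(residual, alpha, c):
    """IRLS weight w(r) = rho'(r) / r for the assumed Barron-style loss,
    rescaled so that w(0) = 1 (the constant factor 1 / c**2 is dropped)."""
    z2 = (np.asarray(residual, dtype=float) / c) ** 2
    if alpha == 2.0:  # l2 loss: every sample keeps full weight
        return np.ones_like(z2)
    return (z2 / abs(alpha - 2.0) + 1.0) ** (alpha / 2.0 - 1.0)


def hidden_layer(X, W, b):
    """Sigmoid hidden-layer output of an ELM with fixed random parameters."""
    return 1.0 / (1.0 + np.exp(-(X @ W + b)))


def fit_robust_elm(X, y, n_hidden=30, alpha=-2.0, c=0.5, lam=1e-3,
                   n_iter=20, seed=0):
    """Fit output weights by regularized iteratively reweighted least squares."""
    rng = np.random.default_rng(seed)
    # Input weights and biases are drawn once and never trained.
    W = rng.uniform(-1.0, 1.0, size=(X.shape[1], n_hidden))
    b = rng.uniform(-1.0, 1.0, size=n_hidden)
    H = hidden_layer(X, W, b)
    w = np.ones(len(y))  # first pass is ordinary ridge regression
    eye = np.eye(n_hidden)
    for _ in range(n_iter):
        Hw = H * w[:, None]  # rows of H scaled by the sample weights
        # Solve (H^T D H + lam I) beta = H^T D y with D = diag(w).
        beta = np.linalg.solve(H.T @ Hw + lam * eye, Hw.T @ y)
        # Re-estimate the weights from the current residuals.
        w = adaptive_weight(y - H @ beta, alpha, c)
    return W, b, beta, w


def predict(X, W, b, beta):
    """Evaluate the fitted ELM on new inputs."""
    return hidden_layer(X, W, b) @ beta
```

With `alpha = 2` the weights are all one and the loop reduces to a ridge-regularized ELM; smaller values of `alpha` shrink the weights of samples with large residuals, which is what limits the influence of outliers on the output weights.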

Acknowledgements

This work is supported in part by the NSF China (No. 62103216) and Natural Science Foundation of Shandong Province (No. ZR2020QF060).

Author information

Contributions

FZ and SC wrote the code, ZH performed the data analysis, BS prepared all tables and figures, and QX wrote the main manuscript. All authors reviewed the manuscript.

Ethics declarations

Conflict of interest

The authors declare no conflict of interest.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Zhang, F., Chen, S., Hong, Z. et al. A Robust Extreme Learning Machine Based on Adaptive Loss Function for Regression Modeling. Neural Process Lett 55, 10589–10612 (2023). https://doi.org/10.1007/s11063-023-11340-y

DOI: https://doi.org/10.1007/s11063-023-11340-y