Abstract

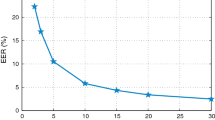

Many questions remain regarding how context affects perceptual and automatic speaker identification performance. To examine the effects of task design on perceptual speaker identification performance, three tasks were developed: a lineup task, a binary task, and a novel clustering task. Speech recordings of native French speakers were compared in the same way across tasks by Francophone listeners unfamiliar with the speakers. True positive (sensitivity) and true negative (specificity) response rates were measured for each task. Participants showed similar sensitivity and specificity for the binary (88%) and clustering (84%) tasks, but performed at random selection rates on the lineup task. Pearson correlation procedures were used to evaluate how efficiently scores produced by a state-of-the-art automatic speaker verification system model perceptual responses (equal error rate = 89%). Automatic scores modelled lineup (r² = 0.6) and clustering (r² = 0.5) task accuracy quite well; however, they were less robust when modelling binary task responses (r² = -0.2). The results underscore the role task design plays in shaping perceptual responses, which, in turn, affects the modelling effectiveness of automatic scores. As evidence points to humans and algorithms modelling speakers differently, our findings suggest that automatic speaker identification performance might be improved with a greater understanding of how context shapes perceptual responses.
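To make the two kinds of measure named above concrete, the following minimal Python sketch computes sensitivity and specificity from paired same/different labels, and a Pearson correlation coefficient between two score lists. It is an illustration under stated assumptions, not the analysis pipeline used in the study: the function names `sensitivity_specificity` and `pearson_r`, and all of the toy data, are hypothetical.

```python
from math import sqrt


def sensitivity_specificity(truth, response):
    """Return (sensitivity, specificity) for paired boolean labels.

    truth[i] is True when trial i compared recordings of the same speaker;
    response[i] is True when the listener answered "same speaker".
    """
    true_pos = sum(t and r for t, r in zip(truth, response))
    true_neg = sum((not t) and (not r) for t, r in zip(truth, response))
    positives = sum(truth)
    negatives = len(truth) - positives
    return true_pos / positives, true_neg / negatives


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


# Hypothetical toy data, not taken from the study.
truth = [True, True, True, True, False, False, False, False]
response = [True, True, True, False, False, False, True, False]
sens, spec = sensitivity_specificity(truth, response)
print(sens, spec)  # 0.75 0.75

# Hypothetical automatic scores and mean listener accuracy per trial group.
asv_scores = [0.9, 0.7, 0.4, 0.2]
listener_accuracy = [0.95, 0.80, 0.60, 0.50]
print(round(pearson_r(asv_scores, listener_accuracy), 3))
```

Sensitivity here is the share of same-speaker trials answered "same", and specificity is the share of different-speaker trials answered "different". The coefficient of determination for a simple linear fit is the square of the value returned by `pearson_r`.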

Data availability

The datasets generated during and/or analysed during the current study are available from the corresponding author on reasonable request.

Acknowledgments

This work was funded by the French National Research Agency (ANR) under the VoxCrim project (ANR-17-CE39-0016).

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendices

Appendix A: Speech utterances

Description of each speech utterance group assignment, French text, and English translation.

| Group | Text | English translation |
|---|---|---|
| Target | je m’approchais du bord de la fenêtre | I approached the edge of the window |
| Target | serrait son manteau autour de lui | [he] tightened his coat around him |
| Target | on trouve une espèce de chat | we found a species of cat |
| Target | la bise et le soleil se disputaient | the wind and the sun were fighting |
| Target | pour rencontrer ces deux espèces | to meet these two species |
| Target | le soleil a commencé à briller | the sun began to shine |
| Target | faire ôter son manteau au voyageur | to make the traveler take off his coat |
| Target | s’éloignant d’un nid perché sur un arbre | [it] moved away from a nest perched on a tree |
| Target | il avait dû faire fuir l’oiseau | he had to scare the bird away |
| Target | son plumage était beau et doux | its plumage was beautiful and soft |
| Target | ses deux ailes étaient blessées | his two wings were injured |
| Target | serait regardé comme le plus fort | [he] would be regarded as the strongest |
| Non-Target | vit une colonie d’oiseaux | lives a colony of birds |
| Non-Target | ma sœur n’a qu’à traverser la rue | my sister only has to cross the street |
| Non-Target | son cœur battait très vite | his heart was beating very fast |
| Non-Target | que le soleil était le plus fort des deux | that the sun was the strongest of the two |
| Non-Target | au cœur d’un parc naturel | in the heart of a natural park |
| Non-Target | sur le coup de midi | at the stroke of noon |
| Non-Target | pour regarder dans la rue | to look in the street |
| Non-Target | quand ils ont vu un voyageur qui s’avancait | when they saw a traveler coming forward |
| Non-Target | leur poil est beau et doux | his hair is beautiful and soft |
| Non-Target | ma sœur est venue chez moi hier | my sister came to my house yesterday |
| Non-Target | elle me parlait de ses vacances en mer du Nord | she spoke about her vacations at the North Sea |
| Non-Target | ils sont noirs avec deux tâches blanches sur le dos | they are black with two white spots on their backs |

Appendix B: Lineup task interface

A screenshot of the Lineup task interface, in which each speaker icon represents a speech recording. The top speaker icon is the Target, and the remaining icons constitute the Lineup. French-to-English translations: “Comparaison de voix” (“Voice comparison”); “Reponse” (“Response”); “Valider” (“Confirm”); “Effacer” (“Reset”). The boxes above the Lineup were optional and could be used to mark progress.

Appendix C: Same-Different task interface

A screenshot of the Same-Different task interface. After listening to two speech recordings separated by 2 s, participants decided whether the voices belonged to the same speaker (“Même voix”) or to different speakers (“Voix différentes”).

Appendix D: Cluster task interface

A screenshot of the Cluster task interface. Each numbered circle represents a speech recording. A colored circle has been assigned to a specific speaker, whereas a grey circle has not yet been assigned.

About this article

Cite this article

O’Brien, B., Meunier, C., Tomashenko, N. et al. Evaluating the effects of task design on unfamiliar Francophone listener and automatic speaker identification performance. Multimed Tools Appl 83, 10615–10635 (2024). https://doi.org/10.1007/s11042-023-15391-0

DOI: https://doi.org/10.1007/s11042-023-15391-0