Abstract

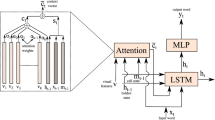

In a densely populated country such as India, the motorcycle is one of the most common and viable modes of transport. Many motorcyclists do not wear helmets while riding, which results in fatal road accidents every year. On crowded roads and highways, it is difficult for the police to identify such cases and take the necessary action. These traffic rule violators can be detected by analysing traffic videos from surveillance cameras. The main objective of this work is to detect helmetless motorcyclists (and pillion riders) and to generate appropriate video captions that help the traffic authority act quickly against rule violators. From the video captions, the system can also identify cases involving multiple helmetless riders and child riders. A deep neural network based approach is proposed to generate video captions for motorcycle riders from surveillance video. In the proposed encoder-decoder model, the encoder extracts visual features using a Convolutional Neural Network (CNN) together with an optical flow guided approach. In the decoder, a Recurrent Neural Network (RNN) based on Long Short-Term Memory (LSTM) with a Soft Attention (SA) technique is applied to achieve the best results for video caption generation. The effectiveness of the proposed approach is evaluated by computing the BiLingual Evaluation Understudy (BLEU) and Metric for Evaluation of Translation with Explicit Ordering (METEOR) metrics. Extensive experimental results show that the proposed method outperforms other state-of-the-art methods.
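To make the soft attention step in the decoder more concrete, the following is a minimal sketch of how one decoding step could weight per-frame encoder features. It is not the authors' implementation: the function names, the toy feature values, and the use of a plain dot product as the scoring function are all assumptions made for illustration, and the abstract does not specify how scores are computed.

```python
import math
from typing import List, Sequence, Tuple


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def soft_attention(
    frame_features: Sequence[Sequence[float]],
    decoder_state: Sequence[float],
) -> Tuple[List[float], List[float]]:
    """Return (weights, context) for a single decoding step.

    frame_features: one feature vector per video frame (hypothetical encoder output).
    decoder_state:  current decoder hidden state, same length as each feature vector.
    Scoring uses a plain dot product, which is an assumption made for brevity.
    """
    # One relevance score per frame.
    scores = [
        sum(f * h for f, h in zip(feat, decoder_state))
        for feat in frame_features
    ]
    # Normalise the scores so the weights are positive and sum to 1.
    weights = softmax(scores)
    # Context vector: attention-weighted sum of the frame features.
    dim = len(frame_features[0])
    context = [
        sum(w * feat[i] for w, feat in zip(weights, frame_features))
        for i in range(dim)
    ]
    return weights, context


# Toy example: three frames with 2-D features (made-up values).
features = [[1.0, 0.0], [0.0, 2.0], [0.5, 0.5]]
state = [0.0, 1.0]
weights, context = soft_attention(features, state)
```

In this toy example the second frame has the largest dot product with the decoder state, so it receives the largest weight and dominates the context vector. In a captioning decoder, a context vector of this kind would typically be fed to the LSTM together with the previously generated word.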

Funding

No funding was received for conducting this study.

Ethics declarations

Conflicts of interest/Competing interests

The authors have no competing interests to declare that are relevant to the content of this article.

About this article

Cite this article

Dasgupta, M., Bandyopadhyay, O. & Chatterji, S. Detection of helmetless motorcycle riders by video captioning using deep recurrent neural network. Multimed Tools Appl 82, 5857–5877 (2023). https://doi.org/10.1007/s11042-022-13473-z

DOI: https://doi.org/10.1007/s11042-022-13473-z