Abstract

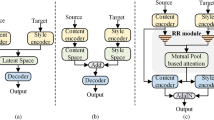

Unpaired image translation under feature-level constraints often struggles to generate realistic images, because existing methods focus on convolutional feature extraction and ignore singular value decomposition (SVD) feature extraction. To address this limitation, Unpaired Image-to-image Translation with Improved Two-dimensional Feature (UNTF) is proposed. Specifically, the novel feature extraction module consists of two parts: SVD feature extraction and convolutional feature extraction. The SVD feature maps are built with the Two-Dimensional Feature, which transforms 1-D features into 2-D features so that they can be cascaded with the convolutional features. In the up-sampling module, sub-pixel convolution replaces transposed convolution. In addition, the proposed feature loss stabilizes the training of the generator. Finally, the proposed network is evaluated through an ablation study and a comparison with state-of-the-art methods. Experiments on image translation, image illustration, and image restoration show that the proposed method is superior to existing methods according to both the image clarity index (EGF) and expert evaluation.
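The replacement of transposed convolution by sub-pixel convolution can be illustrated with the rearrangement step of Shi et al. (2016), often called pixel shuffle. The following is a minimal NumPy sketch, not the authors' implementation: the function name `pixel_shuffle`, the channel-first layout, and the channel ordering are assumptions chosen for illustration, and the convolution that would normally produce the input channels is omitted.

```python
import numpy as np


def pixel_shuffle(x: np.ndarray, r: int) -> np.ndarray:
    """Rearrange a (C*r*r, H, W) array into (C, H*r, W*r).

    Each group of r*r channels is spread over an r-by-r block of
    output pixels, so up-sampling needs no transposed convolution.
    The name and channel ordering are illustrative assumptions.
    """
    channels, height, width = x.shape
    if channels % (r * r) != 0:
        raise ValueError("channel count must be divisible by r*r")
    out_channels = channels // (r * r)
    # Split the channel axis into (out_channels, r, r).
    x = x.reshape(out_channels, r, r, height, width)
    # Interleave the two r-axes with the spatial axes: (C, H, r, W, r).
    x = x.transpose(0, 3, 1, 4, 2)
    # Merge (H, r) and (W, r) into the up-sampled spatial axes.
    return x.reshape(out_channels, height * r, width * r)


if __name__ == "__main__":
    # Hypothetical feature map: 4 channels of size 2x2, upscale factor 2.
    features = np.arange(16).reshape(4, 2, 2)
    upsampled = pixel_shuffle(features, 2)
    print(upsampled.shape)  # (1, 4, 4)
```

In a full up-sampling layer, an ordinary convolution would first expand the channel count by a factor of r squared, and this rearrangement would then trade those channels for spatial resolution.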

Acknowledgements

This work is supported by the National Natural Science Foundation of China (No. 61873240). The data used to support the findings of this study are available from the corresponding author upon request.

Declaration of Interest Statement

We declare that we have no financial or personal relationships with other people or organizations that could inappropriately influence our work, and that there is no professional or other personal interest of any nature or kind in any product, service, and/or company that could be construed as influencing the position presented in, or the review of, this manuscript.

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Cite this article

Tu, H., Wang, W., Chen, J. et al. Unpaired image-to-image translation with improved two-dimensional feature. Multimed Tools Appl 81, 43851–43872 (2022). https://doi.org/10.1007/s11042-022-13115-4

DOI: https://doi.org/10.1007/s11042-022-13115-4