Abstract

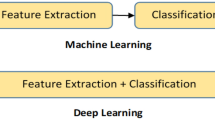

This paper proposes an approach to facial expression recognition (FER) based on a multi-facial patches (MFP) aggregation network. Deep features are learned from facial patches by convolutional neural sub-networks and aggregated within a single architecture for expression classification. In addition, a framework based on two data augmentation techniques is proposed to expand labeled FER training datasets. Consequently, the proposed approach, which relies on shallow convolutional neural networks (CNNs), does not need large datasets for training. The proposed framework is evaluated on three FER datasets. Results show that the approach achieves the performance of state-of-the-art deep learning FER approaches when the model is trained and tested on images from the same dataset. Moreover, the proposed data augmentation techniques improve the expression recognition rate and can therefore be a solution for training deep learning FER models on small datasets. However, accuracy degrades significantly when testing for dataset bias. Fine-tuning can overcome the problem of transitioning from laboratory-controlled conditions to in-the-wild conditions. Finally, the emotional face is mapped using the MFP-CNN, and both the contribution of the different facial areas to displaying emotion and their importance in recognizing each facial expression are studied.
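To make the patch-aggregation idea concrete, here is a minimal sketch, not the authors' implementation. It crops a few facial patches, computes a small feature vector per patch, and concatenates the results into one vector that a classifier could consume. The patch boxes in `PATCHES`, the 8x8 toy image, and the `patch_features` function (simple intensity statistics standing in for a convolutional sub-network) are all assumptions made for illustration; the abstract does not specify the patch layout or the sub-network design.

```python
from statistics import mean, pstdev

# Hypothetical patch boxes (top, left, height, width) on an 8x8 face crop.
# The actual patch layout used in the paper is not given in the abstract.
PATCHES = {
    "eyes": (0, 0, 4, 8),
    "nose": (2, 2, 4, 4),
    "mouth": (4, 0, 4, 8),
}


def crop(image, box):
    """Return the sub-image covered by box = (top, left, height, width)."""
    top, left, height, width = box
    return [row[left:left + width] for row in image[top:top + height]]


def patch_features(patch):
    """Stand-in for a convolutional sub-network: mean and spread of intensities."""
    pixels = [p for row in patch for p in row]
    return [mean(pixels), pstdev(pixels)]


def aggregate(image, patches=PATCHES):
    """Concatenate per-patch features (in sorted patch-name order) into one vector."""
    features = []
    for name in sorted(patches):
        features.extend(patch_features(crop(image, patches[name])))
    return features


# Toy 8x8 "face" whose intensity increases from top to bottom.
face = [[float(r)] * 8 for r in range(8)]
vector = aggregate(face)
```

In a real model, each `patch_features` call would be replaced by a learned sub-network, and the concatenated vector would feed fully connected layers that output expression classes.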




Cite this article

Hazourli, A.R., Djeghri, A., Salam, H. et al. Multi-facial patches aggregation network for facial expression recognition and facial regions contributions to emotion display. Multimed Tools Appl 80, 13639–13662 (2021). https://doi.org/10.1007/s11042-020-10332-7


DOI: https://doi.org/10.1007/s11042-020-10332-7