Abstract

Three-dimensional manifold data arise in many contexts of geoscience, such as laser scanning, drilling surveys and seismic catalogs. They provide point measurements of complex surfaces, which cannot be fitted by common statistical techniques, like kriging interpolation and principal curves. This paper focuses on iterative methods of manifold smoothing based on local averaging and principal components; it shows their relationships and provides some methodological developments. In particular, it develops a kernel spline estimator and a data-driven method for selecting its smoothing coefficients. It also shows the ability of this approach to select the number of nearest neighbors and the optimal number of iterations in blurring-type smoothers. Extensive numerical applications to simulated and seismic data compare the performance of the discussed methods and check the efficacy of the proposed solutions.

References

Aliyari-Ghassabeh Y, Linder T, Takahara G (2013) On convergence properties of the subspace constrained mean shift. Pattern Recognit 46(11):3140–3147

Biedermann S, Yang M (2015) Designs for selected nonlinear models. In: Dean A, Morris M, Stufken J, Bingham D (eds) Handbook of design and analysis of experiments. Chapman & Hall, London, pp 515–547

Carreira-Perpiñán MÁ (2006) Fast nonparametric clustering with Gaussian blurring mean-shift. In: Proceedings of 23rd international conference on machine learning. Pittsburgh, pp 153–160

Caumon G, Collon-Drouaillet P (2014) Special issues on three-dimensional structural modeling. Math Geosci 46(8):905–908

Cheng Y (1995) Mean shift, mode seeking, and clustering. IEEE Trans Pattern Anal Mach Intell 17(8):790–799

Comaniciu D, Meer P (2002) Mean shift: a robust approach toward feature space analysis. IEEE Trans Pattern Anal Mach Intell 24(5):603–619

Csontos R, Van Arsdale R (2008) New Madrid seismic zone fault geometry. Geosphere 4(5):802–813

Eberly D (1996) Ridges in image and data analysis. Kluwer, Dordrecht

Einbeck J, Evers L, Powell B (2010) Data compression and regression, through local principal curves and surfaces. Int J Neural Syst 20(3):177–192

Forte AM, Mitrovica JX, Moucha R, Simmons RA, Grand SP (2007) Descent of the ancient Farallon slab drives localized mantle flow below the New Madrid seismic zone. Geophys Res Lett 34(4):L04308. doi:10.1029/2006GL027895

Genovese CR, Perone-Pacifico M, Verdinelli I, Wasserman L (2014) Nonparametric ridge estimation. Ann Stat 42(4):1511–1545

Grillenzoni C (1998) Forecasting unstable and non-stationary time series. Int J Forecast 14(4):469–482

Grillenzoni C (2007) Pattern recognition via robust smoothing, with application to laser data. Aust NZ J Stat 49(2):139–153

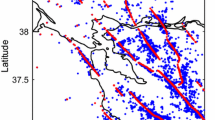

Grillenzoni C (2014) Detection of tectonic faults by spatial clustering of earthquake hypocenters. Spat Stat 7(2):62–78

Hastie T, Stuetzle W (1989) Principal curves. J Am Stat Assoc 84(2):502–516

Hill BJ, Kendall WS, Thönnes E (2012) Fiber-generated point processes and fields of orientations. Ann Appl Stat 6(3):994–1020

Jordan TH (2014) Faults in focus: 20 years of earthquake science accomplishments. SCEC: Southern California Earthquake Center

Li X, Hu Z, Wu F (2007) A note on the convergence of the mean shift. Pattern Recognit 40(6):1756–1762

Mérigot Q, Ovsjanikov M, Guibas L (2011) Voronoi-based curvature and feature estimation from point clouds. IEEE Trans Vis Comput Graph 17(6):743–756

Ozertem U, Erdogmus D (2011) Locally defined principal curves and surfaces. J Mach Learn Res 12:1249–1286

Park JH, Zhang Z, Zha H, Kasturi R (2004) Local smoothing for manifold learning. In: Proceedings of 2004 IEEE conference on computer vision and pattern recognition Vol 2. Washington, pp 452–459

Prechelt L (2012) Early stopping—But when? In: Montavon G, Orr GB, Müller KR (eds) Neural networks: tricks of the trade. Springer, Berlin, pp 53–68

Silverman BW (1986) Density estimation for statistical data analysis. Chapman & Hall, London

Stanford DC, Raftery AE (2000) Finding curvilinear features in spatial point patterns: principal curve clustering with noise. IEEE Trans Pattern Anal Mach Intell 22(6):601–609

Tenenbaum J, De Silva V, Langford J (2000) A global geometric framework for nonlinear dimensionality reduction. Science 290:2319–2323

Wahba G (1990) Spline models for observational data. SIAM, Philadelphia

Wang W, Carreira-Perpiñán MÁ (2010) Manifold blurring mean shift algorithms for manifold denoising. In: Proceedings of 2010 IEEE conference on computer vision and pattern recognition. San Francisco, pp 1759–1766

Acknowledgments

Sincere thanks to the Editors and Reviewers for their useful comments.

Appendices

Appendix 1: Proof of Results (14)

The proof of Eq. (14) needs assumptions similar to those of Comaniciu and Meer (2002). The Gaussian kernel \(K(\cdot )\) in (11) is based on the profile function \(k(z)=\exp (-z/2)\), which is positive, decreasing and convex. Letting \(c=1 / \Big ( (2 \pi )^{m/2} N \beta ^m \Big )\), the expression in (14, i) can then be written as

for all i, t. Convex functions have the property that \([k(z_2)-k(z_1)]\ge -k'(z_1) (z_1 - z_2)\) for all \(z_1 , z_2 > 0\), and for the profile above \(-k'(z)=k(z)/2\). Thus, the above expression satisfies the inequality

By defining \(\hat{k}_{ij}^{(t)} = k \Big ( \Big \Vert \, \hat{\varvec{y}}_i^{(t)} - {\mathbf{x}}_j \Big \Vert ^2 / \beta ^2 \Big )\), the weights \(\hat{w}_{ij}^{(t)} = \hat{k}_{ij}^{(t)} / \sum _l \hat{k}_{il}^{(t)}\) and the constants \(C_t = c \sum _j \hat{k}_{ij}^{(t)} / (2 \beta ^2)\), the above can be written as

where \(C_t > 0\). Inequality (32) follows as in the proof of Theorem 1 of Li et al. (2007); thus, combining (30) and (32) one has

for all i, t. This implies that \(\Big \{ \hat{f}_N ( \hat{\varvec{y}}_i^{(t)}) \Big \}\) is non-decreasing in t; since it is also bounded, as k(z) is, it converges for all i, as stated by Eq. (14, i).
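The display equations of this proof are not reproduced in this copy. As a hedged reconstruction, assuming that the density estimate has the form \(\hat{f}_N(\mathbf{y}) = c \sum _j k ( \Vert \mathbf{y} - \mathbf{x}_j \Vert ^2 / \beta ^2 )\) and that the update in (11) is the weighted mean \(\hat{\mathbf{y}}_i^{(t+1)} = \sum _j \hat{w}_{ij}^{(t)} \mathbf{x}_j\), the chain of steps would read roughly as follows. The grouping into four steps is inferred from the cross-references to (30), (32) and (33) in the text and may not match the original numbering; `\mathbf` stands in for the publisher's `\varvec`.

```latex
% Step 1: difference of the density estimate at successive iterates
\hat{f}_N(\hat{\mathbf{y}}_i^{(t+1)}) - \hat{f}_N(\hat{\mathbf{y}}_i^{(t)})
  = c \sum_{j=1}^{N} \Big[
      k\big(\|\hat{\mathbf{y}}_i^{(t+1)} - \mathbf{x}_j\|^2/\beta^2\big)
    - k\big(\|\hat{\mathbf{y}}_i^{(t)} - \mathbf{x}_j\|^2/\beta^2\big) \Big]

% Step 2: convexity of k, with -k'(z) = k(z)/2 for k(z) = exp(-z/2)
  \ge \frac{c}{2\beta^2} \sum_{j=1}^{N} \hat{k}_{ij}^{(t)} \Big[
      \|\hat{\mathbf{y}}_i^{(t)} - \mathbf{x}_j\|^2
    - \|\hat{\mathbf{y}}_i^{(t+1)} - \mathbf{x}_j\|^2 \Big]

% Step 3: the same bound in terms of the weights and C_t
  = C_t \sum_{j=1}^{N} \hat{w}_{ij}^{(t)} \Big[
      \|\hat{\mathbf{y}}_i^{(t)} - \mathbf{x}_j\|^2
    - \|\hat{\mathbf{y}}_i^{(t+1)} - \mathbf{x}_j\|^2 \Big]

% Step 4: expand the squares and use sum_j w_ij x_j = y_i^(t+1), sum_j w_ij = 1
  = C_t \, \|\hat{\mathbf{y}}_i^{(t+1)} - \hat{\mathbf{y}}_i^{(t)}\|^2 \;\ge\; 0
```

The last equality holds because the weighted sum in step 3 equals \(\Vert \hat{\mathbf{y}}_i^{(t)} \Vert ^2 - \Vert \hat{\mathbf{y}}_i^{(t+1)} \Vert ^2 + 2 (\hat{\mathbf{y}}_i^{(t+1)} - \hat{\mathbf{y}}_i^{(t)})' \hat{\mathbf{y}}_i^{(t+1)}\), which is the squared distance between the two iterates.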

The result (14, ii) also follows from (33), by noting that \(\min _t C_t > 0\) and that \(\hat{\varvec{y}}_i^{(t)}\) is bounded. In fact, since \(\min _t \Big \{ \hat{f}_N ( \hat{\varvec{y}}_i^{(t)}) \Big \} = \hat{f}_N ({\mathbf{x}}_i) > 0\) (from the initial condition in (11)), the sequence \(\hat{\varvec{y}}_i^{(t)}\) must be bounded (otherwise one would have \(\min _t \hat{f}_N = 0\)). As a consequence, there exists \(D>0\) such that \(\max _j \Vert \, \hat{\varvec{y}}_i^{(t)} - {\mathbf{x}}_j \Vert ^2 \le D\) and therefore \(C_t \ge c \, k(D/\beta ^2) / (2 \beta ^2) = C > 0\). Using C in (33), the result (14, i) implies (14, ii).
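The two statements of the proof can be checked numerically. The following is a minimal sketch, not the paper's implementation: it assumes the plain Gaussian mean-shift update (weighted mean of the data) started at a data point, and it uses synthetic points on a noisy circle. The function names, the bandwidth value 0.3 and the data are all illustrative assumptions.

```python
import numpy as np

def gaussian_density(y, X, beta):
    """Kernel density c * sum_j k(||y - x_j||^2 / beta^2), with k(z) = exp(-z/2)."""
    n, m = X.shape
    c = 1.0 / ((2.0 * np.pi) ** (m / 2) * n * beta ** m)
    z = np.sum((X - y) ** 2, axis=1) / beta ** 2
    return c * np.sum(np.exp(-z / 2.0))

def mean_shift_path(x0, X, beta, n_iter=50):
    """Iterate the mean-shift update from x0; record densities, step sizes and C_t."""
    n, m = X.shape
    c = 1.0 / ((2.0 * np.pi) ** (m / 2) * n * beta ** m)
    y = np.asarray(x0, dtype=float).copy()
    dens = [gaussian_density(y, X, beta)]
    steps, consts = [], []
    for _ in range(n_iter):
        z = np.sum((X - y) ** 2, axis=1) / beta ** 2
        k = np.exp(-z / 2.0)                         # profile values k_ij
        consts.append(c * k.sum() / (2.0 * beta ** 2))  # constant C_t
        y_new = (k / k.sum()) @ X                    # weighted mean of the data
        steps.append(np.linalg.norm(y_new - y))
        y = y_new
        dens.append(gaussian_density(y, X, beta))
    return y, np.array(dens), np.array(steps), np.array(consts)

# Synthetic example (assumption): 300 noisy points around the unit circle
rng = np.random.default_rng(0)
theta = rng.uniform(0.0, 2.0 * np.pi, 300)
X = np.column_stack([np.cos(theta), np.sin(theta)]) + 0.1 * rng.normal(size=(300, 2))

y_final, dens, steps, consts = mean_shift_path(X[0], X, beta=0.3)

# Density gain per iteration versus the lower bound C_t * ||step||^2
gain = np.diff(dens)
bound = consts * steps ** 2
print("density is non-decreasing:", bool(np.all(gain >= -1e-12)))
print("gain dominates C_t * step^2:", bool(np.all(gain >= bound - 1e-12)))
print("last step size:", steps[-1])
```

The small tolerance only absorbs floating-point rounding. Since the density sequence is non-decreasing and bounded, its increments shrink, and the lower bound then forces the step sizes to shrink as well, which is the argument behind (14, ii).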

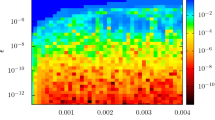

Appendix 2: Results of MS Estimates

This appendix reports the manifold estimates of the data in Fig. 1 obtained with the algorithm (13) using the full Hessian matrix (12), and with the method of Ozertem and Erdogmus (2011) based on the Hessian estimation of the inverse covariance. The two estimators use the same bandwidth \(\beta =0.133\), identified in Fig. 4b, d. The results in Fig. 12 show that the first algorithm has a significant bias at the corners of the two-dimensional data, whereas the second reduces the three-dimensional sphere to a disk.

Cite this article

Grillenzoni, C. Smoothing Three-Dimensional Manifold Data, with Application to Tectonic Fault Detection. Math Geosci 48, 487–510 (2016). https://doi.org/10.1007/s11004-015-9630-x

DOI: https://doi.org/10.1007/s11004-015-9630-x