Abstract

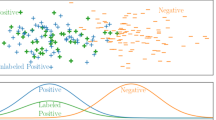

Directly applying single-label classification methods to multi-label learning problems substantially limits both performance and speed because of the imbalance, dependence and high dimensionality of the given label matrix. Existing methods either ignore these three problems or reduce one at the price of aggravating another. In this paper, we propose a {0,1} label matrix compression and recovery method termed “compressed labeling (CL)” to simultaneously solve, or at least reduce, these three problems. CL first compresses the original label matrix to improve balance and independence by preserving the signs of its Gaussian random projections. Afterward, we directly apply popular binary classification methods (e.g., support vector machines) to each new label. A fast recovery algorithm is developed to recover the original labels from the predicted new labels. In the recovery algorithm, a “labelset distilling method” is designed to extract distilled labelsets (DLs), i.e., the frequently appearing label subsets, from the original labels via recursive clustering and subtraction. Given a distilled and an original label vector, we discover that the signs of their random projections have an explicit joint distribution that can be quickly computed from a geometric inference. Based on this observation, the original label vector is exactly determined after performing a series of Kullback-Leibler divergence based hypothesis tests on the distribution of the new labels. CL significantly improves the balance of the training samples and reduces the dependence between different labels. Moreover, it accelerates the learning process by training fewer binary classifiers for compressed labels, and makes use of label dependence via DL-based tests. Theoretically, we prove recovery bounds for CL, which verify the effectiveness of CL for label compression and the improvement in multi-label classification performance brought by the label correlations preserved in DLs. 
We show the effectiveness, efficiency and robustness of CL via 5 groups of experiments on 21 datasets from text classification, image annotation, scene classification, music categorization, genomics and web page classification.
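
The abstract describes the compression step and the sign-agreement distribution only in words. The Python sketch below illustrates the geometry under our own assumptions and should not be read as the authors' algorithm. It assumes that each sample's {0,1} label vector y is compressed to the signs of A y, where A has i.i.d. standard Gaussian rows. We encode positive projections as 1 and all others as 0. The sketch relies on the classical fact, known from the Goemans and Williamson max-cut analysis, that for a standard Gaussian vector a the signs of aᵀu and aᵀv agree with probability 1 - θ/π, where θ is the angle between u and v. We assume this is the kind of explicit distribution the abstract refers to. The paper's actual joint distribution for distilled and original label vectors, its labelset distilling method, and its hypothesis tests are more involved. Every function name here (`compress`, `best_hypothesis`, etc.) is hypothetical. `best_hypothesis` is a toy KL-based scoring rule.

```python
import math
import random


def gaussian_matrix(k, n, seed=0):
    """Assumed projection matrix: k x n with i.i.d. standard Gaussian entries."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(n)] for _ in range(k)]


def compress(label_vec, proj):
    """Compressed labels: keep only the sign of each Gaussian projection.

    Positive projections map to 1, all others to 0 (a {0,1} encoding we chose;
    ties have probability zero unless label_vec is all zeros).
    """
    return [1 if sum(a * y for a, y in zip(row, label_vec)) > 0 else 0
            for row in proj]


def angle(u, v):
    """Angle between two nonzero vectors, in radians."""
    dot = sum(a * b for a, b in zip(u, v))
    norms = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    if norms == 0:
        raise ValueError("angle is undefined for a zero vector")
    return math.acos(max(-1.0, min(1.0, dot / norms)))


def predicted_agreement(u, v):
    """P[sign(a.u) == sign(a.v)] = 1 - theta/pi for a standard Gaussian a."""
    return 1.0 - angle(u, v) / math.pi


def agreement_rate(z1, z2):
    """Fraction of positions where two compressed label vectors agree."""
    return sum(a == b for a, b in zip(z1, z2)) / len(z1)


def empirical_agreement(u, v, proj):
    """Observed sign agreement of u and v under the given projections."""
    return agreement_rate(compress(u, proj), compress(v, proj))


def bernoulli_kl(p, q, eps=1e-12):
    """KL(Bern(p) || Bern(q)), with q clipped away from 0 and 1."""
    q = min(max(q, eps), 1.0 - eps)
    kl = 0.0
    if p > 0:
        kl += p * math.log(p / q)
    if p < 1:
        kl += (1 - p) * math.log((1 - p) / (1 - q))
    return kl


def best_hypothesis(z, distilled, hypotheses, proj):
    """Toy KL-based test (hypothetical, not the paper's procedure).

    For each candidate label vector y_h, compare the observed agreement between
    compress(d) and z with the agreement 1 - theta(d, y_h)/pi that y_h predicts,
    summed over all distilled labelsets d. Return the index of the best fit.
    """
    compressed = [compress(d, proj) for d in distilled]
    scores = []
    for y_h in hypotheses:
        score = sum(bernoulli_kl(agreement_rate(zd, z), predicted_agreement(d, y_h))
                    for d, zd in zip(distilled, compressed))
        scores.append(score)
    return min(range(len(scores)), key=scores.__getitem__)
```

For example, consider the label sets {1, 2} and {1, 3} over four labels, written as the indicator vectors [1, 1, 0, 0] and [1, 0, 1, 0]. The angle between them is π/3, so about two thirds of their compressed labels should agree. The sign encoding keeps each compressed label a plain binary target, so any off-the-shelf binary classifier can be trained on it.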

Additional information

Editors: Grigorios Tsoumakas, Min-Ling Zhang, and Zhi-Hua Zhou.

About this article

Cite this article

Zhou, T., Tao, D. & Wu, X. Compressed labeling on distilled labelsets for multi-label learning. Mach Learn 88, 69–126 (2012). https://doi.org/10.1007/s10994-011-5276-1

DOI: https://doi.org/10.1007/s10994-011-5276-1