Abstract

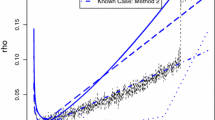

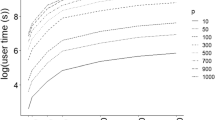

We consider the binary classification problem. Given an i.i.d. sample drawn from the distribution of an $\mathcal{X} \times \{0,1\}$-valued random pair, we propose to estimate the so-called Bayes classifier by minimizing the sum of the empirical classification error and a penalty term based on Efron’s or i.i.d. weighted bootstrap samples of the data. We obtain exponential inequalities for such bootstrap-type penalties, which allow us to derive non-asymptotic properties for the corresponding estimators. In particular, we prove that these estimators achieve the global minimax risk over sets of functions built from Vapnik-Chervonenkis classes. These results generalize those of Koltchinskii (2001) and Bartlett et al. (2002) for Rademacher penalties, which can thus be seen as special cases of bootstrap-type penalties. To illustrate this, we carry out an experimental study in which we compare the different methods for an intervals model selection problem.
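As a rough illustration of the penalized criterion described above, the following Python sketch selects among candidate models by minimizing empirical classification error plus a penalty computed from Efron's bootstrap weights. It is a minimal sketch under stated assumptions, not the paper's exact definition: each model is assumed to be a finite set of classifiers summarized by its 0/1 loss matrix, the penalty is taken to be a Monte Carlo estimate of the expected supremum of the gap between empirical and bootstrap errors (without any constant or additional terms the paper may use), and the names `bootstrap_penalty` and `select_model` are hypothetical.

```python
import numpy as np


def bootstrap_penalty(losses, n_boot=200, rng=None):
    """Monte Carlo estimate of E_W[max_f (1/n) * sum_i (1 - W_i) * loss_f(i)].

    losses: array of shape (n_classifiers, n_samples) with 0/1 entries,
            where losses[f, i] = 1 if classifier f misclassifies sample i.
    W:      Efron's bootstrap weights, i.e. Multinomial(n; 1/n, ..., 1/n).
    This form of the penalty is an assumption made for illustration.
    """
    rng = np.random.default_rng(rng)
    n = losses.shape[1]
    # One row of multinomial weights per bootstrap replicate: shape (n_boot, n).
    weights = rng.multinomial(n, np.full(n, 1.0 / n), size=n_boot)
    # Empirical error minus bootstrap error, per replicate and classifier.
    gaps = (1.0 - weights) @ losses.T / n
    # Supremum over the class, then average over bootstrap replicates.
    return gaps.max(axis=1).mean()


def select_model(losses_by_model, n_boot=200, seed=0):
    """Return the model minimizing (min empirical error + bootstrap penalty)."""
    scores = {}
    for name, losses in losses_by_model.items():
        empirical_error = losses.mean(axis=1).min()
        scores[name] = empirical_error + bootstrap_penalty(losses, n_boot, seed)
    return min(scores, key=scores.get), scores
```

Replacing the centered multinomial weights `1 - W_i` with independent random signs would give a Rademacher-style penalty of the same shape, which is the sense in which the abstract treats Rademacher penalties as a special case.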

References

Azuma, K. (1967). Weighted sums of certain dependent random variables. Tohoku Math. J., 19, 357–367.

Barron, A. (1985). Logically smooth density estimation. Technical Report 56, Dept. of Statistics, Stanford University.

Barron, A. (1991). Complexity regularization with application to artificial neural networks. Nonparametric functional estimation and related topics (NATO ASI Ser.), C, 335, 561–576.

Barron, A., & Cover, T. (1991). Minimum complexity density estimation. IEEE Trans. Inf. Theory, 37, 1034–1054.

Bartlett, P., Boucheron, S., & Lugosi, G. (2002). Model selection and error estimation. Mach. Learn., 48(1–3), 85–113.

Bartlett, P., Bousquet, O., & Mendelson, S. (2005). Localized Rademacher complexities. Ann. Stat., 33(4), 1497–1537.

Bartlett, P., Mendelson, S., & Philips, P. (2004). Local complexities for empirical risk minimization. In Learning theory: 17th annual conference on learning theory, COLT 2004, volume 3120 of lecture notes in computer science (pp. 270–284). Springer-Verlag.

Birgé, L., & Massart, P. (1998). Minimum contrast estimators on sieves: Exponential bounds and rates of convergence. Bernoulli, 4(3), 329–375.

Boucheron, S., Bousquet, O., & Lugosi, G. (2005). Theory of classification: A survey of recent advances. To appear in ESAIM: probability and statistics.

Boucheron, S., Lugosi, G., & Massart, P. (2000). A sharp concentration inequality with applications. Random Struct. Algorithms, 16(3), 277–292.

Buescher, K., & Kumar, P. (1996). Learning by canonical smooth estimation. I: Simultaneous estimation, II: Learning and choice of model complexity. IEEE Trans. Autom. Control, 41(4), 545–556, 557–569.

Devroye, L. (1982). Bounds for the uniform deviation of empirical measures. J. Multivariate Anal., 12, 72–79.

Devroye, L., & Lugosi, G. (1995). Lower bounds in pattern recognition and learning. Pattern Recognition, 28, 1011–1018.

Giné, E., & Zinn, J. (1984). Some limit theorems for empirical processes. Ann. Probab., 12(4), 929–998.

Giné, E., & Zinn, J. (1990). Bootstrapping general empirical measures. Ann. Probab., 18(2), 851–869.

Grenander, U. (1981). Abstract inference. New York: Wiley.

Haussler, D. (1995). Sphere packing numbers for subsets of the Boolean $n$-cube with bounded Vapnik-Chervonenkis dimension. J. Comb. Theory, A 69(2), 217–232.

Kay, S. (1998). Fundamentals of statistical signal processing: Detection theory. Prentice Hall Signal Processing Series.

Koltchinskii, V. (1981). On the central limit theorem for empirical measures. Prob. Theory Math. Statist., 24, 71–82.

Koltchinskii, V. (2001). Rademacher penalties and structural risk minimization. IEEE Trans. Inf. Theory, 47(5), 1902–1914.

Koltchinskii, V. (2003). Local Rademacher complexities and oracle inequalities in risk minimization. Technical report, University of New Mexico. To appear in Ann. Stat.

Koltchinskii, V., & Panchenko, D. (1999). Rademacher processes and bounding the risk of function learning. In Giné, E. (Ed.), High dimensional probability II. 2nd international conference, (pp. 443–459). Univ. of Washington, DC, USA, Volume Prog. Probab. 47, Boston, Birkhäuser.

Lebarbier, E. (2002). Quelques approches pour la détection de ruptures à horizon fini. Ph. D. thesis, Université Paris XI.

Lo, A. (1987). A large sample study of the Bayesian bootstrap. Ann. Stat., 15(1), 360–375.

Lozano, F. (2000). Model selection using Rademacher penalization. In Proceedings of the 2nd ICSC Symp. on Neural Computation (NC2000). Berlin, Germany. ICSC Academic Press.

Lugosi, G. (2002). Pattern classification and learning theory. In L. Györfi (Ed.), Principles of nonparametric learning (pp. 1–56). New York: Springer, Wien.

Lugosi, G., & Nobel, A. (1999). Adaptive model selection using empirical complexities. Ann. Stat., 27(6), 1830–1864.

Lugosi, G., & Zeger, K. (1996). Concept learning using complexity regularization. IEEE Trans. Inf. Theory, 42(1), 48–54.

Mammen, E., & Tsybakov, A. (1999). Smooth discrimination analysis. Ann. Stat., 27(6), 1808–1829.

Massart, P. (2003). Concentration inequalities and model selection. Lectures given at the Saint-Flour summer school of probability theory, To appear in Lect. Notes Math.

Massart, P., & Nédélec, E. (2005). Risk bounds for statistical learning. To appear in Ann. Stat.

Massart, P., & Rio, E. (1998). A uniform Marcinkiewicz-Zygmund strong law of large numbers for empirical processes. In Asymptotic methods in probability and statistics (pp. 199–211) (Ottawa, ON, 1997).

McDiarmid, C. (1989). On the method of bounded differences. In Surveys in combinatorics (Lond. Math. Soc. Lect. Notes) (vol. 141, pp. 148–188).

Pollard, D. (1982). A central limit theorem for empirical processes. J. Austral. Math. Soc., A 33(2), 235–248.

Præstgaard, J., & Wellner, J. (1993). Exchangeably weighted bootstraps of the general empirical process. Ann. Probab., 21(4), 2053–2086.

Rubin, D. (1981). The Bayesian bootstrap. Ann. Stat., 9, 130–134.

Sauer, N. (1972). On the density of families of sets. J. Combinatorial Theory, A 13, 145–147.

Tsybakov, A. (2004). Optimal aggregation of classifiers in statistical learning. Ann. Stat., 32(1), 135–166.

Van der Vaart, A., & Wellner, J. (1996). Weak convergence and empirical processes. New York: Springer.

Vapnik, V. (1982). Estimation of dependences based on empirical data. Springer-Verlag: New York.

Vapnik, V., & Chervonenkis, A. (1971). On the uniform convergence of relative frequencies of events to their probabilities. Theor. Probab. Appl., 16, 264–280.

Vapnik, V., & Chervonenkis, A. (1974). Teoriya raspoznavaniya obrazov. Statisticheskie problemy obucheniya (Theory of pattern recognition. Statistical problems of learning). Moscow: Nauka.

Weng, C.-S. (1989). On a second-order asymptotic property of the Bayesian bootstrap mean. Ann. Stat., 17(2), 705–710.

Additional information

Editors: Olivier Bousquet and André Elisseeff

Cite this article

Fromont, M. Model selection by bootstrap penalization for classification. Mach Learn 66, 165–207 (2007). https://doi.org/10.1007/s10994-006-7679-y

DOI: https://doi.org/10.1007/s10994-006-7679-y