Abstract

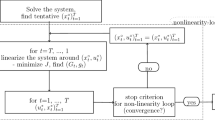

The supervisor and searcher cooperation (SSC) framework, introduced in Refs. 1 and 2, provides an effective way to design efficient optimization algorithms that combine the desirable features of two existing algorithms. This work aims to develop efficient algorithms for a wide range of noisy optimization problems, including those posed by the training of feedforward neural networks. It introduces two basic SSC algorithms: the first seems suited to generic problems, while the second is motivated by neural network training problems. It also introduces inexact variants of the two algorithms, which seem to possess desirable properties. It establishes general theoretical results on the convergence and speed of SSC algorithms and illustrates their appealing attributes through numerical tests on deterministic, stochastic, and neural network training problems.

References

Liu, W., and Dai, Y., Minimization Algorithms Based on Supervisor and Searcher Cooperation, Journal of Optimization Theory and Applications, Vol. 111, pp. 359–379, 2001.

Liu, W., and Sirlantzis, K., SSC Minimization Algorithms, Encyclopaedia of Optimization, Kluwer Academic Publishers, Dordrecht, Netherlands, Vol. 5, pp. 253–257, 2001.

White, H., Learning in Artificial Neural Networks: A Statistical Perspective, Neural Computation, Vol. 1, pp. 425–464, 1989.

Yan, D., and Mukai, H., Optimization Algorithm with Probabilistic Estimation, Journal of Optimization Theory and Applications, Vol. 79, pp. 345–371, 1993.

Benveniste, M., Metivier, M., and Priouret, P., Adaptive Algorithms and Stochastic Approximation, Springer Verlag, Berlin, Germany, 1990.

Barzilai, J., and Borwein, M., 2-Point Stepsize Gradient Methods, IMA Journal of Numerical Analysis, Vol. 8, pp. 141–148, 1988.

Raydan, M., On the Barzilai and Borwein Choice of Steplength for the Gradient Method, IMA Journal of Numerical Analysis, Vol. 13, pp. 321–326, 1993.

Fahlman, S. E., An Empirical Study of Learning Speed in Back-Propagation Networks, Technical Report CMU-CS-88-162, Carnegie Mellon University, 1988.

Cichocki, A., and Unbehauen, R., Neural Networks for Optimization and Signal Processing, John Wiley and Sons, Stuttgart, Germany, 1993.

Veitch, A. C., and Holmes, G., A Modified Quickprop Algorithm, Neural Computation, Vol. 3, pp. 310–311, 1991.

Moré, J., Garbow, B. S., and Hillstrom, K. E., Testing Unconstrained Optimization Software, ACM Transactions on Mathematical Software, Vol. 7, pp. 17–41, 1981.

Raydan, M., The Barzilai and Borwein Gradient Method for the Large-Scale Unconstrained Minimization Problem, SIAM Journal on Optimization, Vol. 7, pp. 26–33, 1997.

Bishop, C. M., Neural Networks for Pattern Recognition, Clarendon Press, Oxford, UK, 1995.

Rumelhart, D., Hinton, G., and Williams, R., Learning Representations by Back-propagating Errors, Nature, Vol. 323, pp. 533–536, 1986.

Müller, B., and Reinhardt, J., Neural Networks: An Introduction, Springer-Verlag, Berlin, Germany, 1990.

CMU Machine Learning Benchmarking Archive; see http://www.cs.cmu.edu/.

Mackey, M., and Glass, L., Oscillations and Chaos in Physiological Control Systems, Science, Vol. 197, p. 287, 1977.

Thornton, C., Parity: The Problem That Won’t Go Away, Proceedings of AI-96, Edited by G. McCalla, Toronto, Canada, 1996.

Gorman, R., and Sejnowski, T., Analysis of Hidden Units in a Layered Network Trained to Classify Sonar Targets, Neural Networks, Vol. 1, pp. 75–79, 1988.

UCI Machine Learning Repository; see http://www.ics.uci.edu/mlearn/.

Additional information

Communicated by P. M. Pardalos

About this article

Cite this article

Sirlantzis, K., Lamb, J.D. & Liu, W.B. Novel Algorithms for Noisy Minimization Problems with Applications to Neural Networks Training. J Optim Theory Appl 129, 325–340 (2006). https://doi.org/10.1007/s10957-006-9066-z

DOI: https://doi.org/10.1007/s10957-006-9066-z