Abstract

We present three results which support the conjecture that a graph is minimally rigid in d-dimensional \(\ell _p\)-space, where \(p\in (1,\infty )\) and \(p\not =2\), if and only if it is (d, d)-tight. Firstly, we introduce a graph bracing operation which preserves independence in the generic rigidity matroid when passing from \(\ell _p^d\) to \(\ell _p^{d+1}\). We then prove that every (d, d)-sparse graph with minimum degree at most \(d+1\) and maximum degree at most \(d+2\) is independent in \(\ell _p^d\). Finally, we prove that every triangulation of the projective plane is minimally rigid in \(\ell _p^3\). A catalogue of rigidity preserving graph moves is also provided for the more general class of strictly convex and smooth normed spaces and we show that every triangulation of the sphere is independent for 3-dimensional spaces in this class.

1 Introduction

Triangles, as everyone knows, are structurally rigid in the Euclidean plane, as are tetrahedral frames in Euclidean 3-space, or the 1-skeleton of any d-simplex in d-dimensional Euclidean space. In fact these are examples of minimally rigid structures since the removal of any edge will result in a flexible structure. More generally, one can consider the structural properties of bar-joint frameworks obtained by embedding the vertices of a graph G in \(\mathbb {R}^d\). Such a framework is rigid if the only edge-length-preserving continuous motions of the vertices arise from isometries of \(\mathbb {R}^d\). There is a long and abiding theory of rigidity with its origins in both the work of Cauchy on Euclidean polyhedra [3] and the work of Maxwell on stresses and strains in structures [16].

Much of the modern theory of rigidity considers a linearisation known as infinitesimal rigidity, which leads into matroid theory, and concentrates on the generic behaviour of the underlying graph. Standard graph operations such as Henneberg moves and vertex splitting moves [17] provide a means of constructing further rigid structures in a fixed dimension d, whereas the coning operation applied to a rigid d-dimensional structure produces a rigid structure one dimension higher [20].

But what happens if the underlying Euclidean metric is changed? An illustrative example is the observation by Cook, Lovett and Morgan [5] that in any non-Euclidean normed plane a rhombus with generic diagonal lengths cannot be fully rotated whilst maintaining the distances between the corners. The study of rigidity for graphs placed in non-Euclidean finite dimensional normed spaces was initiated by Kitson and Power [14] (see also, e.g., [6, 7, 12]). These works include the fundamental result, analogous to the Geiringer-Laman theorem for the Euclidean plane [15, 18], that the minimally rigid graphs in dimension 2 are exactly those that decompose into the edge-disjoint union of two spanning trees.

Throughout this article we consider d-dimensional \(\ell _p\)-space (denoted \(\ell _p^d\)), where \(p\in (1,\infty )\) and \(p\not =2\), and occasionally the more general class of strictly convex and smooth normed spaces. In Sect. 2, we provide some necessary background material and present the sparsity conjecture (Conjecture 2.7) which is our main motivation for the sections that follow. Our first main result is in Sect. 3 where we provide a tight analogue of coning, which we term bracing, to transfer rigidity from \(\ell _p^d\) to \(\ell _p^{d+1}\) for certain complete graphs (Theorem 3.3). Using this, we show that for a non-Euclidean \(\ell _p^d\)-space with \(p \in (1,\infty )\) and \(p \ne 2\), the analogue of a d-simplex is the complete graph on 2d vertices, in the sense that it is minimally rigid for \(\ell _p^d\) and there is no smaller graph with this property. In Sect. 4, we present several simple construction moves for generating new rigid structures from existing ones in a strictly convex and smooth space.

Our second main result concerns independence which, as in the Euclidean case, is characterised by the rigidity matrix (defined below) having full rank. Analogous to a result for Euclidean frameworks due to Jackson and Jordán [11], we obtain a result showing independence in smooth non-Euclidean \(\ell _p\)-spaces for graphs of bounded degree (Theorem 5.1).

Our final main result concerns the rigidity of triangulated surfaces in dimension 3. It is well-known that the graph of a triangulated sphere is minimally rigid in the Euclidean space \(\ell _2^3\) and that, in general, triangulations of closed surfaces are generically rigid in \(\ell _2^3\) (see, e.g., [9, 10]). It follows from Euler’s formula that if \(G=(V,E)\) is a triangulation of the sphere then \(|E|=3|V|-6\) while if G is a triangulation of any closed surface of orientable genus \(>0\) then \(|E|\ge 3|V|\). A graph which is minimally rigid for a non-Euclidean \(\ell _p^3\)-space must satisfy \(|E|=3|V|-3\) and so such triangulations are clearly either underbraced or overbraced for \(\ell _p^3\). Triangulations of the projective plane, on the other hand, do satisfy the necessary counting condition for minimal rigidity in non-Euclidean \(\ell _p^3\)-spaces and we prove that these triangulations are indeed minimally rigid (Theorem 6.7).
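The counts quoted above can be checked with a short standard computation, written here with \(F\) for the set of faces and \(\chi \) for the Euler characteristic (symbols introduced only for this check). Every face of a triangulation is bounded by three edges and every edge lies in exactly two faces, so \(3|F|=2|E|\), and Euler's formula \(|V|-|E|+|F|=\chi \) gives

\[ |E| = 3|V| - 3\chi . \]

Thus \(\chi =2\) (the sphere) gives \(|E|=3|V|-6\), \(\chi =1\) (the projective plane) gives \(|E|=3|V|-3\), and \(\chi =2-2g\) for an orientable surface of genus \(g\ge 1\) gives \(|E|=3|V|+6(g-1)\ge 3|V|\).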

2 Rigidity in \(\ell _p^d\)

Let X be a finite dimensional real normed linear space and let \(X^*\) denote the dual space of X. Let \(G=(V,E)\) be a finite simple graph with vertex set V, and consider a point \(p=(p_v)_{v\in V}\in X^{V}\) such that the components \(p_v\) and \(p_w\) are distinct for each edge \(vw\in E\). We refer to p as a placement of the vertices of G in X. The pair (G, p) is referred to as a bar-joint framework in X.

A linear functional \(f: X \rightarrow \mathbb {R}\) supports a non-zero point \(x_0 \in X\) if \(f(x_0) = \Vert x_0\Vert ^2\) and \(\sup _{\Vert x\Vert \le 1}\,|f(x)|=\Vert x_0\Vert \); if exactly one linear functional supports a non-zero point \(x_0\) then we say \(x_0\) is smooth and define \(\varphi _{x_0}\) to be the unique support functional for \(x_0\). A space X is said to be smooth if every non-zero point in X is smooth. A space X is said to be strictly convex if \(\Vert x+y\Vert <\Vert x\Vert +\Vert y\Vert \) whenever \(x,y\in X\) are non-zero and x is not a scalar multiple of y (or equivalently, if the closed unit ball in X is strictly convex). We will make use of the following elementary facts (see for example [2, Part III] and [4, Ch. II] for a general treatment of these topics).

Lemma 2.1

Let X be a finite dimensional normed linear space and let \(\mathcal {S}(X)\) denote the set of all smooth points in X together with the point \(0 \in X\). Define \(\Gamma : \mathcal {S}(X) \rightarrow X^*\) by setting \(\Gamma (x) = \varphi _x\) and \(\Gamma (0)=0\). Then,

(i) \(\Gamma \) is continuous,

(ii) X is strictly convex if and only if \(\Gamma \) is injective,

(iii) X is smooth if and only if \(\Gamma \) is surjective, and

(iv) X is both strictly convex and smooth if and only if \(\Gamma :X \rightarrow X^*\) is a homeomorphism.

2.1 Configuration spaces

Two bar-joint frameworks (G, p) and \((G,p')\) in X are said to be equivalent if \(\Vert p_v-p_w\Vert = \Vert p_v'-p_w'\Vert \) for each edge \(vw\in E\). The configuration space for (G, p), denoted \({\mathcal {C}}(G,p)\), consists of all placements \(p'\in X^{V}\) such that \((G,p')\) is equivalent to (G, p). The configuration space will always contain all translations of p; however, rotations and reflections of p are not guaranteed to be contained in \({\mathcal {C}}(G,p)\), as such operations do not always preserve distance in general normed spaces. We can alternatively express the configuration space in terms of the rigidity map,

\[ f_G: X^{V}\rightarrow {\mathbb {R}}^{E}, \qquad (x_v)_{v\in V}\mapsto \left( \Vert x_v-x_w\Vert \right) _{vw\in E}, \]

where we note that \({\mathcal {C}}(G,p) = f_G^{-1}(f_G(p))\).

Recall that an isometry is a map from X to itself that preserves the distance between points with respect to the norm of X. Two frameworks (G, p) and \((G,p')\) are said to be isometric if there exists an isometry \(T:X\rightarrow X\) such that \(p_v=T(p'_v)\) for all \(v\in V\). It is immediate that any two isometric frameworks are equivalent, but the converse is not true in general. The set of placements \(p'\in X^{V}\) such that \((G,p')\) is isometric to (G, p) is denoted \({\mathcal {O}}_p\) (note this set depends only on p). It can be shown (see [7, Lemma 3.4] for example) that \({\mathcal {O}}_p\) is a smooth submanifold of \(X^{V}\).

Remark 2.2

If X is the standard Euclidean d-space, then a pair (G, p) and \((G,p')\) are isometric if and only if the frameworks (K, p) and \((K,p')\) are equivalent, where K denotes the complete graph on the vertex set of G. This is not however true in general for non-Euclidean normed spaces; see [7, Section 5] for more discussion surrounding the topic.

2.2 The rigidity matrix

Suppose (G, p) is a bar-joint framework in a normed space X with the property that \(p_v-p_w\) is smooth in X for each edge \(vw\in E(G)\). Such placements p are said to be well-positioned in X. Given a basis \(b_1,\ldots ,b_d\) for X, the rigidity matrix for (G, p) is a matrix \(R(G,p) = (r_{e,(v,k)})\), with rows indexed by E and columns indexed by \(V\times \{1,\ldots ,d\}\). The entries are defined as follows:

\[ r_{e,(v,k)} = \begin{cases} \varphi _{p_v-p_w}(b_k) & \text{if } e=vw, \\ 0 & \text{otherwise.} \end{cases} \]

If the rank of R(G, p) is maximal with respect to the set of all well-positioned placements of G in X then (G, p) is said to be a regular bar-joint framework. If the rigidity matrix R(G, p) has independent rows then (G, p) is said to be independent in X.

Remark 2.3

Note that if the set \({\mathcal {S}}(X)\) of smooth points in a normed space X is open then the set \({\text {Reg}}(G;X)\) of regular placements of a graph \(G=(V,E)\) in X is an open subset of \(X^V\). This follows immediately from Lemma 2.1(i) and the fact that the rank function is lower semicontinuous.

2.3 Framework rigidity

A regular bar-joint framework (G, p) is rigid in X if the equivalent conditions of Proposition 2.4 are satisfied.

Proposition 2.4

[7, Theorem 1.1] Let (G, p) be a regular bar-joint framework in a finite dimensional real normed linear space X. If \({\mathcal {S}}(X)\) is an open subset of X then the following statements are equivalent.

(i) If \(\gamma :[0,1]\rightarrow {\mathcal {C}}(G,p)\) is a continuous path with \(\gamma (0)=p\) and \(\gamma (1)=p'\) then (G, p) and \((G,p')\) are isometric.

(ii) There exists an open neighbourhood U of p in \({\mathcal {C}}(G,p)\) such that if \(p'\in U\) then (G, p) and \((G,p')\) are isometric.

(iii) \({\text {rank}}R(G,p) = d|V|-\dim {\mathcal {T}}(p)\), where \({\mathcal {T}}(p)\) denotes the tangent space of the smooth manifold \({\mathcal {O}}_p\) at p.

If a bar-joint framework (G, p) is both rigid and independent then it is said to be minimally rigid in X. A graph \(G=(V,E)\) is said to be independent (respectively, minimally rigid or rigid) in X if there exists a placement \(p \in X^{V}\) such that the pair (G, p) is an independent (respectively, minimally rigid or rigid) bar-joint framework in X.

2.4 Frameworks in \(\ell ^d_q\)

Remark 2.5

In the area of functional analysis, the variable p is classically used to discuss \(\ell _p\) spaces. This conflicts, however, with the standard notation for rigidity theory, where p is usually used to denote a placement of a framework. To remove any ambiguity, we will from now on opt for \(\ell _q^d\) spaces instead of \(\ell _p^d\), and will retain p for referring solely to placements.

Let \(\ell ^d_q\) denote the d-dimensional vector space \({\mathbb {R}}^d\) together with the norm \(\Vert (x_1,\ldots ,x_d)\Vert _q := (\sum _{k=1}^d |x_k|^q )^{\frac{1}{q}}\) where \(d\ge 1\) and \(q\in (1,\infty )\). With respect to the usual basis on \({\mathbb {R}}^d\), the rigidity matrix R(G, p) for a bar-joint framework (G, p) in \(\ell ^d_q\) has entries,

\[ r_{e,(v,k)} = \begin{cases} \left[ \dfrac{(p_v-p_w)^{(q-1)}}{\Vert p_v-p_w\Vert _q^{q-2}}\right] _k & \text{if } e=vw, \\ 0 & \text{otherwise.} \end{cases} \]

Here, for convenience, we use the notation \(x^{(q)} := ({\text {sgn}}(x_1)|x_1|^q,\ldots ,{\text {sgn}}(x_d)|x_d|^q)\) and \([x]_k := x_k\) for each \(x=(x_1,\ldots ,x_d)\in {\mathbb {R}}^d\). Note that by scaling each row of the rigidity matrix by the appropriate value \(\Vert p_v-p_w\Vert _q^{q-2}\) we obtain an equivalent matrix \(\tilde{R}(G,p)\) with entries,

\[ \tilde{r}_{e,(v,k)} = \begin{cases} \left[ (p_v-p_w)^{(q-1)}\right] _k & \text{if } e=vw, \\ 0 & \text{otherwise.} \end{cases} \]

We refer to \(\tilde{R}(G,p)\) as the altered rigidity matrix for (G, p). It can be shown (see [14, Lemma 2.3]) that if \(q\not =2\) then \(\dim {\mathcal {T}}(p) = d\). Thus, for \(q\not =2\), a regular bar-joint framework (G, p) in \(\ell ^d_q\) is rigid if and only if \({\text {rank}}R(G,p) = d|V|-d\).
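The rank condition above can be tested directly on small examples. The following is a minimal sketch, assuming integer q and integer (or rational) coordinates so that all arithmetic is exact; the function names `signed_power`, `altered_rigidity_matrix` and `rank`, and the placement of \(K_4\), are illustrative choices and not taken from the article.

```python
from fractions import Fraction
from itertools import combinations

def signed_power(x, r):
    """Return sgn(x)|x|^r, the coordinate map behind the notation x^{(r)}."""
    if x == 0:
        return 0
    return (1 if x > 0 else -1) * abs(x) ** r

def altered_rigidity_matrix(vertices, edges, p, q):
    """Rows are indexed by edges, columns by (vertex, coordinate) pairs."""
    d = len(p[vertices[0]])
    col = {v: i * d for i, v in enumerate(vertices)}
    rows = []
    for v, w in edges:
        row = [0] * (d * len(vertices))
        for k in range(d):
            entry = signed_power(p[v][k] - p[w][k], q - 1)
            row[col[v] + k] = entry      # entry in the (v, k) column
            row[col[w] + k] = -entry     # opposite sign in the (w, k) column
        rows.append(row)
    return rows

def rank(rows):
    """Exact rank by Gaussian elimination over the rationals."""
    m = [[Fraction(x) for x in row] for row in rows]
    r = 0
    for c in range(len(m[0]) if m else 0):
        pivot = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if pivot is None:
            continue
        m[r], m[pivot] = m[pivot], m[r]
        for i in range(r + 1, len(m)):
            factor = m[i][c] / m[r][c]
            m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        r += 1
    return r

# Illustrative example: K_4 at four integer points of the plane.
vertices = ["a", "b", "c", "d"]
edges = list(combinations(vertices, 2))
p = {"a": (0, 0), "b": (1, 0), "c": (0, 1), "d": (1, 2)}

rank_q3 = rank(altered_rigidity_matrix(vertices, edges, p, 3))  # non-Euclidean case
rank_q2 = rank(altered_rigidity_matrix(vertices, edges, p, 2))  # Euclidean case
```

For this placement the rank for q = 3 equals \(2|V|-2 = 6\), matching the rigidity criterion for \(q\not =2\), whereas for q = 2 the rank drops to \(2|V|-3 = 5\) because rotations contribute an extra trivial motion. For non-integer q one would have to switch to floating point and a numerical rank, which this sketch does not attempt.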

Example 2.6

Let G be the wheel graph on vertices \(V=\{v_0,v_1,v_2,v_3,v_4\}\) with center \(v_0\) and let \(q\in (1,\infty )\). Define p to be the placement of G in \(\ell _q^2\) where,

See left hand side of Fig. 1 for an illustration. The altered rigidity matrix \(\tilde{R}(G,p)\) is as follows,

Let M be the \(8 \times 8\) matrix formed by the first 8 columns. We compute \(\det M = 2^{q-1}-2\) and so, for \(q\ne 2\), \({\text {rank}}\tilde{R}(G,p) = 8 = 2|V|-2\). Thus (G, p) is regular and minimally rigid in \(\ell _q^2\) for all \(q \ne 2\). Note that if we instead set \(p_{v_4}=(0,-1)\) then the resulting bar-joint framework is non-regular in \(\ell _q^2\) for all \(q \ne 2\) (see right hand side of Fig. 1).

2.5 The sparsity conjecture

Given a graph \(G=(V,E)\) and \(d\ge 1\) we write \(f_d(G) = d|V|-|E|\). We say G is (d, d)-sparse if \(f_d(H) \ge d\) for all subgraphs \(H \subset G\). If G is (d, d)-sparse and \(f_d(G) = d\) then G is said to be (d, d)-tight.
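For small graphs the sparsity count can be verified by brute force. The sketch below is an illustration only (the helper names `f_d`, `is_dd_sparse`, `is_dd_tight` and `complete_graph` are hypothetical); it checks induced subgraphs on non-empty vertex sets, which suffices because deleting edges from a subgraph can only increase \(f_d\).

```python
from itertools import combinations

def f_d(num_vertices, num_edges, d):
    """The count f_d(G) = d|V| - |E|."""
    return d * num_vertices - num_edges

def is_dd_sparse(vertices, edges, d):
    """Check f_d(H) >= d for every induced subgraph H on a non-empty vertex set."""
    for size in range(1, len(vertices) + 1):
        for subset in combinations(vertices, size):
            chosen = set(subset)
            induced = sum(1 for v, w in edges if v in chosen and w in chosen)
            if f_d(size, induced, d) < d:
                return False
    return True

def is_dd_tight(vertices, edges, d):
    """(d, d)-tight means (d, d)-sparse with f_d(G) = d."""
    return is_dd_sparse(vertices, edges, d) and f_d(len(vertices), len(edges), d) == d

def complete_graph(n):
    vertices = list(range(n))
    return vertices, list(combinations(vertices, 2))

# The wheel graph with hub 0 and rim 1, 2, 3, 4 (the graph of Example 2.6).
wheel_vertices = [0, 1, 2, 3, 4]
wheel_edges = [(0, i) for i in range(1, 5)] + [(1, 2), (2, 3), (3, 4), (4, 1)]
```

The search is exponential in \(|V|\), so it is only meant for checking examples such as \(K_{2d}\) or the wheel graph by hand-sized computation.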

Conjecture 2.7

Let \(q\in (1,\infty )\), \(q\not =2\), and let \(d\ge 1\). A graph G is independent in \(\ell _q^d\) if and only if G is (d, d)-sparse.

The conjecture above is a reformulation of a conjecture from [14, Remark 3.16]. When \(d=1\) the conjecture is true and the result is well-known. The case \(d=2\) is proved in [14] and is analogous to a landmark theorem proved independently by Pollaczek-Geiringer [18] and Laman [15] for graphs in the Euclidean plane. For \(d\ge 3\), it is known that graphs which are independent in \(\ell _q^d\) are necessarily (d, d)-sparse (see [14]). Thus, it remains to prove the converse statement: every (d, d)-sparse graph is independent in \(\ell _q^d\) for all \(q\in (1,\infty )\), \(q\not =2\), and for all \(d\ge 3\).

In this article, we prove this converse statement holds in three special cases: 1) when \(|V|\le 2d\), 2) when G has minimum degree at most \(d+1\) and maximum degree at most \(d+2\), and 3) when \(d=3\) and G is a triangulation of the projective plane. We also provide a catalogue of independence preserving graph operations, including the well-known Henneberg moves, vertex splitting and rigid subgraph substitution.

3 Dimension hopping

In this section we consider two graph operations called coning and bracing. It is well-known that the coning operation preserves both independence and minimal rigidity when passing from \(\ell _2^d\) to \(\ell _2^{d+1}\) (see [20]). We will show that for \(q\in (1,\infty )\), \(q\not =2\), both the coning operation and the bracing operation preserve independence (but not minimal rigidity) when passing from \(\ell _q^d\) to \(\ell _q^{d+1}\). A simple application of the coning operation is that the complete graph \(K_{d+1}\) is minimally rigid in \(\ell _2^d\) for all \(d\ge 2\). Indeed, \(K_2\) is minimally rigid in 1-space, and for every \(d\ge 2\), \(K_{d+1}\) is obtained from \(K_d\) by a coning operation. We will apply the bracing operation to prove the analogous result that \(K_{2d}\) is minimally rigid in \(\ell _q^d\), for all \(d\ge 2\) and all \(q\in (1,\infty )\), \(q\not =2\). In particular, Conjecture 2.7 is true whenever G is a subgraph of \(K_{2d}\).

3.1 The coning operation

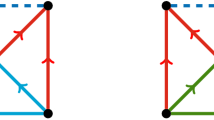

Let \(G=(V,E)\) and define \(G'=(V',E')\) to be the graph with vertex set \(V' = V \cup \{v_0\}\) and edge set \(E'=E \cup \{v_0v : v \in V \}\). Then \(G'\) is said to be obtained from G by a coning operation. (See left hand side of Fig. 2 for an illustration).

Theorem 3.1

Let \(q\in (1,\infty )\) and let \(d\ge 1\). Suppose \(G'=(V',E')\) is obtained from a graph \(G=(V,E)\) by a coning operation. If G is independent in \(\ell _q^d\) then \(G'\) is independent in \(\ell _q^{d+1}\).

Proof

Choose a placement p such that (G, p) is independent in \(\ell _q^d\). Let \(\eta :\ell _q^d\rightarrow \ell _q^{d+1}\) be the natural embedding \((x_1,\ldots ,x_d)\mapsto (x_1,\ldots ,x_d,0)\). Choose any \(x\in \ell _q^{d+1}\) such that \(\left[ x \right] _{d+1}\not =0\). Define \(p'\) to be the placement of \(G'\) in \(\ell _q^{d+1}\) with \(p'_v = \eta (p_v)\) for all \(v \in V\) and \(p'_{v_0} =x\). Let \(\omega = (\omega _{e})_{e \in E'}\) be a vector in the cokernel of \(\tilde{R}(G',p')\). Then, for each \(v\in V\), the \((v,d+1)\) column of \(\tilde{R}(G',p')\) has its only non-zero entry in the row indexed by \(vv_0\), and so we have,

\[ 0 = \omega _{vv_0}\left[ (\eta (p_v)-x)^{(q-1)}\right] _{d+1} = -\,\omega _{vv_0}\,{\text {sgn}}([x]_{d+1})\,|[x]_{d+1}|^{q-1}. \]

Thus \(\omega _{v v_0}=0\) for all \(v \in V\) and so it follows that the vector \((\omega _{e})_{e \in E}\) lies in the cokernel of \(\tilde{R}(G,p)\). Since \(\tilde{R}(G,p)\) is independent, we have \(\omega _{e}=0\) for all \(e\in E\). Hence, \(\omega =0\) and so \((G',p')\) is independent in \(\ell _q^{d+1}\). \(\square \)

3.2 The bracing operation

Let \(d\ge 1\) and let \(G=(V,E)\) be a finite simple graph with \(|V|\ge 2d\). Define \(\tilde{G}\) to be the graph with vertex set \(V(\tilde{G})=V\cup \{v_0,v_1\}\) and edge set,

\[ E(\tilde{G}) = E\cup \{v_0w,\, v_1w : w\in S\}\cup \{v_0v_1\}, \]

where \(S\subseteq V\) and \(|S|=2d\). The graph \(\tilde{G}\) is said to be obtained from G by a bracing operation on S. (See right hand side of Fig. 2 for an illustration).
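The two operations of this section are easy to state as edge-list transformations. The following sketch uses hypothetical function names `cone` and `brace`, and takes the bracing edge set to be \(E\) together with all edges from \(v_0\) and \(v_1\) to \(S\) and the edge \(v_0v_1\), as read off from the proof of Theorem 3.3.

```python
def cone(vertices, edges, apex="v0"):
    """Coning: add one new vertex adjacent to every vertex of G."""
    return list(vertices) + [apex], list(edges) + [(apex, v) for v in vertices]

def brace(vertices, edges, S, d, new=("v0", "v1")):
    """Bracing on S: add two adjacent vertices, each joined to the 2d vertices of S."""
    if len(set(S)) != 2 * d or not set(S) <= set(vertices):
        raise ValueError("S must consist of 2d distinct vertices of G")
    v0, v1 = new
    added = [(v0, w) for w in S] + [(v1, w) for w in S] + [(v0, v1)]
    return list(vertices) + [v0, v1], list(edges) + added

# Starting from K_2 (d = 1), two bracing steps produce K_4 and then K_6.
k2_vertices, k2_edges = [0, 1], [(0, 1)]
k4_vertices, k4_edges = brace(k2_vertices, k2_edges, k2_vertices, d=1, new=(2, 3))
k6_vertices, k6_edges = brace(k4_vertices, k4_edges, k4_vertices, d=2, new=(4, 5))
```

Each bracing step adds two vertices and \(4d+1\) edges, which is the count used when comparing \(f_d(G)\) with \(f_{d+1}(\tilde{G})\).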

Lemma 3.2

Let \(G=(V,E)\) be a graph with \(|V|\ge 2d\) and suppose \(\tilde{G}\) is obtained from G by a bracing operation on \(S\subseteq V\), where \(|S|=2d\).

(i) If G is (d, d)-sparse then \(\tilde{G}\) is \((d+1,d+1)\)-sparse.

(ii) If G is (d, d)-tight then \(\tilde{G}\) is \((d+1,d+1)\)-tight if and only if \(G=K_{2d}\).

Proof

(i) Let \(\tilde{H}\) be a subgraph of \(\tilde{G}\) and let \(H=\tilde{H} \cap G\). Recall that \(K_{2(d+1)}\) is \((d+1,d+1)\)-sparse and so we may assume that \(|V(\tilde{H})|>2d+2\). If \(\tilde{H}=H\) then,

If \(|V(\tilde{H})| = |V(H)|+1\) then,

Similarly, if \(|V(\tilde{H})| = |V(H)|+2\) then,

Thus \(\tilde{G}\) is \((d+1,d+1)\)-sparse.

(ii) By a counting argument similar to (i), \(\tilde{G}\) is \((d+1,d+1)\)-tight if and only if \(|V(\tilde{G})|=2d+2\). In the latter case, G is a (d, d)-tight graph with \(|V|=2d\) and so \(G=K_{2d}\). \(\square \)
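The arithmetic behind part (ii) can be made explicit under the reading that a bracing operation adds two vertices and \(4d+1\) edges (the edges from \(v_0\) and \(v_1\) to S together with \(v_0v_1\)). This is a sketch of the count and not necessarily the argument as originally typeset:

\[ f_{d+1}(\tilde{G}) = (d+1)(|V|+2) - (|E|+4d+1) = f_d(G) + |V| - 2d + 1. \]

If G is (d, d)-tight then \(f_d(G)=d\), so \(f_{d+1}(\tilde{G}) = |V|-d+1\), which equals \(d+1\) exactly when \(|V|=2d\).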

Theorem 3.3

Let \(G=(V,E)\) be a graph with \(|V|\ge 2d\) and suppose \(\tilde{G}\) is obtained from G by a bracing operation on \(S\subseteq V\), where \(|S|=2d\). Let \(q\in (1,\infty )\), \(q\not =2\), and let \(d\ge 1\). If G is independent in \(\ell ^d_q\) then \(\tilde{G}\) is independent in \(\ell ^{d+1}_q\).

Proof

Let \(p:V\rightarrow {\mathbb {R}}^d\) be a placement of G in \({\mathbb {R}}^d\) and write \(p_w=(p_w^1,\ldots ,p_w^d)\) for each \(w\in V\). Define \(\tilde{p}:V(\tilde{G}) \rightarrow {\mathbb {R}}^{d+1}\) by setting \(\tilde{p}_w=(p_w^1,\ldots ,p_w^d,0)\) for all \(w\in V\), \(\tilde{p}_{v_0}=(0,\ldots ,0,-\lambda )\) and \(\tilde{p}_{v_1}=(1,\ldots ,1,\lambda )\) for some positive scalar \(\lambda >0\). Thus the vertices of G are embedded in \({\mathbb {R}}^d\times \{0\}\) and the two new vertices \(v_0\) and \(v_1\) are placed on the hyperplanes \(x_{d+1}=-\lambda \) and \(x_{d+1}=\lambda \) respectively. After a suitable permutation of rows and columns, the (altered) rigidity matrix for \((\tilde{G},\tilde{p})\) takes the form,

where D(p) is a \((2|S|+1)\times (|V|+2(d+1))\)-matrix. We will show that D(p) is independent for some (and hence almost every) choice of p.

Suppose \(|V|=2d\). Then \(S = V\) and the rows of D(p) are those indexed by the sets \(E_0=\{v_0w:w\in V\}\) and \(E_1=\{v_1w:w\in V\}\) together with the edge \(v_0v_1\). The columns of D(p) are those indexed by \(\{(w,d+1):w\in V\}\) together with the pairs \((v_0,1),\ldots ,(v_0,d+1)\) and \((v_1,1),\ldots ,(v_1,d+1)\). Thus, after a suitable permutation of rows and columns, D(p) takes the form,

Note that to show D(p) is independent for some p, it is sufficient to show that the square submatrix of D(p) formed by deleting the \((v_1,d+1)\)-column is independent. Adding each \(E_0\) row, indexed by \(v_0w\), of this square submatrix to the corresponding \(E_1\) row, indexed by \(v_1w\), we obtain,

It is clear that the first |V| rows, indexed by \(E_0\), are independent and that it is now sufficient to show there exists p such that the \((2d+1)\times (2d+1)\)-matrix,

is independent. To this end, let \(V=\{w_1,\ldots ,w_{d},\tilde{w}_1,\ldots ,\tilde{w}_d\}\). For each \(i=1,\ldots ,d\), choose \(p_{w_i}\in {\mathbb {R}}^d\) and \(p_{\tilde{w}_i}\in {\mathbb {R}}^d\) such that,

Then, after a suitable permutation of rows and columns, the square submatrix A takes the form,

where C is the \(d\times d\)-matrix,

Subtracting each \(v_1\tilde{w}_i\) row from the corresponding \(v_1w_i\) row and applying further row reductions, this matrix reduces to,

For \(i=1,\ldots ,d\), let \(r_{i}\) denote the row of B which is indexed by \(v_1\tilde{w}_i\) and let \(r_e\) denote the row indexed by \(v_0v_1\). Note that C is a circulant matrix with determinant,

Thus, since \(q\not =2\), C is invertible and so the rows \(r_1,\ldots ,r_d\) are independent.

Suppose \(r_{e} = \sum _{i=1}^d \mu _{i} r_{i}\) for some scalars \(\mu _{1},\ldots , \mu _d\in {\mathbb {R}}\). On considering the \((v_0,d+1)\) column it is clear that \(\sum _{i=1}^d\mu _i =2^{q-1}\). Moreover, considering the \((v_1,1)\) column,

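Thus, since \(q\not =2\), we have \(\mu _1=0\). By similar arguments, \(\mu _2=\cdots =\mu _d=0\). This contradicts \(\sum _{i=1}^d\mu _i =2^{q-1}\), so \(r_e\) does not lie in the span of \(r_1,\ldots ,r_d\). Thus the matrix B, and hence also the matrices A and D(p), are independent.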

Note that the set of points p for which D(p) is independent is open and dense in \({\mathbb {R}}^{d|V(G)|}\). Thus we may choose \(p\in {\mathbb {R}}^{d|V(G)|}\) such that both \(\tilde{R}(G,p)\) and D(p) are independent. In particular, \(\tilde{R}(\tilde{G},\tilde{p})\) is independent, as required.

Finally, if \(|V|>2d\) then note that \(\tilde{R}(\tilde{G},\tilde{p})\) will have additional columns, indexed by \(\{(w,d+1):w\in V\backslash S\}\), with zero entries. These columns do not alter the dependencies between the rows and so the result follows as above. \(\square \)

As a corollary we show that \(K_{2d}\) is minimally rigid in \(\ell _q^d\) for \(q\in (1,\infty )\), \(q\not =2\).

Corollary 3.4

Let \(q\in (1,\infty )\), \(q\not =2\).

(i) If \(|V|\le 2d\) then \(G=(V,E)\) is independent in \(\ell ^d_q\).

(ii) \(K_{2d}\) is minimally rigid in \(\ell ^d_q\) for all \(d\ge 1\).

Proof

It is clear that \(K_2\) is independent in \({\mathbb {R}}\). Note that, for all \(d\ge 2\), \(K_{2d}\) is obtained from \(K_{2(d-1)}\) by a bracing operation on the vertex set of \(K_{2(d-1)}\). Thus, by Theorem 3.3, \(K_{2d}\) is independent in \(\ell ^d_q\) for all \(d\ge 2\). If \(|V|\le 2d\) then G is a subgraph of \(K_{2d}\) and hence is independent in \(\ell ^d_q\). Finally, since \(K_{2d}\) is independent and \(|E|=d|V|-d\), it is minimally rigid in \(\ell ^d_q\). \(\square \)
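The edge count used in the last step, and the reason no graph on fewer than 2d vertices can be rigid, can be spelled out as follows (routine arithmetic, included as a check):

\[ |E(K_{2d})| = \binom{2d}{2} = d(2d-1) = d\cdot 2d - d, \qquad |E(K_n)| = \frac{n}{2}(n-1) < d(n-1) = dn-d \quad \text{for } 2\le n<2d. \]

Since a regular framework in \(\ell ^d_q\) with \(q\not =2\) is rigid only if its rigidity matrix has rank \(d|V|-d\), any graph on n vertices with \(2\le n<2d\) has too few edges to be rigid.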

Remark 3.5

We conjecture that \(K_{2d}\) admits a rigid (but not necessarily minimally rigid) placement in every d-dimensional normed space. This conjecture clearly holds for the Euclidean norm and the above corollary confirms the conjecture for all non-Euclidean smooth \(\ell _q\) norms. The conjecture is also known to hold for all non-Euclidean normed planes (see [6]) and for the cylinder and hypercylinder norms on \({\mathbb {R}}^3\) and \({\mathbb {R}}^4\) respectively (see [13]).

4 Graph operations

In this section we provide a catalogue of graph operations which preserve independence in smooth and strictly convex normed spaces. These include the well known Henneberg moves (0 and 1-extensions), vertex splitting moves and rigid subgraph substitutions. By applying any sequence of these graph operations to \(K_{2d}\) we may obtain a large class of minimally rigid graphs for \(\ell _q^d\) when \(q\in (1,\infty )\) and \(q\not =2\).

4.1 0-extensions

Definition 4.1

Let \(G=(V,E)\) be a graph and define \(G'\) by setting \(V(G')=V\cup \{v\}\) and \(E(G')= E\cup \{vw:w\in S\}\), where \(S\subseteq V\) and \(|S|=d\). The graph \(G'\) is said to be obtained from G by a d-dimensional 0-extension on S; see Fig. 3.

To prove that 0-extensions preserve rigidity in the generality of strictly convex and smooth normed spaces we will need the following lemma.

Lemma 4.2

Let X be a finite dimensional real normed linear space which is smooth and strictly convex and let \(d=\dim X\). Let \(y_1, \ldots , y_n \in X\) where \(n \le d\). Then, for all \(\epsilon > 0\), there exist \(y'_1, \ldots , y'_n \in X\) such that \(\Vert y_i - y'_i \Vert < \epsilon \) for each \(1 \le i \le n\) and \(\varphi _{y'_1}, \ldots , \varphi _{y'_n}\) are linearly independent in \(X^*\).

Proof

Let \(\epsilon >0\) and let \(b_1,\ldots ,b_d\) be a basis for X. Define,

\[ \theta : X^{n}\rightarrow M_{n\times d}({\mathbb {R}}), \qquad (x_1,\ldots ,x_n)\mapsto \left( \varphi _{x_i}(b_j)\right) _{i,j}, \]

with the convention \(\varphi _0=0\). Since X is smooth and strictly convex, Lemma 2.1(iv) shows that the duality map \(\Gamma :X\rightarrow X^*\), \(x\mapsto \varphi _x\), is a homeomorphism. It follows that \(\theta \) is also a homeomorphism. Recall that the set \({\mathcal {I}}_{n\times d}({\mathbb {R}})\) of independent \(n\times d\) real matrices is open and dense in \(M_{n\times d}({\mathbb {R}})\). Thus \(\theta ^{-1}({\mathcal {I}}_{n\times d}({\mathbb {R}}))\) is dense in \(X^{n}\) and so there exists \(y'=(y'_1, \ldots , y'_n) \in X^{n}\) such that \(\Vert y_i - y'_i \Vert < \epsilon \) for each \(1 \le i \le n\) and \(\theta (y')\) is independent. In particular, the linear functionals \(\varphi _{y'_1}, \ldots , \varphi _{y'_n}\) are linearly independent, as required. \(\square \)

In the following proposition, the set of regular placements of G in X is denoted \(\text {Reg}(G;X)\).

Proposition 4.3

Let X be a finite dimensional real normed linear space which is smooth and strictly convex and let \(d= \dim X\). Let \(G=(V,E)\) be a graph and suppose \(G'\) is obtained from G by a d-dimensional 0-extension on \(S\subseteq V\), where \(|S|= d\). Then G is independent (resp. minimally rigid) in X if and only if \(G'\) is independent (resp. minimally rigid) in X.

Proof

Let \(\theta \) be the homeomorphism described in Lemma 4.2 for \(n=d\). Then \(\theta ^{-1}({\text {GL}}_d({\mathbb {R}}))\) is dense in \(X^{d}\) where \({\text {GL}}_d({\mathbb {R}})\) denotes the general linear group of degree d over \({\mathbb {R}}\). Note that the map,

is continuous. Since the rank function is lower semicontinuous, it follows that \({\text {Reg}}(G;X)\) is open in \(X^{|V|}\). Thus the intersection

is non-empty in \(X^{|V|}\). Let \(p=(p_1,p_2)\) be a point in this intersection and set

Here \(p'\) describes a placement of \(G'\) in X in which \(p_1\) is a placement of the vertices in \(V\backslash S\), \(p_2\) is a placement of the vertices in S, and the new vertex v is placed at the origin. After a suitable permutation of rows and columns, the rigidity matrix for \((G',p')\) takes the form,

As \(p\in {\text {Reg}}(G;X)\) and \(\theta (p_2)\) is invertible it follows that \(p'\in {\text {Reg}}(G';X)\). Note that \(R(G',p')\) is independent if and only if R(G, p) is independent, and that \(f_d(G')= f_d(G)\), so the result follows. \(\square \)

4.2 1-extensions

Definition 4.4

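Let \(G=(V,E)\) be a graph containing vertices \(v_1,\ldots ,v_{d+1}\) and the edge \(v_dv_{d+1}\in E\). Define \(G'\) by setting,

\[ V(G') = V\cup \{v_0\}, \qquad E(G') = \left( E\backslash \{v_dv_{d+1}\}\right) \cup \{v_0v_i : i=1,\ldots ,d+1\}. \]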

The graph \(G'\) is said to be obtained from G by a d-dimensional 1-extension on the vertices \(v_1,\ldots ,v_{d+1}\in V\) and the edge \(v_dv_{d+1}\in E\); see Fig. 4.

Proposition 4.5

Let X be a finite dimensional real normed linear space which is smooth and strictly convex and let \(d= \dim X\). Suppose \(G'\) is obtained from \(G=(V,E)\) by a d-dimensional 1-extension. If G is independent in X then \(G'\) is independent in X. Further, if both G and \(G'\) are independent then G is rigid in X if and only if \(G'\) is rigid in X.

Proof

Let \(v_0\) be the unique vertex in \(V(G') \setminus V\), let \(v_0 v_1, \ldots , v_0 v_{d+1} \in E(G')\) be the added edges for distinct \(v_1, \ldots ,v_{d+1} \in V\), and let \(v_d v_{d+1}\) be the deleted edge. If G is independent in X then there exists a placement p of G in X for which (G, p) is independent. By translating the framework (G, p) we may assume without loss of generality that \(p_v\not =0\) for all \(v\in V\) and \(p_{v_{d+1}} = - p_{v_d}\). By Lemma 4.2, and since the set of independent placements of G is open in \(X^{V}\), we may also assume that the linear functionals \(\varphi _{p_{v_1}}, \ldots , \varphi _{p_{v_d}}\) are linearly independent. Define a placement \(p'\) of \(G'\) in X by setting \(p'_v=p_v\) for all \(v\in V\) and \(p'_{v_0} =0\). We claim that \((G',p')\) is independent in X.

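Suppose \(a=(a_{e})_{e\in E(G')} \in \mathbb {R}^{E(G')}\) is a linear dependence on the rows of \(R(G',p')\). From the entries of the \(v_0\)-column of \(R(G',p')\), and using \(p'_{v_0}=0\) and \(p_{v_{d+1}}=-p_{v_d}\), we obtain,

\[ \sum _{i=1}^{d+1} a_{v_0 v_i}\,\varphi _{p_{v_i}} = \sum _{i=1}^{d-1} a_{v_0 v_i}\,\varphi _{p_{v_i}} + \left( a_{v_0 v_d}-a_{v_0 v_{d+1}}\right) \varphi _{p_{v_d}} = 0. \]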

Thus, since \(\varphi _{p_{v_1}}, \ldots , \varphi _{p_{v_d}}\) are linearly independent, we have \(a_{v_0 v_1} = \ldots = a_{v_0 v_{d-1}} = 0\) and \(a_{v_0 v_{d}} = a_{v_0 v_{d+1}}\). Define \(b=(b_{e})_{e\in E} \in \mathbb {R}^{E}\) with \(b_{e} = a_{e}\) for \(e \ne v_d v_{d+1}\) and \(b_{v_d v_{d+1}} = \frac{1}{2}a_{v_0 v_{d}}\). Then b is a linear dependence on the rows of R(G, p). Thus \(b=0\) as (G, p) is independent. It now follows that \(a=0\) and so \((G',p')\) is independent, as required.

The final statement of the proposition follows since \(f_d(G') = f_d(G)\). \(\square \)
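The 0-extension and 1-extension moves, and the fact that both preserve the count \(f_d\), can be illustrated on edge lists. This is a minimal sketch with hypothetical function names `zero_extension` and `one_extension`; it manipulates graphs only and says nothing about placements.

```python
from itertools import combinations

def f_d(vertices, edges, d):
    """The count f_d(G) = d|V| - |E|."""
    return d * len(vertices) - len(edges)

def zero_extension(vertices, edges, S, d, new="v"):
    """d-dimensional 0-extension: join a new vertex to the d vertices of S."""
    if len(set(S)) != d or not set(S) <= set(vertices):
        raise ValueError("S must consist of d distinct vertices of G")
    return list(vertices) + [new], list(edges) + [(new, w) for w in S]

def one_extension(vertices, edges, targets, d, new="v0"):
    """d-dimensional 1-extension on targets = [v_1, ..., v_{d+1}].

    The edge between the last two targets (v_d and v_{d+1}) is deleted and
    the new vertex is joined to all d + 1 targets.
    """
    if len(set(targets)) != d + 1 or not set(targets) <= set(vertices):
        raise ValueError("targets must consist of d + 1 distinct vertices of G")
    removed = frozenset(targets[-2:])
    kept = [e for e in edges if frozenset(e) != removed]
    if len(kept) != len(edges) - 1:
        raise ValueError("the edge v_d v_{d+1} must be present in G")
    return list(vertices) + [new], kept + [(new, w) for w in targets]

# Illustrative example with d = 2, starting from K_4.
k4_v = [0, 1, 2, 3]
k4_e = list(combinations(k4_v, 2))
g0_v, g0_e = zero_extension(k4_v, k4_e, [0, 1], d=2, new=4)
g1_v, g1_e = one_extension(k4_v, k4_e, [0, 1, 2], d=2, new=4)
```

In both cases one vertex and a net total of d edges are added, so \(f_d\) is unchanged, which is the count used in the final step of the proofs above.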

4.3 Vertex splitting

Definition 4.6

Let \(G=(V,E)\) be a graph containing a vertex \(v_0 \in V\) and edges \(v_0 v_i \in E\) for \(i=1, \ldots , d-1\). Let \(G'\) be a graph obtained from G by the following process:

(i) adjoin a new vertex \(w_0\) to G together with the edges \(w_0 v_0, w_0 v_1, \ldots , w_0 v_{d-1}\),

(ii) for every edge of the form \(v_0 w\) in E, where \(w \notin \{v_1,\ldots , v_{d-1}\}\), either leave the edge as it is or replace it with the edge \(w_0 w\).

The graph \(G'\) is said to be obtained from G by a d-dimensional vertex split at the vertex \(v_0\in V\) and edges \(v_0 v_1, \ldots , v_0 v_{d-1}\in E\); see Fig. 5.

For a graph \(G=(V,E)\) and a vertex \(v\in V\), we will use \(N_G(v)\), or N(v) when the context is clear, to denote the set of neighbours of v in G.

Proposition 4.7

Let X be a smooth and strictly convex normed space with dimension d. Suppose \(G'\) is a d-dimensional vertex split of G. If G is independent in X then \(G'\) is independent in X. Further, if both G and \(G'\) are independent then \(G'\) is rigid in X if and only if G is rigid in X.

Proof

Let \(v_0,w_0,v_1, \ldots , v_{d-1}\) be as described in Definition 4.6. Since G is independent in X there exists a placement \(p\in X^V\) of G in X such that R(G, p) is independent. Choose \(y \in X\backslash \{0\}\). By Lemma 4.2, and since the set of independent placements of G is open in \(X^V\), we may assume that the linear functionals \(\varphi _y, \varphi _{p_{v_0} - p_{v_1}}, \ldots , \varphi _{p_{v_0} - p_{v_{d-1}}}\) are linearly independent. Write \(E(G')=E_1\cup E_2 \cup \{v_0w_0\}\) where \(E_1\) consists of all edges in \(G'\) which are not incident with \(w_0\) and \(E_2\) consists of all edges in \(G'\) of the form \(vw_0\) with \(v \ne v_0\). Fix a basis \(b_1, \ldots ,b_d\) for X and define R to be the \(|E(G')| \times d|V(G')|\) matrix with non-zero row entries as described below and zero entries everywhere else,

Suppose \(a \in \mathbb {R}^{E(G')}\) is a linear dependence on the rows of R. Define \(b \in \mathbb {R}^{E}\), where

If \(v\not = v_0\) then note that \(\sum _{w \in N_G(v)} b_{v w} \varphi _{p_v-p_w} = \sum _{w \in N_{G'}(v)} a_{v w} \varphi _{p_v-p_w}=0.\) Also note that \(\sum _{w \in N_G(v_0)} b_{v_0 w} \varphi _{p_{v_0}-p_w}=A+B\) where,

Thus if \(b\not =0\) then b is a linear dependence on the rows of R(G, p), a contradiction. We conclude that \(b=0\). In particular, we have \(a_{v_0 v_i}= -a_{w_0 v_i}\) for all \(i=1,\ldots ,d-1\) and \(a_{vw}=0\) for all edges vw in \(E(G')\backslash \{v_0w_0,v_0v_i,w_0v_i:i=1,\ldots ,d-1\}\). As \(\varphi _y,\varphi _{p_{v_0} - p_{v_1}}, \ldots , \varphi _{p_{v_0} - p_{v_{d-1}}}\) are linearly independent then by observing how the linear dependence acts on the \(v_0\) columns of R we obtain \(a_{v_0w_0}=0\) and \(a_{v_0v_i}=0\) for all \(i=1,\ldots , d-1\). Thus \(a=0\) and so R is independent.

Let \(\epsilon >0\) and let \(R_\epsilon \) denote the independent matrix obtained by multiplying the entries of the \(v_0w_0\) row of R by \(\epsilon \). Define a placement \(p'\) of \(G'\) in X by setting \(p'_v=p_v\) for all \(v\in V\) and \(p'_{w_0} =p_{v_0} +\epsilon y\). Note that for each edge \(v_0w\in E(G)\), \(p_{v_0}-p_w\) is a smooth point of X. Thus, using Lemma 2.1, it follows that for \(\epsilon \) sufficiently small, the rigidity matrix \(R(G',p')\) will lie in an open neighbourhood of \(R_\epsilon \) consisting of independent matrices. We conclude that \(G'\) is independent in X.

The final statement of the proposition follows since \(f_d(G') = f_d(G)\). \(\square \)

Remark 4.8

There is a natural variant of vertex splitting known as spider splitting. In this version, d vertices adjacent to \(v_0\) become adjacent to both \(v_0\) and \(w_0\) but there is no edge between \(v_0\) and \(w_0\); see Fig. 6. A simplified version of the proof of Proposition 4.7 above yields the analogous result. The 2-dimensional spider split has been considered in Euclidean contexts under other names such as the vertex-to-4-cycle move [17].

4.4 Graph substitution

Definition 4.9

Let G and H be graphs and choose \(v_0\in V(G)\). A graph \(G'\) is obtained from G by a vertex-to-H substitution at \(v_0\) if it is formed by replacing the vertex \(v_0 \in V(G)\) with V(H), adding the edges E(H) and changing each edge \(v_0w \in E(G)\) to vw for some \(v \in V(H)\). See Fig. 7 for an example of a vertex-to-\(K_4\) substitution applied to a wheel graph.

Fig. 7. A vertex-to-\(K_4\) substitution at the center vertex of the wheel graph on 5 vertices. This graph operation will preserve rigidity in any non-Euclidean 2-dimensional normed space [6, Lemma 5.5].
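The substitution of Definition 4.9 can be sketched on edge sets as follows. This is an illustrative sketch only: the function `vertex_to_h`, the dictionary `attach` (which records the vertex of H chosen for each former neighbour of \(v_0\)) and the vertex labels are hypothetical, and `freedom` assumes \(f_d(G)=d|V|-|E|\).

```python
from itertools import combinations


def vertex_to_h(edges_g, v0, vertices_h, edges_h, attach):
    """Edge set of a vertex-to-H substitution at v0 (hypothetical helper).

    attach[w] is the vertex of H that receives the edge formerly joining v0 to w.
    """
    edges_g = {frozenset(e) for e in edges_g}
    edges_h = {frozenset(e) for e in edges_h}
    vertices_h = set(vertices_h)
    if vertices_h & set().union(*edges_g):
        raise ValueError("V(H) must be disjoint from V(G)")

    new_edges = set(edges_h)  # add the edges E(H)
    for e in edges_g:
        if v0 in e:
            (w,) = e - {v0}  # the other endpoint of the edge v0 w
            if attach[w] not in vertices_h:
                raise ValueError("attach must map into V(H)")
            new_edges.add(frozenset((attach[w], w)))  # change v0 w to v w
        else:
            new_edges.add(e)  # edges away from v0 are unchanged
    return new_edges


def freedom(d, edges):
    """Assumed count f_d(G) = d|V| - |E| for a graph without isolated vertices."""
    return d * len(set().union(*edges)) - len(edges)


# Example in the spirit of Fig. 7: the wheel on 5 vertices with hub "c".
rim = [frozenset((i, (i + 1) % 4)) for i in range(4)]
spokes = [frozenset(("c", i)) for i in range(4)]
wheel = set(rim) | set(spokes)

h_vertices = ["h0", "h1", "h2", "h3"]
k4 = {frozenset(p) for p in combinations(h_vertices, 2)}
substituted = vertex_to_h(wheel, "c", h_vertices, k4, {i: f"h{i}" for i in range(4)})
```

Under the assumed count, a substitution changes \(f_d\) by \(f_d(H)-d\), so the count is preserved exactly when \(f_d(H)=d\), as for \(K_4\) with d = 2 in this example.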

Recall that \({\mathcal {T}}(p)\) denotes the tangent space at p of \({\mathcal {O}}_p\), the smooth manifold of placements isometric to p. Our next result shows that the vertex-to-H substitution move preserves independence for a normed space X whenever H is independent.

Proposition 4.10

Let X be a normed space with dimension d and suppose that the set of smooth points of X forms an open subset. Suppose \(G'\) is obtained from G by a vertex-to-H substitution at \(v_0\). If G and H are independent in X then \(G'\) is independent in X. Further, if \(\dim {\mathcal {T}}(r) = d\) for any placement r of H and H is rigid in X then \(G'\) is rigid in X if and only if G is rigid in X.

Proof

Let (G, p) and (H, r) be independent in X. Denote by \(\partial V(H)\) all the edges in \(G'\) with exactly one vertex in V(H). Let \(b_1, \ldots , b_d\) be a basis for X. Consider the \(|E(G')| \times d|V(G')|\) matrix R with non-zero row entries as described below,

Suppose \(a \in \mathbb {R}^{E(G')}\) is a linear dependence on the rows of R. Define \(b \in \mathbb {R}^{E(G)}\) by setting \(b_{vw} = a_{vw}\) if \(vw \in E(G) \cap E(G')\) and \(b_{v_0 w} = a_{vw}\) if the edge \(v_0 w \in E(G)\) is replaced by \(vw \in \partial V(H)\). If \(v\notin N_G(v_0)\cup \{v_0\}\) then note that,

If \(v\in N_G(v_0)\) and \(vv_0\in E(G)\) is replaced by \(vz \in \partial V(H)\) then note that,

Since,

we have,

Thus, if \(b\not =0\) then b is a linear dependence on the rows of R(G, p). Since R(G, p) is independent, it follows that \(b=0\). In particular, \(a_{vw}=0\) for all \(vw\in E(G')\backslash E(H)\). Note that if \(a_H=(a_{vw})_{vw \in E(H)}\) is non-zero then \(a_H\) is a linear dependence on the rows of R(H, r). Since R(H, r) is independent, we conclude that \(a_H=0\) and so \(a=0\). Thus we have shown that R is independent.

Let \(\epsilon >0\) and let \(R_\epsilon \) denote the independent matrix obtained by multiplying the entries of the E(H) rows of R by \(\epsilon \). Define a placement \(p'\) of \(G'\) in X by setting \(p'_v=p_v\) for all \(v\in V(G')\backslash V(H)\) and \(p'_{v} =p_{v_0}+ \epsilon r_v\) for all \(v\in V(H)\). Note that for each edge \(v_0w\in E(G)\), \(p_{v_0}-p_w\) is a smooth point of X. Thus, using Lemma 2.1, it follows that for \(\epsilon \) sufficiently small, the rigidity matrix \(R(G',p')\) will lie in an open neighbourhood of \(R_\epsilon \) consisting of independent matrices. We conclude that \(G'\) is independent in X.

If H is also rigid and \(\dim \mathcal {T}(r)=d\) for any choice of placement r of H, then we note that \(f_d(G') = f_d(G)\), thus \(G'\) is rigid if and only if G is rigid. \(\square \)

Remark 4.11

It can be shown that Proposition 4.10 holds for any normed space. Since the proof is significantly more technical we refer the reader to [8] for details.

5 Degree-bounded graphs

Recall that Conjecture 2.7 proposed a characterisation of independence in \(\ell _q^d\). We will prove the conjecture for a certain family of degree-bounded graphs. This is analogous to a theorem of Jackson and Jordán [11], who worked in the Euclidean space \(\ell _2^d\).

Let \(G=(V,E)\). For \(U\subset V\), let G[U] denote the subgraph of G induced by U and let \(i_G(U)\), or simply i(U) when the context is clear, denote the number of edges in G[U]. We also use d(U, W) to denote the number of edges of the form xy with \(x\in U\setminus W\) and \(y\in W\setminus U\), where \(U,W\subset V\). Let \(\delta (G)\) denote the minimum degree in the graph G and \(\Delta (G)\) denote the maximum degree in G. Let \(d_G(v)\), or simply d(v), denote the degree of a vertex v in G.

Theorem 5.1

Let \(q\in (1,\infty )\), \(q\not =2\) and let \(d\ge 3\). Suppose G is a connected graph with \(\delta (G)\le d+1\) and \(\Delta (G)\le d+2\). Then G is independent in \(\ell _q^d\) if and only if G is (d, d)-sparse.

To prove the theorem we will need several additional lemmas. The first of these is easily proved by counting the contribution to both sides.

Lemma 5.2

Let \(G=(V,E)\). For any \(U,W\subset V\) we have \(i(U)+i(W)+d(U,W)=i(U\cup W)+i(U\cap W)\).
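The counting identity of Lemma 5.2 can be checked by brute force on a small graph. The sketch below is only a sanity check, not a proof; the helper names `i`, `d_between` and `lemma_5_2_holds` are illustrative, and the random graph is an arbitrary choice.

```python
import random
from itertools import combinations


def i(edges, U):
    """Number of edges with both endpoints in U, i.e. the edges of G[U]."""
    return sum(1 for e in edges if e <= U)


def d_between(edges, U, W):
    """Number of edges xy with x in U minus W and y in W minus U."""
    only_u, only_w = U - W, W - U
    return sum(1 for e in edges if e & only_u and e & only_w)


def subsets(vertices):
    """All subsets of the vertex set, as frozensets."""
    vs = list(vertices)
    for r in range(len(vs) + 1):
        for c in combinations(vs, r):
            yield frozenset(c)


def lemma_5_2_holds(edges, vertices):
    """Check i(U) + i(W) + d(U, W) = i(U | W) + i(U & W) for all U, W."""
    all_subsets = list(subsets(vertices))
    return all(
        i(edges, U) + i(edges, W) + d_between(edges, U, W)
        == i(edges, U | W) + i(edges, U & W)
        for U in all_subsets
        for W in all_subsets
    )


# A fixed pseudo-random graph on 6 vertices, and K_4 as a second example.
rng = random.Random(0)
vertices = range(6)
edges = {frozenset(p) for p in combinations(vertices, 2) if rng.random() < 0.5}
k4 = {frozenset(p) for p in combinations(range(4), 2)}
```

The identity holds because an edge inside \(U\cup W\) either lies in G[U], lies in G[W], or joins \(U\setminus W\) to \(W\setminus U\), and exactly the edges of \(G[U\cap W]\) are counted twice.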

We will say that \(U\subset V\) is critical if \(|U|>1\) and \(i(U)=d|U|-d\).

Lemma 5.3

Let \(G=(V,E)\) be (d, d)-sparse and suppose \(U\subset V\) is critical. Then \(d_{G[U]}(v)\ge d\) for all \(v\in U\).

Proof

Suppose U is critical and there exists \(x\in U\) with \(d_{G[U]}(x)< d\). Then \(i(U-\{x\})=i(U)-d_{G[U]}(x)>d|U|-d-d=d|U-\{x\}|-d\), contradicting the (d, d)-sparsity of G. \(\square \)
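For small graphs, sparsity, critical sets and the degree bound of Lemma 5.3 can be explored by exhaustive search. The sketch assumes the usual definition that G is (d, d)-sparse when \(i(U)\le d|U|-d\) for every non-empty \(U\subset V\) (the definition is given earlier in the paper, outside this section); all function names are hypothetical, and the search is exponential in |V|.

```python
from itertools import combinations


def induced_edge_count(edges, U):
    """i(U): number of edges with both endpoints in U."""
    U = set(U)
    return sum(1 for e in edges if e <= U)


def vertex_subsets(vertices, min_size):
    """All subsets of the vertex set with at least min_size elements."""
    vs = sorted(vertices)
    for r in range(min_size, len(vs) + 1):
        for c in combinations(vs, r):
            yield set(c)


def is_sparse(edges, vertices, d):
    """Assumed definition: i(U) <= d|U| - d for every non-empty U."""
    return all(
        induced_edge_count(edges, U) <= d * len(U) - d
        for U in vertex_subsets(vertices, 1)
    )


def critical_sets(edges, vertices, d):
    """All U with |U| > 1 and i(U) = d|U| - d."""
    return [
        U
        for U in vertex_subsets(vertices, 2)
        if induced_edge_count(edges, U) == d * len(U) - d
    ]


def min_induced_degree(edges, U):
    """Minimum degree of the induced subgraph G[U]."""
    return min(sum(1 for e in edges if v in e and e <= U) for v in U)


def complete_graph(n):
    return {frozenset(p) for p in combinations(range(n), 2)}


d = 3
k6_critical = critical_sets(complete_graph(6), range(6), d)
```

With d = 3, the only critical set of \(K_6\) is its whole vertex set, and every vertex has degree 5 in the induced subgraph, in line with the bound \(d_{G[U]}(v)\ge d\).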

Let \(G=(V,E)\). A graph \(G'\) is said to be obtained from G by a (d-dimensional) 1-reduction at v adding \(x_1x_2\) if \(V(G')=V-\{v\}\), for some vertex v with \(N_G(v)=\{x_1,x_2,\dots , x_{d+1}\}\), and \(E(G')=E\setminus \{vx_1,vx_2,\dots ,vx_{d+1} \}\cup \{x_1x_2\}\).

Lemma 5.4

Let \(G=(V,E)\) be (d, d)-sparse, suppose \(v\in V\) has \(d(v)=d+1\) and \(x,y\in N(v)\). Then the graph resulting from a 1-reduction at v adding xy is not (d, d)-sparse if and only if either \(xy\in E\) or there exists a critical set U with \(x,y\in U\subset V-\{v\}\).

Proof

If \(xy\in E\) or there exists a critical set U with \(x,y\in U\subset V-\{v\}\) then it is obvious that the 1-reduction at v adding xy does not result in a (d, d)-sparse graph. Conversely, if a 1-reduction at v adding xy does not result in a (d, d)-sparse graph then either there is a pair of parallel edges between x and y in the resulting graph, giving \(xy\in E\), or there is a violation of (d, d)-sparsity. In the latter case let \(G'\) be the graph resulting from the specified 1-reduction. Then there is a subgraph \(H_1=(V_1,E_1)\) of \(G'\) with \(i_{G'}(V_1)=d|V_1|-(d-1)\). Clearly \(x,y\in V_1\), otherwise \(H_1\) is a subgraph of G, contradicting the (d, d)-sparsity of G. Since \(i_G(V_1)=i_{G'}(V_1)-1=d|V_1|-d\), the set \(V_1\), as a subset of V, is the required critical set in G. \(\square \)
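The 1-reduction itself is a simple edge-set operation, sketched below. The function name `one_reduction` is hypothetical; the sketch rejects the case \(xy\in E\), which by Lemma 5.4 never yields a (d, d)-sparse graph, and it does not test for critical sets.

```python
from itertools import combinations


def one_reduction(edges, v, x, y, d):
    """Edge set after a d-dimensional 1-reduction at v adding xy (hypothetical helper)."""
    edges = {frozenset(e) for e in edges}
    neighbours = {w for e in edges if v in e for w in e - {v}}
    if len(neighbours) != d + 1:
        raise ValueError("v must have degree d + 1")
    if x == y or x not in neighbours or y not in neighbours:
        raise ValueError("x and y must be distinct neighbours of v")
    new_edge = frozenset((x, y))
    if new_edge in edges:
        raise ValueError("xy is already an edge, so the reduction is not admissible")
    # Delete v with its d + 1 incident edges, then add the edge xy.
    return {e for e in edges if v not in e} | {new_edge}


# Example with d = 3: K_5 minus the edge 12, reduced at vertex 0 adding 12.
k5_minus_edge = {frozenset(p) for p in combinations(range(5), 2)} - {frozenset((1, 2))}
reduced = one_reduction(k5_minus_edge, v=0, x=1, y=2, d=3)
```

In this example the reduced graph is \(K_4\) on the vertices 1, 2, 3, 4.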

The key technical lemma we will need is the following.

Lemma 5.5

Let \(d\ge 3\) and suppose \(G=(V,E)\) is (d, d)-sparse. Suppose \(v\in V\) has \(d(v)=d+1\) and \(d(x)\le d+2\) for all \(x\in N(v)\). Then there is a 1-reduction at v which results in a (d, d)-sparse graph unless \(G[\{v\}\cup N(v)]=K_{d+2}\).

Proof

Suppose \(G[\{v\}\cup N(v)]\ne K_{d+2}\) and, for a contradiction, that no 1-reduction at v results in a (d, d)-sparse graph. Then without loss of generality we may suppose that \(xy\notin E\) for some \(x,y\in N(v)\). Hence Lemma 5.4 implies there is a critical set \(U\subset V-\{v\}\) with \(x,y\in U\). Choose U to be a maximal critical set containing x, y but not v. If \(N(v)\subset U\) then \(i(U\cup \{v\})> d|U\cup \{v\}|-d\), contradicting (d, d)-sparsity. So without loss of generality we may suppose \(w\notin U\) for some \(w\in N(v)\setminus \{x,y\}\).

Suppose there is a critical set W with \(y,w\in W\subset V-\{v\}\). Then, by the maximality of U, \(U\cup W\) is not critical, so \(i(U\cup W)\le d|U\cup W|-(d+1)\). Since G is (d, d)-sparse we also have \(i(U\cap W)\le d|U\cap W|-d\). Now using Lemma 5.2 we get \(d|U|+d|W|-2d+d(U,W)\le d|U\cup W|+d|U\cap W|-2d-1\), a contradiction.
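The contradiction in the preceding paragraph can be spelled out as follows. This is a sketch of the arithmetic only, using that U and W are critical, that \(U\cup W\) is not critical, that \(U\cap W\) contains y and so is non-empty, and that \(|U|+|W|=|U\cup W|+|U\cap W|\).

```latex
\begin{align*}
i(U)+i(W)+d(U,W) &= (d|U|-d)+(d|W|-d)+d(U,W),\\
i(U\cup W)+i(U\cap W) &\le \bigl(d|U\cup W|-(d+1)\bigr)+\bigl(d|U\cap W|-d\bigr)\\
 &= d|U|+d|W|-2d-1 .
\end{align*}
% By Lemma 5.2 the two left-hand sides are equal, hence
\[
d|U|+d|W|-2d+d(U,W)\le d|U|+d|W|-2d-1
\quad\Longrightarrow\quad d(U,W)\le -1 ,
\]
% which is impossible because d(U,W) counts edges.
```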

Hence Lemma 5.4 implies that \(yw\in E\). The same argument applied to the pair x, w implies that \(xw\in E\). Since \(d\ge 3\), there exists \(z\in N(v)\setminus \{x,y,w\}\). If \(z\notin U\) then we can apply the same argument to the pair y, z to find that \(yz\in E\). However, this would imply that \(d+2\ge d(y)\ge d_{G[U]}(y)+3\), which is a contradiction by Lemma 5.3. Hence for all \(z\in N(v)\setminus \{x,y,w\}\) we have that \(z\in U\). We may now apply the previous argument to each pair z, w to see that each \(zw\in E\). Hence w has d neighbours in U, so \(U'=U\cup \{w\}\) is critical, contradicting the maximality of U. \(\square \)

We can now prove the theorem.

Proof of Theorem 5.1

Necessity is easy. For the sufficiency we use induction on |V|. The base cases are \(K_1\) and \(K_{d+2}\), the latter of which is independent in \(\ell _q^d\) by Corollary 3.4(i).

Suppose \(G=(V,E)\) is (d, d)-sparse, \(|V|\ge 2\), \(G\ne K_{d+2}\) and \(v\in V\) has minimum degree. Suppose first that \(G-v\) is disconnected. Then each component \(H_i=(V_i,E_i)\) of \(G-v\) is connected with \(\delta (H_i)\le d+1\) and \(\Delta (H_i)\le d+2\). Hence \(H_i\) is independent in \(\ell _q^d\) by induction. Since \(d_{H_i+v}(v)\le d\), Proposition 4.3 implies that \(G[V_i+v]\) is independent in \(\ell _q^d\). Hence G is independent in \(\ell _q^d\) by Proposition 4.10. Thus we may suppose that \(G-v\) is connected.

Suppose \(d(v)\le d\). Then \(G-v\) is connected with \(\delta (G-\{v\})\le d+1\) and \(\Delta (G-\{v\})\le d+2\). Hence \(G-\{v\}\) is independent in \(\ell _q^d\) by induction and G is independent in \(\ell _q^d\) by Proposition 4.3. Thus we may suppose that \(d(v)=d+1\). Suppose \(G[\{v\}\cup N(v)]\ne K_{d+2}\). Then Lemma 5.5 implies there is a 1-reduction at v which results in a (d, d)-sparse graph \(G'\). Since \(G-\{v\}\) is connected, \(G'\) is connected. Since \(\delta (G)\le d+1\) and \(\Delta (G)\le d+2\) we also have \(\delta (G')\le d+1\) and \(\Delta (G')\le d+2\). By induction \(G'\) is independent in \(\ell _q^d\) and hence G is independent in \(\ell _q^d\) by Proposition 4.5.

Hence \(G[\{v\}\cup N(v)]= K_{d+2}\). Since \(G\ne K_{d+2}\), there exists \(u\in V\setminus V(K_{d+2})\). Consider \(H=G-K_{d+2}\). Each component \(H_i\) of H is connected with \(\delta (H_i)\le d+1\) and \(\Delta (H_i)\le d+2\). Hence \(H_i\) is independent in \(\ell _q^d\) by induction, and trivially H is independent in \(\ell _q^d\). Note that for each vertex \(r\in K_{d+2}\), there is at most one edge of the form rs where \(s\in H\). Thus G is a subgraph of the graph formed from \(K_{d+3}\) by a vertex-to-H move on t where t is the vertex of \(K_{d+3}\) not in the \(K_{d+2}\). Also, since \(d\ge 3\), \(K_{d+3}\) is independent in \(\ell _q^d\) by Corollary 3.4(i). That G is independent in \(\ell _q^d\) now follows from Proposition 4.10. \(\square \)

We close this section by noting another independence result for normed spaces which we adapt from [11]. This time we may use the combinatorics of [11] directly.

Theorem 5.6

Let X be a smooth and strictly convex normed space of dimension 3 and let \(G=(V,E)\) be a graph such that \(i(U)\le \frac{1}{2}(5|U|-7)\) for all \(U\subset V\) with \(|U|\ge 2\). Then G is independent in X.

Proof

We use induction on |V|. If \(|V|=2\) then trivially \(K_2\) is independent in X. If \(|V|\ge 3\) then, in the proof of [11, Theorem 5.1], it was shown that there must exist a 0-reduction or a 1-reduction on G to a smaller graph satisfying the hypotheses of the theorem. Since this smaller graph is independent in X by induction the proof is completed by application of Propositions 4.3 and 4.5. \(\square \)

Note that neither Theorem 5.1 nor Theorem 5.6 is best possible. Indeed, if Conjecture 2.7 is true then one can remove the degree hypotheses in Theorem 5.1 and replace the sparsity assumption in Theorem 5.6 by (3, k)-sparsity, where k is the dimension of the isometry group of the normed space. On the other hand, it seems to be a difficult problem to work with vertices of degree 5, so even extending Theorem 5.6 to include the case when \(i(U)=\frac{1}{2}5|U|\) may be challenging.

6 Surface graphs

In this final section we consider the graphs of triangulated surfaces. We will use our results to deduce first that every triangulation of the sphere is independent in \(\ell _q^3\) and then that every triangulation of the projective plane is minimally rigid in \(\ell _q^3\) for \(1<q\ne 2<\infty \). To this end we will use the following topological results providing recursive constructions of triangulations of the sphere and of the projective plane by vertex splitting due to Steinitz [19] and Barnette [1]. In the statements we use topological vertex splitting to mean a vertex splitting operation that preserves the surface, and we use \(K_7-K_3\) to denote the unique graph obtained from \(K_7\) by deleting the edges of a triangle.

Proposition 6.1

([19]) Every triangulation of the sphere can be obtained from \(K_4\) by topological vertex splitting operations.

Proposition 6.2

([1]) Every triangulation of the projective plane can be obtained from \(K_6\) or \(K_7-K_3\) by topological vertex splitting operations.

Theorem 6.3

Let X be a smooth and strictly convex normed space of dimension 3, and let G be a triangulation of the sphere. Then G is independent in X.

Proof

Let G be a triangulation of the sphere. Proposition 6.1 shows that G can be generated from \(K_4\) by vertex splitting operations. We may use Proposition 4.3 to deduce that \(K_4\) is independent in X, and Proposition 4.7 shows that vertex splitting preserves independence in X. The theorem follows from these results by an elementary induction argument. \(\square \)

To give an analogous result for the projective plane we will need to restrict to \(\ell _q^3\) and make use of the following lemmas.

Lemma 6.4

Let \(x> y > 0\). If \(k > 1\) then \(x^k - y^k > (x -y )^k\) and if \(k<1\) then \(x^k - y^k < (x -y )^k\).

Proof

Fix \(y \in (0, \infty )\) and define the smooth function \(f : (y , \infty ) \rightarrow \mathbb {R}, ~ t \mapsto t^k - y^k - (t - y)^k\). We note that \(f'(t)= k t^{k-1} - k (t- y)^{k-1}.\) If \(k > 1\) then \(f'(t) >0\) and f is strictly increasing, while if \(k < 1\) then \(f'(t) <0\) and f is strictly decreasing. As \(\lim _{t \rightarrow y} f(t) =0\), it follows that if \(k > 1\) then \(f(t) >0\), while if \(k < 1\) then \(f(t) <0\). The result now follows by choosing \( x >y \) and rearranging f(x). \(\square \)
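A quick numerical spot check of Lemma 6.4 is given below. It is only an illustration on a handful of hand-picked values, not a substitute for the proof; the function names and the sample grid are arbitrary choices.

```python
def gap(x, y, k):
    """The quantity f(x) = x^k - y^k - (x - y)^k from the proof of Lemma 6.4."""
    return x**k - y**k - (x - y) ** k


def sign_matches_lemma(x, y, k):
    """True if the sign of the gap agrees with Lemma 6.4 for x > y > 0 and k != 1."""
    if not (x > y > 0) or k == 1:
        raise ValueError("need x > y > 0 and k != 1")
    return gap(x, y, k) > 0 if k > 1 else gap(x, y, k) < 0


# Sample points with x = y + t, for exponents on both sides of 1.
samples = [
    (y + t, y, k)
    for y in (0.5, 1.0, 2.0)
    for t in (0.5, 1.0, 3.0)
    for k in (0.25, 0.5, 1.5, 2.0, 3.0)
]
all_ok = all(sign_matches_lemma(x, y, k) for x, y, k in samples)
```

For instance, with x = 2 and y = 1 the gap is 4 - 1 - 1 = 2 when k = 2, and it is negative when k = 1/2.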

Lemma 6.5

Let \(q \in (1,2) \cup (2,\infty )\), let \(\gamma \in (0,1)\) and let \(p^\gamma \) be the placement of the complete graph \(K_4\) on the vertex set \(\{v_0 , v_1 , v_2, v_3 \}\) with,

Then \((K_4,p^\gamma )\) is independent in \(\ell _q^2\).

Proof

Consider the \(6 \times 6\)-matrix

Note that \(M_\gamma \) is the submatrix of the altered \(\tilde{R}(K_4,p^\gamma )\) formed by removing the columns corresponding to \(v_0\). Thus, if \(M_\gamma \) is invertible then \((K_4,p^\gamma )\) is independent. We have,

By Lemma 6.4, if \(q-1 >1\) then,

while if \(q-1 <1\) then,

Thus \(\det M_\gamma \not =0\) and so \(M_\gamma \) is invertible, as required. \(\square \)

Lemma 6.6

The graph \(K_7 - K_3\) is minimally rigid in \(\ell _q^3\) for any \(q \in (1,\infty ), q\ne 2\).

Proof

Let \(G:=K_7 - K_3\) be the graph with vertex set \(V := \{ v_0 , v_1 , v_2, v_3 , a , b , c \}\) and edge set \(E := K(V) \setminus \{ ab, ac , bc \}\). Choose \(\gamma \in (0,1)\). We now define a placement p of G in \(\ell _q^3\) by putting

Let \((K_4,r)\) be the bar-joint framework in \(\ell _q^2\) with,

Then, by Lemma 6.5, the altered rigidity matrix \(\tilde{R}(K_4,r)\) is independent. By shifting all \((v_i;1)\) and \((v_i;2)\) columns of \(\tilde{R}(G,p)\) to the left, we obtain the matrix

where for any \(a \in \mathbb {R}\) we define \(a_{n \times m}\) to be the \(n \times m\) matrix with a for each entry, and M is a \(12 \times 13\) matrix. To show \(\tilde{R}(G,p)\) is independent it suffices to show M has row independence.

By reordering rows and columns if needed, we have that

(we order the rows \((v_0,a), \ldots , (v_3,a)\), etc.) where \(I_4\) is the \(4 \times 4\) identity matrix and

By applying row operations to M we obtain a \(12 \times 13\) matrix of the form

where N is the \(8 \times 9\) matrix

and we note that the rows of N are linearly independent if and only if the rows of M are linearly independent. By adding the seventh and ninth columns to the eighth column followed by subtracting the first four rows of N from the last four rows of N (i.e. subtract the first from the fifth, the second from the sixth, etc.) we obtain

We may remove the eighth column to obtain the \(8 \times 8\) matrix

and note that the rows of N are linearly independent if and only if the rows of O are linearly independent. By subtracting the first row from the second, third and fourth rows, and by subtracting the fifth row from the sixth, seventh and eighth rows, followed by deleting the first and fifth rows and the last two columns, we obtain the \(6 \times 6\) matrix

and, as \(\det P=\det O\), O is invertible if and only if P is invertible. By subtracting the second column of P from the fourth, adding the sixth column of P to the fourth, and then deleting the second and sixth columns and the first and fourth rows, we obtain the \(4 \times 4\) matrix

and, as \(\det Q = -\det P\), P is invertible if and only if Q is invertible. By adding the fourth column of Q to the second and then deleting the third row and fourth column, we obtain the \(3 \times 3\) matrix

Since \(\det R = (1-2^{q-1}) \det Q\) and \(2^{q-1} \ne 1\), Q is invertible if and only if R is invertible.

We now calculate that

thus R is not invertible if and only if either \(1 -\gamma ^{q-1} = (1-\gamma )^{q-1}\) or

By Lemma 6.4, as \(q \ne 2\) and \(1 >\gamma \), the first equality cannot hold, thus R is invertible if and only if Eq. (1) does not hold.

Consider the continuous function \(f:\mathbb {R} \rightarrow \mathbb {R}\) with

Note that \(f(1) = 2^{q-1}-2 \ne 0\), as \(q \ne 2\), and so, by the continuity of f, we can choose \(\gamma \in (0,1)\) such that \(f(\gamma ) \ne 0\). Thus Eq. (1) does not hold and R is invertible. This now implies that R(G, p) has linearly independent rows, thus \(K_7 - K_3\) is independent in \(\ell _q^3\). Since \(f_3(K_7 - K_3) = 3\) also, we have that \(K_7 - K_3\) is minimally rigid in \(\ell _q^3\). \(\square \)

Theorem 6.7

Let \(G=(V,E)\) be a triangulation of the projective plane. Then G is minimally rigid in \(\ell _q^3\) for all \(q\in (1,\infty )\), \(q\ne 2\).

Proof

We prove the result by induction on |V|. Corollary 3.4(ii) shows that \(K_6\) is minimally rigid in \(\ell _q^3\) and Lemma 6.6 shows that \(K_7-K_3\) is minimally rigid in \(\ell _q^3\). Let \(G=(V,E)\) be a triangulation of the projective plane. Proposition 6.2 shows that G can be generated from \(K_6\) or \(K_7-K_3\) by topological vertex splitting operations. We can now apply Proposition 4.7 to show that G is minimally rigid in \(\ell _q^3\) completing the proof. \(\square \)
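As a consistency check on the edge counts behind Theorems 6.3 and 6.7, the following standard Euler characteristic computation (not taken from the proofs above) relates |E| and |V| for a triangulated closed surface. The final comments assume \(f_3(G)=3|V|-|E|\), which matches the value \(f_3(K_7-K_3)=3\) used in the proof of Lemma 6.6.

```latex
% Triangulation of a closed surface with Euler characteristic \chi:
% every face is a triangle and every edge lies in exactly two faces.
\begin{align*}
|V|-|E|+|F| &= \chi, \qquad 3|F| = 2|E| \\
\Longrightarrow\quad |E| &= 3|V|-3\chi .
\end{align*}
% Sphere (\chi = 2): |E| = 3|V| - 6, e.g. K_4 has 6 = 3\cdot 4 - 6 edges.
% Projective plane (\chi = 1): |E| = 3|V| - 3,
%   e.g. K_6 has 15 = 3\cdot 6 - 3 edges and K_7 - K_3 has 18 = 3\cdot 7 - 3 edges.
% Under the assumed count, f_3(G) = 3 for every triangulation of the projective plane.
```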

References

[1] Barnette, D.: Generating the triangulations of the projective plane. J. Comb. Theory Ser. B 33, 222–230 (1982)

[2] Beauzamy, B.: Introduction to Banach spaces and their geometry, North-Holland Mathematics Studies, 68. Notas de Matemática [Mathematical Notes], 86. North-Holland Publishing Co., Amsterdam (1985)

[3] Cauchy, A.: Sur les polygones et polyèdres. Second memoir. J. Ecol. Polytech. 9(1913), 87–99 (1813). (Oeuvres. T. 1. Paris 1905, 26–38)

[4] Cioranescu, I.: Geometry of Banach Spaces, Duality Mappings and Nonlinear Problems, Mathematics and Its Applications, 62. Kluwer Academic Publishers Group, Dordrecht (1990)

[5] Cook, J., Lovett, J., Morgan, F.: Rotation in a normed plane. Am. Math. Mon. 114(7), 628–632 (2007)

[6] Dewar, S.: Infinitesimal rigidity in normed planes. SIAM J. Discrete Math. 34(2), 1205–1231 (2020)

[7] Dewar, S.: Equivalence of continuous, local and infinitesimal rigidity in normed spaces. Discret. Comput. Geom. 65, 655–679 (2021)

[8] Dewar, S.: The rigidity of countable frameworks in normed spaces. PhD thesis, Lancaster University (2019). https://doi.org/10.17635/lancaster/thesis/756

[9] Fogelsanger, A.: The Generic Rigidity of Minimal Cycles. PhD thesis, Department of Mathematics, Cornell University, Ithaca (1988)

[10] Gluck, H.: Almost all simply connected closed surfaces are rigid, Geometric Topology, Lecture Notes in Mathematics, no. 438, pp. 225–239. Springer, Berlin (1975)

[11] Jackson, B., Jordán, T.: The \(d\)-dimensional rigidity matroid of sparse graphs. J. Comb. Theory Ser. B 95, 118–133 (2005)

[12] Kitson, D.: Finite and infinitesimal rigidity with polyhedral norms. Discret. Comput. Geom. 54, 390–411 (2015)

[13] Kitson, D., Levene, R.H.: Graph rigidity for unitarily invariant matrix norms. J. Math. Anal. Appl. 491(2), 124353 (2020)

[14] Kitson, D., Power, S.C.: Infinitesimal rigidity for non-Euclidean bar-joint frameworks. Bull. Lond. Math. Soc. 46(4), 685–697 (2014)

[15] Laman, G.: On graphs and rigidity of plane skeletal structures. J. Eng. Math. 4, 331–340 (1970)

[16] Maxwell, J.C.: On the calculation of the equilibrium and stiffness of frames. Lond. Edinb. Dublin Philos. Mag. J. Sci. 27(182), 294–299 (1864)

[17] Nixon, A., Ross, E.: Inductive Constructions for Combinatorial Local and Global Rigidity. Handbook of Geometric Constraint Systems Principles. CRC Press, Boca Raton (2018)

[18] Pollaczek-Geiringer, H.: Über die Gliederung ebener Fachwerke. ZAMM J. Appl. Math. Mech. 7(1927), 58–72 and 12 (1932), 369–376

[19] Steinitz, E., Rademacher, H.: Vorlesungen über die Theorie der Polyeder. Springer, Berlin (1934)

[20] Whiteley, W.: Cones, infinity and \(1\)-story buildings. Struct. Topol. 8, 53–70 (1983)

[21] Whiteley, W.: Vertex splitting in isostatic frameworks. Struct. Topol. 16, 23–30 (1990)

D.K. supported by the Engineering and Physical Sciences Research Council (Grant Numbers EP/P01108X/1 and EP/S00940X/1). S.D. supported by the Austrian Science Fund (FWF): P31888.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Dewar, S., Kitson, D. & Nixon, A. Which graphs are rigid in \(\ell _p^d\)?. J Glob Optim 83, 49–71 (2022). https://doi.org/10.1007/s10898-021-01008-z

DOI: https://doi.org/10.1007/s10898-021-01008-z