Abstract

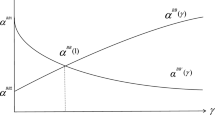

In this paper, we revisit the \(\alpha \)BB method for solving global optimization problems. We investigate the optimality of the scaling vector used in Gerschgorin’s inclusion theorem to calculate bounds on the eigenvalues of the Hessian matrix. We propose two heuristics to compute a good scaling vector \(d\), and state three necessary optimality conditions for an optimal scaling vector. Since the scaling vectors calculated by the presented methods satisfy all three optimality conditions, they serve as cheap but efficient solutions. A small numerical study shows that they are practically always optimal.
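
To make the role of the scaling vector \(d\) concrete, the following is a minimal sketch of the scaled Gerschgorin bound as it is commonly stated for \(\alpha \)BB: given elementwise bounds on the Hessian over a box, each \(\alpha_i\) is taken as \(\max \{0, -\tfrac{1}{2}(\underline{h}_{ii} - \sum_{j \ne i} |h|_{ij}\, d_j / d_i)\}\), where \(|h|_{ij}\) is the larger of the absolute values of the two bounds. The function name `scaled_gerschgorin_alpha` and its arguments are hypothetical and chosen for illustration only; the sketch does not implement the two heuristics for choosing \(d\) proposed in the paper, and it uses plain floating-point arithmetic rather than rigorous interval arithmetic.

```python
import numpy as np


def scaled_gerschgorin_alpha(H_lo, H_hi, d):
    """Return the alpha vector from the scaled Gerschgorin bound.

    H_lo, H_hi: elementwise lower/upper bounds of a symmetric interval
                Hessian (assumed inputs; how they are obtained is not shown).
    d:          strictly positive scaling vector.
    """
    H_lo = np.asarray(H_lo, dtype=float)
    H_hi = np.asarray(H_hi, dtype=float)
    d = np.asarray(d, dtype=float)
    if np.any(d <= 0):
        raise ValueError("scaling vector d must be strictly positive")

    # Magnitude of each interval entry: max(|lower|, |upper|).
    mag = np.maximum(np.abs(H_lo), np.abs(H_hi))
    # Only off-diagonal entries contribute to the Gerschgorin radius.
    np.fill_diagonal(mag, 0.0)

    # Scaled radius of row i: sum_{j != i} |h|_ij * d_j / d_i.
    radius = (mag @ d) / d

    # alpha_i = max(0, -1/2 * (lower diagonal bound - scaled radius)).
    return np.maximum(0.0, -0.5 * (np.diag(H_lo) - radius))
```

For instance, with lower bounds `[[-2, -1], [-1, 1]]`, upper bounds `[[0, 1], [1, 3]]`, and the uniform scaling `d = [1, 1]`, the sketch returns `[1.5, 0]`; changing the scaling to `d = [1, 2]` gives `[2, 0]`, which illustrates that the choice of \(d\) directly affects how tight the resulting underestimator is.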

Acknowledgments

The author was supported by the Czech Science Foundation Grant P402-13-10660S.

Cite this article

Hladík, M. On the efficient Gerschgorin inclusion usage in the global optimization \(\alpha \)BB method. J Glob Optim 61, 235–253 (2015). https://doi.org/10.1007/s10898-014-0161-7

DOI: https://doi.org/10.1007/s10898-014-0161-7