Abstract

Purpose

Responses provided by unmotivated survey participants in a careless, haphazard, or random fashion can threaten the quality of data in psychological and organizational research. The purpose of this study was to summarize existing approaches to detect insufficient effort responding (IER) to low-stakes surveys and to comprehensively evaluate these approaches.

Design/Methodology/Approach

In an experiment (Study 1) and a nonexperimental survey (Study 2), 725 undergraduates responded to a personality survey online.

Findings

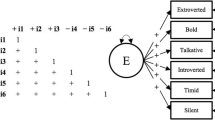

Study 1 examined warnings presented to respondents as a means of deterrence and showed the relative effectiveness of four indices for detecting insufficient effort (IE) responses: response time, long string, psychometric antonyms, and individual reliability coefficients. Study 2 demonstrated that the detection indices measured the same underlying construct and showed that psychometric properties (item interrelatedness, facet dimensionality, and factor structure) improved after IE respondents identified by each index were removed. Three approaches (response time, psychometric antonyms, and individual reliability) with high specificity and moderate sensitivity were recommended as candidates for future application in survey research.
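To make the long string index and the sensitivity/specificity language concrete, the following is a minimal sketch rather than the study's scoring procedure. It assumes the long string index is the longest run of identical consecutive answers in a protocol; the cutoff of 6, the toy protocols, and the function names are hypothetical and chosen only for illustration.

```python
from itertools import groupby


def longest_string(responses):
    """Length of the longest run of identical consecutive responses."""
    return max((sum(1 for _ in run) for _, run in groupby(responses)), default=0)


def sensitivity_specificity(flags, is_ier):
    """Sensitivity and specificity of binary flags against known group labels.

    Assumes both groups (IER and non-IER) contain at least one respondent.
    """
    tp = sum(f and y for f, y in zip(flags, is_ier))
    fn = sum((not f) and y for f, y in zip(flags, is_ier))
    tn = sum((not f) and (not y) for f, y in zip(flags, is_ier))
    fp = sum(f and (not y) for f, y in zip(flags, is_ier))
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical toy protocols on a 5-point scale (not data from the study).
protocols = [
    [1, 4, 2, 5, 3, 3, 2, 4, 1, 5],  # attentive
    [2, 2, 4, 1, 5, 3, 4, 2, 1, 3],  # attentive
    [3, 3, 3, 3, 3, 3, 3, 2, 3, 3],  # IER: same option repeated
    [5, 1, 4, 2, 5, 1, 3, 2, 4, 1],  # IER: random-looking, no long run
]
is_ier = [False, False, True, True]

CUTOFF = 6  # hypothetical cutoff; a real cutoff would be set empirically
flags = [longest_string(p) >= CUTOFF for p in protocols]
sensitivity, specificity = sensitivity_specificity(flags, is_ier)
```

In this toy example the index flags the repeated-option protocol but misses the random-looking one, which illustrates how a single index can combine high specificity with only moderate sensitivity.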

Implications

The identification of effective IER indices may help researchers ensure the quality of their low-stakes survey data.

Originality/value

This study is a first attempt to comprehensively evaluate IER detection methods using both experimental and nonexperimental designs. Results from the two studies corroborated each other in identifying the three more effective approaches. This study also provided convergent validity evidence regarding various indices for IER.

Notes

The extra item on each page, called a check item, represented a failed attempt to improve on the infrequency approach. Each check item instructed participants to select a particular response option, e.g., “Please select Moderately Inaccurate for this item”, and selecting any other response category would indicate IER. We excluded check items from this study because the check item index flagged an unusually high rate of IER, even in the group of motivated respondents in Cell 1 of Study 1, a result inconsistent with the survey instruction, the manipulation check, and the other indices. We suspect some respondents may have viewed the check items as personality items rather than as instructions. Additional analysis also revealed significant trait influence on responses to the check items, after controlling for the other IER indices.

When a severe departure from homogeneity of variance occurred, ANOVA results were verified using pairwise unequal-variance t-tests. For all repeated-measures ANOVAs, the Greenhouse–Geisser adjusted p value was reported when Mauchly’s W test for sphericity was significant.
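For readers unfamiliar with the unequal-variance (Welch) t-test mentioned in this note, a minimal sketch of the statistic and its Welch–Satterthwaite degrees of freedom follows. The function name and the two toy groups are hypothetical; the note does not say which software was used for the original analyses.

```python
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom.

    Uses sample variances (n - 1 denominator); each group needs n >= 2.
    """
    na, nb = len(a), len(b)
    va, vb = variance(a) / na, variance(b) / nb  # squared standard errors
    t = (mean(a) - mean(b)) / (va + vb) ** 0.5
    df = (va + vb) ** 2 / (va ** 2 / (na - 1) + vb ** 2 / (nb - 1))
    return t, df


# Hypothetical toy groups with clearly unequal variances.
group_a = [1, 2, 3, 4, 5]
group_b = [2, 4, 6, 8, 10]
t_stat, df = welch_t(group_a, group_b)
```

The p value would then be read from a t distribution with `df` degrees of freedom, which is generally not an integer.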

We explored the correlation between (a) the extent to which each of the 30 scales contained unequal numbers of positively and negatively worded items (i.e., |N positive − N negative|) and (b) the increase in Cronbach’s alpha on each scale after removal of suspected IER using the 99% specificity long string index. The result of r = −.33, p = .07, N = 30 suggests, albeit inconclusively, that IER in the form of long string responding had a stronger impact on scales with equal rather than unequal numbers of positively and negatively worded items.
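The two quantities correlated in this note can be sketched as follows. This is an illustration under stated assumptions, not the authors' code: item scores are assumed to be already reverse-scored where needed, and the function names and the toy scale are hypothetical.

```python
from statistics import mean, variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a respondents-by-items matrix (list of rows)."""
    k = len(rows[0])
    item_vars = [variance(col) for col in zip(*rows)]
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def wording_imbalance(n_positive, n_negative):
    """|N positive - N negative| for one scale."""
    return abs(n_positive - n_negative)


# Hypothetical 3-item scale: four consistent respondents plus one flagged row.
clean_rows = [[1, 1, 2], [2, 2, 2], [4, 4, 5], [5, 5, 4]]
flagged_row = [5, 1, 3]

alpha_all = cronbach_alpha(clean_rows + [flagged_row])
alpha_clean = cronbach_alpha(clean_rows)
alpha_gain = alpha_clean - alpha_all  # increase in alpha after removal
```

Repeating this for every scale would give one imbalance value and one alpha gain per scale, and `pearson_r` applied to those two lists would give a correlation of the kind reported in the note.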

Acknowledgments

We thank Goran Kuljanin for collecting data for the two studies. We are grateful for the constructive comments from Neal Schmitt and Ann Marie Ryan on an earlier draft of this article.

About this article

Cite this article

Huang, J.L., Curran, P.G., Keeney, J. et al. Detecting and Deterring Insufficient Effort Responding to Surveys. J Bus Psychol 27, 99–114 (2012). https://doi.org/10.1007/s10869-011-9231-8

DOI: https://doi.org/10.1007/s10869-011-9231-8