Abstract

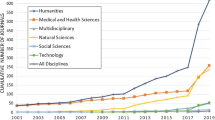

Far from allowing universities to be governed by the invisible hand of market forces, research performance assessments do not just measure differences in research quality; they themselves produce visible symptoms in the form of a stratification and standardization of disciplines. The article illustrates this with a case study of UK history departments and their assessment by the Research Assessment Exercise (RAE) and the Research Excellence Framework (REF), drawing on data from the three most recent assessments (RAE 2001, RAE 2008, REF 2014). Symptoms of stratification are documented by the distribution of memberships in assessment panels, of research-active staff, and of external research grants. Symptoms of standardization are documented by the publications submitted to the assessments. The main finding is that the RAEs/REF and the selective allocation of funds they inform consecrate and reproduce a disciplinary center that, in contrast to the periphery, is well endowed with grants and research staff, decides in panels on the quality standards of the field, and publishes a high number of articles in high-impact journals. This selectivity is oriented toward previous distributions of resources and a standardized notion of “excellence” rather than toward research performance.

Notes

Assessments of the evolution of the RAE/REF over the years abound (Bence and Oppenheim 2005; Martin and Whitley 2010). While the RAE 2001 is characterized above all by grade inflation and a subsequent, much more concentrated funding policy by the HEFCE, the main change in the RAE 2008 was the introduction of research profiles for each department, based on what proportion of its publications was judged to be of national or international quality. The most important novelty of the REF is that “output quality” (now weighted at 60 %) is supplemented with “impact” (25 %) and “research environment” (15 %) (REF 2011).

A complete list of journals can be requested from the author.

The flow of research staff should always be seen in proportion to absolute research positions. While a 55 % increase in research staff for the “bottom 6” of 2008 corresponds to an absolute growth of 15.8 FTE research positions, the 17 % increase of research staff at the “top 6” departments in the same period equals an absolute growth of 35.5 FTE research positions. Although the differences in relative staff increase (55 and 17 %) may indicate the contrary, the gap between both rank groups still grows in favor of the top rank group.
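The arithmetic behind this note can be retraced with a minimal sketch. It uses only the four figures quoted above; the function and variable names are illustrative, and the implied starting staff levels are not reported in the note but are backed out from rounded percentages, so they are approximations only.

```python
# Figures quoted in the note: relative and absolute growth in research staff.
growth = {
    "bottom 6": {"relative": 0.55, "absolute_fte": 15.8},
    "top 6": {"relative": 0.17, "absolute_fte": 35.5},
}


def implied_baseline(relative, absolute_fte):
    """Approximate starting FTE implied by a relative and an absolute increase.

    Assumption: relative = absolute_fte / baseline. Because the percentages
    in the note are rounded, the result is only a rough estimate.
    """
    return absolute_fte / relative


# Rough starting staff levels per rank group (hypothetical reconstruction).
baselines = {group: implied_baseline(**figures) for group, figures in growth.items()}

# Change in the absolute gap between the two groups. This depends only on
# the absolute increases, not on the estimated baselines.
gap_change = growth["top 6"]["absolute_fte"] - growth["bottom 6"]["absolute_fte"]

for group, baseline in baselines.items():
    print(f"{group}: implied baseline of roughly {baseline:.0f} FTE")
print(f"Gap between groups widens by {gap_change:.1f} FTE")
```

Under these assumptions the smaller percentage is applied to a much larger base, which is why the absolute gap widens (35.5 minus 15.8 FTE) even though the bottom group grows faster in relative terms.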

The extraordinary gap between the top and bottom groups in the RAE 2008 is caused by the financial position of the history department at UCL described in footnote 6.

The peculiar financial position of the history department at UCL in Fig. 1 is caused by an institutional exception: from 1966 to 2012, the UCL Centre for the History of Medicine was primarily funded by the Wellcome Trust. Accordingly, during the period in question the trust awarded the Centre two grants, which explain the exceptional position of UCL in terms of external research funds (RAE 2008c).

A less pronounced relation could be found between book chapters and rank group; no distinct relation could be found between edited books and rank group. The data for chapters and edited volumes can be requested from the author.

This is particularly remarkable since the history panel of the REF 2014 vowed not to “privilege any journal or conference rankings/lists, the perceived standing of the publisher or the medium of publication, or where the research output is published” (REF 2012: 87).

References

Archambault, É., Vignola Gagné, É., Côté, G., Larivière, V., & Gingras, Y. (2006). Benchmarking scientific output in the social sciences and humanities: the limits of existing databases. Scientometrics, 68(3), 329–342.

Bence, V., & Oppenheim, C. (2005). The evolution of the UK’s Research Assessment Exercise: Publications, performance and perceptions. Journal of Educational Administration and History, 37(2), 137–155.

Benner, M., & Sandström, U. (2000). Institutionalizing the triple helix: research funding and norms in the academic system. Research Policy, 29(2), 291–301.

Bourdieu, P. (1986). The forms of capital. In J. G. Richardson (Ed.), Handbook of Theory and Research for the Sociology of Education (pp. 241–258). New York: Greenwood Press.

Bourdieu, P. (1988). Homo Academicus. Cambridge: Polity Press.

Brown, R., & Carasso, H. (2013). Everything for Sale? The marketisation of UK higher education. London: Routledge.

Burris, V. (2004). The academic caste system: Prestige hierarchies in PhD exchange networks. American Sociological Review, 69(2), 239–264.

Campbell, D. T. (1979). Assessing the impact of planned social change. Evaluation and Program Planning, 2(1), 67–90.

Campbell, K., Vick, D. W., Murray, A. D., & Little, G. F. (1999). Journal publishing, journal reputation, and the United Kingdom’s Research Assessment Exercise. Journal of Law and Society, 26(4), 470–501.

Cole, J. R., & Cole, S. (1973). Social Stratification in Science. Chicago: University of Chicago Press.

Davis, K., & Moore, W. E. (1944). Some principles of stratification. American Sociological Review, 10(2), 242–249.

Deem, R., Hillyard, S., & Reed, M. (2008). Knowledge, higher education, and the new managerialism: The changing management of UK universities. Oxford: Oxford University Press.

Elton, L. (2000). The UK Research Assessment Exercise: Unintended consequences. Higher Education Quarterly, 54(3), 274–283.

Espeland, W. N., & Sauder, M. (2007). Rankings and reactivity. How public measures recreate social worlds. American Journal of Sociology, 113(1), 1–40.

Foucault, M. (2010). The government of self and others. Lectures at the Collège de France 1982–1983. New York: Palgrave Macmillan.

Gengnagel, V., & Hamann, J. (2014). The making and persisting of modern German humanities. Balancing acts between autonomy and social relevance. In R. Bod, J. Maat, & T. Weststeijn (Eds.), The making of the humanities III. The modern humanities (pp. 641–654). Amsterdam: Amsterdam University Press.

Geuna, A., & Martin, B. R. (2003). University research evaluation and funding: An international comparison. Minerva, 41(4), 277–304.

Hamann, J. (2014). Die Bildung der Geisteswissenschaften. Zur Genese einer sozialen Konstruktion zwischen Diskurs und Feld. Konstanz: UVK.

Hare, P. G. (2003). The United Kingdom’s Research Assessment Exercise: Impact on institutions, departments, individuals. Higher Education Management and Policy, 15(2), 43–61.

Harley, S. (2002). The impact of research selectivity on academic work and identity in UK universities. Studies in Higher Education, 27(2), 187–205.

Harley, S., & Lee, F. S. (1997). Research selectivity, managerialism, and the academic labor process: The future of nonmainstream economics in UK universities. Human Relations, 50(11), 1427–1460.

Harman, G. (2005). Australian social scientists and transition to a more commercial university environment. Higher Education Research & Development, 24(1), 79–94.

Hazelkorn, E. (2007). The Impact of league tables and ranking systems on higher education decision making. Higher Education Management and Policy, 19(2), 1–24.

Henkel, M. (1999). The modernisation of research evaluation: The case of the UK. Higher Education, 38, 105–122.

Hicks, D. (2012). Performance-based university research funding systems. Research Policy, 41(2), 251–261.

Kehm, B. M., & Leišytė, L. (2010). Effects of new governance on research in the humanities. The example of medieval history. In D. Jansen (Ed.), Governance and performance in the German Public Research Sector. Disciplinary differences (pp. 73–90). Berlin: Springer.

Laudel, G. (2005). Is external research funding a valid indicator for research performance? Research Evaluation, 14(1), 27–34.

Lee, F. S. (2007). The Research Assessment Exercise, the state and the dominance of mainstream economics in British universities. Cambridge Journal of Economics, 31(2), 309–325.

Lee, F. S., & Harley, S. (1998). Peer review, the Research Assessment Exercise and the demise of non-mainstream economics. Capital & Class, 22(3), 23–51.

Lee, F. S., Pham, X., & Gu, G. (2013). The UK Research Assessment Exercise and the narrowing of UK economics. Cambridge Journal of Economics, 37(4), 693–717.

Leišytė, L., & Westerheijden, D. (2014). Research Evaluation and Its Implications for Academic Research in the United Kingdom and the Netherlands. Discussion Papers des Zentrums für HochschulBildung, Technische Universität Dortmund, 2014(1), 3–32.

Lucas, L. (2006). The Research Game in Academic Life. Maidenhead: Open University Press.

Martin, B. R., & Whitley, R. D. (2010). The UK Research Assessment Exercise. A case of regulatory capture? In R. D. Whitley, J. Gläser, & L. Engwall (Eds.), Reconfiguring knowledge production. Changing authority relationships in the sciences and their consequences for intellectual innovation (pp. 51–80). Oxford: Oxford University Press.

Merton, R. K. (1968). The Matthew effect in science. Science, 159(3810), 56–63.

Merton, R. K. (1973). The sociology of science. Theoretical and empirical investigations. Chicago: University of Chicago Press.

Moed, H. F. (2008). UK Research Assessment Exercises: Informed judgments on research quality or quantity? Scientometrics, 74(1), 153–161.

Morgan, K. J. (2004). The research assessment exercise in English universities, 2001. Higher Education, 48, 461–482.

Morrissey, J. (2013). Governing the academic subject: Foucault, governmentality and the performing university. Oxford Review of Education, 39(6), 797–810.

Münch, R. (2008). Stratifikation durch Evaluation. Mechanismen der Konstruktion und Reproduktion von Statushierarchien in der Forschung. Zeitschrift für Soziologie, 37(1), 60–80.

Münch, R., & Schäfer, L. O. (2014). Rankings, diversity and the power of renewal in science. A comparison between Germany, the UK and the US. European Journal of Education, 49(1), 60–76.

Power, M. (1997). The Audit Society. Rituals of verification. Oxford: Oxford University Press.

RAE. (1992). Universities Funding Council. Research Assessment Exercise 1992: The Outcome. Circular 26/92 Table 62, History. http://www.rae.ac.uk/1992/c26_92t62.html. Accessed 08 Aug 2015.

RAE. (1996). 1996 Research Assessment Exercise. Unit of assessment: 59 History. http://www.rae.ac.uk/1996/1_96/t59.html. Accessed 08 Aug 2015.

RAE. (2001a). 2001 Research Assessment Exercise. Unit of Assessment: 59 History. http://www.rae.ac.uk/2001/results/byuoa/uoa59.htm. Accessed 08 Aug 2015.

RAE. (2001b). Panel list history. http://www.rae.ac.uk/2001/PMembers/Panel59.htm. Accessed 08 Aug 2015.

RAE. (2001c). Section III: Panels’ criteria and working methods. http://www.rae.ac.uk/2001/pubs/5_99/ByUoA/Crit59.htm. Accessed 08 Aug 2015.

RAE. (2001d). Submissions, UoA history. http://www.rae.ac.uk/2001/submissions/Inst.asp?UoA=59. Accessed 08 Aug 2015.

RAE. (2001e). What is the RAE 2001? http://www.rae.ac.uk/2001/AboutUs/. Accessed 08 Aug 2015.

RAE. (2008a). RAE 2008 panels. http://www.rae.ac.uk/aboutus/panels.asp. Accessed 08 Aug 2015.

RAE. (2008b). RAE 2008 quality profiles UOA 62 history. http://www.rae.ac.uk/results/qualityProfile.aspx?id=62&type=uoa. Accessed 08 Aug 2015.

RAE. (2008c). RAE 2008 submissions, UOA 62 history. http://www.rae.ac.uk/submissions/submissions.aspx?id=62&type=uoa. Accessed 08 Aug 2015.

REF. (2011). Assessment framework and guidance on submissions. http://www.ref.ac.uk/media/ref/content/pub/assessmentframeworkandguidanceonsubmissions/GOS%20including%20addendum.pdf. Accessed 08 Aug 2015.

REF. (2012). Panel criteria and working methods, Part 2D: Main panel D criteria. http://www.ref.ac.uk/media/ref/content/pub/panelcriteriaandworkingmethods/01_12_2D.pdf. Accessed 08 Aug 2015.

REF. (2014a). Panel membership, Main panel D and sub-panels 27-36. http://www.ref.ac.uk/media/ref/content/expanel/member/Main%20Panel%20D%20membership%20%28Sept%202014%29.pdf. Accessed 08 Aug 2015.

REF. (2014b). REF 2014 results & submissions, UOA 30—History. http://results.ref.ac.uk/Results/ByUoa/30. Accessed 08 Aug 2015.

Royal Society. (2009). Journals under threat: a joint response from history of science, technology and medicine authors. Qualität in der Wissenschaft, 34(4), 62–63.

Sayer, D. (2014). Rank Hypocrisies. The Insult of the REF. New York et al.: Sage.

Sharp, S., & Coleman, S. (2005). Ratings in the Research Assessment Exercise 2001—The patterns of university status and panel membership. Higher Education Quarterly, 59(2), 153–171.

Slaughter, S., & Leslie, L. L. (1999). Academic Capitalism: Politics, Policies, and the Entrepreneurial University. Baltimore: Johns Hopkins University Press.

Strathern, M. (1997). ‘Improving ratings’: audit in the British University system. European Review, 5(3), 305–321.

Talib, A. A. (2001). The Continuing behavioural modification of academics since the 1992 Research Assessment Exercise. Higher Education Review, 33(3), 30–46.

Tapper, T., & Salter, B. (2002). The external pressures on the internal governance of universities. Higher Education Quarterly, 56(3), 245–256.

Tapper, T., & Salter, B. (2004). Governance of higher education in Britain: The significance of the Research Assessment Exercise for the funding council model. Higher Education Quarterly, 58(1), 4–30.

Teixeira, P., Jongbloed, B. W., Dill, D., & Amaral, A. (Eds.). (2004). Markets in higher education. Rhetoric or reality? Dordrecht: Springer.

The Past Speaks. (2011). Rankings of history journals. http://pastspeaks.com/2011/06/15/erih-rankings-of-history-journals/. Accessed 08 Aug 2015.

Times Higher Education. (2008). Historians decry journal rankings. 04 Jan 2008.

Weber, M. (1978). Economy and society, 2 vol. Berkeley, Los Angeles: University of California Press.

Whitley, R. D., Gläser, J., & Engwall, L. (Eds.). (2010). Reconfiguring knowledge production. Changing authority relationships in the sciences and their consequences for intellectual innovation. Oxford: Oxford University Press.

Willmott, H. (2011). Journal list fetishism and the perversion of scholarship: reactivity and the ABS list. Organization, 18(4), 429–442.

Wooding, S., van Leeuwen, T. N., Parks, S., Kapur, S., & Grant, J. (2015). UK doubles its “World-Leading” research in life sciences and medicine in six years: Testing the claim? PLoS One, 10(7), e0132990.

Yokoyama, K. (2006). The effect of the research assessment exercise on organisational culture in English universities: collegiality versus managerialism. Tertiary Education and Management, 12(4), 311–322.

Zuccala, A., Guns, R., Cornacchia, R., & Bod, R. (2014). Can we rank scholarly book publishers? A bibliometric experiment with the field of history. Journal of the American Society for Information Science and Technology, 66(7), 1333–1347.

Ethics declarations

Conflict of interest

The author declares that he has no conflict of interest.

Cite this article

Hamann, J. The visible hand of research performance assessment. High Educ 72, 761–779 (2016). https://doi.org/10.1007/s10734-015-9974-7

DOI: https://doi.org/10.1007/s10734-015-9974-7