Abstract

Deep convolutional networks have significantly improved smoke image recognition. However, the trained, spatially shared weights are applied to every pixel irrespective of the image content at that position, which may be suboptimal for handling the wide variation of smoke in shape, texture and color. To address this, we propose a deep convolutional network with pixel-aware attention for smoke recognition. A pixel-aware attention module is devised to modify the standard convolution in a pixel-specific fashion. The learned weights are dynamically conditioned on the pixels of the smoke image and adaptively recalibrate the features of each pixel along the feature channels, thereby enriching the feature representation space. We then build a simple and efficient deep convolutional network from pixel-aware attention modules to recognize smoke images. Experiments on the publicly available smoke recognition database show that the proposed network achieves an average detection rate exceeding 98.3%, superior to state-of-the-art competitors. Furthermore, our network uses only 0.3M learnable parameters and 90M FLOPs.
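The abstract does not spell out the internal structure of the pixel-aware attention module, so the following is only a rough sketch of the general idea of recalibrating each pixel's features along the channel axis. It assumes a per-pixel gate computed by a small two-layer bottleneck (equivalent to two 1×1 convolutions) followed by a sigmoid; the function name `pixel_aware_attention`, the weights `w1` and `w2`, and the bottleneck width `R` are all hypothetical and not taken from the paper.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def matvec(w, x):
    # Multiply a matrix (list of rows) by a vector.
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def pixel_aware_attention(feat, w1, w2):
    """Rescale the channels of every pixel by a gate computed from that pixel.

    feat: H x W x C nested lists. w1: R x C, w2: C x R (assumed shapes).
    Unlike a spatially shared channel weight, each position gets its own gate.
    """
    out = []
    for row in feat:
        out_row = []
        for x in row:
            hidden = [max(0.0, h) for h in matvec(w1, x)]    # ReLU bottleneck
            gate = [sigmoid(g) for g in matvec(w2, hidden)]  # one gate per channel
            out_row.append([xi * gi for xi, gi in zip(x, gate)])
        out.append(out_row)
    return out


# Toy input: a 2 x 3 feature map with 4 channels and random (untrained) weights.
random.seed(0)
H, W, C, R = 2, 3, 4, 2
feat = [[[random.uniform(-1, 1) for _ in range(C)] for _ in range(W)] for _ in range(H)]
w1 = [[random.uniform(-1, 1) for _ in range(C)] for _ in range(R)]
w2 = [[random.uniform(-1, 1) for _ in range(R)] for _ in range(C)]
out = pixel_aware_attention(feat, w1, w2)
```

Because the gate depends on the feature vector at each position, two pixels with different content are rescaled differently even though `w1` and `w2` are shared across the image. A practical implementation would express the same computation as batched tensor operations in a deep learning framework rather than nested Python loops.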

References

Geetha S, Abhishek CS, Akshayanat CS (2020) Machine vision based fire detection techniques: a survey. Fire Technol 57(4):591–623

Gaur A, Singh A, Kumar A, Kumar A, Kapoor K (2020) Video flame and smoke based fire detection algorithms: a literature review. Fire Technol 56(5):1943–1980

Yuan F, Shi J, Xia X, Yang Y, Wang R (2016) Sub oriented histograms of local binary patterns for smoke detection and texture classification. KSII Trans Internet Inf Syst 10(4):1807–1823

Yuan F, Shi J, Xia X, Yang Y, Fang Y, Fang Z, Mei T (2016) High-order local ternary patterns with locality preserving projection for smoke detection and image classification. Inf Sci 372:225–240

Dubey SR, Singh SK, Singh RK (2016) Multichannel decoded local binary patterns for content-based image retrieval. IEEE Trans Image Process 25(9):4018–4032

Alamgir N, Nguyen K, Chandran V, Boles W (2018) Combining multi-channel color space with local binary co-occurrence feature descriptors for accurate smoke detection from surveillance videos. Fire Saf J 102(12):1–10

Gubbi J, Marusic S, Palaniswami M (2009) Smoke detection in video using wavelets and support vector machines. Fire Saf J 44(8):1110–1115

Ferrari RJ, Zhang H, Kube CR (2007) Real-time detection of steam in video images. Pattern Recogn 40(3):1148–1159

Krizhevsky A, Sutskever I, Hinton GE (2017) Imagenet classification with deep convolutional neural networks. Commun ACM 60(6):84–90

Geng J, Wang H, Fan J, Ma X (2017) Deep supervised and contractive neural network for SAR image classification. IEEE Trans Geosci Romote Sens 55(4):2442–2459

Szeged C, Liu W, Ji Y, Sermanet P, Reed S (2015) Going deeper with convolutions. In: Proceedings of the IEEE conference on computer vision and pattern recognition (CVPR), pp 1–9

He K, Zhang X, Ren S, Sun J (2016) Deep residual learning for image recognition. In: Proceedings of the IEEE conference on computer vision and pattern recognition (CVPR), pp 770–778

Yin Z, Wang B, Yuan F, Xia X, Shi J (2017) A deep normalization and convolutional neural network for image smoke detection. IEEE Access 5:18429–18438

Yuan F, Zhang L, Wan B, Xia X, Shi J (2019) Convolutional neural networks based on multi-scale additive merging layers for visual smoke recognition. Mach Vis Appl 30(2):345–358

Liu Y, Qin W, Liu K, Fang Z, Xiao Z (2019) A dual convolution network using dark channel prior for image smoke classification. IEEE Access 7:60697–60706

Pundir AS, Raman B (2019) Dual deep learning model for image based smoke detection. Fire Technol 55(6):2419–2442

Gu K, Xia Z, Qiao J, Lin W (2020) Deep dual-channel neural network for image-based smoke detection. IEEE Trans Multimedia 22(2):311–323

Zhang F, Qin W, Liu Y, Xiao Z, Liu K (2020) A dual-channel convolution neural network for image smoke detection. Multimedia Tools Appl 79(8):34587–34603

Selvaraju R, Cogswell M, Das A, Vedantam R, Parikh D, Batra D (2020) Grad-cam: visual explanations from deep networks via gradient-based localization. Int J Comput Vis 128(2):336–359

Simonyan K, Zisserman A (2014) Very deep convolutional networks for large-scale image recognition. Comput Sci. arXiv:1409.1556

Huang G, Liu Z, Laurens V, Weinberger KQ (2017) Densely connected convolutional networks. In: Proceedings of the IEEE conference on computer vision and pattern recognition (CVPR), pp 1–8

Wang F, Jiang M, Chen Q, Yang S, Tang X (2017) Residual attention network for image classification. In: Proceedings of the IEEE conference on computer vision and pattern recognition (CVPR), pp 6450–6458

Zhu X, Cheng D, Zhang Z, Lin S, Dai J (2019) An empirical study of spatial attention mechanisms in deep networks. In: Proceedings of the IEEE/CVF international conference on computer vision (ICCV), pp 6687–6696

Jie H, Li S, Albanie S, Gang S, Enhua W (2020) Squeeze-and-excitation networks. IEEE Trans Pattern Anal Mach Intell 42(8):2011–2023

Irwan B, Barret Z, Ashish V, Jonathon S (2019) Attention augmented convolutional networks. In: Proceedings of the IEEE international conference on computer vision (ICCV), pp 3286–3295

Zhao H, Jia J, Koltun V (2020) Exploring self-attention for image recognition. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (CVPR), pp 10076–10085

Ashish V, Noam S, Niki P, Jakob U, Llion J, Aidan NG, Lukasz K (2016) Attention is all you need. In: Proceedings of the intererational conference on neural information processing systems (NIPS), pp 6000–6010

Yi T, Mostafa D, Bahri D, Metzler D (2020) Efficient transformers: a survey. arXiv:2009.06732

Khan S, Naseer M, Hayat M, Zamir S, Khan F, Shah M (2021) Transformers in vision: a survey. arXiv:210101169v2

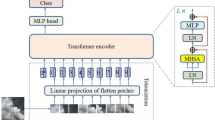

Dosovitskiy A, Beyer L, Kolesnikov A, Weissenborn D, Zhai X, Unterthiner T, Dehghani M, Minderer M, Heigold G, Gelly S, Uszkoreit JNH (2021) An image is worth \(16\times 16\) words: Transformers for image recognition at scale. In: Proceedings of the international conference on learning representations (ICLR)

Liu Z, Lin Y, Cao Y, Hu H, Wei Y, Zhang Z, Lin S, Guo B (2021) Swin transformer: Hierarchical vision transformer using shifted windows. In: Proceedings of the IEEE international conference on computer vision (ICCV), pp. 10012–10022

Touvron H, Cord M, Matthijs D, Massa F, Sablayrolles A, Jegou H (2021) Training data-efficient image transformers & distillation through attention. In: Proceedings of the international conference on learning representations (ICLR), pp. 10347–10357

Frizzi S, Kaabi R, Bouchouicha M (2016) Convolutional neural network for video fire and smoke detection. In: Proceedings of the IECON 2016-42nd annual conference of the ieee industrial electronics society, pp 877–882

Xu G, Zhang Y, Zhang Q, Lin G, Wang Z, Jia Y, Wang J (2019) Video smoke detection based on deep saliency network. Fire Saf J 105(4):277–285

Li C, Yang B, Ding H, Shi H, Jiang X, Sun J (2020) Real-time video-based smoke detection with high accuracy and efficiency. Fire Saf J 117(8):103184

Cao Y, Yang F, Tang Q, Lu X (2019) An attention enhanced bidirectional ISTM for early forest fire smoke recognition. IEEE Access 7:154732–154742

Ioffe S, Szegedy C (2015) Batch normalization: Accelerating deepnetwork training by reducing internal covariate shift. In: Proceedings of the international conference on machine learning, pp 448–456

He K, Zhang X, Ren S, Sun J (2015) Delving deep into rectifiers: Surpassing human-level performance on imagenet classification. In: Proceedings of the IEEE conference on computer vision (ICCV), pp 1–13

Ding X, Zhang X, Ma N, Han J, Ding G, Sun J (2021) Repvgg: Making vgg-style convnets great again. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (CVPR), pp 13733–13742

Szegedy C, Ioffe S, Vanhoucke V, Alemi AA (2017) Inception-v4, inception-resnet and the impact of residual connections on learning. In: Proceedings of the Thirty-first AAAI Conference on Artificial Intelligence, pp 4278–4284

Yuan F, Li G, Xia X, Lei B (2019) Fusing texture, edge and line features for smoke recognition. IET Image Proc 13(14):2805–2812

Shorten C, Khoshgoftaar TM (2019) A survey on image data augmentation for deep learning. J Big Data 6(1):1–48

Huang S, Lin C, Chen S, Wu Y, Lai S (2018) Auggan: Cross domain adaptation with gan-based data augmentation. In: Proceedings of the European conference on computer vision (ECCV), pp 731–744

Tan C, Sun F, Kong T, Xhang W, Yang C, Liu C (2018) A survey on deep transfer learning. In: Proceedings of the international conference on artifical neural networks, pp 270–279

Acknowledgements

This work was supported in part by the National Key R&D Program of China under Grant 2020YFC1522600 and Grant 2017YFC0821006-3, and in part by Fundamental Research Funds for the Central Universities under Grant D2020028 and Grant 2018QNZX14.

Cite this article

Cheng, G., Chen, X. & Gong, J. Deep Convolutional Network with Pixel-Aware Attention for Smoke Recognition. Fire Technol 58, 1839–1862 (2022). https://doi.org/10.1007/s10694-022-01231-4

DOI: https://doi.org/10.1007/s10694-022-01231-4