Abstract

In this essay, I review some results that suggest that rational choice theory has interesting things to say about the virtues. In particular, I argue, first, that rational choice theory can show the role of certain virtues in a game-theoretic analysis of norms and, second, that it is useful in the characterization of these virtues. Finally, I discuss how rational choice theory can be brought to bear upon the justification of these virtues by showing how they contribute to a flourishing life. I do this by discussing one particular example of a norm, the requirement that agents honor their promises of mutual assistance, and one particular virtue, trustworthiness.

Notes

For example, Hursthouse (1996).

E.g., Broome (1991).

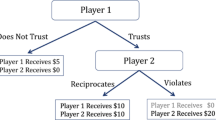

Both agents are supposed to select their strategies in advance and then carry them out as they move through Fig. 1.

This stands for the strategy ‘choose C if A cooperates; choose C if A defects.’

There are some deep and fundamental puzzles about what kind of knowledge agents are supposed to have in order for backward induction in situations such as these to be possible in the first place and which choice of strategy in the end can be justified through backward induction. For a nice overview of the literature, as well as a critical evaluation, see (De Bruin 2004).

Some would argue that this is a very plausible assumption in the light of real-world situations of mutual assistance. Perhaps this is exactly what the situation looks like in the eyes of the participants. However, it is not an entirely innocent assumption that gives credence to the model. For I also assumed that p is constant. That is, I assume that in each round the prospects of future gains outweigh the temptation to defect. This is a very strong assumption, for, as we shall see, it ensures that backward induction reasoning will not result in defection. Thanks to an anonymous referee for pressing me on this point.

See Axelrod (1984). Tit-for-tat (TFT) is usually described for the synchronous version of the prisoner’s dilemma. In the asynchronous context, it needs to be specified separately depending on whether one is first to move (A) or second (B). TFT then says that the A-player should start by offering cooperation in round 1 and repeat the previous move of the other agent in each subsequent round. The B-player should simply repeat the move of the other agent.
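The two halves of asynchronous TFT described in this note can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the `a_error_round` parameter, and the idea of injecting a single mistaken defection by A are hypothetical and are not taken from the essay or from Axelrod.

```python
def tft_first_mover(b_history):
    """A-player: offer cooperation in round 1, then repeat B's previous move."""
    return "C" if not b_history else b_history[-1]


def tft_second_mover(a_move):
    """B-player: repeat the move A has just made in the current round."""
    return a_move


def play(rounds, a_error_round=None):
    """Let two TFT players meet for a number of rounds.

    a_error_round is a hypothetical device: in that round A defects by
    mistake, whatever TFT prescribes.
    """
    b_history = []
    outcome = []
    for t in range(1, rounds + 1):
        a_move = tft_first_mover(b_history)
        if t == a_error_round:
            a_move = "D"  # assumed one-off mistake by A
        b_move = tft_second_mover(a_move)
        b_history.append(b_move)
        outcome.append((a_move, b_move))
    return outcome


if __name__ == "__main__":
    print(play(4))                   # undisturbed play
    print(play(5, a_error_round=3))  # play with one mistake in round 3
```

On this reading of the rules, undisturbed play stays at mutual cooperation, whereas a single mistaken defection is copied by B and then by A in every later round.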

In technical terms: TFT is a Nash equilibrium, but not an evolutionarily stable strategy. See Smith (1982).

In Axelrod’s computer tournament exactly such a deadlock ending in continuous mutual defection happened between TFT and the strategy called JOSS. JOSS played tit-for-tat but defected occasionally (10% of the time). One could say, it made a mistake 10% of the time. See Axelrod (1984, 36–38).

What follows is a version of Robert Sugden’s proof that T-strategies are evolutionarily stable equilibria. I discuss this proof at length in Verbeek (2002).

However, not all strategies of the class T are uniquely best replies against themselves. If n is very large, it may pay to play ‘Always Defect’ against such a strategy, depending on the chance of future interactions and the values of b and c. In general, $T_n$ is the best reply against itself provided $p^n > b/c$. See Sugden (1986, 115).
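The condition in this note can be checked numerically. The sketch below reads the inequality as p raised to the power n being greater than b/c, takes n to start at 1, and uses made-up values for p, b and c; all of these are assumptions for illustration, not figures from Sugden or from the essay.

```python
def is_best_reply_to_itself(n, p, b, c):
    """Check the note's condition, read as p**n > b / c."""
    return p ** n > b / c


def largest_stable_n(p, b, c, n_max=1000):
    """Largest n in 1..n_max that satisfies the condition, or None.

    For 0 < p < 1 the value p**n shrinks as n grows, so the condition
    eventually fails for large n.
    """
    stable = [n for n in range(1, n_max + 1) if is_best_reply_to_itself(n, p, b, c)]
    return max(stable) if stable else None


if __name__ == "__main__":
    # Hypothetical values: continuation chance 0.9 and b / c = 0.5.
    print(largest_stable_n(p=0.9, b=1.0, c=2.0))
```

With these hypothetical values the condition holds up to n = 6 (0.9^6 ≈ 0.531) and fails from n = 7 onwards (0.9^7 ≈ 0.478), which illustrates why a very large n can make ‘Always Defect’ attractive.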

See also Verbeek (2007).

Therefore, though the analysis is clearly related to the standard evolutionary game-theoretic analysis of norms, I deviate from it in two ways. First, on my analysis norms are patterns of convergent expectations, rather than patterns of convergent behavior. Secondly, the agents that have these expectations are capable of reasoning in a strategic fashion about these expectations, whereas the agents in contemporary evolutionary game theory are often portrayed as arational creatures.

I explain this in greater detail in Verbeek (2007).

This echoes Hart’s (1994, 89) analysis of norms and his concept of the ‘internal point of view’. This ‘internal point of view’ consists inter alia in regarding such reactions to norm violations as fitting or proper. See also the discussion in Verbeek (2002, 63–81). Similar points can be found in Baurmann (2001).

See Strawson (1962).

See Verbeek (2002, 69–70).

In Verbeek (2002, 89–93) I argue that there are at least two phenomenologically distinguishable forms of altruism. First, there is ‘sympathetic altruism’, which is the result of ‘feeling along’ with the person(s) one is sympathetic towards. Second, there is ‘sacrificing altruism’, where one does not ‘feel along’ with the suffering of the other, but nevertheless gives up one’s own interests. This may be how the utilitarians thought of the Greatest Happiness Principle and how Kant thought of the duty of benevolence.

The normal form representation of a game consists of a matrix of the combination of all the strategies of each player.

Note that this implicitly assumes that utility measures are interpersonally comparable. This is a contentious assumption. However, there are ways to generate such measures which do not suffer from the standard objections to interpersonal comparisons. See for example Broome (1991).

There is a straightforward argument to the same conclusion. An altruist cooperates in this game, regardless of what he can expect about the behavior of the other. That is, the fact that he made a promise to help is totally irrelevant to his decision whether or not to assist B. This is why an altruist does not comply with the norm to honor one’s promise. See Verbeek (2002, 93–99).

To be precise, let r be the rate of transfer. If r < c/b, then (D, CC/DD) and (D, CD/DD) are in equilibrium. If r > c/b, then (D, CC/DC) and (C, CC/DD) are in equilibrium. There is no r such that (C, CC/DD), and only (C, CC/DD), is in equilibrium.

This is by no means the only plausible account of trust, if it is plausible to begin with. In the literature there are those who argue that trust is a cognitive state, e.g., Hardin (1993). Others favor a more affective account of trust, e.g., Pettit (1995), Jones (1996) and Lahno (2002), or some kind of hybrid of these two, e.g., Baier (1986). It might seem that the specific notion of trust that I am suggesting here is just a cognitive one. Trust is characterized here in the first place as a kind of dispositional notion. Several authors have criticized such a cognitive account of trust (see Lahno 2002 for a great discussion). These authors typically argue for a more emotional, non-cognitive account of trust. I agree with these critics that the mere characterization of trust as a disposition to expect a certain kind of behavior does not do full justice to the concept, just as a purely behavioral account of norms misses crucial elements of norms. Trust is bound up with dispositions of gratitude and resentment, approval and disapproval. That is to say, trust is conjoined with cognitive as well as non-cognitive elements.

Harrington (1989).

Hursthouse (1999).

Neo-Aristotelians differ, however, on exactly how the relation between virtue and flourishing is to be conceptualized. All neo-Aristotelians believe that the virtuous life is the good life, but opinions differ as to how the virtues bring this about. See Hursthouse (2009).

A similar observation can be found in MacIntyre (1981).

Strawson (1962).

Essentially, but, of course, not exclusively, for many other elements have a complex social life. The identification of the human life with a social life is a common theme in Aristotelian thinking.

Nicomachean Ethics 1106b36-1107a1.

Hume (1998, p. 85–87). Also “We do not infer a character to be virtuous, because it pleases: But in feeling that it pleases after such a particular manner, we in effect feel that it is virtuous.” Hume (2001, III.1.ii). A contemporary author who is inspired by Hume’s way of thinking of the virtues is Slote (2001).

To be precise, it is an ‘artificial virtue’: the approval it attracts is not for the acts of a trustworthy agent, rather for the contributions such acts in general make to a beneficial practice. See Hume (2001, III.2.i–ii).

Note that these types of situations are exactly the sort of situations where the need for the cooperative virtues is highest: situations where an appeal to mutual rationality is not sufficient to ensure continuing cooperation.

If the intervals do not overlap at all, $S_i$ is a guarantee for the honesty or dishonesty of i, and if the intervals overlap completely, $S_i$ is totally unreliable as a signal of i’s disposition. Harrington (1989) shows how important the assumption is. If this does not hold, it no longer follows that trustworthy agents will do better than untrustworthy ones.

The distributions Frank uses are derived from two identically shaped normal distributions around $\mu_D = 2$ and $\mu_H = 3$. These are “cut off” at two standard deviations from the mean and then renormalized. The result is two partly overlapping bell curves. See Frank (1987, 263).
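The construction in this note (a normal density cut off at two standard deviations and renormalized) can be sketched as follows. The standard deviation of 1, the function names, and the Bayesian update at the end are assumptions added for illustration; the note itself only gives the two means, 2 and 3, and the cut-off.

```python
import math


def normal_pdf(x, mu, sigma):
    """Density of an ordinary normal distribution."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def truncated_pdf(x, mu, sigma=1.0, k=2.0):
    """Normal density cut off at k standard deviations and renormalized.

    sigma=1.0 is an assumed value; the note does not state it.
    """
    if abs(x - mu) > k * sigma:
        return 0.0
    mass = math.erf(k / math.sqrt(2.0))  # probability mass within k sigma
    return normal_pdf(x, mu, sigma) / mass


def posterior_high(s, share_high, mu_low=2.0, mu_high=3.0):
    """Probability that signal s comes from the higher-mean group.

    share_high is a hypothetical population share of that group.
    Returns None if s lies outside both truncated supports.
    """
    high = share_high * truncated_pdf(s, mu_high)
    low = (1.0 - share_high) * truncated_pdf(s, mu_low)
    if high + low == 0.0:
        return None
    return high / (high + low)


if __name__ == "__main__":
    for s in (0.5, 2.5, 4.5):
        print(s, posterior_high(s, share_high=0.5))
```

Under these assumptions the two supports are [0, 4] and [1, 5]. A signal in the non-overlapping tails settles which group the agent belongs to, while a signal in the overlap [1, 4] only shifts the probability.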

S* also depends on the shape of the distributions of $S_H$ and $S_D$. See also Harrington (1989).

Harrington (1989) points out that this can only be true in a sufficiently small population. In an infinite population, if , the chance that the other is an honest agent is infinitesimally small regardless of his value of S. However, in the case of the farmers, it seems to me that real life cases of such agricultural communities are sufficiently small.

This is the case only in virtue of his assumptions about $f_U$ and $f_T$, and the fraction c/b-c. Frank introduces a further refinement to the model, by assuming that the observation of people’s S-value is not costless, which results in a more robust equilibrium. I will not go into the refined model here.

For related criticism, see Morris (1999).

Frank (1988, 84).

Many thanks to Christoph Lumer and two anonymous referees for Ethical Theory and Moral Practice for their comments, criticisms and suggestions for improvement.

References

Ainslie G (1975) Specious reward: A behavioral theory of impulsiveness and impulse control. Psychological Bulletin 21:483–489

Ainslie G (1982) A behavioral economic approach to the defense mechanisms: Freud’s energy theory revisited. Social Science Information 21:735–779

Ainslie G (1992) Picoeconomics: The strategic interaction of successive motivational states within the person. Cambridge, Cambridge University Press

Ainslie G, Herrnstein R (1981) Preference reversal and delayed reinforcement. Animal Learning and Behavior 9:476–482

Aristotle (1932) Politics. Translated by H. Rackham. Vol. XXI. Loeb Classical Library. Cambridge: Harvard University Press

Aristotle (1934) Nicomachean ethics. Translated by H. Rackham. Vol. XIX. Loeb Classical Library. Cambridge: Harvard University Press

Axelrod R (1984) The evolution of cooperation. Basic Books, New York

Baier A (1986) Trust and anti-trust. Ethics 96:231–260

Baurmann M (2001) The market of virtue: morality and commitment in a Liberal Society (Translated by R. Zimmerling). Kluwer Law International, The Hague

Binmore K (1994) Playing fair (game theory and the social contract, volume 1). The MIT Press, Cambridge

Binmore K (1998) Just playing (game theory and the social contract, vol. 2). The MIT Press, Cambridge

Broome J (1991) Weighing goods: Equality, uncertainty and time. Blackwell, Cambridge

De Bruin B (2004) Explaining games. On the logic of game theoretic explanations. PhD Thesis, University of Amsterdam

DePaulo B, Rosenthal R (1979) Ambivalence, discrepancy, and deception in nonverbal communication. Skill in nonverbal communication. R. Rosenthal, Cambridge, Oelgeschlager, Gunn and Hain

DePaulo B, Zuckerman M et al (1980) Humans as lie detectors. Journal of Communications, Spring

Frank R (1987) If homo economicus could choose his own utility function, would he want one with a conscience? American Economic Review 77(4):593–604

Frank R (1988) Passions within reason. London, W. W. Norton & Company, Inc

Frank R, Gilovich T et al (1993) The evolution of one-shot cooperation. Ethology and Sociobiology 14:247–256

Franssen M (1997) Some contributions to methodological individualism in the social sciences, University of Amsterdam

Hardin R (1993) The street-level epistemology of trust. Politics and Society 21:505–529

Hart HLA (1994) The concept of law, 2nd edn. Clarendon Press, Oxford

Harrington J Jr (1989) If homo economicus could choose his own utility function, would he want one with a conscience?: Comment. American Economic Review 79:588–593

Herrnstein R (1970) On the law of effect. Journal of the Experimental Analysis of Behavior 13(2):242–266

Hume D (1998) An enquiry concerning the principles of morals. In: Beauchamp TL (ed). Oxford University Press, Oxford

Hume D (2001) A treatise of human nature. Oxford University Press, Oxford

Hursthouse R (1996) Normative virtue ethics. How should one live? R. Crisp. Oxford, Oxford University Press: 19–36

Hursthouse R (1999) On virtue ethics. Oxford University Press, Oxford

Hursthouse R (2009) Virtue ethics. In: Zalta EN (ed) The Stanford encyclopedia of philosophy, Spring edn. http://plato.stanford.edu/archives/spr2009/entries/ethics-virtue/

Jones K (1996) Trust as an affective attitude. Ethics 107(1):4–25

Kuhn ST (2004) Reflections on ethics and game theory. Synthese 141(1):1–44

Lahno B (1995) Versprechen: Überlegungen zu einer künstlichen tugend. München, Oldenbourg Verlag

Lahno B (2002) Der begriff des vertrauens. Paderborn, Mentis

Liebrand W, Wilke H et al (1986) Value orientation and conformity. Journal of Conflict Resolution 30(1):77–97

MacIntyre A (1981) After virtue. University of Notre Dame Press, Notre Dame

Margolis H (1981) A new model of rational choice. Ethics 91:265–279

McClintock, Liebrand W (1988) The role of interdependence structure, individual value orientation, and another's strategy in social decision making. Journal of Personality and Social Psychology 55:369–409

Morris CW (1999) What is this thing called 'reputation'? Business Ethics Quarterly 9(1):87–102

Pettit P (1995) The cunning of trust. Philosophy and Public Affairs 24(3):202–225

Rescher N (1975) Unselfishness: The role of the vicarious affects in moral philosophy and social theory. University of Pittsburgh Press, Pittsburgh

Skyrms B (1996) Evolution of the social contract. Cambridge University Press, Cambridge

Skyrms B (2004) The stag hunt and the evolution of social structure. Cambridge University Press, Cambridge

Slote M (2001) Morals from motives. Oxford, Oxford University Press

Smith JM (1982) Evolution and the theory of games. Cambridge University Press, Cambridge

Strawson PF (1962) Freedom and resentment. Proceedings of the British Academy 48:1–25

Sugden R (1986) The economics of rights, co-operation and welfare. Basil Blackwell, Oxford

Vanderschraaf P (1999) Game theory, evolution, and justice. Philosophy and Public Affairs 28(4):325–358

Verbeek B (2002) Instrumental rationality and moral philosophy: An essay on the virtues of cooperation. Kluwer Academic Publishers, Dordrecht

Verbeek B (2007) The authority of norms. American Philosophical Quarterly 44(3):245–258

Verbeek B, Morris C (2004) Game theory and ethics. The Stanford Encyclopedia of Philosophy (Fall 2008 Edition), Edward N. Zalta (ed.), http://plato.stanford.edu/archives/fall2008/entries/game-ethics/

Zuckerman M, DePaulo B et al (1981) Verbal and nonverbal communication of deception. Advances in Experimental and Social Psychology 14


Verbeek, B. Rational Choice Virtues. Ethic Theory Moral Prac 13, 541–559 (2010). https://doi.org/10.1007/s10677-010-9222-2


DOI: https://doi.org/10.1007/s10677-010-9222-2