Abstract

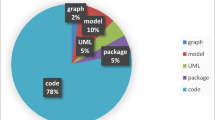

As software systems continue to play an important role in our daily lives, their quality is of paramount importance. Therefore, a plethora of prior research has focused on predicting components of software that are defect-prone. One aspect of this research focuses on predicting software changes that are fix-inducing. Although prior research on fix-inducing changes achieves highly accurate results, it has one main drawback: it assigns the same level of impact to all fix-inducing changes. We argue that treating all fix-inducing changes the same is not ideal, since a small typo in a change is easier for a developer to address than a thread synchronization issue. Therefore, in this paper, we study high impact fix-inducing changes (HIFCs). Since the impact of a change can be measured in different ways, we first propose a measure of impact for fix-inducing changes that takes into account the implementation work developers need to do in later (fixing) changes. Our measure of impact for a fix-inducing change uses the amount of churn, the number of files, and the number of subsystems modified by developers during an associated fix of the fix-inducing change. We perform our study using six large open source projects to build specialized models that identify HIFCs, determine the best indicators of HIFCs, and examine the benefits of prioritizing HIFCs. Using change factors, we are able to predict 56% to 77% of HIFCs with an average false alarm (misclassification) rate of 16%. We find that the lines of code added, the number of developers who worked on a change, and the number of prior modifications to the files modified during a change are the best indicators of HIFCs. Lastly, we observe that a specialized model for HIFCs can provide inspection effort savings of 4% over the state-of-the-art models. We believe our results will help practitioners prioritize their efforts towards the most impactful fix-inducing changes and save inspection effort.

Notes

git clone git://git.gnome.org/gimp

git clone git://git.apache.org/maven-2.git

git clone github.com/rails/rails.git

git clone github.com/mozilla/rhino.git

Acknowledgments

This research was partially supported by JSPS KAKENHI Grant Numbers 24680003 and 25540026.

Additional information

Communicated by: Andrea de Lucia

An erratum to this article can be found at http://dx.doi.org/10.1007/s10664-016-9455-3.

Appendix

Table 12 presents the minimum, first quartile (25%), median, third quartile (75%), and maximum values of the three metrics for HIFCs and LIFCs in all projects. Figure 8 presents three-dimensional scatter diagrams for all projects in terms of the total churn, total number of modified files, and total number of modified subsystems, the three metrics used for clustering fix-inducing changes. Clusters depicting HIFCs are colored red, whereas clusters for LIFCs are colored black. The figures are zoomed to the region where LIFCs are highly concentrated and redrawn to show the differences between the two clusters. Figure 9 shows box plots for all projects in terms of the same three metrics in the two clusters, i.e., high impact and low impact.
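
To make the kind of summary reported in Table 12 concrete, the following minimal Python sketch computes a five-number summary (minimum, first quartile, median, third quartile, maximum) for each of the three impact metrics, separately per cluster. It is an illustration only: the record fields (`label`, `churn`, `files`, `subsystems`), the function names, and the toy values are hypothetical and are not taken from the studied projects, and the quartile convention used here (the "inclusive" method of Python's `statistics.quantiles`) is an assumption that may differ from the one used to produce the table.

```python
from statistics import quantiles

# Hypothetical metric names; the study's own data layout is not shown here.
METRICS = ("churn", "files", "subsystems")


def five_number_summary(values):
    """Return (min, Q1, median, Q3, max) for a non-empty list of numbers."""
    ordered = sorted(values)
    if len(ordered) == 1:
        # A single observation is its own minimum, quartiles, and maximum.
        return (ordered[0],) * 5
    # Assumption: "inclusive" quartiles, i.e. linear interpolation that treats
    # the smallest and largest observations as the 0th and 100th percentiles.
    q1, median, q3 = quantiles(ordered, n=4, method="inclusive")
    return (ordered[0], q1, median, q3, ordered[-1])


def summarize_by_cluster(changes, metrics=METRICS):
    """Group metric values by (cluster label, metric) and summarize each group."""
    grouped = {}
    for change in changes:
        for metric in metrics:
            grouped.setdefault((change["label"], metric), []).append(change[metric])
    return {key: five_number_summary(vals) for key, vals in grouped.items()}


# Toy data for illustration only; these numbers are invented.
toy_changes = [
    {"label": "HIFC", "churn": 400, "files": 12, "subsystems": 3},
    {"label": "HIFC", "churn": 900, "files": 20, "subsystems": 5},
    {"label": "HIFC", "churn": 1500, "files": 40, "subsystems": 8},
    {"label": "LIFC", "churn": 2, "files": 1, "subsystems": 1},
    {"label": "LIFC", "churn": 10, "files": 1, "subsystems": 1},
    {"label": "LIFC", "churn": 30, "files": 3, "subsystems": 2},
]

if __name__ == "__main__":
    for (label, metric), summary in sorted(summarize_by_cluster(toy_changes).items()):
        print(label, metric, summary)
```

Each key of the returned dictionary corresponds to one row of such a table (a cluster and a metric), and the tuple holds the five reported statistics in order.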

Cite this article

Misirli, A.T., Shihab, E. & Kamei, Y. Studying high impact fix-inducing changes. Empir Software Eng 21, 605–641 (2016). https://doi.org/10.1007/s10664-015-9370-z

DOI: https://doi.org/10.1007/s10664-015-9370-z