Abstract

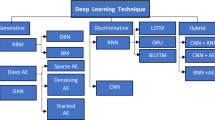

The automated classification of examination questions based on Bloom’s Taxonomy (BT) aims to assist question setters in producing high-quality question papers. Most studies that automate this process have adopted a machine learning approach, and only a few have utilised deep learning. Pre-trained contextual and non-contextual word embedding techniques have effectively solved various natural language processing tasks. This study aims to identify the optimal pre-trained word embedding technique and to propose a Convolutional Neural Network (CNN) model built on it. To that end, three non-contextual word embedding techniques (Word2vec, GloVe, and FastText) and three contextualised embedding techniques (BERT, RoBERTa, and ELECTRA) were analysed on two datasets. The experimental results showed that FastText performed best on the first dataset, whereas RoBERTa performed best on the second. The result on the first dataset differs from what is usually observed in text classification, where contextual embeddings generally outperform non-contextual ones. This could be due to the comparatively small size of the first dataset and the short length of the examination questions. Since RoBERTa was the best-performing word embedding technique on the second dataset, it was used together with a CNN to build the model. This study used a CNN instead of Recurrent Neural Networks (RNNs) because, in the context of examination question classification, extracting relevant features is more important than learning sequences from the data. The proposed CNN model achieved approximately 86% in both weighted F1-score and accuracy and outperformed all the models proposed by past studies, including RNNs. The robustness of the proposed model could be assessed in the future using a more comprehensive dataset.
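The two reported metrics can be illustrated with a minimal sketch. It assumes the standard definition of the weighted F1-score, in which each class's F1 is weighted by its share of the true labels. The function names, the toy labels, and the predictions below are hypothetical and are not taken from the study's datasets or code.

```python
from collections import Counter


def weighted_f1(y_true, y_pred):
    """Return the support-weighted mean of the per-class F1 scores."""
    support = Counter(y_true)  # number of true instances per class
    total = len(y_true)
    score = 0.0
    for label, n in support.items():
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = n - tp
        denom = 2 * tp + fp + fn
        f1 = 2 * tp / denom if denom else 0.0
        score += (n / total) * f1  # weight each class by its support
    return score


def accuracy(y_true, y_pred):
    """Return the fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical toy example with three cognitive-level labels.
y_true = ["remember", "remember", "apply", "apply", "analyse", "analyse"]
y_pred = ["remember", "apply", "apply", "apply", "analyse", "remember"]

print(f"weighted F1: {weighted_f1(y_true, y_pred):.3f}")
print(f"accuracy:    {accuracy(y_true, y_pred):.3f}")
```

With balanced classes, as in this toy example, the weighted F1-score equals the unweighted mean of the per-class scores. On imbalanced data the two differ, which is one reason to report the weighted F1-score alongside accuracy.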

Data availability

The datasets generated and analysed during the current study are available in the Figshare repository, https://doi.org/10.6084/m9.figshare.22597957.v3

Acknowledgements

This work was supported by the Universiti Tunku Abdul Rahman (UTAR) Research Fund (UTARRF) under Grant IPSR/RMC/UTARRF/2020-C2/A01.

Ethics declarations

Competing interests

The authors report there are no competing interests to declare.

About this article

Cite this article

Gani, M.O., Ayyasamy, R.K., Sangodiah, A. et al. Bloom’s Taxonomy-based exam question classification: The outcome of CNN and optimal pre-trained word embedding technique. Educ Inf Technol 28, 15893–15914 (2023). https://doi.org/10.1007/s10639-023-11842-1

DOI: https://doi.org/10.1007/s10639-023-11842-1