Abstract

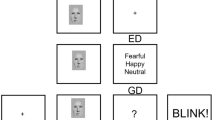

We investigated whether face-specific processes, as indicated by the N170 component of event-related brain potentials (ERPs), are modulated by the emotional significance of facial expressions. Emotion effects over the temporo-occipital electrodes typically used to measure the N170 were less pronounced when ERPs were referenced to the mastoids than when the average reference was applied. This may explain why the literature has so far provided conflicting evidence on whether emotional facial expressions affect the N170. However, at the same time point, the scalp distributions of the N170 and of the emotion effects were distinguishable, suggesting different neural sources for the N170 and for emotion processing. We conclude that the N170 component itself is unaffected by emotional facial expressions and that overlapping activity from the emotion-sensitive early posterior negativity (EPN) accounts for the amplitude modulations over typical N170 electrodes. Our findings are consistent with traditional models of face processing, which assume that face and emotion encoding are parallel and independent processes.
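
Two methodological ideas in the abstract can be made concrete with a small computation. The first is that the choice of reference changes the amplitude of an effect at a single electrode. The second is that the shape of a scalp distribution can be compared independently of the reference. The Python sketch below is a minimal illustration using invented voltages at one time point. The channel set, the numbers, the assumption that the mastoids pick up part of the emotion-related negativity, and the function names (`average_reference`, `mastoid_reference`, `emotion_effect`, `gfp`, `dissimilarity`) are all hypothetical. They are not the authors' data or analysis pipeline.

Re-referencing subtracts the same value from every channel. If the reference sites themselves carry part of a posterior negativity, the effect measured at a site such as PO8 shrinks. Global map dissimilarity (DISS) is computed on average-referenced maps scaled by global field power (GFP), so it depends only on the shape of the two maps.

```python
import math

# Invented voltages (microvolts) at one time point near 170 ms, expressed against an
# arbitrary recording reference. Channel names follow the 10-20 system; M1/M2 are
# the left and right mastoids. None of these numbers are real data.
NEUTRAL = {"Fz": 2.0, "Cz": 1.5, "Pz": 0.5, "P7": -4.0, "P8": -5.0,
           "PO7": -3.5, "PO8": -4.5, "M1": -1.0, "M2": -1.5}
# Assumed emotion-related shift: a posterior negativity that also reaches the
# mastoids, balanced by a frontocentral positivity.
EMOTION_SHIFT = {"Fz": 3.5, "Cz": 3.0, "Pz": 0.0, "P7": -1.0, "P8": -1.5,
                 "PO7": -1.0, "PO8": -1.5, "M1": -0.5, "M2": -1.0}
EMOTIONAL = {ch: NEUTRAL[ch] + EMOTION_SHIFT[ch] for ch in NEUTRAL}


def average_reference(erp):
    """Subtract the mean over all channels from every channel."""
    ref = sum(erp.values()) / len(erp)
    return {ch: v - ref for ch, v in erp.items()}


def mastoid_reference(erp, left="M1", right="M2"):
    """Subtract the mean of the two mastoid channels (offline linked mastoids)."""
    ref = (erp[left] + erp[right]) / 2.0
    return {ch: v - ref for ch, v in erp.items()}


def emotion_effect(reref, channel, emotional, neutral):
    """Emotional minus neutral amplitude at one electrode after re-referencing."""
    return reref(emotional)[channel] - reref(neutral)[channel]


def gfp(erp):
    """Global field power: spatial standard deviation of the average-referenced map."""
    avg = average_reference(erp)
    return math.sqrt(sum(v * v for v in avg.values()) / len(avg))


def dissimilarity(map_u, map_v):
    """Global map dissimilarity (DISS) between two maps over the same channels.

    Both maps are average-referenced and divided by their GFP, so DISS ignores
    the recording reference and overall amplitude: 0 means the same shape,
    2 means polarity-inverted shapes.
    """
    u, v = average_reference(map_u), average_reference(map_v)
    gu, gv = gfp(map_u), gfp(map_v)
    return math.sqrt(sum((u[ch] / gu - v[ch] / gv) ** 2 for ch in u) / len(u))


if __name__ == "__main__":
    for name, reref in [("average", average_reference), ("mastoid", mastoid_reference)]:
        effect = emotion_effect(reref, "PO8", EMOTIONAL, NEUTRAL)
        print(f"{name} reference: emotion effect at PO8 = {effect:+.2f} uV")

    # Shape comparison: N170 map (neutral faces) vs. emotion-effect map.
    effect_map = {ch: EMOTIONAL[ch] - NEUTRAL[ch] for ch in NEUTRAL}
    d_recorded = dissimilarity(NEUTRAL, effect_map)
    d_mastoid = dissimilarity(mastoid_reference(NEUTRAL), mastoid_reference(effect_map))
    print(f"DISS = {d_recorded:.3f} (recording reference), {d_mastoid:.3f} (mastoid reference)")
```

With these toy numbers, the emotion effect at PO8 is -1.50 µV under the average reference but only -0.75 µV under the mastoid reference. DISS comes out the same whichever reference the input maps were expressed in. A nonzero DISS only shows that two maps differ in shape. Deciding whether such a difference is reliable across participants requires a statistical test (for example, a permutation test on DISS), which this sketch does not attempt.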

Acknowledgments

This research was supported by the German Initiative of Excellence, Cluster of Excellence 302 “Languages of Emotion”, Grant 209 to AS and WS. We thank Guillermo Recio, Sebastian Rose, and Olga Shmuilovich for assistance in data collection, and Thomas Pinkpank and Rainer Kniesche for technical support.

About this article

Cite this article

Rellecke, J., Sommer, W. & Schacht, A. Emotion Effects on the N170: A Question of Reference? Brain Topogr 26, 62–71 (2013). https://doi.org/10.1007/s10548-012-0261-y
