Abstract

Machine learning has greatly influenced a variety of fields, including science. However, despite its tremendous accomplishments, a key limitation of most existing machine learning approaches is their reliance on large labeled data sets, so data with limited labeled samples remains an important challenge. Moreover, the performance of machine learning methods is often severely hindered by diverse data, which is usually associated with smaller data sets or with areas of study where the size of the data sets is constrained by high experimental cost and/or ethics. These challenges call for innovative strategies for dealing with these types of data.

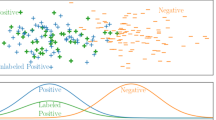

In this work, the aforementioned challenges are addressed by integrating graph-based frameworks, semi-supervised techniques, multiscale structures, and modified and adapted optimization procedures. This results in two innovative multiscale Laplacian learning (MLL) approaches for machine learning tasks, such as data classification, and for tackling data with limited samples, diverse data, and small data sets. The first approach, multikernel manifold learning (MML), integrates manifold learning with multikernel information and incorporates a warped kernel regularizer using multiscale graph Laplacians. The second approach, the multiscale MBO (MMBO) method, introduces multiscale Laplacians into a modification of the classical Merriman-Bence-Osher (MBO) scheme and makes use of fast solvers. We demonstrate the performance of our algorithms experimentally on a variety of benchmark data sets and show that they compare favorably to state-of-the-art approaches.
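To make the idea of combining multiscale graph Laplacians with an MBO-type scheme more concrete, the following is a minimal illustrative sketch and not the authors' implementation. It assumes Gaussian kernels at several bandwidths, a weighted sum of symmetric normalized Laplacians, and a generic semi-supervised graph MBO iteration (an implicit diffusion step with a fidelity term, followed by thresholding). The function names `multiscale_laplacian` and `mbo_classify` and the parameters `sigmas`, `weights`, `dt`, and `mu` are hypothetical choices made for illustration. It also uses a dense direct solve rather than the fast solvers mentioned above.

```python
import numpy as np


def multiscale_laplacian(X, sigmas, weights):
    """Weighted sum of symmetric normalized graph Laplacians.

    One Gaussian-kernel graph is built per bandwidth in `sigmas`
    (an assumed kernel choice, used here only for illustration).
    """
    sq = np.sum(X ** 2, axis=1)
    # Pairwise squared Euclidean distances, clipped at zero for safety.
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * X @ X.T, 0.0)
    n = X.shape[0]
    L = np.zeros((n, n))
    for sigma, w in zip(sigmas, weights):
        W = np.exp(-d2 / (2.0 * sigma ** 2))
        np.fill_diagonal(W, 0.0)  # no self-loops
        deg = np.maximum(W.sum(axis=1), 1e-12)  # guard against isolated nodes
        inv_sqrt = 1.0 / np.sqrt(deg)
        L += w * (np.eye(n) - inv_sqrt[:, None] * W * inv_sqrt[None, :])
    return L


def mbo_classify(L, labels, n_classes, dt=0.5, mu=10.0, n_iter=20):
    """Generic semi-supervised graph MBO iteration (illustrative).

    `labels` holds a class index for labeled nodes and -1 otherwise.
    """
    n = L.shape[0]
    known = labels >= 0
    one_hot = np.eye(n_classes)
    # Labeled nodes start at their class vertex, the rest at the uniform vector.
    U = np.full((n, n_classes), 1.0 / n_classes)
    U[known] = one_hot[labels[known]]
    U0 = U.copy()
    # Implicit Euler step for diffusion plus fidelity to the known labels.
    A = np.eye(n) + dt * L + dt * mu * np.diag(known.astype(float))
    for _ in range(n_iter):
        rhs = U + dt * mu * known[:, None] * U0
        U = np.linalg.solve(A, rhs)
        # Thresholding: snap each row to the nearest class indicator.
        U = one_hot[np.argmax(U, axis=1)]
    return np.argmax(U, axis=1)
```

With a feature matrix `X` of shape `(n_samples, n_features)` and an integer label array that uses `-1` for unlabeled points, a call such as `mbo_classify(multiscale_laplacian(X, [0.5, 1.0, 2.0], [1/3, 1/3, 1/3]), labels, n_classes)` returns one predicted class per sample. The bandwidths and equal weights here are arbitrary placeholders.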

Code Availability

The source code is available on GitHub: https://github.com/ddnguyenmath/Multiscale-Laplacian-Learning

Acknowledgements

This work was supported in part by NSF grants DMS-2052983, DMS-2053284, DMS-2151802, DMS-1761320, and IIS-1900473, NIH grants R01GM126189 and R01AI164266, Bristol-Myers Squibb, Pfizer, MSU Foundation, and University of Kentucky Startup Fund.

Ethics declarations

Conflict of Interests

The authors declare that they have no conflict of interest.

About this article

Cite this article

Merkurjev, E., Nguyen, D.D. & Wei, GW. Multiscale laplacian learning. Appl Intell 53, 15727–15746 (2023). https://doi.org/10.1007/s10489-022-04333-2

DOI: https://doi.org/10.1007/s10489-022-04333-2