Abstract

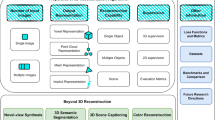

In this paper, we propose a dense depth estimation pipeline for multiview 360° images. The proposed pipeline leverages a spherical camera model that compensates for the radial distortion of 360° images. The key contribution of this paper is the extension of the spherical camera model to the multiview setting through a translation scaling scheme. Moreover, we propose an effective dense depth estimation method that sets a virtual depth and minimizes the photometric reprojection error. We validate the performance of the proposed pipeline on images of natural scenes as well as on a synthesized dataset for quantitative evaluation. The experimental results verify that the proposed pipeline improves estimation accuracy compared with current state-of-the-art dense depth estimation methods.
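The abstract does not spell out the camera model or how the virtual depth is searched, so the following Python/NumPy sketch only illustrates the two generic ingredients it names: mapping equirectangular pixels to bearings on the unit sphere, and scoring candidate depths by a photometric reprojection error. It rests on several assumptions that do not come from the paper: a standard equirectangular layout (y up, z forward), a known relative pose with X_src = R X_ref + t, a brute-force sweep over a fixed list of depth hypotheses with an absolute-difference cost and nearest-neighbour sampling, and a synthetic textured-sphere scene used only for checking. All function names are hypothetical, and the sketch does not reproduce the authors' translation scaling scheme or their virtual-depth formulation.

```python
import numpy as np


def pixel_to_bearing(u, v, width, height):
    """Map equirectangular pixel coordinates to unit bearing vectors (x, y, z).

    Assumed convention: longitude spans [-pi, pi) left to right, latitude
    spans [pi/2, -pi/2] top to bottom, y points up and z points forward.
    """
    theta = (np.asarray(u) + 0.5) / width * 2.0 * np.pi - np.pi
    phi = np.pi / 2.0 - (np.asarray(v) + 0.5) / height * np.pi
    return np.stack([np.cos(phi) * np.sin(theta),
                     np.sin(phi),
                     np.cos(phi) * np.cos(theta)], axis=-1)


def bearing_to_pixel(b, width, height):
    """Inverse of pixel_to_bearing; accepts direction vectors of any length."""
    b = b / np.linalg.norm(b, axis=-1, keepdims=True)
    theta = np.arctan2(b[..., 0], b[..., 2])
    phi = np.arcsin(np.clip(b[..., 1], -1.0, 1.0))
    u = (theta + np.pi) / (2.0 * np.pi) * width - 0.5
    v = (np.pi / 2.0 - phi) / np.pi * height - 0.5
    return u, v


def sample_nearest(img, u, v):
    """Nearest-neighbour lookup that wraps horizontally across the 360-degree seam."""
    h, w = img.shape
    ui = np.rint(u).astype(int) % w
    vi = np.clip(np.rint(v).astype(int), 0, h - 1)
    return img[vi, ui]


def photometric_cost_volume(ref, src, R, t, depths):
    """Absolute photometric error between ref and the reprojected src per depth hypothesis.

    Assumed pose convention: X_src = R @ X_ref + t. Returns shape (len(depths), H, W).
    """
    h, w = ref.shape
    vv, uu = np.mgrid[0:h, 0:w]
    rays = pixel_to_bearing(uu, vv, w, h)              # (H, W, 3) unit rays, ref frame
    costs = []
    for d in depths:
        pts_src = rays * d @ R.T + t                   # hypothesised 3D points in src frame
        u_s, v_s = bearing_to_pixel(pts_src, w, h)     # project onto the src sphere
        costs.append(np.abs(ref - sample_nearest(src, u_s, v_s)))
    return np.stack(costs)


def depth_from_costs(costs, depths):
    """Winner-take-all: keep the depth hypothesis with the lowest cost per pixel."""
    return np.asarray(depths)[np.argmin(costs, axis=0)]


def texture(dirs):
    """Hypothetical smooth texture painted on the synthetic scene sphere."""
    x, y, z = dirs[..., 0], dirs[..., 1], dirs[..., 2]
    return 0.5 + 0.25 * np.sin(6 * x) + 0.25 * np.cos(4 * y + 5 * z)


def render_sphere_scene(center, radius, width, height):
    """Render a camera at `center` (identity rotation) inside a textured sphere
    of the given radius centred on the origin, where the reference camera sits."""
    vv, uu = np.mgrid[0:height, 0:width]
    rays = pixel_to_bearing(uu, vv, width, height)
    cb = rays @ center
    s = -cb + np.sqrt(cb ** 2 - center @ center + radius ** 2)  # ray-sphere hit distance
    hits = center + s[..., None] * rays
    return texture(hits / radius)


if __name__ == "__main__":
    W, H, D = 256, 128, 2.0
    c = np.array([0.8, 0.0, 0.0])                    # src camera centre in the ref frame
    ref = render_sphere_scene(np.zeros(3), D, W, H)
    src = render_sphere_scene(c, D, W, H)
    depths = [1.0, 2.0, 4.0, 8.0]
    costs = photometric_cost_volume(ref, src, np.eye(3), -c, depths)
    print(costs.mean(axis=(1, 2)))                   # mean cost per depth hypothesis
```

Running the module renders a reference view at the centre of a textured sphere of radius 2 and a second view displaced by 0.8 units, then prints the mean reprojection cost for each depth hypothesis. Per-pixel winner-take-all selection is kept deliberately simple here; it is only meant to show how a depth hypothesis, the spherical projection, and a photometric error fit together.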

Acknowledgements

This work was partially supported by the National Research Foundation of Korea (NRF) grant funded by the Korea government (MSIT) (No. 2020R1F1A1075428) and the Institute of Information & Communications Technology Planning & Evaluation (IITP) grant funded by the Korea government (MSIT) (No. 2020-0-00994, Development of autonomous VR and AR content generation technology reflecting usage environment).

About this article

Cite this article

Yang, S., Kim, K. & Lee, Y. Dense depth estimation from multiple 360-degree images using virtual depth. Appl Intell 52, 14507–14517 (2022). https://doi.org/10.1007/s10489-022-03391-w

DOI: https://doi.org/10.1007/s10489-022-03391-w